How to Use Claude Code Free With Local AI Models

Key Takeaways

- Claude Code is free to install. What you pay for is access to Anthropic's models, not the tool itself.

- You can connect Claude Code to local models through Ollama to avoid API costs entirely.

- Local models like DeepSeek-R1 can handle many routine coding tasks, though they won't match Claude Sonnet's performance.

You're paying for the model, not the tool

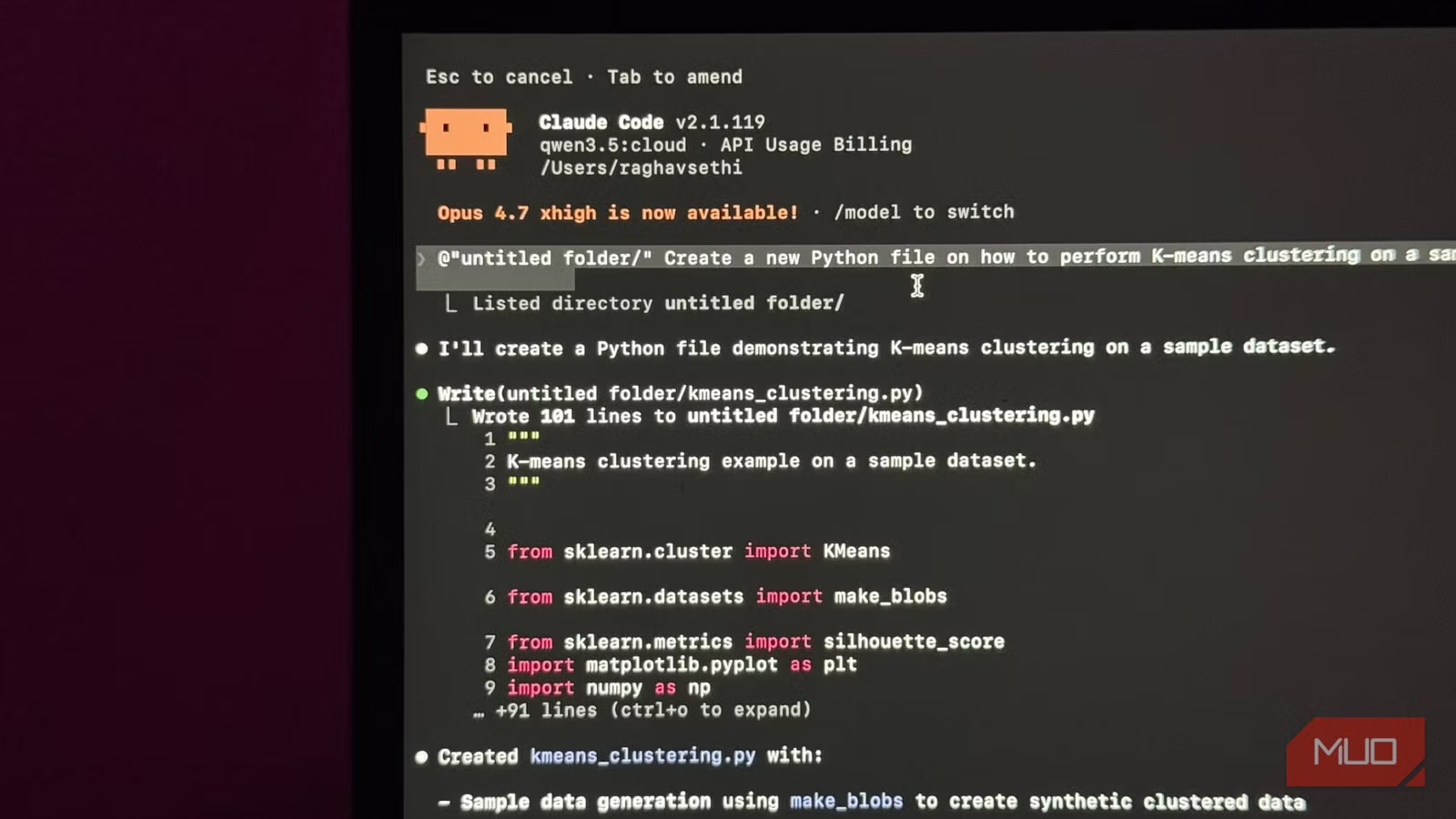

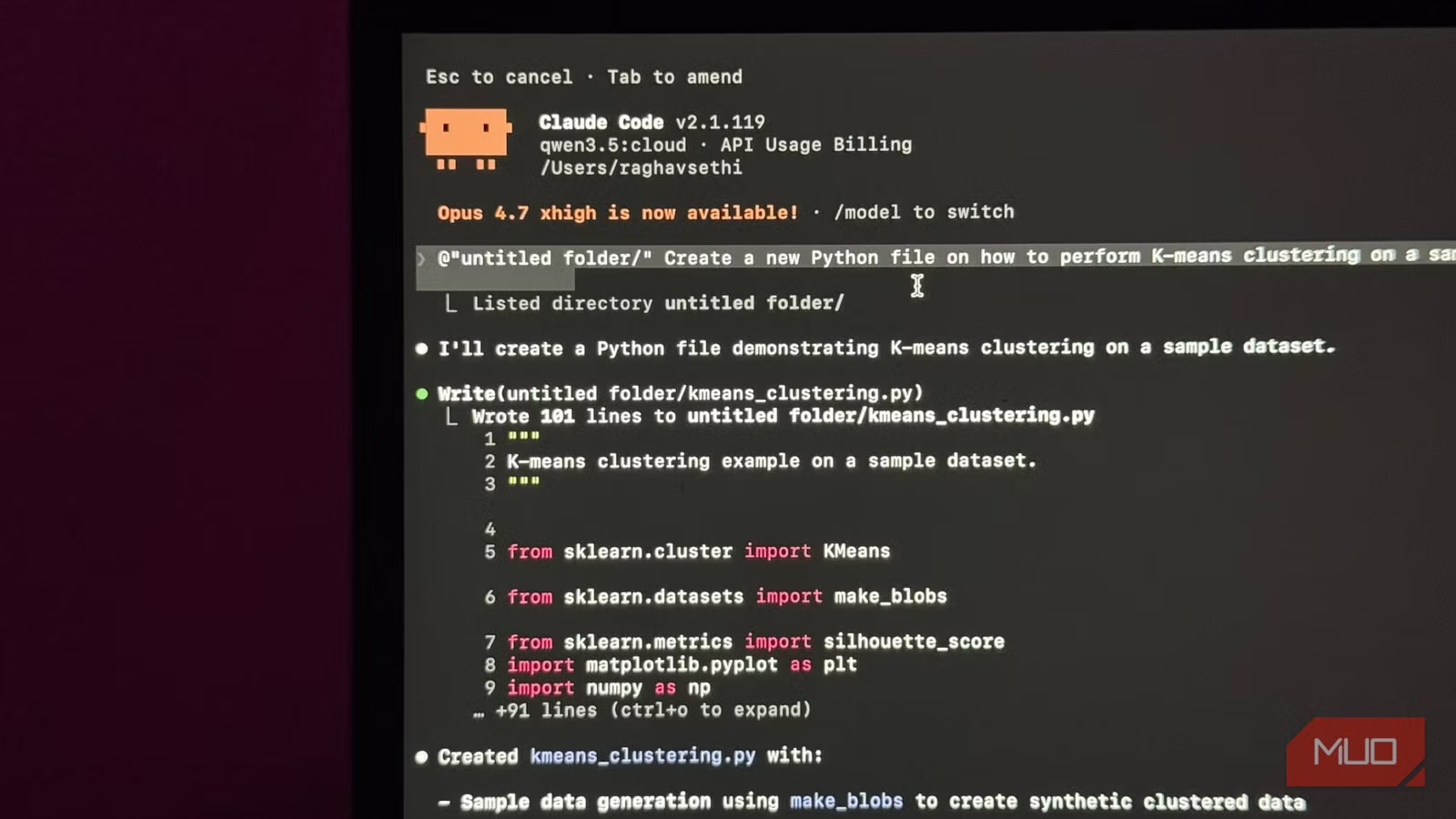

Claude Code isn't a standalone AI. It's an intermediary layer that reads files, applies changes, and runs terminal commands on the model's behalf. The actual thinking happens in a language model, usually Claude Sonnet or Opus.

The model reads your code, understands your request, and generates output. Claude Code takes that output and does something useful with it: editing files, running commands, managing context across your project.

Here's the key insight: Claude Code itself is free to install. You can set it up right now at zero cost. What you're paying for is access to Anthropic's models. Every prompt, every file it reads, and every response it generates goes through Anthropic's API. That's what shows up on your bill.
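
To make the billing point concrete, here is a rough sketch of how token traffic turns into a bill. The `Pricing` class and the rates used with it are placeholders for illustration, not Anthropic's actual prices; check the current price list before relying on any number.

```python
from dataclasses import dataclass


@dataclass
class Pricing:
    """Per-million-token prices in USD. Values are supplied by the caller."""
    input_per_mtok: float
    output_per_mtok: float


def session_cost(input_tokens: int, output_tokens: int, pricing: Pricing) -> float:
    """Estimate the USD cost of one session's API traffic."""
    return (
        input_tokens / 1_000_000 * pricing.input_per_mtok
        + output_tokens / 1_000_000 * pricing.output_per_mtok
    )
```

With hypothetical rates of 3.0 and 15.0 USD per million tokens, a session that sends 400,000 input tokens and receives 20,000 output tokens comes to about 1.50 USD. Input tokens usually dominate, because every file the tool reads counts as input.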

The solution: local models through Ollama

Nothing forces you to use Anthropic's models. Claude Code can connect to any compatible language model, including ones running locally on your machine through Ollama.

Ollama is a tool that lets you run open-source language models on your own hardware. Models like DeepSeek-R1, Llama 3, and Mistral can handle coding tasks reasonably well. They won't match Claude Sonnet's performance on complex reasoning, but for everyday coding work, they're often good enough.

The setup requires installing Ollama, downloading a model, and configuring Claude Code to point at your local endpoint instead of Anthropic's API. Once connected, Claude Code works the same way. It still manages your files and context. It just sends requests to your local model instead of the cloud.
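
The steps above can be sketched in a few lines of Python. This is a minimal sketch under stated assumptions, not a definitive setup: it assumes Ollama is listening on its default address, that a model has already been pulled, and that the local endpoint accepts Anthropic-style requests (some setups need a translating proxy in between). The helper names are made up for this example.

```python
import json
import os
import subprocess
import urllib.request

OLLAMA_URL = "http://localhost:11434"  # Ollama's default local address


def list_local_models(base_url: str = OLLAMA_URL) -> list:
    """Return the names of models already pulled into the local Ollama server."""
    with urllib.request.urlopen(f"{base_url}/api/tags", timeout=5) as resp:
        payload = json.load(resp)
    return [m["name"] for m in payload.get("models", [])]


def build_claude_env(base_url: str, model: str) -> dict:
    """Copy the current environment and point Claude Code at another endpoint."""
    env = dict(os.environ)
    env["ANTHROPIC_BASE_URL"] = base_url
    # Placeholder token; assumed to be ignored by a local server.
    env["ANTHROPIC_AUTH_TOKEN"] = "ollama"
    env["ANTHROPIC_MODEL"] = model
    return env


def launch_claude_code(base_url: str = OLLAMA_URL) -> None:
    """Start the `claude` CLI against the first local model found."""
    models = list_local_models(base_url)
    if not models:
        raise SystemExit("No local models found; run `ollama pull <model>` first.")
    subprocess.run(["claude"], env=build_claude_env(base_url, models[0]), check=False)
```

Calling `launch_claude_code()` starts a normal Claude Code session. Because the settings live only in the child process's environment, nothing in your shell profile changes, and running `claude` on its own still uses whatever you had configured before.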

What you give up

Local models have real limitations. They're smaller than Claude Sonnet or Opus, which means they struggle with complex multi-file refactoring and nuanced code review. Response times depend on your hardware. A MacBook Air will be slower than a desktop with a dedicated GPU.
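
One reason hardware matters so much is memory: the model's weights have to fit in RAM or VRAM before anything runs at a usable speed. The sketch below is a back-of-the-envelope estimate only. The 1.2 overhead factor is an assumed rule of thumb for cache and runtime buffers, and both function names are invented for this example.

```python
def weights_gb(params_billion: float, bits_per_weight: int) -> float:
    """Approximate size of the model weights in gigabytes (10^9 bytes)."""
    return params_billion * bits_per_weight / 8


def fits_in_memory(
    params_billion: float,
    bits_per_weight: int,
    memory_gb: float,
    overhead: float = 1.2,  # assumed headroom for cache and buffers
) -> bool:
    """Rough check: do the weights plus overhead fit in the given memory?"""
    return weights_gb(params_billion, bits_per_weight) * overhead <= memory_gb
```

By this estimate, a 7-billion-parameter model quantized to 4 bits needs about 3.5 GB for weights and fits comfortably in 8 GB, while a 70-billion-parameter model at the same precision needs about 35 GB and will not fit on a 12 GB GPU.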

Context windows are also smaller. Claude Sonnet can handle 200,000 tokens. Most local models top out somewhere between 32,000 and 128,000. For large codebases, you'll hit limits faster.
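
To get a feel for when those limits bite, you can estimate how many tokens your project would occupy. This sketch assumes roughly four characters per token, which is a common rule of thumb rather than an exact figure, and it reserves a quarter of the window for the model's reply. The function names and the default file extensions are illustrative.

```python
import os

CHARS_PER_TOKEN = 4  # rough rule of thumb, not an exact tokenizer count


def estimate_tokens(text: str) -> int:
    """Approximate the token count of a string."""
    return len(text) // CHARS_PER_TOKEN


def project_tokens(root: str, extensions: tuple = (".py", ".js", ".ts")) -> int:
    """Sum the estimated tokens of all matching source files under root."""
    total = 0
    for dirpath, _dirs, files in os.walk(root):
        for name in files:
            if name.endswith(extensions):
                path = os.path.join(dirpath, name)
                with open(path, encoding="utf-8", errors="ignore") as fh:
                    total += estimate_tokens(fh.read())
    return total


def fits(tokens: int, window: int, reserve: float = 0.25) -> bool:
    """True if tokens fit while leaving `reserve` of the window for the reply."""
    return tokens <= window * (1 - reserve)
```

Under these assumptions, a project estimated at 100,000 tokens fits in a 200,000-token window but not in a 32,000-token one. In practice the tool rarely loads a whole codebase at once, so treat the result as an upper bound.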

But for writing functions, debugging scripts, generating boilerplate, and handling routine coding tasks, local models work fine. The quality gap matters less than the cost gap for many developers.

When to pay anyway

The subscription makes sense if you're using Claude Code heavily for complex work: multi-file refactoring, architecture decisions, and code review across large projects. These tasks benefit from Claude Sonnet's larger context window and better reasoning.

If you're dipping into Claude Code occasionally, or mostly using it for simpler tasks, local models give you most of the value with no API bill.

Frequently Asked Questions

Is Claude Code actually free to install?

Yes. You can install Claude Code without paying anything. What you pay for is access to Anthropic's models, not the tool itself.

What local models work best with Claude Code?

DeepSeek-R1, Llama 3, and Mistral are popular choices. The smaller distilled DeepSeek-R1 variants are a common pick for coding tasks and run on consumer hardware; the full-size model is far too large for a typical desktop.

How much slower are local models compared to Claude Sonnet?

It depends on your hardware. A MacBook with M-series chips handles most models acceptably. Desktop GPUs with 12GB+ VRAM will be faster. Response times range from a few seconds to 30+ seconds for complex requests.

Can I switch between local and cloud models?

Yes. You can configure Claude Code to use different endpoints. Some developers use local models for routine work and switch to Claude Sonnet for complex tasks.
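
One way to picture that split is a small routing rule that decides where a task should go. This is a hypothetical heuristic, not a feature of Claude Code: the `Endpoint` class, the thresholds, and the context-window figures are assumptions chosen to match the numbers used in this article.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Endpoint:
    name: str
    base_url: Optional[str]  # None means "use the tool's default cloud endpoint"
    context_window: int


LOCAL = Endpoint("local", "http://localhost:11434", 32_000)
CLOUD = Endpoint("cloud", None, 200_000)


def pick_endpoint(files_touched: int, estimated_tokens: int) -> Endpoint:
    """Send small, narrow jobs to the local model and everything else to the cloud."""
    if estimated_tokens > LOCAL.context_window * 0.75:
        return CLOUD  # too much context for the local window
    if files_touched > 3:
        return CLOUD  # multi-file work benefits from the stronger model
    return LOCAL
```

A one-file bug fix with a couple of thousand tokens of context stays local, while an eight-file refactor or anything approaching the local window goes to the cloud. Tune the thresholds to your own hardware and tolerance for retries.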

Source: MakeUseOf

Huma Shazia

Senior AI & Tech Writer

Related Articles

Browse all

How to Jailbreak Your Kindle: Escape Amazon's Control Before They Brick Your E-Reader

Amazon is cutting off support for older Kindles starting May 2026, but you don't have to buy a new device. Jailbreaking your Kindle lets you install custom software like KOReader, read ePub files natively, and keep your e-reader alive for years to come.

X-Sense Smoke and CO Detectors at Home Depot: UL-Certified Alarms You Can Actually Trust

X-Sense just made their UL-certified smoke and carbon monoxide detectors available at Home Depot stores nationwide. The lineup includes wireless interconnected models that can link up to 24 units, 10-year sealed batteries, and smart features designed to cut down on those annoying false alarms that make people disable their detectors entirely.

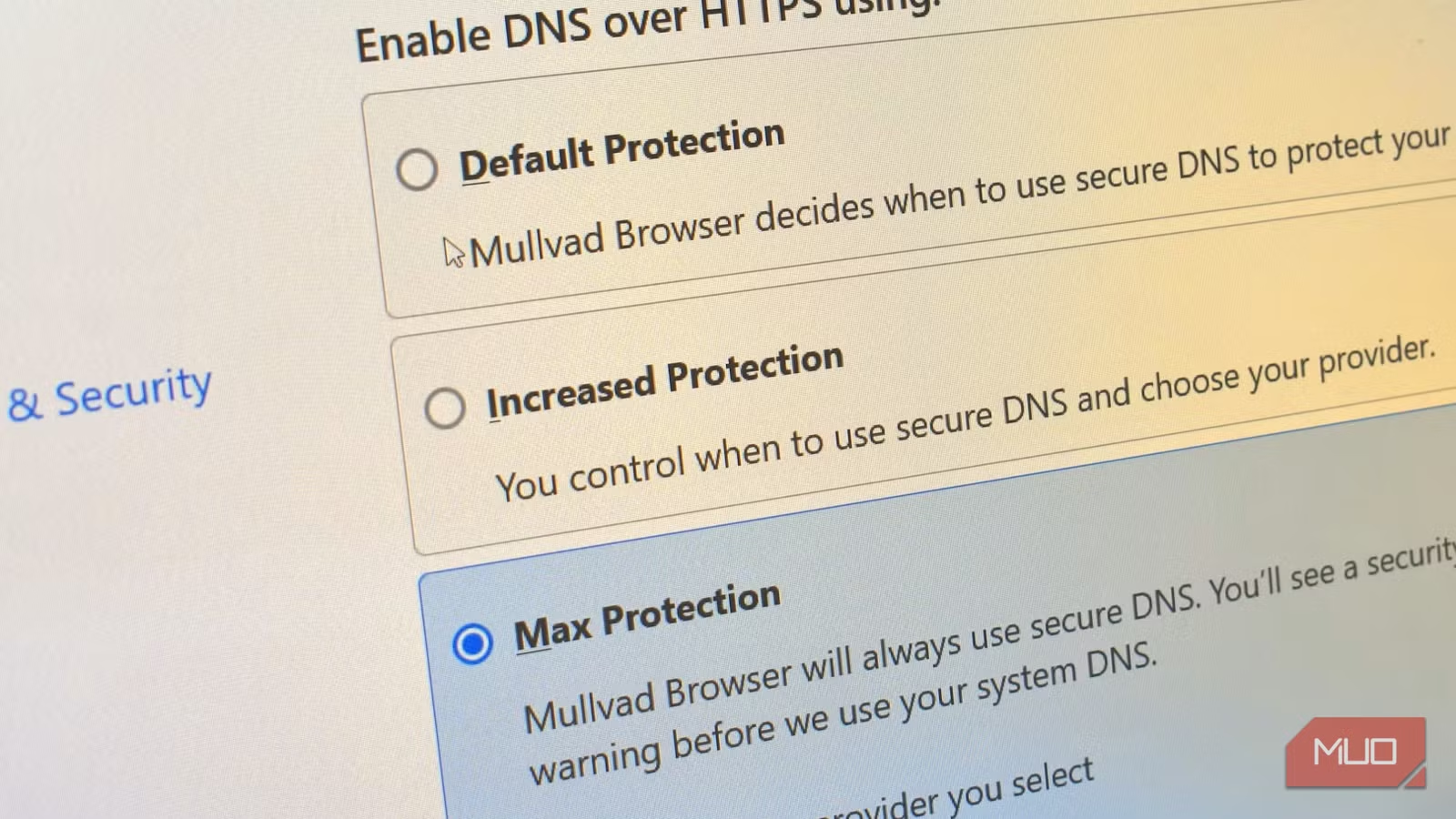

How to Change Your Browser's DNS Settings for Faster, Private Browsing in 2026

Your browser's default DNS settings are probably slowing you down and leaking your browsing history to your ISP. Here's why changing this one setting should be the first thing you do on any new device, and how to pick the right DNS provider for your needs.

Raspberry Pi at 15: Why the King of Single-Board Computers Is Losing Its Crown

After 15 years of dominating the hobbyist computing scene, the Raspberry Pi faces serious competition from cheaper alternatives, supply chain headaches, and a market that's evolved past its original mission. Here's what's happening and what it means for your next project.

Also Read

3 Pixel Camera Settings That Fix Your Focus Problems

Google's Pixel phones offer powerful computational photography, but sometimes the AI makes frustrating decisions about focus and exposure. These three settings give you back control when the automatic mode fails.

Pixxel and Sarvam Plan India's First Orbital Data Centre by 2026

Spacetech startup Pixxel will launch a 200-kg satellite called Pathfinder in Q4 2026, equipped with data centre-class GPUs and AI models from Sarvam. The satellite will process hyperspectral imagery directly in orbit, eliminating delays from traditional ground-based analysis.

Musk vs OpenAI Trial: What's at Stake This Week

The high-profile lawsuit between Elon Musk and OpenAI enters its second week with key testimony from cofounder Greg Brockman. Musk wants to force OpenAI back to nonprofit status, claiming he was sidelined after contributing $38 million. The outcome could reshape the future of an AI company now valued at over $850 billion.