GPT-5.5 vs Claude Opus 4.7: OpenAI Reclaims Agentic AI Lead

Key Takeaways

- GPT-5.5 scored 82.7% on Terminal-Bench 2.0, beating Claude Opus 4.7's 69.4%

- The new model is optimized for 'agentic' AI tasks that require planning and tool coordination

- GPT-5.5 costs $5 per million input tokens, with output tokens at $30 per million

The Benchmark Battle That Actually Matters

For months, Claude and ChatGPT sat in a stalemate. Claude Opus 4.7 had the larger context window, the flexible writing styles, the superior data visualization. Many developers had migrated to Anthropic's flagship model and stayed there.

That calculus changed on April 23, 2026. OpenAI released GPT-5.5, internally codenamed "Spud," and the benchmarks tell a clear story. On Terminal-Bench 2.0, which tests complex command-line workflows requiring planning, iteration, and tool coordination, GPT-5.5 hit 82.7% accuracy. Claude Opus 4.7 scored 69.4%. Gemini 3.1 Pro landed at 68.5%.

That 13.3-point gap is not a marginal improvement. It represents a category shift. An AI that suggests code is useful. An AI that can open your terminal, run commands, and verify fixes is something else entirely.

What 'Agentic' Actually Means

OpenAI describes GPT-5.5 as a "fundamental redesign aimed at agentic performance." Strip away the marketing language and you get a model built to use computers, not just talk about them.

Traditional LLMs respond to prompts. You ask a question, you get an answer. Agentic AI takes a goal and executes multi-step tasks to reach it. It plans ahead. It uses tools. It checks its own work.
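The plan, act, and check cycle described above can be sketched in a few lines of Python. This is only an illustration of the general pattern, not how GPT-5.5 or any vendor's agent works internally. The names `run_agent`, `toy_plan`, `toy_tools`, and `toy_verify` are hypothetical stand-ins invented for this example.

```python
from typing import Callable

# Hypothetical type: a tool takes a string argument and returns a string result.
Tool = Callable[[str], str]


def run_agent(
    goal: str,
    plan: Callable[[str], list[tuple[str, str]]],
    tools: dict[str, Tool],
    verify: Callable[[str, list[str]], bool],
    max_attempts: int = 3,
) -> list[str]:
    """Plan, act with tools, then check the result; retry if the check fails."""
    for _ in range(max_attempts):
        results: list[str] = []
        for tool_name, argument in plan(goal):          # 1. plan ahead
            results.append(tools[tool_name](argument))  # 2. use tools
        if verify(goal, results):                       # 3. check its own work
            return results
    raise RuntimeError(f"goal not verified after {max_attempts} attempts: {goal}")


# Toy stand-ins for the model and its tools (all illustrative assumptions).
def toy_plan(goal: str) -> list[tuple[str, str]]:
    return [("echo", goal), ("upper", goal)]


toy_tools: dict[str, Tool] = {
    "echo": lambda text: text,
    "upper": lambda text: text.upper(),
}


def toy_verify(goal: str, results: list[str]) -> bool:
    return results[-1] == goal.upper()


outcome = run_agent("fix the build", toy_plan, toy_tools, toy_verify)
print(outcome)
```

In a real agent the planner and verifier would be model calls and the tools would be things like a shell or a test runner. The control flow is the part that separates an agent from a single prompt-and-response exchange.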

“What is really special about this model is how much more it can do with less guidance. It's way more intuitive to use.”

— Greg Brockman, President of OpenAI

On the OSWorld-Verified benchmark, which measures how well AI can operate a standard desktop operating system, GPT-5.5 scored 78.7%. Claude Opus 4.7 came close at 78.0%. The desktop navigation gap is narrow. The terminal gap is not.

Speed and Efficiency Claims

Smarter models are usually slower. OpenAI claims GPT-5.5 breaks that pattern. The company says it matches GPT-4's per-token latency while delivering significantly better reasoning.

The model is also more "token efficient." It uses fewer tokens to complete the same task because it understands intent faster. For API users paying per token, this matters for cost calculations.

The Price Tag

GPT-5.5 costs $5.00 per million input tokens. Output tokens run $30 per million. For comparison, Claude Opus 4.7 charges $25 per million output tokens. That makes GPT-5.5 20% more expensive per output token, but if it completes tasks in fewer tokens and with fewer retries, the math could still favor OpenAI.

| Model | Terminal-Bench 2.0 | OSWorld-Verified | Output Cost (per 1M tokens) |
|---|---|---|---|
| GPT-5.5 | 82.7% | 78.7% | $30 |
| Claude Opus 4.7 | 69.4% | 78.0% | $25 |
| Gemini 3.1 Pro | 68.5% | N/A | N/A |
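To make the pricing trade-off concrete, here is a rough back-of-the-envelope sketch in Python. It uses only the two output prices quoted above and ignores input tokens, because the article does not give Claude's input price. The token counts and retry figures are invented for illustration and are not measurements of either model.

```python
# Output prices in USD per 1M tokens, as quoted in the article.
GPT55_OUTPUT_PRICE = 30.0
OPUS47_OUTPUT_PRICE = 25.0


def output_cost_usd(tokens_per_attempt: int, attempts: float, price_per_million: float) -> float:
    """Output-token cost of finishing one task, including any retries."""
    return tokens_per_attempt * attempts * price_per_million / 1_000_000


# GPT-5.5 breaks even on output cost if it emits at most this fraction
# of the output tokens Claude Opus 4.7 needs for the same task.
break_even_ratio = OPUS47_OUTPUT_PRICE / GPT55_OUTPUT_PRICE

# Hypothetical workload: these token counts are assumptions, not benchmarks.
opus_cost = output_cost_usd(100_000, attempts=1.0, price_per_million=OPUS47_OUTPUT_PRICE)
gpt_cost = output_cost_usd(80_000, attempts=1.0, price_per_million=GPT55_OUTPUT_PRICE)

print(f"break-even token ratio: {break_even_ratio:.3f}")
print(f"Opus 4.7: ${opus_cost:.2f}  GPT-5.5: ${gpt_cost:.2f}")
```

Under these assumptions GPT-5.5 needs to use roughly 17% fewer output tokens (or proportionally fewer retries) before its higher price stops mattering. Whether it does so on a given workload is an empirical question.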

Community Reaction: Real-World Tests

Early adopters are already stress-testing the model. Pietro Schirano, a prominent AI developer, posted a video of GPT-5.5 merging a complex Git branch with hundreds of changes in 20 minutes. The model planned the merge strategy, resolved conflicts, and ran verification tests without human intervention.

“Claude for the architecture, GPT for the execution.”

— Fireship, Tech Educator and YouTuber

That framing captures the emerging consensus. Claude remains strong for research, writing, and system design conversations. GPT-5.5 pulls ahead when you need the AI to actually do something on your computer.

On Reddit, users report that GPT-5.5's "Thinking" mode effectively eliminates hallucinated race conditions in complex code. Hacker News threads show extensive debate over new safety protocols, with some users noting the API blocks certain reverse-engineering tools until users provide explicit intent explanations.

What This Means for the LLM Race

The AI benchmark wars have shifted terrain. Raw intelligence and writing quality were the battleground in 2024 and 2025. In 2026, the question is becoming: what can the model actually do?

Sam Altman posted his own take on the release: "In my experience, the model simply 'knows what to do.' It's a threshold shift for agentic AI." Whether that holds up across diverse use cases remains to be seen, but the Terminal-Bench numbers are hard to argue with.

Anthropic will likely respond. Claude Opus 4.7 still holds advantages in context window size and certain creative tasks. But for developers building AI agents that need to operate autonomously, GPT-5.5 is now the benchmark to beat.


Frequently Asked Questions

What is GPT-5.5's Terminal-Bench score?

GPT-5.5 scored 82.7% on Terminal-Bench 2.0, which tests complex command-line workflows requiring planning and tool coordination.

How much does GPT-5.5 cost?

GPT-5.5 costs $5.00 per million input tokens and $30 per million output tokens through the OpenAI API.

Is GPT-5.5 better than Claude Opus 4.7?

For agentic tasks like terminal operations and autonomous computer use, GPT-5.5 leads significantly. Claude Opus 4.7 still competes closely on desktop navigation and maintains advantages in context window size and certain writing tasks.

What does agentic AI mean?

Agentic AI refers to models that can plan ahead, use tools, and complete multi-step tasks autonomously rather than simply responding to individual prompts.

When was GPT-5.5 released?

OpenAI released GPT-5.5 on April 23, 2026. The model was internally codenamed "Spud."


Source: MakeUseOf

Huma Shazia

Senior AI & Tech Writer

Related Articles

Browse all

How to Jailbreak Your Kindle: Escape Amazon's Control Before They Brick Your E-Reader

Amazon is cutting off support for older Kindles starting May 2026, but you don't have to buy a new device. Jailbreaking your Kindle lets you install custom software like KOReader, read ePub files natively, and keep your e-reader alive for years to come.

X-Sense Smoke and CO Detectors at Home Depot: UL-Certified Alarms You Can Actually Trust

X-Sense just made their UL-certified smoke and carbon monoxide detectors available at Home Depot stores nationwide. The lineup includes wireless interconnected models that can link up to 24 units, 10-year sealed batteries, and smart features designed to cut down on those annoying false alarms that make people disable their detectors entirely.

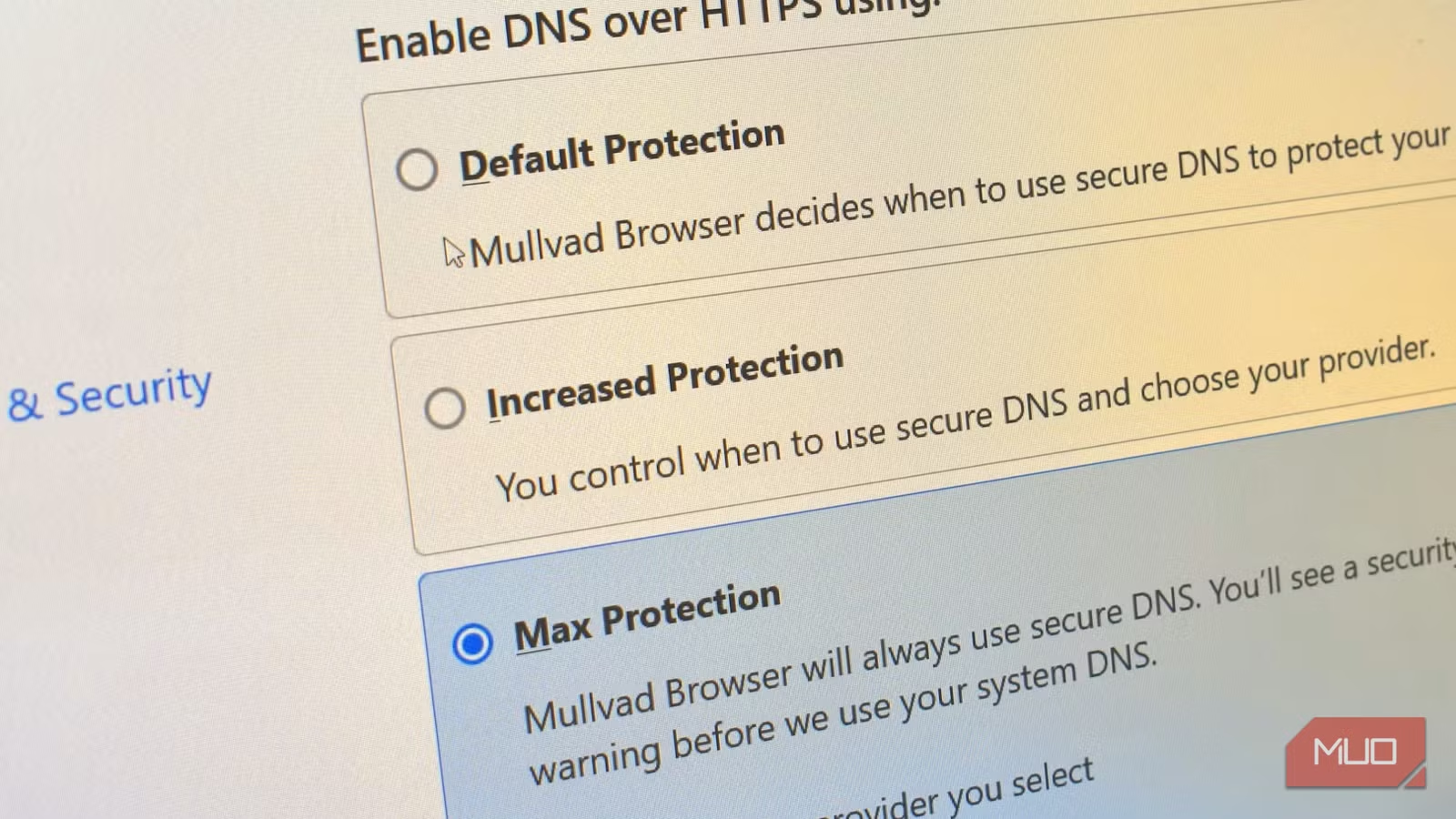

How to Change Your Browser's DNS Settings for Faster, Private Browsing in 2026

Your browser's default DNS settings are probably slowing you down and leaking your browsing history to your ISP. Here's why changing this one setting should be the first thing you do on any new device, and how to pick the right DNS provider for your needs.

Raspberry Pi at 15: Why the King of Single-Board Computers Is Losing Its Crown

After 15 years of dominating the hobbyist computing scene, the Raspberry Pi faces serious competition from cheaper alternatives, supply chain headaches, and a market that's evolved past its original mission. Here's what's happening and what it means for your next project.

Also Read

Why Samsung's Missing MagSafe Magnets Force Cases on Users

Samsung's Galaxy S25 Ultra supports Qi2 wireless charging but lacks built-in alignment magnets. This forces users who want MagSafe-style accessories to add cases, even if they prefer going caseless.

Why Wi-Fi Is Your Smart Home's Weakest Link

Your router handles streaming, browsing, and work traffic. Adding dozens of smart plugs and bulbs on top can overwhelm it. There's a better approach using dedicated protocols like Zigbee and Thread.

3 Paramount+ Shows Worth Binging This Weekend

Looking for weekend viewing? Paramount+ offers three standout series: Dexter: Resurrection brings Michael C. Hall's serial killer to New York with an all-star cast, Killing Eve delivers spy thriller twists, and Everybody Hates Chris remains a comedy classic. Here's what makes each worth your time.