Claude vs ChatGPT vs Gemini: Which AI Debugs Code Best?

Key Takeaways

- Claude was the only AI to identify all three bugs in the test JavaScript file

- Gemini spotted scoping issues but missed the async race condition entirely

- Running the same prompt multiple times produced inconsistent results, most notably from Gemini

The Test Setup

Yadullah Abidi, a full-stack developer and tech journalist at MakeUseOf, created a JavaScript file with three specific bugs: a scoping issue, an async race condition, and an index-based assignment that caused non-deterministic ordering. These aren't the kind of errors that throw obvious console messages. They're subtle problems that can waste hours of debugging time.

The premise was simple. Hand the same broken code to Gemini, ChatGPT, and Claude. See which one actually finds the root cause, not just something that looks wrong.

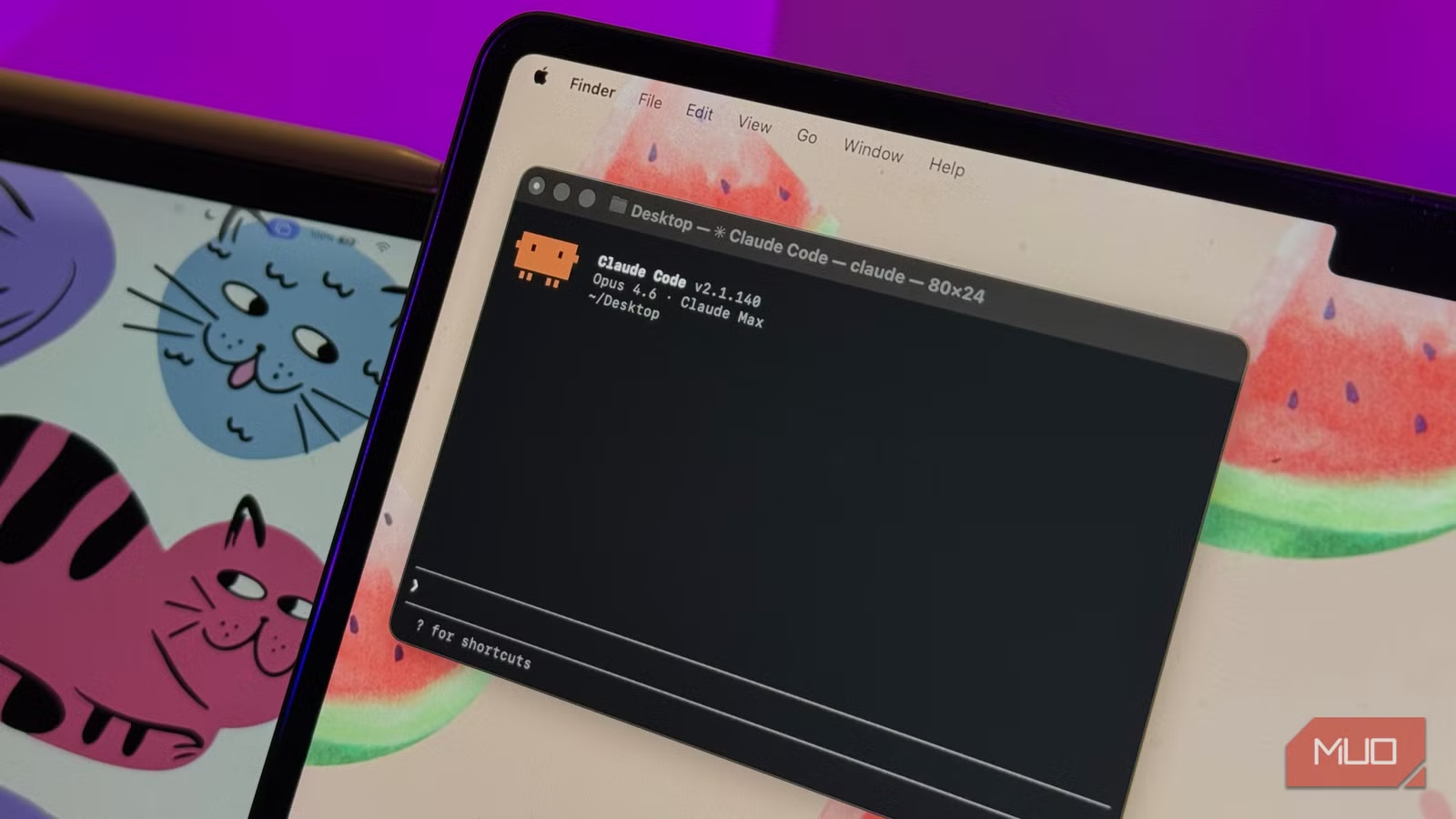

Gemini: Fast but Incomplete

Gemini sat in the middle for speed, responding before ChatGPT but after Claude. It correctly identified the scoping issue and explained block scoping as part of its suggested fix. That's the good news.
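The original test file is not reproduced in the source, so the following is only a minimal sketch of what a block-scoping bug of this general kind can look like. The function names `collectWithVar` and `collectWithLet` are hypothetical and chosen for illustration.

```javascript
// Hypothetical illustration; not the code from the original test file.

function collectWithVar() {
  const callbacks = [];
  for (var i = 0; i < 3; i++) {
    // `var` is function-scoped: all three closures share a single `i`.
    callbacks.push(() => i);
  }
  // By the time the callbacks run, the loop has finished and `i` is 3.
  return callbacks.map((cb) => cb());
}

function collectWithLet() {
  const callbacks = [];
  for (let i = 0; i < 3; i++) {
    // `let` is block-scoped: each iteration gets its own binding of `i`.
    callbacks.push(() => i);
  }
  return callbacks.map((cb) => cb());
}

console.log(collectWithVar()); // [3, 3, 3]
console.log(collectWithLet()); // [0, 1, 2]
```

Swapping `var` for `let` is the usual fix for this pattern, though it says nothing about ordering problems elsewhere in the same code.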

The bad news: Gemini completely missed the random delay race condition. The fix it suggested would have made the code look correct on the surface. But run it, check the console, and the problems would persist.
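To make the idea of a random-delay race concrete, here is a hedged sketch of how such a bug can arise. It is an assumption about the general shape of the problem, not the code Abidi used; `delayed`, `collectInCompletionOrder`, and `delayFor` are hypothetical names.

```javascript
// Hypothetical helper (not from the original test file):
// resolves with `value` after `ms` milliseconds.
function delayed(value, ms) {
  return new Promise((resolve) => setTimeout(() => resolve(value), ms));
}

// Racy: each task appends to `results` when it finishes, so the final
// order follows completion time rather than the order of `items`.
async function collectInCompletionOrder(items, delayFor) {
  const results = [];
  await Promise.all(
    items.map(async (item) => {
      const value = await delayed(item, delayFor(item));
      results.push(value);
    })
  );
  return results;
}

// With random delays, the output order can change from run to run.
collectInCompletionOrder(["a", "b", "c"], () => Math.random() * 50)
  .then((order) => console.log(order));
```

Code like this can look correct in a quick read, and may even print the expected order on some runs, which is part of why a surface-level fix can appear to work.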

Abidi noted another issue. One of Gemini's two responses didn't even explain the changes or how they affected the code. Running the same prompt multiple times produced different results. Sometimes it caught the async race issue but still missed the index-based assignment bug. Consistency was a problem.

ChatGPT: Close but Not Quite

ChatGPT got close to a complete diagnosis. According to the test, it identified more issues than Gemini but still fell short of finding all three bugs. The specific gaps in ChatGPT's analysis weren't detailed in the source material, but the verdict was clear: partial credit, not a pass.

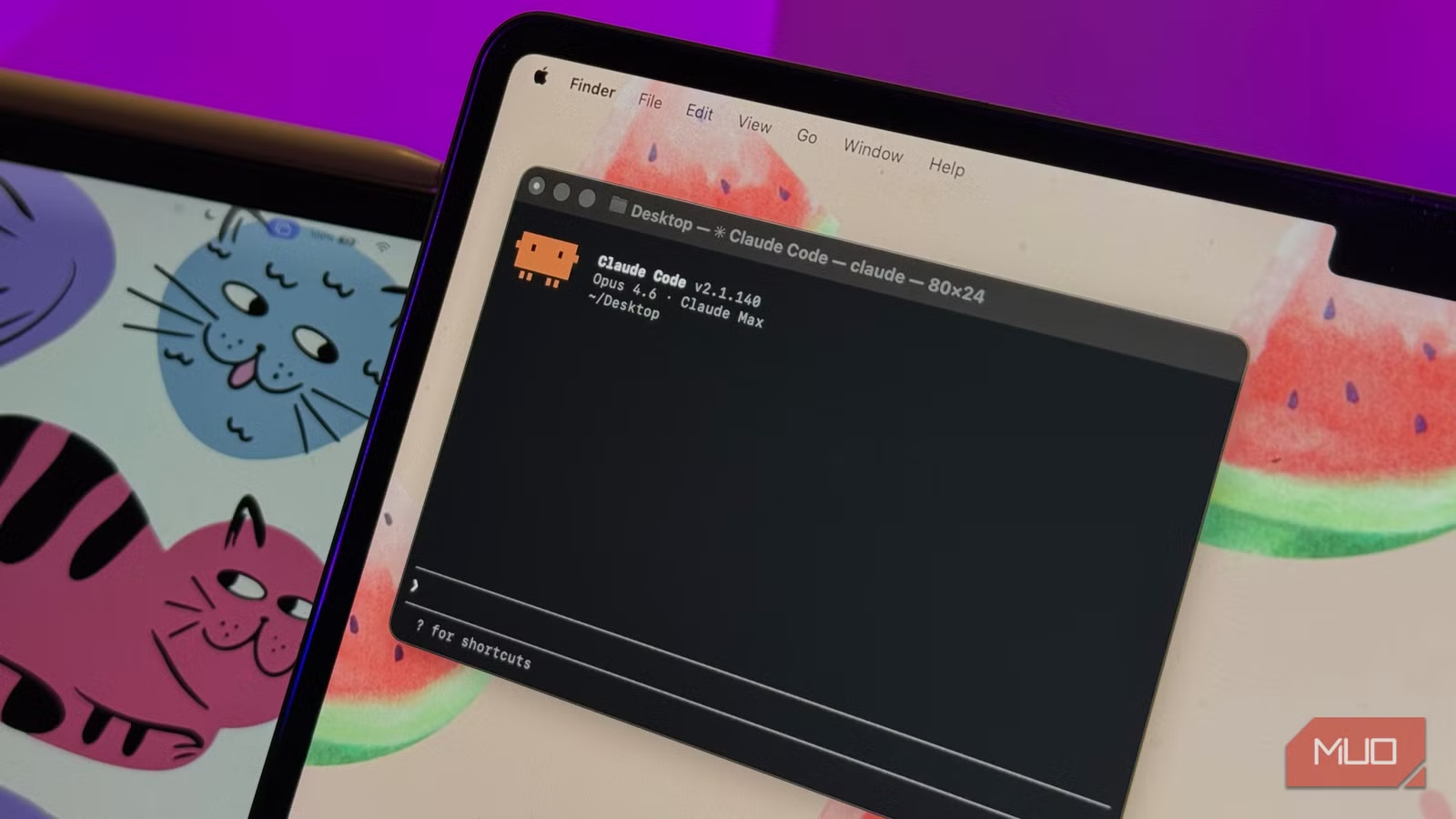

Claude: The Only Complete Fix

Claude was the only AI assistant to identify all three bugs in the test file. It caught the scoping issue, the async race condition, and the index-based assignment problem that caused non-deterministic ordering. The fix it provided would have actually resolved the underlying issues, not just masked symptoms.
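The source does not show Claude's actual fix. As a sketch under that caveat, one plausible form of an index-related ordering bug is a slot index that is taken when a task finishes, and one common remedy is to let `Promise.all` return values in input order. All function names below are hypothetical.

```javascript
// Hypothetical helper: resolves with `value` after `ms` milliseconds.
function delayed(value, ms) {
  return new Promise((resolve) => setTimeout(() => resolve(value), ms));
}

// Buggy (assumed shape): the slot index is read when a task finishes,
// so slots are filled in completion order, not input order.
async function collectWithSharedIndex(items, delayFor) {
  const results = [];
  let next = 0;
  await Promise.all(
    items.map(async (item) => {
      const value = await delayed(item, delayFor(item));
      results[next++] = value;
    })
  );
  return results;
}

// Deterministic: Promise.all resolves to an array in input order,
// regardless of which promise settles first.
async function collectInInputOrder(items, delayFor) {
  return Promise.all(items.map((item) => delayed(item, delayFor(item))));
}
```

Calling both with the same items and delays, for example `collectInInputOrder(["a", "b", "c"], () => Math.random() * 50)`, should show the second version returning the input order on every run while the first may not.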

Why This Matters for Real Debugging

The test highlights a critical gap between what AI coding assistants promise and what they deliver. All three tools can spot obvious syntax errors. The challenge is finding bugs that don't announce themselves, the kind where console output actively misleads you.

Race conditions and non-deterministic ordering bugs are particularly nasty. They might work fine in testing, then fail unpredictably in production. An AI that catches these before they ship saves more than time. It saves the debugging session at 2 AM when your async calls suddenly stop behaving.
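Because a bug like this may pass a single test run, one rough way to surface it is to run the same async function many times and count how many distinct outputs appear. The helper below, `distinctOutputs`, is a hypothetical sketch rather than an established tool.

```javascript
// Runs `fn` `runs` times in sequence and returns the distinct outputs,
// compared by their JSON serialization.
async function distinctOutputs(fn, runs) {
  const seen = new Set();
  for (let i = 0; i < runs; i++) {
    seen.add(JSON.stringify(await fn()));
  }
  return [...seen];
}

// Example with a deterministic function: only one distinct output.
distinctOutputs(async () => [1, 2, 3], 5).then((outputs) => {
  console.log(outputs.length === 1 ? "stable across runs" : "varies across runs");
});
```

More than one distinct output is strong evidence of non-determinism. A single output across many runs is weaker evidence: it does not prove the absence of a race, only that none showed up this time.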

The inconsistency issue is equally important. If running the same prompt twice gives different answers, you can't trust any single response. You'd need to run multiple iterations and compare results, which defeats the purpose of quick AI-assisted debugging.

Practical Takeaways

- For tricky async bugs, Claude appears to have an edge in this particular test

- Gemini handles basic scoping issues well but may miss deeper problems

- Run your debugging prompt multiple times and compare responses for consistency

- Don't assume a fix is complete just because the AI says so. Verify the actual behavior
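The advice to compare responses can be made a little more systematic. The sketch below assumes you have manually noted which issues each response flagged; `summarizeFindings` and the labels are hypothetical, and the sample input is made up for illustration rather than taken from the test.

```javascript
// `runs` is an array of arrays of finding labels, one array per response.
// Returns which findings appeared in every run and which only in some.
function summarizeFindings(runs) {
  const counts = new Map();
  for (const findings of runs) {
    // Use a Set so a label repeated within one response counts once.
    for (const label of new Set(findings)) {
      counts.set(label, (counts.get(label) || 0) + 1);
    }
  }
  const consistent = [];
  const intermittent = [];
  for (const [label, count] of counts) {
    (count === runs.length ? consistent : intermittent).push(label);
  }
  return { consistent, intermittent };
}

// Illustrative input only: three imagined responses to the same prompt.
const summary = summarizeFindings([
  ["scoping", "race-condition"],
  ["scoping"],
  ["scoping", "race-condition"],
]);
console.log(summary.consistent);   // ["scoping"]
console.log(summary.intermittent); // ["race-condition"]
```

Findings that show up only intermittently are the ones most worth verifying by hand before trusting any single response.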

This was a single test with one specific JavaScript file. Different code, different bugs, and different complexity levels might produce different results. But for developers choosing an AI assistant for debugging work, it's useful data.

| AI Assistant | Scoping Bug | Async Race Condition | Index Assignment Bug | Consistency |
|---|---|---|---|---|
| Claude | Found | Found | Found | High |
| ChatGPT | Found | Partial | Partial | Medium |
| Gemini | Found | Missed | Missed | Low |

Frequently Asked Questions

Which AI is best for debugging JavaScript code?

In this specific test, Claude was the only AI to find all three bugs in a JavaScript file. However, results may vary depending on the type and complexity of bugs in your code.

Can AI assistants find race conditions in code?

Some can. Claude identified the async race condition in this test, while Gemini missed it entirely. ChatGPT had partial success. These subtle bugs remain challenging for AI tools.

Are AI debugging results consistent?

Not always. The test found that running the same prompt multiple times on Gemini produced different results. It's wise to verify AI suggestions and run prompts more than once for important debugging tasks.

Should I rely solely on AI for debugging?

No. AI assistants are useful for initial analysis and catching common issues, but they can miss subtle bugs or provide incomplete fixes. Always verify the suggested solution actually resolves your problem.

Source: MakeUseOf

Manaal Khan

Tech & Innovation Writer

Related Articles

Browse all

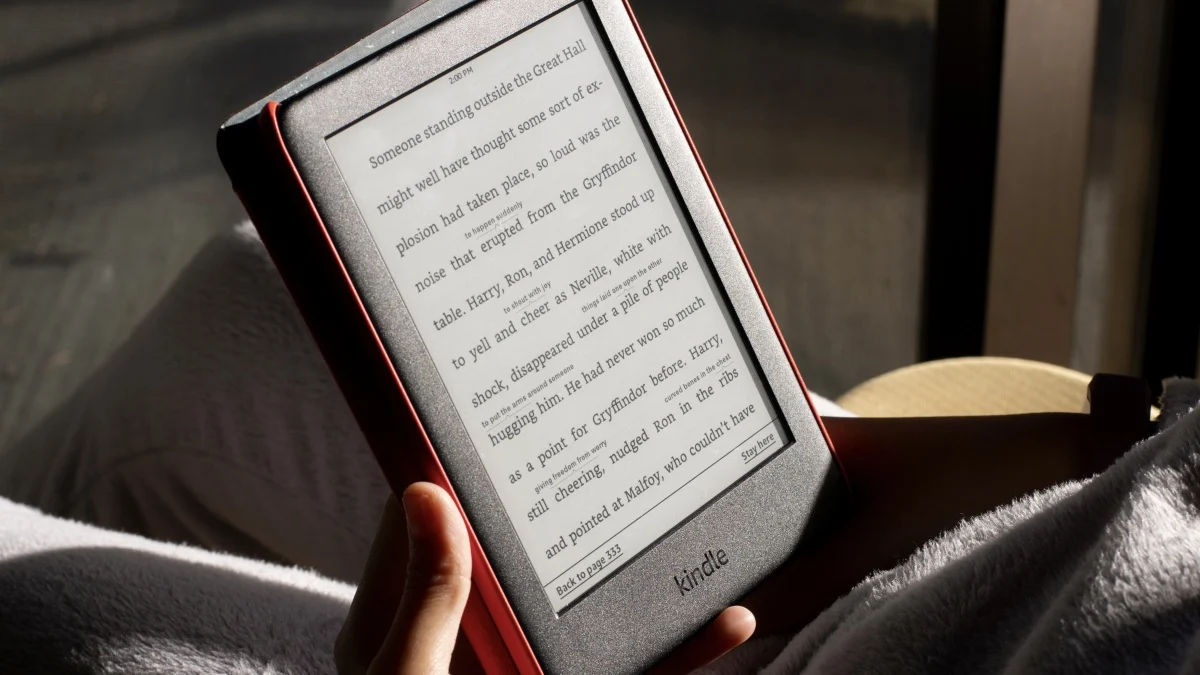

How to Jailbreak Your Kindle: Escape Amazon's Control Before They Brick Your E-Reader

Amazon is cutting off support for older Kindles starting May 2026, but you don't have to buy a new device. Jailbreaking your Kindle lets you install custom software like KOReader, read ePub files natively, and keep your e-reader alive for years to come.

X-Sense Smoke and CO Detectors at Home Depot: UL-Certified Alarms You Can Actually Trust

X-Sense just made their UL-certified smoke and carbon monoxide detectors available at Home Depot stores nationwide. The lineup includes wireless interconnected models that can link up to 24 units, 10-year sealed batteries, and smart features designed to cut down on those annoying false alarms that make people disable their detectors entirely.

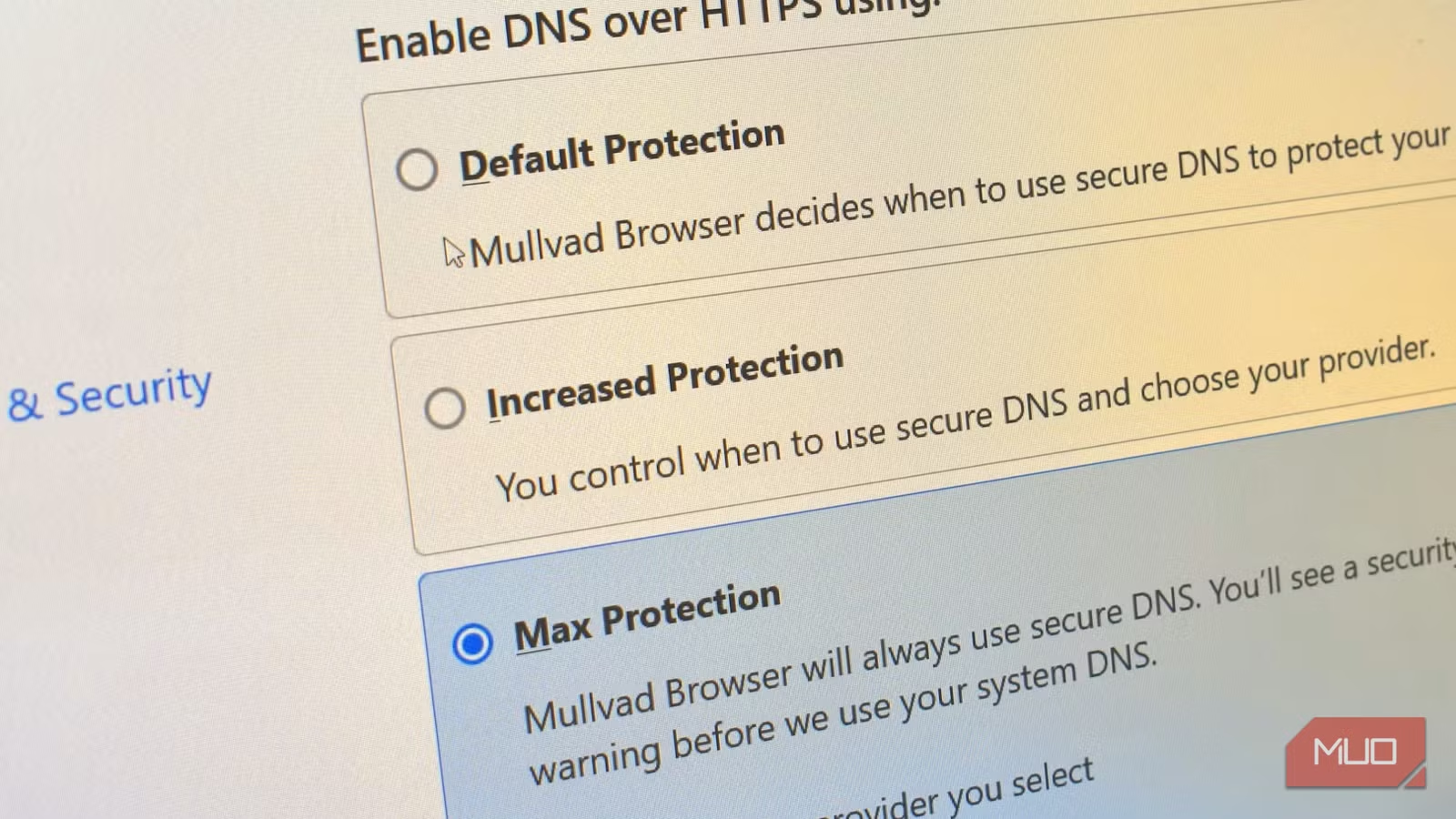

How to Change Your Browser's DNS Settings for Faster, Private Browsing in 2026

Your browser's default DNS settings are probably slowing you down and leaking your browsing history to your ISP. Here's why changing this one setting should be the first thing you do on any new device, and how to pick the right DNS provider for your needs.

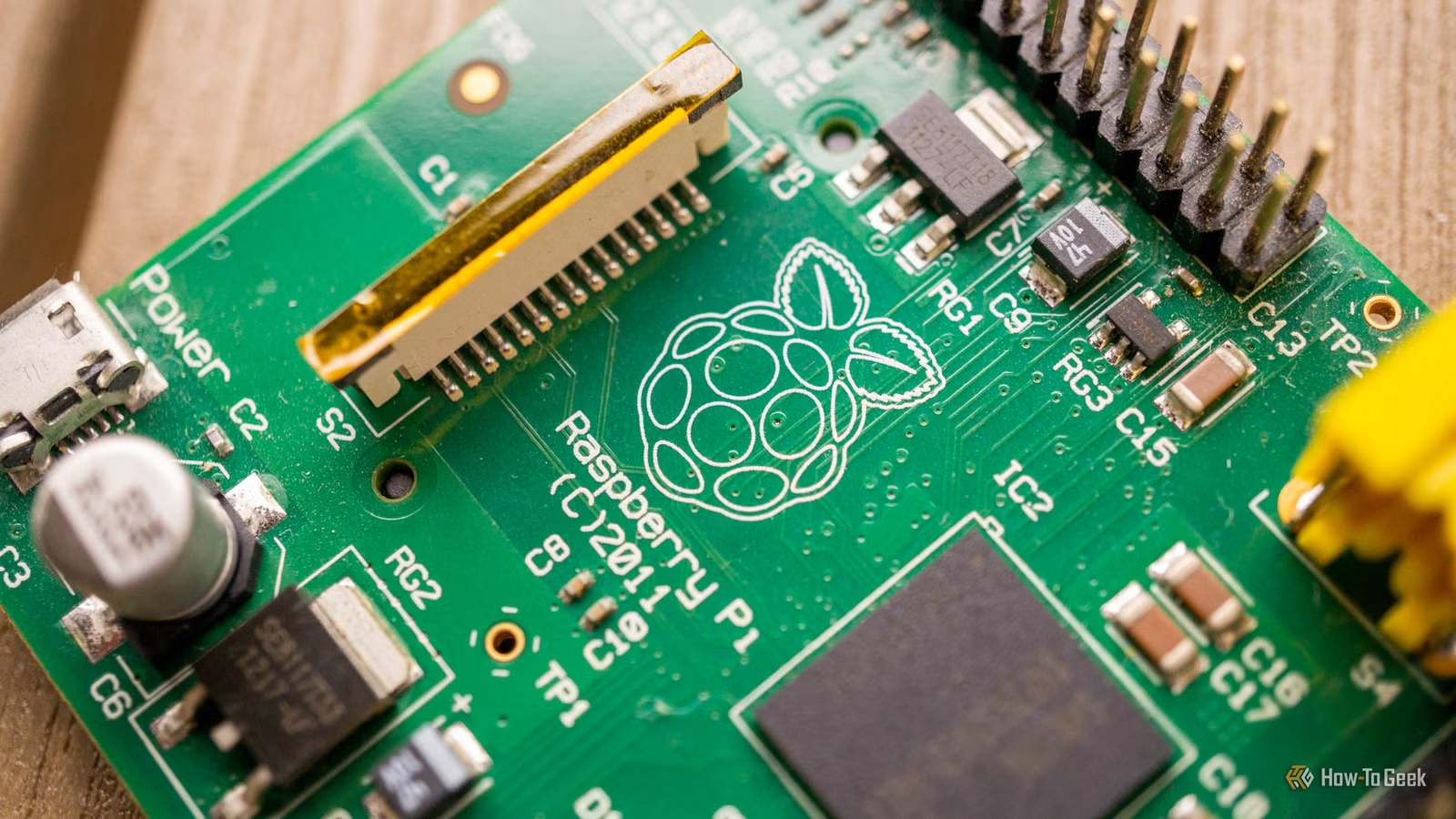

Raspberry Pi at 15: Why the King of Single-Board Computers Is Losing Its Crown

After 15 years of dominating the hobbyist computing scene, the Raspberry Pi faces serious competition from cheaper alternatives, supply chain headaches, and a market that's evolved past its original mission. Here's what's happening and what it means for your next project.

Also Read

Galaxy S26 Series Hits $200 Off, Motorola Razr 2026 Up for Pre-Order

Samsung's Galaxy S26 lineup sees its first significant discounts less than three months after launch, with the S26+ dropping to $890. Meanwhile, Motorola's Razr 2026 foldables open for pre-orders ahead of their May 21 ship date.

Microsoft Rejects Azure Vulnerability, Blocks CVE Assignment

A security researcher says Microsoft quietly patched a critical Azure Backup for AKS privilege escalation flaw after rejecting his report. CERT validated the vulnerability, but Microsoft blocked CVE issuance, leaving the researcher without formal recognition.

4 Emergency Car Gadgets That Stay in My Vehicle Year-Round

A mechanical engineer shares the four emergency tools he carries in his 4Runner. Three sit unused for months. One earns its keep weekly. All cost under $150 combined.