ChatGPT Prompt Engineering: MIT's Framework for Better AI Output

Key Takeaways

- Structured prompts with role assignments, constraints, and output formats dramatically improve AI response quality

- Asking AI what it doesn't know prevents costly decisions based on hallucinated or incomplete information

- Reverse-engineering successful prompts creates reusable templates that scale across teams

According to [Livemint](https://www.livemint.com/technology/tech-news/how-to-reverse-engineer-the-perfect-chatgpt-prompt-according-to-an-mit-professor-gemini-claude-ai-11776662638146.html), MIT professor Andrew Lo has developed a systematic framework for crafting AI prompts that transforms generic chatbot responses into actionable business intelligence. Speaking at Harvard, the director of MIT's Laboratory for Financial Engineering revealed techniques that separate casual AI users from those extracting real strategic value.

Read in Short

MIT's Andrew Lo argues that most AI users get poor results because they treat chatbots like Google searches. His framework: assign a specific role, provide detailed context, request structured outputs, and always ask what the AI is uncertain about. The payoff? Responses you can actually bring to a board meeting.

Why Most Business Leaders Get Garbage from ChatGPT

Lo doesn't mince words: "Garbage in, garbage out." That age-old computing proverb explains why executives who type "How should I approach Q3 strategy?" get responses they'd never show their board. The problem isn't the AI. It's the assumption that these tools understand context the way a human colleague would.

Here's what most leaders miss: Large language models have no memory of your company, your constraints, or your goals. Every conversation starts from zero. When you ask a vague question, the AI fills in blanks with generic assumptions. The result? "Gobbledy gook that will not be particularly helpful," as Lo puts it.

The MIT Prompt Framework: What to Include Every Time

Lo shared a detailed example from financial planning that translates directly to any business context. The structure has five components that transform AI from a search engine into a strategic advisor.

Lo's Five-Part Prompt Structure

1. **Role Assignment**: "Assume you are a fee-only fiduciary advisor"

2. **Context Dump**: Goals, constraints, tax bracket, assets, risk tolerance, timeline

3. **Structured Output Request**: Base case strategy, key assumptions, risks

4. **Failure Modes**: What could invalidate this plan

5. **Uncertainty Check**: What information are you missing?

Notice what's happening here. You're not asking for generic advice. You're creating a simulation where the AI plays a specific expert, works within your actual constraints, and delivers output in a format you can immediately use. This is the difference between "give me some ideas" and "give me a board-ready recommendation."
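One way to make the five parts hard to skip is to assemble the prompt in code. The sketch below is only an illustration: the `FivePartPrompt` class, its field names, and the sample context values are assumptions made for this example, not something Lo presented.

```python
from dataclasses import dataclass


@dataclass
class FivePartPrompt:
    """Hypothetical container for the five parts of the framework."""
    role: str                 # 1. role assignment
    context: dict[str, str]   # 2. context dump
    outputs: list[str]        # 3. structured output request

    def render(self) -> str:
        context_lines = "\n".join(f"- {k}: {v}" for k, v in self.context.items())
        output_lines = "\n".join(f"- {item}" for item in self.outputs)
        return (
            f"Assume you are {self.role}.\n\n"
            f"Context:\n{context_lines}\n\n"
            f"Provide:\n{output_lines}\n\n"
            # 4. failure modes and 5. uncertainty check are always appended
            "Failure modes: what could invalidate this plan?\n"
            "Uncertainty check: what are you uncertain about, "
            "and what information are you missing?"
        )


prompt = FivePartPrompt(
    role="a fee-only fiduciary advisor",
    # Illustrative placeholder values, not taken from Lo's example
    context={"Goal": "retire in 20 years", "Risk tolerance": "moderate"},
    outputs=["Base case strategy", "Key assumptions", "Risks"],
)
print(prompt.render())
```

Because the failure-mode and uncertainty questions are hard-coded into `render`, nobody on the team can forget them when filling in the other three parts.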

ChatGPT Prompt Engineering for Strategic Decisions

Let's translate Lo's framework into scenarios business leaders actually face. The principles remain constant: role, context, structure, failure modes, uncertainty.

| Scenario | Weak Prompt | Lo Framework Prompt |
|---|---|---|
| Vendor Selection | Which CRM should I use? | Act as a B2B SaaS analyst. Given: 50-person sales team, $30K annual budget, Salesforce integration required, 6-month implementation window. Provide: Top 3 options with pricing, integration complexity, hidden costs, and what factors would make each the wrong choice. |
| Market Entry | Should we expand to Europe? | Act as an international expansion strategist. Context: $5M ARR SaaS, 80% US revenue, no EU entity, no GDPR expertise in-house. Provide: Go/no-go recommendation, required investments, timeline to first EU revenue, regulatory risks, and what assumptions could invalidate this analysis. |
| Hiring Decisions | How do I hire a CTO? | Act as a technical recruiting advisor. Context: Series A startup, $2M runway, current team of 8 engineers, no senior technical leadership. Provide: CTO vs VP Engineering analysis, compensation benchmarks, red flags in candidates, interview process, and what you're uncertain about given this limited context. |

The weak prompts aren't stupid. They're how we naturally communicate with humans who share context. But AI doesn't share context. Every piece of information you withhold is a gap the model fills with generic assumptions.

How to Ask AI What It Doesn't Know

Lo's most counterintuitive advice: always ask the AI about its limitations. This matters because LLMs deliver wrong answers with the same confident tone as correct ones. Without explicitly asking, you won't know when the model is guessing.

“Always ask the LLM, what are you uncertain about? What information are you missing? Because you want to understand the limitations of what they come up with.”

— Andrew Lo, MIT

This technique can head off expensive mistakes. When you ask ChatGPT for competitive analysis, it might confidently cite competitor pricing that is two years out of date. When you ask "What are you uncertain about in this analysis?" the model will often admit its information might be stale. That's your signal to verify before making decisions.
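If prompts are sent from scripts rather than typed by hand, the uncertainty check can be appended automatically. This is a minimal sketch: the helper name `add_uncertainty_check` is hypothetical, and the two questions are adapted from Lo's quote above.

```python
# The two questions Lo recommends asking, kept in one place so every prompt uses the same wording.
UNCERTAINTY_QUESTIONS = (
    "What are you uncertain about in this analysis?",
    "What information are you missing?",
)


def add_uncertainty_check(prompt: str) -> str:
    """Append the uncertainty questions unless the prompt already ends with them."""
    suffix = "\n".join(UNCERTAINTY_QUESTIONS)
    base = prompt.rstrip()
    if base.endswith(suffix):
        return base
    return f"{base}\n\n{suffix}"


print(add_uncertainty_check("Summarize our top three competitors' pricing."))
```

The function is idempotent, so wrapping a prompt twice does not duplicate the questions.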


The Reverse-Engineering Technique for Prompt Templates

Here's where Lo's approach gets powerful at scale. After you finally get a useful response through back-and-forth conversation, ask the chatbot directly: "What prompt should I have used to get this response in one shot?"

The AI will generate a structured prompt template you can save, share with your team, and reuse. This transforms tribal knowledge into organizational capability. Your best prompt engineers don't hoard techniques. They create templates others can use.

- Have a detailed conversation to reach a useful output

- Ask: "What prompt would have generated this response directly?"

- Save the template to your team's prompt library

- Test the template with variations to ensure reliability

- Document which context variables need updating for each use case
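The last two steps, testing variations and documenting context variables, can be sketched with Python's standard `string.Template`. The template text below is a hypothetical stand-in for whatever the chatbot returns, with values borrowed from the vendor-selection row in the table above; `required_variables` and `fill` are illustrative helper names.

```python
from string import Template

# Hypothetical template, standing in for what a chatbot might return when asked
# "What prompt would have generated this response directly?"
VENDOR_TEMPLATE = Template(
    "Act as a B2B SaaS analyst. Given: ${team_size}-person sales team, "
    "${budget} annual budget, ${integration} integration required. "
    "Provide: top 3 options with pricing, hidden costs, and what would "
    "make each the wrong choice."
)


def required_variables(template: Template) -> set[str]:
    """Return the placeholder names that must be supplied for each use case."""
    names = set()
    for match in template.pattern.finditer(template.template):
        name = match.group("named") or match.group("braced")
        if name:
            names.add(name)
    return names


def fill(template: Template, values: dict[str, str]) -> str:
    """Fill the template, failing loudly if a context variable was forgotten."""
    missing = required_variables(template) - set(values)
    if missing:
        raise ValueError(f"Missing context variables: {sorted(missing)}")
    return template.substitute(values)


print(sorted(required_variables(VENDOR_TEMPLATE)))
print(fill(VENDOR_TEMPLATE, {"team_size": "50", "budget": "$30K", "integration": "Salesforce"}))
```

Listing the placeholders programmatically gives the team a ready-made answer to "which variables need updating" each time the template is reused.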

This is how consulting firms are building AI-powered service offerings. They're not just using ChatGPT. They're creating prompt libraries that encode their methodology. A junior analyst with the right prompt template can produce output that previously required senior expertise.

Why Preparation Beats Improvisation with AI

Lo's most old-school advice: step away from the computer before you start prompting. "It does actually make sense to spend a little bit of time away from the computer and making a list of your questions," he said, comparing it to preparing for a meeting with a professional advisor.

This resonates with how effective leaders use any strategic tool. You don't walk into a board meeting without an agenda. You don't hire a consultant without defining the scope. Why would you approach AI differently?

The preparation checklist is simple: What decision am I trying to make? What constraints must the answer respect? What format would make this immediately useful? What would make this answer wrong?
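For teams that prefer a form over a notepad, the four checklist questions map onto a small data structure. This is a sketch under assumptions: the `PrepChecklist` class and its field names are invented here to mirror the questions above.

```python
from dataclasses import dataclass, fields


@dataclass
class PrepChecklist:
    """Hypothetical record of the four preparation questions."""
    decision: str = ""                   # What decision am I trying to make?
    constraints: str = ""                # What constraints must the answer respect?
    output_format: str = ""              # What format would make this immediately useful?
    what_would_make_it_wrong: str = ""   # What would make this answer wrong?

    def unanswered(self) -> list[str]:
        """Names of the questions still left blank."""
        return [f.name for f in fields(self) if not getattr(self, f.name).strip()]


prep = PrepChecklist(decision="Pick a CRM vendor", constraints="$30K annual budget")
print(prep.unanswered())
```

An empty result from `unanswered()` is a reasonable signal that the preparation is done and it is time to start prompting.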


Building Prompt Engineering into Your Organization

The companies gaining competitive advantage from AI aren't just using these tools. They're systematizing how they use them. This means prompt libraries, style guides, and shared learning about what works.

- Create a shared document for successful prompts by department

- Include context variables that need updating (budget figures, team size, timelines)

- Note which AI models work best for which task types

- Document failure modes: prompts that consistently produce unusable output

- Schedule monthly reviews to update templates as AI capabilities evolve

Finance teams might have templates for scenario modeling. Marketing might have templates for competitive messaging analysis. Engineering might have templates for architecture review. The specifics vary. The principle doesn't: capture what works, share it widely, iterate constantly.
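A shared prompt library does not need special tooling to start. The sketch below shows one possible record shape covering the list above, plus a check for templates that have missed their monthly review. The `PromptEntry` fields, the 30-day window, and the sample entries are all assumptions for illustration.

```python
from dataclasses import dataclass, field
from datetime import date, timedelta


@dataclass
class PromptEntry:
    """Hypothetical library record for one saved prompt template."""
    name: str
    department: str
    template: str
    context_variables: list[str]                              # values to update per use
    best_models: list[str] = field(default_factory=list)      # which models handle it well
    known_failures: list[str] = field(default_factory=list)   # documented failure modes
    last_reviewed: date = field(default_factory=date.today)


def due_for_review(entries: list[PromptEntry], today: date, max_age_days: int = 30) -> list[str]:
    """Return names of entries last reviewed more than max_age_days before today."""
    cutoff = today - timedelta(days=max_age_days)
    return [entry.name for entry in entries if entry.last_reviewed < cutoff]


library = [
    PromptEntry("scenario-model", "Finance", "Act as ...", ["budget"],
                last_reviewed=date(2025, 1, 5)),
    PromptEntry("architecture-review", "Engineering", "Act as ...", ["team_size"],
                last_reviewed=date(2025, 3, 1)),
]
print(due_for_review(library, today=date(2025, 3, 10)))
```

Passing `today` explicitly keeps the check easy to test; a scheduled job could call it with `date.today()`.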

Frequently Asked Questions About AI Prompt Engineering


How much time should leaders invest in learning prompt engineering?

Lo's framework can be learned in an afternoon. The real investment is building the habit of structured prompting and creating templates for recurring tasks. Most leaders see immediate improvement with 2-3 hours of deliberate practice.

Does this work with Claude and Gemini, or just ChatGPT?

The framework applies to all major LLMs. The principles of role assignment, context provision, and structured output requests work across ChatGPT, Claude, Gemini, and enterprise AI tools. Some models handle certain prompt structures better than others, so testing across platforms is worthwhile.

Should companies hire dedicated prompt engineers?

For most organizations, embedding prompt skills across existing roles is more effective than dedicated hires. Exceptions: companies building AI-powered products, consulting firms scaling AI-assisted services, or organizations with heavy research workloads.

What's the ROI of better prompt engineering?

Direct measurement is difficult, but proxies include: reduced time to useful AI output (often 3-5x improvement), fewer iterations needed per task, and increased confidence in AI-assisted decisions. Some enterprise users report saving 5-10 hours weekly per knowledge worker.

How do I know if AI output is reliable enough for business decisions?

Lo's uncertainty check is essential: always ask what the AI doesn't know. Beyond that, verify any factual claims, cross-reference with domain experts for high-stakes decisions, and treat AI output as a first draft requiring human review.


Logicity's Take

We've built AI agents for clients using Claude's API and n8n automation, so Lo's framework resonates deeply with what we see in production systems. The gap between a well-structured prompt and a vague one isn't just response quality. It's the difference between AI that automates reliably and AI that requires constant human babysitting.

What Lo doesn't mention, but we've learned through implementation: prompt engineering is just the first layer. When you're building business-critical AI workflows, you need error handling for when prompts produce unexpected outputs, version control for prompt templates, and monitoring to catch when model updates break existing prompts.

For Indian businesses specifically, we'd add: test prompts with India-specific context. Global AI models sometimes default to US assumptions about regulations, market dynamics, and business practices. A prompt that works for US tax planning won't automatically work for GST scenarios. The framework is universal. The context is local.
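Two of those layers, versioning templates and catching unexpected outputs, can be approximated with the standard library alone. The following is a rough sketch, not a description of any production system: the function names are hypothetical, and the substring check is a deliberately simple stand-in for real output validation.

```python
import hashlib


def template_version(template: str) -> str:
    """Short content hash, so any edit to a template yields a new version id."""
    return hashlib.sha256(template.encode("utf-8")).hexdigest()[:12]


def missing_sections(response: str, required: list[str]) -> list[str]:
    """Return required section names that do not appear in the model's response."""
    lowered = response.lower()
    return [section for section in required if section.lower() not in lowered]


template = "Provide: base case strategy, key assumptions, risks."
response = "Base case strategy: ...\nKey assumptions: ..."  # illustrative model output

print(template_version(template))
print(missing_sections(response, ["Base case strategy", "Key assumptions", "Risks"]))
```

Logging the version id alongside each response makes it possible to tell whether a drop in quality came from a template edit or from a model update.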

Need Help Implementing This?

Logicity builds AI automation systems that go beyond individual prompts. We help businesses create prompt libraries, integrate AI into existing workflows, and build reliable agent systems that scale. If you're moving from casual AI use to systematic implementation, let's talk about what that looks like for your organization.

Source: mint / Aman Gupta

Huma Shazia

Senior AI & Tech Writer

Related Articles

Browse all

Robotaxi Companies Are Hiding How Often Humans Take the Wheel

Autonomous vehicle firms like Waymo and Tesla are under scrutiny for refusing to disclose how often remote operators step in to control their self-driving cars. A Senate investigation reveals major gaps in transparency, raising safety and accountability concerns.

Wisconsin Governor Throws a Wrench in Age Verification Plans

Wisconsin Governor Tony Evers has vetoed a bill that would have required residents to verify their age before accessing adult content online, citing concerns over privacy and data security. This move comes as several other states have already implemented similar age check requirements. The veto has significant implications for the future of online age verification.

Apple's App Store Empire Under Siege: The Battle for the Future of Tech

The long-running feud between Apple and Epic Games has reached a boiling point, with Apple preparing to take its case to the Supreme Court. The tech giant is fighting to maintain control over its App Store, while Epic Games is pushing for more freedom for developers. The outcome could have far-reaching implications for the entire tech industry.

Tesla's Remote Parking Feature: The Investigation That Didn't Quite Park Itself

The US auto safety regulators have closed their investigation into Tesla's remote parking feature, but what does this mean for the future of autonomous driving? We dive into the details of the investigation and what it reveals about the technology. The National Highway Traffic Safety Administration found that crashes were rare and minor, but the investigation's closure doesn't necessarily mean the feature is completely safe.

Also Read

8 Sci-Fi and Horror Books Releasing June 2026

June 2026 delivers a strong lineup of new releases spanning space opera, body horror, magical realism, and short fiction. Highlights include Peter F. Hamilton's EXODUS tie-in novel and Daniel Kraus's hybrid sci-fi horror The Sixth Nik.

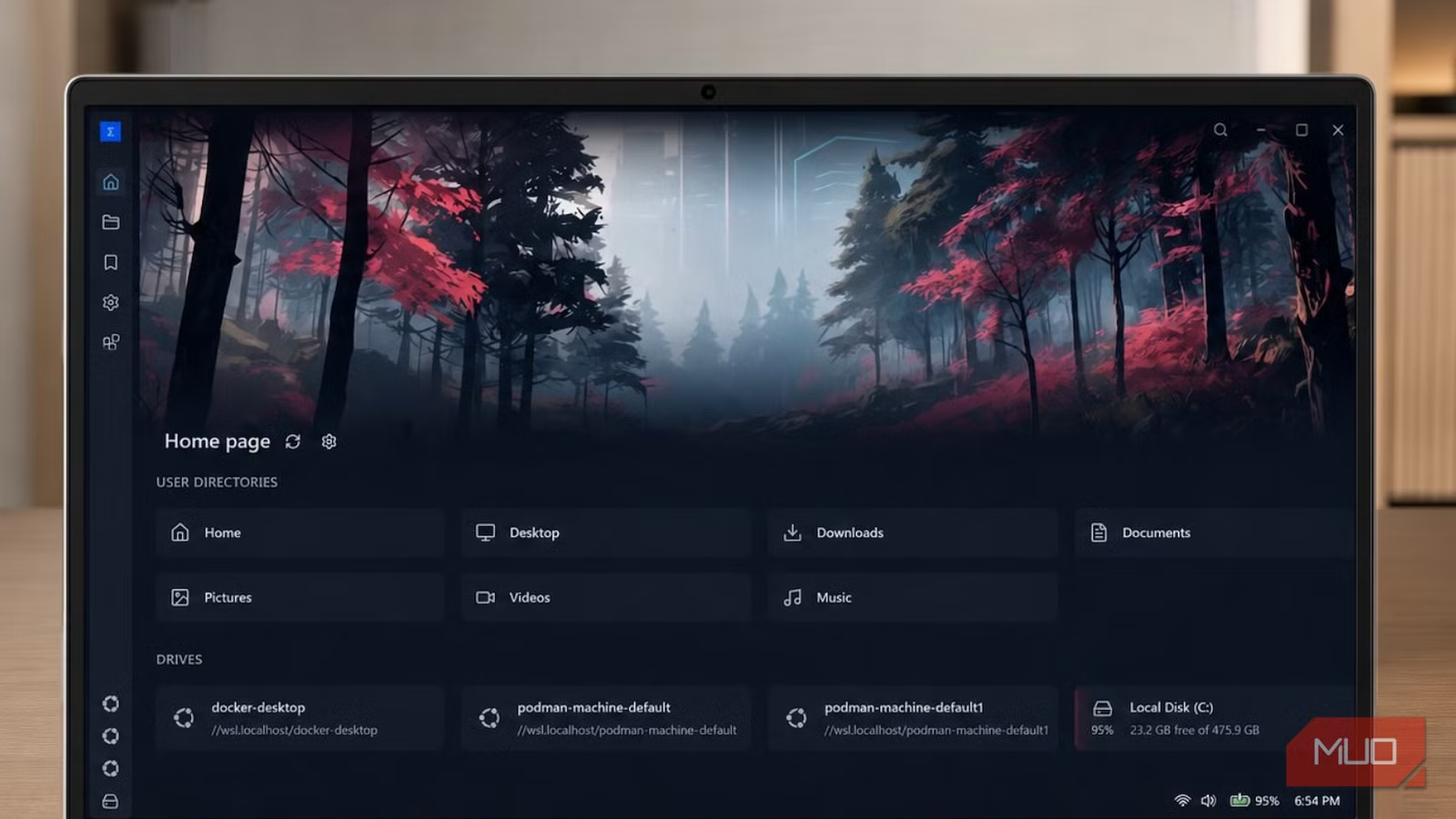

Sigma File Manager Shows What Windows Explorer Should Be

Windows File Explorer hasn't fundamentally changed since the Windows 7 era. Sigma File Manager, a free open-source alternative, demonstrates what modern file management could look like with better navigation, tagging, and project-focused workflows.

This $199 Adapter Brings CarPlay Back to GM EVs

General Motors removed Apple CarPlay and Android Auto from its electric vehicles, but a third-party adapter called EV Play LT restores both features. The device works on 2024-2026 model year Chevy, Cadillac, and GMC EVs, though GM could disable it via software update.