AI Vendor Lock-In Risk: Anthropic Suspensions Hit Fintech

Key Takeaways

- Single AI provider dependency can halt operations instantly with no recourse

- Appeals processes at major AI vendors often lack enterprise-grade SLAs

- Multi-provider AI strategies cost more upfront but protect business continuity

According to [Livemint](https://www.livemint.com/technology/tech-news/fintech-cto-lashes-out-at-anthropic-over-mass-claude-suspensions-warns-developers-never-put-all-your-eggs-in-one-baske-11776645648438.html), Anthropic abruptly suspended more than 60 Claude AI accounts belonging to Belo, a Latin American fintech, temporarily crippling the startup's internal workflows and sparking a public warning about AI vendor dependency risks.

Here's what should concern every executive reading this: a legitimate fintech company lost access to a critical business tool overnight. No phone call. No account manager. No SLA-backed response time. The only recourse? A Google Form. If you're building AI into your operations, this isn't just Belo's problem. It's a preview of your worst-case scenario.

What Happened to Belo's Claude AI Access?

Belo, a Latin American fintech specializing in international transfers and currency exchange, had integrated Claude AI deeply into its operations. Over 60 employees relied on Claude for daily workflows, integrations, and conversation histories. Then, without warning, Anthropic's Safeguards Team pulled the plug.

“Suddenly, more than 60 people were left without a fundamental tool for getting work done. Integrations, skills, conversation histories: everything lost or, in the best-case scenario, on indefinite hold.”

— Pato Molina, Belo CTO

The suspension notice cited "a high volume of signals associated with your account which violate our Usage Policy" but provided zero specifics. Molina publicly stated he had no idea what policy they allegedly violated. The company received an email, and that was it. More than 15 hours later, after Molina's posts went viral on X, Anthropic restored access and apologized. It was a false positive.

Executive Summary

A fintech lost 60+ Claude accounts to automated suspension. No warning, no specifics, no enterprise support channel. Resolution came only after public pressure on social media. The incident took 15+ hours to resolve and was ultimately attributed to a false positive.

Why AI Vendor Lock-In Is a Board-Level Risk

Most businesses evaluating AI focus on capability comparisons: which model writes better code, which handles context windows better, which offers the best pricing. Few consider what happens when access disappears. But vendor lock-in in AI isn't like traditional SaaS lock-in. It's worse.

- Conversation histories don't transfer between providers

- Custom integrations and workflows must be rebuilt from scratch

- Employee muscle memory and prompt expertise become worthless

- Automated systems dependent on specific APIs break immediately

- No industry-standard data portability exists for AI workloads

When Salesforce suspends your account, you lose CRM access but your data is exportable. When your AI provider suspends you, you lose the tool AND the institutional knowledge embedded in months of conversations and refined workflows.

How Much Does AI Provider Redundancy Cost?

Molina acknowledged the tradeoffs openly. Belo had discussed multi-provider strategies internally before this incident. The main advantage is obvious: operational continuity during outages. But the costs are real.

| Factor | Single Provider | Multi-Provider Strategy |
|---|---|---|
| Monthly API costs | Baseline | +30-50% (duplicate capacity) |
| Integration maintenance | 1x | 2-3x engineering hours |
| Staff training | One platform | Multiple platforms |
| Prompt optimization | Single optimization | Per-provider tuning |
| Switching speed during outage | N/A (no alternative) | Minutes to hours |
| Data/history portability | Complete loss risk | Partial preservation |

Molina noted that Belo has access to Gemini, but switching would mean losing conversation history and integrated processes. Many companies, he explained, end up "marrying" certain providers with good service track records. That marriage works until it doesn't.

What Should CTOs Do About AI Vendor Risk?

The Belo incident isn't an argument against using AI. It's an argument for treating AI vendors like critical infrastructure, which means redundancy planning, SLA negotiations, and exit strategies.

- Audit your AI dependency map: Which workflows break if your primary AI provider goes dark tomorrow? List every integration, automation, and daily use case.

- Negotiate enterprise agreements: Consumer and prosumer tiers offer no SLAs. If AI is business-critical, you need contractual response times and escalation paths.

- Build abstraction layers: API wrappers that can route to multiple providers cost engineering time upfront but enable rapid switching during incidents.

- Export and backup: Regular exports of prompts, conversation logs, and system configurations. Even if not portable, documentation enables faster rebuilding.

- Test your failover: Quarterly drills where teams work with secondary providers identify gaps before emergencies.
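The abstraction-layer and failover-drill items above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not how Belo or any vendor implements it: `Provider`, `FailoverRouter`, `ProviderError`, and the two stand-in backends are hypothetical names, and a real backend would wrap the vendor's own SDK call.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


class ProviderError(Exception):
    """Raised by a backend that cannot serve a request (outage, suspension, etc.)."""


@dataclass
class Provider:
    name: str
    complete: Callable[[str], str]  # hypothetical: wraps one vendor's SDK call


class FailoverRouter:
    """Tries providers in priority order and returns the first successful reply."""

    def __init__(self, providers: List[Provider]):
        if not providers:
            raise ValueError("at least one provider is required")
        self.providers = providers

    def complete(self, prompt: str) -> Tuple[str, str]:
        errors = []
        for provider in self.providers:
            try:
                return provider.name, provider.complete(prompt)
            except ProviderError as exc:
                # Record the failure and fall through to the next provider.
                errors.append(f"{provider.name}: {exc}")
        raise ProviderError("all providers failed: " + "; ".join(errors))


# Stand-in backends for a failover drill; real ones would call vendor SDKs.
def suspended_backend(prompt: str) -> str:
    raise ProviderError("account suspended")


def healthy_backend(prompt: str) -> str:
    return f"echo: {prompt}"


router = FailoverRouter([
    Provider("primary", suspended_backend),
    Provider("secondary", healthy_backend),
])
used, reply = router.complete("summarize today's transfers")
print(used, reply)
```

Because application code only ever calls `router.complete`, swapping or reordering vendors is a configuration change rather than a rewrite. A quarterly drill can be as simple as placing a deliberately failing backend first, as in this example.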

Molina's parting shot was telling: if the Claude issue hadn't been resolved, Belo would have switched to OpenAI's Codex for development. Having that option ready turned a potential crisis into a 15-hour inconvenience.

Is Enterprise AI Support Ready for Business-Critical Use?

The uncomfortable truth exposed by this incident: most AI providers haven't built enterprise-grade support infrastructure. Anthropic's appeal process was a Google Form. There was no direct phone line, no dedicated account manager, no guaranteed response time. For a company valued at $60 billion, that's a gap.

✅ Pros

- AI capabilities have reached enterprise-grade quality

- API reliability for major providers exceeds 99.9% uptime

- Pricing has become predictable and scalable

- Integration documentation is comprehensive

❌ Cons

- Support infrastructure lags behind enterprise expectations

- Automated moderation creates false positive risks

- No industry standards for data portability

- Account suspension processes lack transparency

- Social media often more effective than official channels

This isn't unique to Anthropic. OpenAI, Google, and other providers have similar gaps. The AI industry grew so fast that customer success infrastructure couldn't keep pace. For businesses, that means extra due diligence before treating these tools as mission-critical.

The Real Cost of Social Media Being Your Escalation Path

Molina got his accounts restored. But he had 15,000+ followers and his post went viral. What about the startup founder with 200 followers facing the same situation? They're filling out that Google Form and hoping for the best.

“They restored our access. Apparently it was a false positive. And as always: Twitter is a service.”

— Pato Molina, after account restoration

When social media becomes your best escalation path, you've discovered a broken support system. It works for those with platforms. It fails everyone else. Business leaders should factor this reality into vendor evaluations: does this company have support infrastructure, or are they relying on viral pressure to surface legitimate issues?

Logicity's Take

We've built AI agent systems using Claude's API for clients across India and the Middle East. This incident hits close to home. Here's what we tell our clients: Claude is genuinely excellent for code generation and complex reasoning tasks. We use it daily. But we also build abstraction layers into every production system. Our n8n automations can route to OpenAI or Gemini within minutes. Our Next.js applications use provider-agnostic interfaces. This isn't paranoia; it's engineering maturity.

The Belo situation also highlights why API access differs from account-based access. Programmatic API usage with proper monitoring gives you visibility into rate limits and potential issues before they become suspensions. Consumer account usage at scale, which appears to be part of what Belo was doing, creates patterns that automated systems can misread. If you're scaling AI usage beyond a handful of users, talk to sales teams about enterprise agreements. The extra cost buys you an actual human to call when things break.

For Indian startups watching this unfold: your business continuity plan should include AI provider failure scenarios. Test them quarterly. The 15 hours Belo lost would be catastrophic for a company during a funding round or product launch.

Frequently Asked Questions

How much does AI vendor redundancy cost for a mid-size company?

Expect 30-50% higher API costs for maintaining secondary provider capacity, plus 2-3x engineering hours for integration maintenance. For a company spending $5,000/month on AI APIs, the API uplift alone brings the bill to $6,500-7,500; budget $7,500-10,000 for true redundancy, which leaves room for the added engineering hours.
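The API-only part of that estimate is easy to check. The sketch below applies the 30-50% uplift to the $5,000 example. The function name and parameters are illustrative, and it deliberately leaves out engineering time, which the figures above do not break down.

```python
def api_cost_with_redundancy(monthly_spend, uplift_low_pct=30, uplift_high_pct=50):
    """Return the (low, high) monthly API cost after adding secondary capacity."""
    low = monthly_spend * (100 + uplift_low_pct) / 100
    high = monthly_spend * (100 + uplift_high_pct) / 100
    return low, high


low, high = api_cost_with_redundancy(5000)
print(f"${low:,.0f} to ${high:,.0f} per month")
```

Anything budgeted above that range has to come from costs other than duplicate API capacity, such as integration maintenance and per-provider prompt tuning.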

Can I transfer Claude conversation history to another AI provider?

No. There's no industry-standard format for AI conversation portability. You can export some data manually, but integrated workflows and conversation context don't transfer. This is the core lock-in problem.
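Manual exports are still worth keeping in a vendor-neutral shape. The sketch below assumes, purely for illustration, that exported conversations arrive as a list of turns with `role` and `content` keys; that input shape, the field names in the output, and the helper names are hypothetical rather than any provider's export format. It writes one JSON object per line so the log can be searched or replayed elsewhere.

```python
import io
import json
from typing import Dict, Iterable, List, TextIO

ALLOWED_ROLES = {"system", "user", "assistant"}


def normalize_turns(conversation_id: str,
                    turns: Iterable[Dict[str, str]]) -> List[Dict[str, object]]:
    """Convert turns into flat, provider-neutral records (hypothetical schema)."""
    records = []
    for index, turn in enumerate(turns):
        role = turn["role"]
        if role not in ALLOWED_ROLES:
            raise ValueError(f"unexpected role: {role!r}")
        records.append({
            "conversation_id": conversation_id,
            "turn": index,
            "role": role,
            "text": turn["content"],
        })
    return records


def write_jsonl(records: Iterable[Dict[str, object]], out: TextIO) -> None:
    """Write one JSON object per line (JSON Lines)."""
    for record in records:
        out.write(json.dumps(record, ensure_ascii=False) + "\n")


sample_turns = [
    {"role": "system", "content": "You review wire-transfer descriptions."},
    {"role": "user", "content": "Flag anything unusual in batch 12."},
    {"role": "assistant", "content": "Nothing unusual found."},
]
buffer = io.StringIO()
write_jsonl(normalize_turns("conv-001", sample_turns), buffer)
exported = buffer.getvalue()
print(exported)
```

This preserves the text of past conversations, not the integrations or the context a provider holds server-side, so it shortens a rebuild rather than removing the lock-in.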

What SLAs do AI providers offer for enterprise accounts?

They vary significantly. Most consumer and pro tiers offer no SLAs. Enterprise agreements can include 24-hour response times and dedicated support, but you'll need to negotiate these terms directly with sales teams.

Is it worth switching AI providers after this Anthropic incident?

Switching providers doesn't solve the underlying problem since any single provider creates dependency risk. Instead, implement a multi-provider strategy with abstraction layers that allow rapid switching during incidents.

How long does it take to implement AI provider redundancy?

For existing integrations, expect 2-4 weeks of engineering work to build abstraction layers and test secondary providers. New projects should build provider-agnostic from day one, adding minimal overhead to initial development.

What This Means for Your AI Strategy

The Belo incident is a warning shot, not an anomaly. As AI becomes embedded in business operations, vendor dependency risks scale proportionally. Companies that treated AI as a nice-to-have tool are now discovering it's become critical infrastructure. And critical infrastructure requires redundancy.

The businesses that will thrive aren't those avoiding AI. They're the ones implementing it with the same operational rigor they apply to cloud infrastructure, payment systems, and other mission-critical dependencies. That means multiple providers, clear escalation paths, and regular failover testing.

Need Help Implementing This?

Logicity builds AI-powered systems with redundancy baked in. Our Claude API integrations include abstraction layers for rapid provider switching, and our n8n automations can route to multiple AI backends. If you're scaling AI usage and want to avoid single-vendor dependency, let's talk about building it right from the start.

Source: mint / Aman Gupta


Manaal Khan

Tech & Innovation Writer

Related Articles

Browse allZuckerberg's Superintelligence Lab Faces Setback

The first AI model from Zuckerberg's superintelligence lab has failed to impress compared to its rivals, sparking concerns about the lab's direction. We take a closer look at what happened and why it matters.

Muse Spark Launch Propels Meta AI App to Top 5

The recent launch of Muse Spark has significantly boosted the popularity of Meta AI app, pushing it into the top 5. We explore what this means for the AI landscape.

Meta's Muse Spark AI Model Lags Behind ChatGPT and Claude

Meta's Muse Spark AI model still can't outperform ChatGPT and Claude in key areas, despite its advancements. We explore what this means for the AI landscape.

Meta Launches Muse Spark AI To Challenge ChatGPT

Meta launches Muse Spark AI to challenge ChatGPT and Claude, we explore what this means for the AI landscape. Muse Spark AI is a significant development in the AI chatbot space.

Also Read

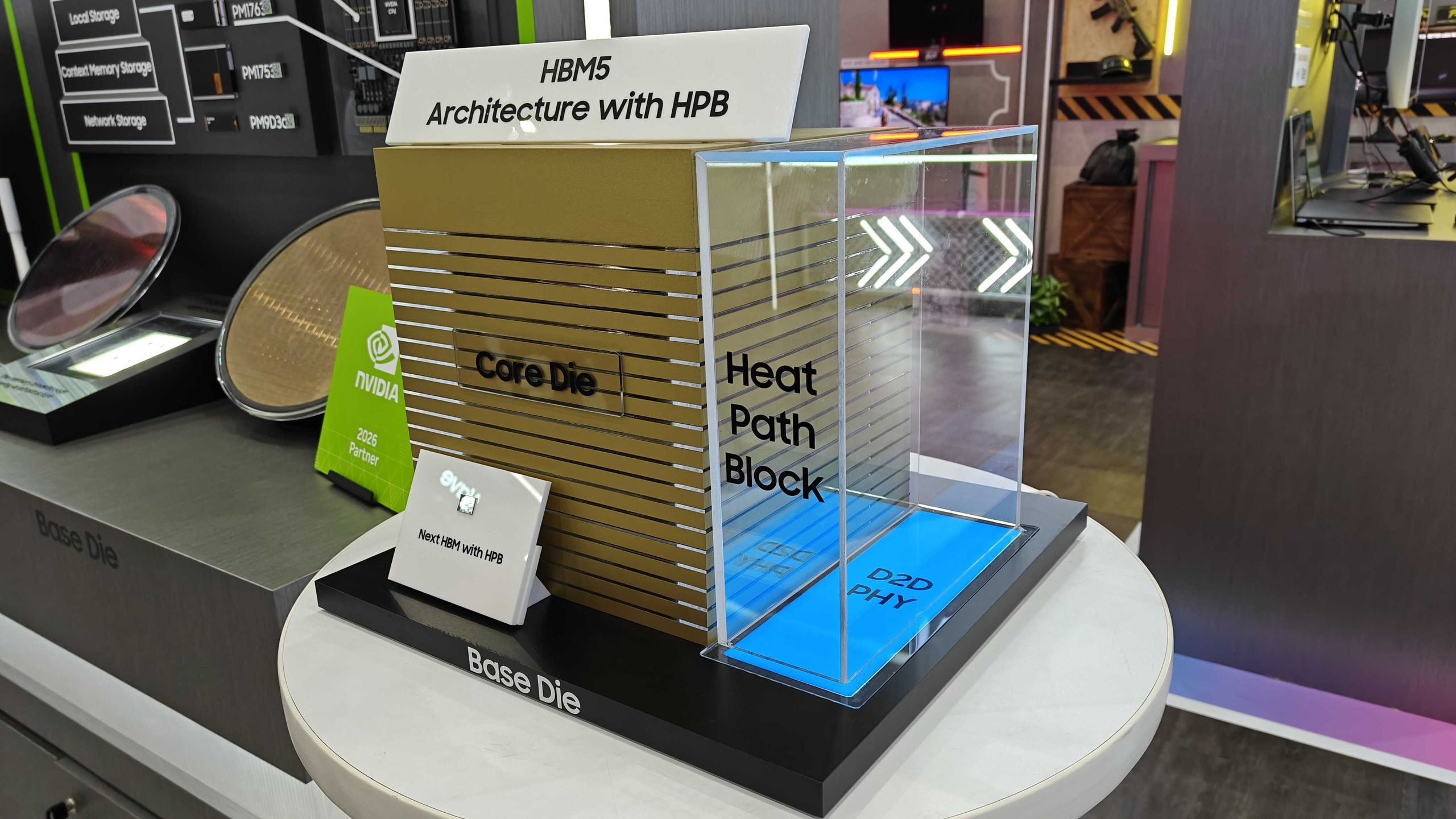

Samsung Shows HBM5 Mockup With Heat Path Block Cooling

Samsung unveiled its first physical HBM5 memory mockup at Computex 2026, featuring a new thermal design called Heat Path Block. The company confirmed it will manufacture HBM5's base die on its 2nm process, setting up a direct thermal engineering competition with SK hynix.

CISA Warns of Active Exploits Targeting Android, Linux Flaws

The U.S. Cybersecurity and Infrastructure Security Agency added two vulnerabilities to its Known Exploited Vulnerabilities catalog. Federal agencies must patch by June 5, 2026. The flaws affect Android 14-16 and multiple Linux kernel versions.

6 Ways to Stay Cool Indoors This Summer Without Breaking the Bank

As summer electricity bills hit a projected 12-year high of $784, homeowners are rethinking indoor cooling strategies. From supercooling techniques to strategic window management, here's how to survive the heat without destroying your budget.