AI Compute Costs 2026: Why Scarcity Drives Real Value

Key Takeaways

- Training frontier AI models costs $500M-$2B, creating real economic barriers that separate serious players from pretenders

- Compute scarcity prevents monopolies by forcing companies to compete on efficiency rather than just capital

- Companies winning the AI race aren't those with the most GPUs. They're the ones extracting maximum value per compute dollar

Read in Short

AI compute isn't cheap software you can copy infinitely. Training a frontier model costs hundreds of millions to billions of dollars in real hardware, electricity, and time. This scarcity creates genuine competition where winners are determined by efficiency, not just capital. For business leaders evaluating AI investments or partnerships, understanding compute economics separates the sustainable players from those burning cash.

What Do AI Compute Costs Mean for Your Business?

Forget the hype about AI being free or nearly free. The romantic notion that information wants to be free has crashed headfirst into brutal physical reality. AI compute isn't information. It's scarce hardware time. And scarcity creates genuine economics that every business leader needs to understand.

When you're evaluating AI vendors, considering infrastructure investments, or trying to understand which AI companies will survive the next shakeout, compute economics tells you more than any pitch deck. The companies that understand this constraint will thrive. Those that don't will burn through capital and disappear.

Why Training Frontier AI Models Costs Billions

Training a frontier AI model isn't an accounting abstraction. It's GPUs running 24/7 in data centers, consuming megawatts of electricity, for months on end. That's real capital, real energy, and real opportunity cost that shows up on balance sheets.
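To make that concrete, here is a minimal back-of-envelope sketch of how GPU count, run length, and power draw turn into a bill. Every input (cluster size, price per GPU-hour, watts per GPU, electricity price) is a hypothetical assumption chosen for illustration, not a figure from any real training run, and the names `TrainingRun`, `hardware_cost_usd`, and so on are made up for this example.

```python
from dataclasses import dataclass


@dataclass
class TrainingRun:
    """Inputs for a back-of-envelope estimate. Every value is a hypothetical assumption."""
    gpu_count: int            # accelerators running in parallel
    days: float               # wall-clock length of the run, at 24 hours a day
    usd_per_gpu_hour: float   # assumed hardware-only price per GPU-hour (excludes electricity)
    watts_per_gpu: float      # assumed average draw per GPU, cooling overhead included
    usd_per_kwh: float        # assumed electricity price


def gpu_hours(run: TrainingRun) -> float:
    """Total GPU-hours consumed by the run."""
    return run.gpu_count * run.days * 24


def hardware_cost_usd(run: TrainingRun) -> float:
    """Hardware time valued at the assumed price per GPU-hour."""
    return gpu_hours(run) * run.usd_per_gpu_hour


def energy_cost_usd(run: TrainingRun) -> float:
    """Electricity cost: GPU-hours times kW per GPU times price per kWh."""
    kwh = gpu_hours(run) * run.watts_per_gpu / 1000
    return kwh * run.usd_per_kwh


def cluster_power_mw(run: TrainingRun) -> float:
    """Continuous power draw of the whole cluster, in megawatts."""
    return run.gpu_count * run.watts_per_gpu / 1_000_000


if __name__ == "__main__":
    # Hypothetical run: 100,000 GPUs for 120 days.
    run = TrainingRun(gpu_count=100_000, days=120, usd_per_gpu_hour=2.50,
                      watts_per_gpu=1_000, usd_per_kwh=0.08)
    total = hardware_cost_usd(run) + energy_cost_usd(run)
    print(f"GPU-hours:   {gpu_hours(run):,.0f}")
    print(f"Power draw:  {cluster_power_mw(run):,.0f} MW")
    print(f"Hardware:    ${hardware_cost_usd(run) / 1e6:,.0f}M")
    print(f"Electricity: ${energy_cost_usd(run) / 1e6:,.0f}M")
    print(f"Total:       ${total / 1e6:,.0f}M")
```

With these assumed inputs the sketch lands at roughly $720M of hardware time and $23M of electricity for a cluster drawing about 100 MW. It deliberately leaves out talent, data center operations, and failed or repeated runs, so treat it as a floor on a hypothetical scenario rather than an estimate for any specific model.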

OpenAI didn't train GPT-4 for fun. It did so because it believed it could extract more value from that compute than the compute cost at market prices. When a bet like that is wrong, the losses can run into billions. Every AI company makes the same calculation, which is why AI compute costs matter so much for evaluating partnerships and investments.

Executive Summary: The Compute Constraint

Unlike software that costs nothing to copy, you cannot arbitrage away AI compute scarcity. Manufacturing semiconductors takes years and billions in capital investment. You cannot create more compute overnight. This structural scarcity is what makes AI economics real and predictable.

This constraint affects your business in three concrete ways. First, AI services will never be free at scale. Second, providers who claim otherwise are likely subsidizing costs unsustainably. Third, efficiency improvements matter more than raw compute access for long-term competitiveness.

How Scarcity Creates Real Competition in AI

Here's the counterintuitive insight that most business coverage misses: scarcity is actually good for competition. When a resource is truly limited, companies must compete on efficiency, strategic allocation, and extracting maximum value per unit. This is how healthy markets work.

OpenAI competes with Anthropic, Google, and others not on licensing terms but on better training efficiency, smarter data selection, and superior inference optimization. The winner isn't who can undercut on price. It's who can do more with less.

| Competition Factor | Unlimited Resources | Scarce Resources |
|---|---|---|
| Primary advantage | Capital access | Efficiency and innovation |
| Barrier to entry | Low | High but surmountable |
| Long-term winners | First movers | Best operators |
| Innovation pressure | Low | High |
| Market sustainability | Prone to commoditization | Maintains value creation |

Startups such as Mistral or Together AI that invest in inference optimization aren't parasites on the AI ecosystem. They're economic actors responding to real scarcity. A smaller team with 10% of Google's compute budget must innovate harder. This constraint breeds value and creates opportunities for strategic investors to identify winners early.

Does Compute Concentration Create Monopoly Risk?

Some analysts argue that compute concentration creates monopoly power. They're missing the bigger picture. Compute scarcity actually prevents monopoly in ways that software markets don't.

If one player controlled enough of the world's compute to train world-class models and nobody else could compete, market economics would kick in. Compute prices would rise, attracting massive investment in new capacity. This is already happening. TSMC, Samsung, and Intel are racing to expand semiconductor manufacturing with combined investments exceeding $300 billion through 2027.

The market self-corrects. The real competitive advantage isn't hoarding compute. It's how efficiently you use it, how you train your models, and how you deploy them. Open-source models illustrate the point. Llama, trained on Meta's own infrastructure and released with open weights, now competes effectively with closed systems.

What This Means for AI Investment Decisions

Understanding AI compute costs changes how you should evaluate AI companies, partnerships, and infrastructure investments. Here's the framework smart executives are using.

- Evaluate efficiency metrics, not just model performance. Ask potential AI partners about their compute efficiency trends. Companies improving efficiency quarter over quarter are better long-term bets.

- Be skeptical of unsustainable pricing. If an AI service seems too cheap, the provider is either subsidizing heavily to gain market share or cutting corners on quality. Neither is sustainable.

- Look for constraint-driven innovation. The most valuable AI companies are those turning scarcity into competitive advantage through better architectures, training methods, or deployment strategies.

- Consider the semiconductor supply chain. Your AI strategy depends on hardware availability. Understanding TSMC's capacity plans matters for your 3-5 year technology roadmap.
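The first point, tracking efficiency quarter over quarter, can be sketched in a few lines. This is only an illustration: the metric (cost per unit of output, for example dollars per million tokens served) and the sample figures are hypothetical, and the function names are made up for this example. What a vendor actually discloses will vary.

```python
def qoq_changes(cost_per_unit: list[float]) -> list[float]:
    """Fractional change between consecutive quarters (negative means cheaper)."""
    return [(curr - prev) / prev
            for prev, curr in zip(cost_per_unit, cost_per_unit[1:])]


def improving_every_quarter(cost_per_unit: list[float]) -> bool:
    """True only if cost per unit fell in every quarter of the series."""
    changes = qoq_changes(cost_per_unit)
    return bool(changes) and all(change < 0 for change in changes)


def compound_quarterly_change(cost_per_unit: list[float]) -> float:
    """Average compounded change per quarter across the whole series."""
    quarters = len(cost_per_unit) - 1
    if quarters < 1:
        raise ValueError("need at least two quarters of data")
    return (cost_per_unit[-1] / cost_per_unit[0]) ** (1 / quarters) - 1


if __name__ == "__main__":
    # Hypothetical vendor figures: cost per million tokens over four quarters.
    vendor = [10.0, 8.5, 7.8, 6.4]
    print([f"{change:+.1%}" for change in qoq_changes(vendor)])
    print("Improving every quarter:", improving_every_quarter(vendor))
    print(f"Compound change per quarter: {compound_quarterly_change(vendor):+.1%}")
```

The compound figure is the more useful one when comparing vendors, since a single good or bad quarter can distort the per-quarter changes.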

The Winners Won't Have the Most Compute

Here's the bottom line for business leaders: scarcity isn't a bug in AI economics. It's the feature that guarantees genuine competition, innovation, and value creation.

The companies that win the AI race won't be those with the most compute. They'll be the ones who extract maximum value from every GPU hour. This means your evaluation criteria for AI investments and partnerships should focus on efficiency trajectories, not just current capabilities or funding announcements.

Strategic Implications for 2026

When evaluating AI vendors or investments, prioritize: 1) Efficiency improvements over raw performance gains, 2) Sustainable unit economics over growth metrics, 3) Technical teams with hardware optimization expertise, 4) Clear paths to profitability that don't assume unlimited compute access.

The executives who understand these dynamics will make better AI decisions. They'll avoid partnerships with companies burning through capital unsustainably. They'll identify emerging winners before the market catches on. And they'll build AI strategies that account for physical constraints rather than assuming infinite scalability.

Frequently Asked Questions

How much does it cost to train a frontier AI model in 2026?

Training a frontier model is estimated to cost between $500 million and $2 billion or more. This includes GPU hardware time, electricity for data centers drawing megawatts of power, data center operations, and the engineering talent required. The cost of reaching a given level of capability has been declining about 30% annually due to efficiency improvements, but the cost of staying at the frontier remains a substantial barrier to entry.
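To see what a 30% annual decline implies, the sketch below compounds it forward. It assumes the rate stays constant and applies to a fixed level of capability, which is a simplification made for this example. The $1 billion starting figure and the function names are hypothetical.

```python
import math


def projected_cost(cost_today_usd: float, annual_decline: float, years: float) -> float:
    """Cost after `years` if it falls by `annual_decline` (e.g. 0.30) every year."""
    if not 0 <= annual_decline < 1:
        raise ValueError("annual_decline must be in [0, 1)")
    return cost_today_usd * (1 - annual_decline) ** years


def years_until_fraction(annual_decline: float, target_fraction: float) -> float:
    """Years until cost falls to `target_fraction` of today's cost."""
    if not 0 < annual_decline < 1:
        raise ValueError("annual_decline must be in (0, 1)")
    if not 0 < target_fraction <= 1:
        raise ValueError("target_fraction must be in (0, 1]")
    return math.log(target_fraction) / math.log(1 - annual_decline)


if __name__ == "__main__":
    # Hypothetical: a $1B training run today, declining 30% per year.
    for year in range(0, 6):
        cost = projected_cost(1_000_000_000, 0.30, year)
        print(f"Year {year}: ${cost / 1e6:,.0f}M")
    print(f"Years to halve: {years_until_fraction(0.30, 0.5):.1f}")
```

Under these assumptions the cost halves in just under two years and falls to about a third after three. That is a meaningful decline, but it still leaves a nine-figure bill for a run that costs $1 billion today.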

Is investing in AI companies still worth it given compute constraints?

Yes, but your evaluation criteria should shift. Look for companies demonstrating improving compute efficiency, not just those with the largest GPU clusters. The winners will extract more value per compute dollar. Be wary of companies whose business models assume compute costs will drop faster than historical trends suggest.

How long until compute scarcity becomes less of a constraint?

Semiconductor expansion takes 3-5 years from investment to production capacity. Current expansion plans from TSMC, Samsung, and Intel totaling $300B+ will increase capacity significantly by 2028-2029. However, demand for AI compute is growing faster than supply, so scarcity will likely persist as a structural feature of the market through at least 2030.

Should my company build or buy AI capabilities given compute economics?

For most companies, buying makes more sense. Building frontier AI capabilities requires hundreds of millions in compute investment plus world-class talent. However, fine-tuning existing models or using inference APIs is cost-effective for specific business applications. Focus your internal AI investments on use-case optimization rather than foundational model development.

What metrics should I track when evaluating AI vendor sustainability?

Track cost per inference over time, gross margins on AI services, compute efficiency improvements, and whether pricing requires ongoing subsidies. Ask vendors directly about their unit economics trajectory. Sustainable AI businesses should show improving margins even as they scale, not just revenue growth with deteriorating economics.
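One way to organize those numbers is sketched below. It assumes you can obtain, or at least estimate, a vendor's revenue, serving cost, and inference volume per period. The `Period` structure, the function names, and the sample figures are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Period:
    """One reporting period for a vendor. All figures here are hypothetical."""
    label: str
    revenue_usd: float
    serving_cost_usd: float   # compute and operations cost of serving requests
    inferences: int


def cost_per_inference(period: Period) -> float:
    return period.serving_cost_usd / period.inferences


def gross_margin(period: Period) -> float:
    """Share of revenue left after serving costs (negative if serving at a loss)."""
    return (period.revenue_usd - period.serving_cost_usd) / period.revenue_usd


def is_subsidized(period: Period) -> bool:
    """True when serving costs exceed revenue, so pricing relies on outside capital."""
    return period.serving_cost_usd > period.revenue_usd


def margins_improving(periods: list[Period]) -> bool:
    """True if gross margin rose in every consecutive period."""
    margins = [gross_margin(p) for p in periods]
    return len(margins) >= 2 and all(b > a for a, b in zip(margins, margins[1:]))


if __name__ == "__main__":
    history = [
        Period("Q1", revenue_usd=4_000_000, serving_cost_usd=5_000_000, inferences=800_000_000),
        Period("Q2", revenue_usd=6_000_000, serving_cost_usd=6_300_000, inferences=1_200_000_000),
        Period("Q3", revenue_usd=9_000_000, serving_cost_usd=7_650_000, inferences=1_800_000_000),
    ]
    for p in history:
        print(f"{p.label}: margin {gross_margin(p):+.0%}, "
              f"${cost_per_inference(p) * 1000:.2f} per 1,000 inferences, "
              f"subsidized: {is_subsidized(p)}")
    print("Margins improving:", margins_improving(history))
```

In this made-up history the vendor starts out subsidized but shows falling cost per inference and rising margins as volume grows, which is the pattern the answer above describes as sustainable.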

Source: DEV Community

Huma Shazia

Senior AI & Tech Writer

Related Articles

Browse all

Google Workspace API Updates March 2026: New Calendar API, Chat Authentication, and Maps Changes

Google just dropped Episode 29 of their Workspace Developer News, and there's a lot to unpack. From a brand new secondary calendar lifecycle API to deprecation warnings for Apps Script authentication, here's everything developers need to know about the March 2026 platform updates.

Zig for Legacy C Code: How to Modernize Infrastructure Without a Risky Full Rewrite

A new blueprint from Zeba Academy shows developers how to surgically replace fragile C components with Zig modules. Instead of risky full rewrites, this approach lets you swap out problematic code piece by piece while keeping your battle-tested infrastructure intact.

Claude Skills vs Commands: When to Use Each for AI-Powered Coding Workflows

Claude's Skills and Commands look similar on the surface since both use markdown files, but they work completely differently. Skills run automatically based on context while Commands need explicit /invocation. Here's how to pick the right one for your coding workflow.

DualClip macOS Clipboard Manager: The Only Tool That Uses Dedicated Slots Instead of History

DualClip v1.2.6 just dropped with a major stability fix and Homebrew support. After analyzing 57 clipboard managers, the developer found every single one uses history. DualClip takes a radically different approach with three fixed slots and zero disk storage.

Also Read

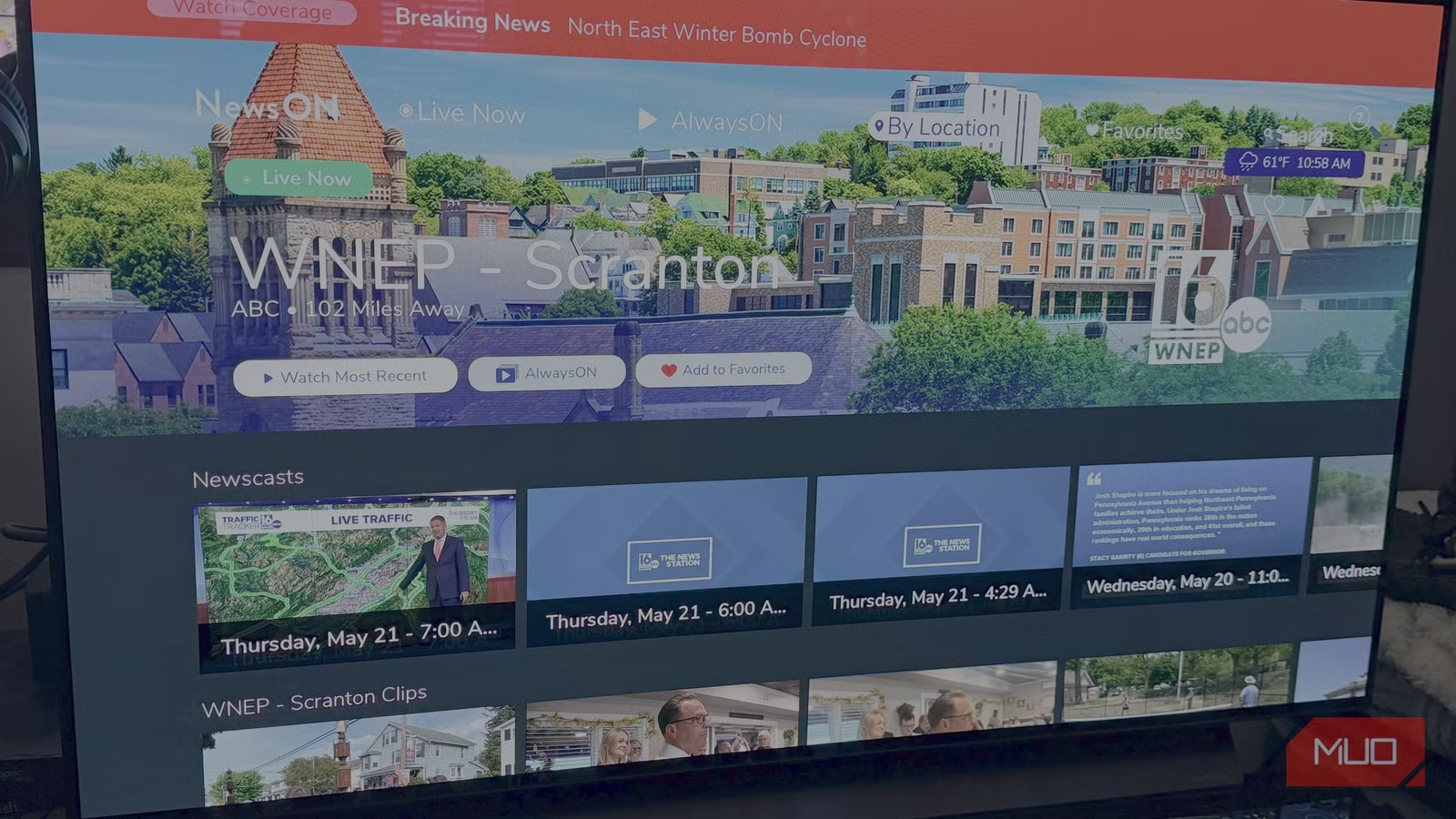

How to Watch Local News Free After Cutting Cable

With 80.7 million U.S. households now cord-free, the question of local news access keeps coming up. A tech journalist who cut cable two years ago shares the exact tools and methods that work in 2026, from modern antennas to free streaming apps.

WWDC 2026 Preview: AI Siri Finally Arrives After Two-Year Delay

Apple's annual developer conference kicks off June 8 with the long-promised AI-powered Siri as the headline feature. After missing its 2024 debut, the upgraded assistant will reportedly understand screen context, take actions across apps, and get its own standalone chatbot app.

10 Microsoft Copilot Tricks That Save Time and Protect Privacy

Microsoft Copilot ships with useful features that most users never discover. From keyboard shortcuts to Gmail integration to privacy controls, these tricks turn the default Windows AI assistant into something actually practical.