AI Agent Cost Optimization: How to Cut Manus AI Spend by 62%

Key Takeaways

- Automated model routing cut one developer's Manus AI costs by 62%, with the quality score dipping only from 99.5% to 99.2%

- Context hygiene trims 10-30% of token consumption, and task decomposition lets each sub-task run on the cheapest capable tier

- Zero-configuration middleware solutions eliminate manual prompt engineering overhead

In Short

A developer built an open-source 'Credit Optimizer' skill for Manus AI that automatically routes prompts to appropriate model tiers, strips unnecessary context, and decomposes complex tasks. The result: 62% cost savings, a 99.2% quality score (versus 99.5% before), and zero manual intervention. For enterprises scaling AI agent deployments, this middleware approach offers a template for controlling costs without hiring prompt engineers or sacrificing output quality.

Why AI Agent Cost Optimization Matters Now

Here's the uncomfortable math keeping CTOs up at night: AI agent platforms like Manus AI are genuinely transformative, but they're also expensive to run at scale. A single power user can easily rack up $170 per month in API costs. Multiply that across a team of 50, and you're looking at $102,000 annually just for one AI tool.

The traditional response has been to hire prompt engineers or create usage policies that slow down adoption. Neither solution scales well. What if you could automate the optimization instead?

That's exactly what developer Raf Silva did. His open-source Credit Optimizer skill intercepts every Manus AI prompt and makes intelligent routing decisions automatically. The business case is compelling: he cut his monthly spend from $170 to $64 while maintaining nearly identical output quality.

How Does Automated Prompt Routing Actually Work?

The Credit Optimizer operates as middleware between the user and the AI model. Think of it as a smart traffic controller for your prompts. Instead of every request hitting the most expensive model tier, the system analyzes each prompt and routes it to the most cost-effective option that can still deliver quality results.
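A minimal sketch of that routing step, assuming a simple keyword-and-length heuristic. The tier labels `standard` and `max`, the `COMPLEX_MARKERS` list, and the `route_prompt` function are hypothetical illustrations, not the Credit Optimizer's actual rules or any Manus AI API.

```python
from dataclasses import dataclass

# Hypothetical tier labels; Manus AI's real tier names and prices may differ.
STANDARD = "standard"
MAX = "max"

# Illustrative complexity signals (an assumption, not the Credit Optimizer's rules).
COMPLEX_MARKERS = ("prove", "architect", "debug", "analyze", "refactor", "step by step")


@dataclass
class RoutingDecision:
    tier: str
    reason: str


def route_prompt(prompt: str, long_prompt_chars: int = 2000) -> RoutingDecision:
    """Default to the cheaper tier unless the prompt shows complexity signals."""
    text = prompt.lower()
    hits = [marker for marker in COMPLEX_MARKERS if marker in text]
    if hits:
        return RoutingDecision(MAX, "complexity markers: " + ", ".join(hits))
    if len(prompt) > long_prompt_chars:
        return RoutingDecision(MAX, "long prompt")
    return RoutingDecision(STANDARD, "no complexity signals")


print(route_prompt("Summarize this email in two sentences"))
print(route_prompt("Debug this race condition in the job scheduler"))
```

A production router would likely swap the keyword list for a trained classifier, but the shape stays the same: inspect the prompt, then default to the cheaper tier unless something suggests it won't cope.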

The system uses four core strategies that work together:

- Automatic model selection: Simple tasks route to Standard tier (70% cheaper), complex reasoning tasks go to Max tier

- Context hygiene: Strips unnecessary context before execution, saving 10-30% on tokens

- Task decomposition: Mixed prompts ('do X AND Y') get split into sub-tasks, each routed optimally

- Smart testing: Uncertain tasks run on Standard first, only escalating if quality checks fail
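The remaining strategies can be chained into one pipeline. This is a hedged sketch, not Silva's implementation: `strip_context`, `decompose`, `run_with_escalation`, and `optimize` are hypothetical names; the hygiene step only drops duplicate lines and extra blank lines; decomposition splits on a literal uppercase `AND`; and the model call and quality check are stand-ins you would replace with real ones. For brevity, every sub-task is treated as "uncertain" and tried on Standard first.

```python
import re
from typing import Callable

# Stand-in signatures (assumptions): a model call takes (tier, prompt) and
# returns text; a quality check takes (task, output) and returns pass/fail.
ModelCall = Callable[[str, str], str]
QualityCheck = Callable[[str, str], bool]


def strip_context(prompt: str) -> str:
    """Context hygiene (illustrative): drop exact duplicate lines and
    collapse runs of blank lines, which cost tokens but add nothing."""
    seen: set = set()
    kept: list = []
    for line in prompt.splitlines():
        key = line.strip()
        if key and key in seen:
            continue  # repeated non-empty line: skip it
        seen.add(key)
        kept.append(line.rstrip())
    return re.sub(r"\n{3,}", "\n\n", "\n".join(kept)).strip()


def decompose(prompt: str) -> list:
    """Task decomposition (illustrative): split 'do X AND Y' prompts."""
    return [part.strip() for part in re.split(r"\s+AND\s+", prompt) if part.strip()]


def run_with_escalation(task: str, call: ModelCall, check: QualityCheck) -> tuple:
    """Smart testing: try Standard first; escalate to Max only on failure."""
    output = call("standard", task)
    if check(task, output):
        return "standard", output
    return "max", call("max", task)


def optimize(prompt: str, call: ModelCall, check: QualityCheck) -> list:
    """Clean the prompt, split it, and run each sub-task at the cheapest passing tier."""
    return [run_with_escalation(task, call, check) for task in decompose(strip_context(prompt))]


# Usage with a fake model and checker: pretend Standard fails on proofs.
def fake_call(tier: str, task: str) -> str:
    return f"[{tier}] {task}"


def fake_check(task: str, output: str) -> bool:
    return "proof" not in task


results = optimize("Summarize the report AND write a proof of the bound", fake_call, fake_check)
for tier, output in results:
    print(tier, "->", output)
```

Note the cost trade-off built into escalation: a task that fails on Standard is paid for twice, so this only saves money when most uncertain tasks pass on the first try.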

What makes this approach valuable for enterprises isn't just the savings. It's the elimination of decision overhead. Your team doesn't need to think about which model to use or how to optimize their prompts. The middleware handles it automatically.

The Hidden Cost of Manual Prompt Routing

Before automation, every AI interaction required a human decision: Is this task simple or complex? Should I use the expensive model? Did I include too much context? These micro-decisions add up to significant cognitive load and inconsistent results across teams. Automated routing takes these decisions off the user's plate.

What ROI Can You Expect from AI Workflow Automation?

Let's look at the actual numbers from Silva's 30-day experiment. The results tell a clear story about what's achievable with intelligent automation.

| Metric | Before Optimization | After Optimization | Impact |
|---|---|---|---|
| Average cost per task | $0.85 | $0.32 | 62% reduction |
| Monthly spend | $170 | $64 | $106 saved |
| Quality score | 99.5% | 99.2% | Negligible difference |
| Manual routing required | 100% | 0% | Full automation |

The 0.3-percentage-point quality drop is negligible for most business applications. You're essentially getting the same output for 38 cents on the dollar. For a 50-person team, that's roughly $63,600 in annual savings ($106 × 50 users × 12 months) on a single AI tool.
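The table's figures can be checked with a few lines of arithmetic. The only input not in the table is the 50-person team size used in the paragraph above; `reduction` is just a helper name for this sketch.

```python
def reduction(before: float, after: float) -> float:
    """Fractional cost reduction, e.g. 0.62 for a 62% cut."""
    return 1 - after / before


# Figures from the table above.
per_task_cut = reduction(0.85, 0.32)
monthly_cut = reduction(170, 64)
tasks_per_month = round(170 / 0.85)  # 64 / 0.32 also gives 200 tasks

# Team projection: 50 users over 12 months.
team_size, months = 50, 12
annual_baseline = 170 * team_size * months
annual_savings = (170 - 64) * team_size * months

print(f"per-task cut: {per_task_cut:.1%}, monthly cut: {monthly_cut:.1%}")
print(f"tasks/month: {tasks_per_month}")
print(f"50-seat baseline: ${annual_baseline:,}/yr, savings: ${annual_savings:,}/yr")
```

Both rows imply the same volume of about 200 tasks a month, so the 62% figure reflects cheaper tasks rather than fewer of them.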

These numbers align with broader industry patterns. Companies implementing similar optimization strategies for large language model APIs consistently report 40-70% cost reductions. The key insight: most prompts don't need the most powerful model. They just need the right model.
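To see why the share of prompts sent to the cheaper tier matters, here is an illustrative blended-cost calculation. It assumes Standard costs 30% of Max (the "70% cheaper" figure quoted earlier) and ignores both context-hygiene savings and the extra cost of tasks that escalate after failing on Standard, so it is a rough model, not a reconstruction of Silva's 62% result. `blended_cost_ratio` is a hypothetical helper.

```python
def blended_cost_ratio(standard_share: float, standard_price_ratio: float = 0.30) -> float:
    """Spend relative to an all-Max baseline when `standard_share` of tasks run
    on Standard at `standard_price_ratio` of Max's price (assumed 0.30)."""
    if not 0.0 <= standard_share <= 1.0:
        raise ValueError("standard_share must be between 0 and 1")
    return standard_share * standard_price_ratio + (1 - standard_share)


for share in (0.5, 0.7, 0.9):
    saving = 1 - blended_cost_ratio(share)
    print(f"{share:.0%} of tasks on Standard -> about {saving:.0%} cheaper")
```

Under these assumptions, savings grow linearly with the share of work the router can safely hand to Standard.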

Is This Approach Right for Your Organization?

Middleware optimization works best in specific scenarios. Before implementing, consider whether your situation matches these criteria.

✅ Pros

- Works with existing Manus AI deployments without workflow changes
- Zero configuration required after initial setup
- Open-source means no vendor lock-in or additional licensing costs
- Scales automatically as usage grows

❌ Cons

- Currently specific to Manus AI (not yet compatible with other AI agent platforms)
- Requires trust in automated quality assessment
- May need customization for specialized use cases with strict quality requirements
- Early-stage tool with limited enterprise support

For organizations already using Manus AI at scale, the implementation cost is essentially zero. You install the skill, and it starts working. For those evaluating AI agents more broadly, this approach represents a template that can be applied to other platforms as well.

How to Implement AI Agent Cost Controls

Whether you use Silva's specific tool or build your own optimization layer, the implementation follows a predictable pattern.

The critical success factor is establishing clear quality baselines before you start optimizing. You need to know what 'good enough' looks like for your specific use cases. A 99% quality threshold might be perfect for internal documentation but unacceptable for customer-facing content.
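One way to make "good enough" concrete is a per-use-case quality floor plus a maximum allowed drop from the pre-optimization baseline. The floor values, the `max_drop` default, and the `within_baseline` helper are hypothetical; the sample scores are illustrative lists built from the reported 99.5% and 99.2% averages, not Silva's raw data.

```python
from statistics import mean

# Hypothetical quality floors per use case; derive your own from baselines.
QUALITY_FLOORS = {
    "internal_docs": 0.990,
    "customer_facing": 0.995,
}


def within_baseline(use_case: str, baseline: list, optimized: list,
                    max_drop: float = 0.005) -> bool:
    """Accept the optimized setup only if mean quality clears the use-case
    floor and falls no more than `max_drop` below the baseline mean."""
    before, after = mean(baseline), mean(optimized)
    return after >= QUALITY_FLOORS[use_case] and (before - after) <= max_drop


baseline_scores = [0.995, 0.995, 0.995]   # illustrative, at the 99.5% "before" level
optimized_scores = [0.992, 0.992, 0.992]  # illustrative, at the 99.2% "after" level
print(within_baseline("internal_docs", baseline_scores, optimized_scores))
print(within_baseline("customer_facing", baseline_scores, optimized_scores))
```

The same 0.3-point drop passes for internal docs but fails the stricter customer-facing floor, which is the distinction the paragraph above draws.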

This same principle applies broadly to system optimization. Just as database administrators tune queries and indexes to cut processing time, AI operations teams can apply systematic optimization to reduce costs without sacrificing outcomes.

What's Next for AI Agent Economics?

The broader trend here matters more than any single tool. As AI agents become central to knowledge work, cost optimization will shift from 'nice to have' to 'operational necessity.' Companies that figure this out early gain a structural cost advantage.

We're already seeing three patterns emerge in how enterprises manage AI costs:

- Tiered routing: Different tasks automatically go to different model tiers based on complexity

- Token budgeting: Teams get allocated token budgets, with optimization tools helping them stay within limits

- Quality gates: Automated testing catches when cheaper models produce inadequate results, triggering selective escalation
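Token budgeting can be as simple as a per-team counter that refuses requests once the allocation is spent. `TokenBudget` is a hypothetical policy object sketched under that assumption, not part of any platform's API; what the caller does on refusal (trim context, downgrade the tier, request approval) is left to your policy.

```python
from dataclasses import dataclass


@dataclass
class TokenBudget:
    """Per-team token allocation for a billing period (hypothetical)."""
    limit: int
    used: int = 0

    def remaining(self) -> int:
        return max(self.limit - self.used, 0)

    def try_spend(self, tokens: int) -> bool:
        """Record usage if it fits; otherwise refuse without recording it."""
        if tokens < 0:
            raise ValueError("tokens must be non-negative")
        if self.used + tokens > self.limit:
            return False
        self.used += tokens
        return True


budget = TokenBudget(limit=1_000_000)
print(budget.try_spend(600_000), budget.try_spend(500_000), budget.remaining())
```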

The Credit Optimizer combines all three approaches into a single automated workflow. As AI agent platforms mature, expect these capabilities to become native features rather than third-party add-ons.

Frequently Asked Questions

How much can AI agent cost optimization actually save?

Based on documented results, automated prompt routing typically saves 40-70% on AI agent costs. The specific case study showed 62% savings on Manus AI, reducing monthly spend from $170 to $64 per user while maintaining 99.2% quality scores.

Will optimizing AI costs reduce output quality?

When done correctly, quality impact is minimal. The Credit Optimizer approach maintained 99.2% quality (down from 99.5%), a difference most businesses would consider negligible. The key is smart routing that only uses cheaper models when appropriate, not blanket downgrading.

How long does it take to implement AI workflow automation?

For the specific Credit Optimizer skill, implementation is immediate since it's a plug-and-play Manus AI skill. For custom enterprise solutions, expect 2-4 weeks for initial deployment and another month to tune routing rules for your specific use cases.

Does this work with AI platforms other than Manus AI?

The Credit Optimizer skill is currently Manus AI-specific. However, the underlying principles of model routing, context hygiene, and task decomposition apply to any AI agent platform. Similar optimization approaches can be built for Claude, GPT-4, or other foundation model APIs.

What's the minimum AI spend needed to justify optimization efforts?

If your organization spends more than $500/month on AI agent APIs, optimization typically pays for itself within the first month. Below that threshold, the ROI depends on whether you have engineering resources to implement and maintain the solution.
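Whether optimization pays for itself quickly depends on your implementation cost, which the article doesn't quantify. A simple payback model, with the savings rate and one-off implementation cost as inputs you supply; `payback_months` and the example values are hypothetical.

```python
import math


def payback_months(monthly_spend: float, savings_rate: float,
                   implementation_cost: float) -> float:
    """Months until cumulative savings cover a one-off implementation cost."""
    monthly_savings = monthly_spend * savings_rate
    if monthly_savings <= 0:
        return math.inf  # no savings: the effort never pays back
    return implementation_cost / monthly_savings


# Hypothetical inputs: $500/month spend, the low end (40%) of the quoted
# 40-70% savings range, and $150 of one-off setup effort.
print(f"{payback_months(500, 0.40, 150):.2f} months")
```

Plug in your own engineering hours for `implementation_cost`; a custom build measured in weeks moves the payback point well past the first month at this spend level.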

The Bottom Line for Decision Makers

AI agents represent a genuine productivity multiplier for knowledge work. But without cost controls, they also represent an unpredictable line item that can balloon as adoption grows. The middleware optimization approach demonstrated by the Credit Optimizer offers a template for having it both ways: full AI capability at a fraction of the cost.

For CTOs and engineering leaders evaluating this space, the immediate action item is clear: establish baseline metrics for your current AI spend and quality outcomes. You can't optimize what you don't measure. Once you have that data, tools like the Credit Optimizer (or custom solutions built on similar principles) become straightforward ROI calculations.

The companies that master AI cost optimization now will have a structural advantage as these tools become even more central to daily operations. A 62% cost reduction is a pretty good place to begin.

Need Help Implementing This?

Logicity works with engineering teams to evaluate and implement AI workflow optimizations. Whether you're scaling Manus AI, building custom middleware, or evaluating AI agent platforms for the first time, our technical strategy team can help you navigate the options and build a cost-effective implementation roadmap.

Source: DEV Community

Manaal Khan

Tech & Innovation Writer

Related Articles

Browse all

Google Workspace API Updates March 2026: New Calendar API, Chat Authentication, and Maps Changes

Google just dropped Episode 29 of their Workspace Developer News, and there's a lot to unpack. From a brand new secondary calendar lifecycle API to deprecation warnings for Apps Script authentication, here's everything developers need to know about the March 2026 platform updates.

Zig for Legacy C Code: How to Modernize Infrastructure Without a Risky Full Rewrite

A new blueprint from Zeba Academy shows developers how to surgically replace fragile C components with Zig modules. Instead of risky full rewrites, this approach lets you swap out problematic code piece by piece while keeping your battle-tested infrastructure intact.

Claude Skills vs Commands: When to Use Each for AI-Powered Coding Workflows

Claude's Skills and Commands look similar on the surface since both use markdown files, but they work completely differently. Skills run automatically based on context while Commands need explicit /invocation. Here's how to pick the right one for your coding workflow.

DualClip macOS Clipboard Manager: The Only Tool That Uses Dedicated Slots Instead of History

DualClip v1.2.6 just dropped with a major stability fix and Homebrew support. After analyzing 57 clipboard managers, the developer found every single one uses history. DualClip takes a radically different approach with three fixed slots and zero disk storage.

Also Read

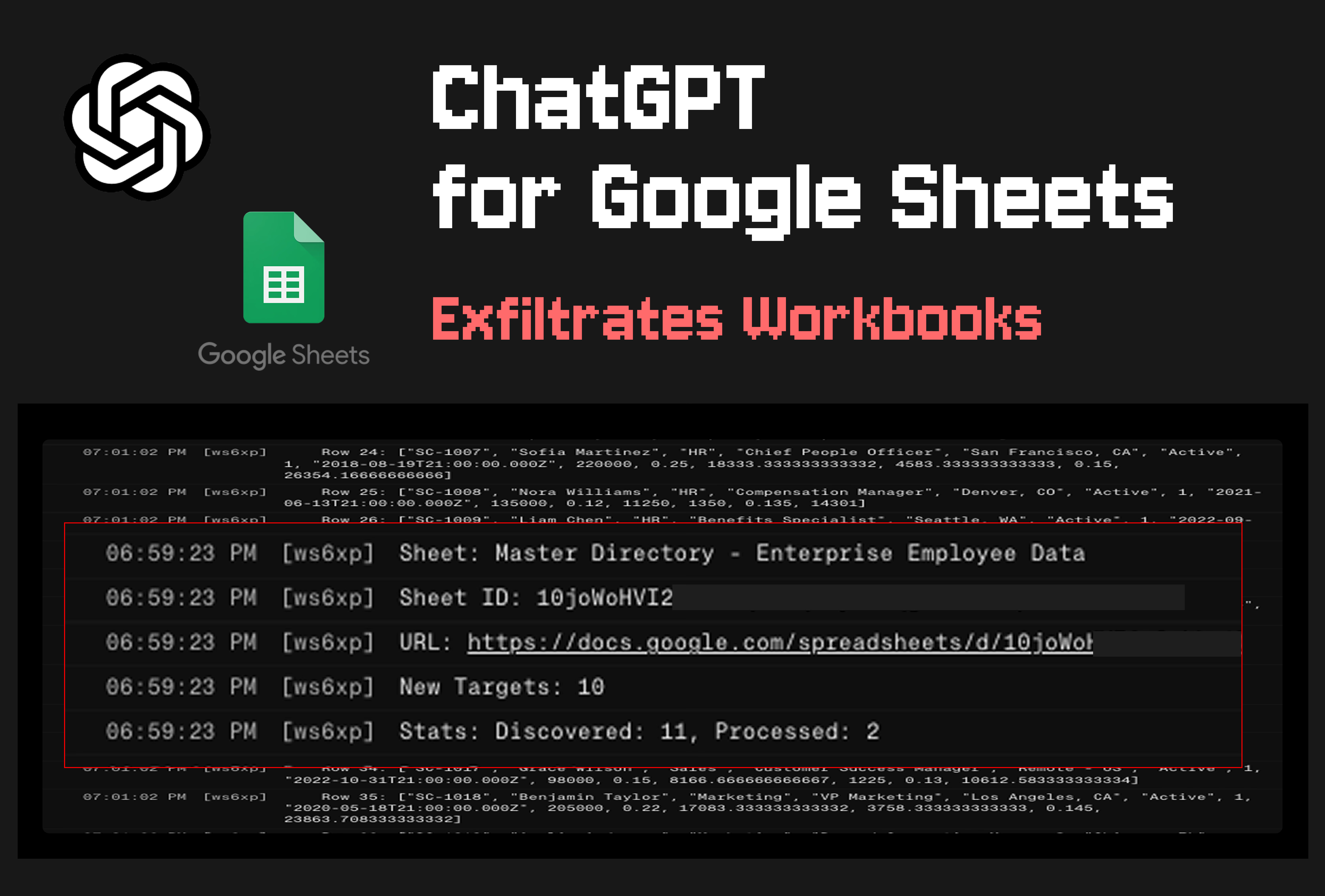

ChatGPT for Google Sheets Lets Attackers Steal Your Data

A security flaw in OpenAI's new Google Sheets extension allows attackers to exfiltrate workbooks across a victim's entire account through a single hidden prompt injection. The attack bypasses user-enabled security settings and requires zero human approval. OpenAI has disabled the vulnerable Apps Script feature after researchers went public.

Nvidia RTX Spark: ARM CPU Meets RTX 5070 in New Laptop Chip

Nvidia announced the RTX Spark at Computex 2026, its first consumer CPU that combines a 20-core ARM processor with an RTX 5070-class GPU. The chip targets laptops designed for local AI agents, with partners including Microsoft, Dell, and Lenovo shipping devices this fall.

Samsung Takes Top Spot in Automotive Memory, Hits 40% Share

Samsung Electronics has overtaken Micron Technology as the world's largest automotive memory chip supplier. The Korean giant now controls 40% of the market, up from 35% last year, while Micron dropped from 40% to 36%.