PostgreSQL Bulk Import: Cut Data Load Time 75%

Key Takeaways

- In this case study, ORM-based imports took roughly 4x longer to run than native PostgreSQL COPY (12+ hours versus under 3)

- A 75% reduction in import time turns a monthly data refresh into an overnight job and makes more frequent refreshes practical for business intelligence

- The same techniques scale to any large dataset: customer records, transaction logs, IoT sensor data

Read in Short

A developer building a free Brazilian company lookup platform cut PostgreSQL import time from 12+ hours to under 3 hours by switching from ORM inserts to native COPY commands. The business case is clear: faster imports mean fresher data, lower infrastructure costs, and engineering teams freed up for higher-value work.

Why PostgreSQL Bulk Import Speed Matters for Your Business

Here's a scenario every data-driven company faces: you have millions of records that need to get into your database. Maybe it's a monthly refresh from a government data source. Maybe it's migrating customer records from an acquired company. Maybe it's loading transaction history for a new analytics platform.

The difference between a 12-hour import and a 3-hour import isn't just about patience. It's about whether your data team can run imports during a single night shift or needs to block an entire weekend. It's about whether your business intelligence dashboards show yesterday's reality or last week's.

Pedro Parker, a Brazilian developer, faced this exact challenge when building CNPJ Aberto, a free platform for looking up Brazilian company data. The Brazilian Federal Revenue Service publishes data on 55 million companies, distributed across 40 ZIP files containing about 25GB of CSV data. The data updates monthly, so this wasn't a one-time migration problem. It was an ongoing operational challenge.

The Real Cost of Slow Database Imports

Before diving into the technical solution, let's talk about what slow imports actually cost your organization.

| Cost Factor | 12-Hour Import | 3-Hour Import |
|---|---|---|
| Server compute costs | $48-96 (cloud instance time) | $12-24 |
| Engineering supervision | Full day blocked | Morning task |
| Data freshness | Weekly updates realistic | Daily updates possible |
| Failure recovery time | Restart = another 12 hours | Restart = 3 hours |
| Maintenance windows | Weekend required | Overnight sufficient |

The compound effect matters most. If your team dreads running imports because they take forever, they'll run them less frequently. Less frequent imports mean stale data. Stale data means worse business decisions. It's a hidden tax on your entire data strategy.

How PostgreSQL Bulk Import Performance Breaks Down

Parker's journey through three different approaches reveals exactly where time gets wasted in typical database imports.

Approach 1: ORM-Based Inserts (The Default Trap)

Most developers reach for their ORM first. It's comfortable. It's what the framework documentation shows. In Parker's case, using SQLAlchemy with batch commits every 1,000 records produced about 1,200 inserts per second.

At that rate, 55 million records take over 12 hours. The ORM adds overhead on every single record: type validation, state tracking, dynamic SQL construction. For a single insert, this overhead is negligible. Multiply it by 55 million and you've burned an entire day.
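
The 12-hour figure follows directly from the reported rate. As a quick check, here is a minimal sketch of the arithmetic; the function name `import_hours` is ours, and it assumes the insert rate stays constant for the whole load:

```python
def import_hours(total_rows: int, rows_per_second: float) -> float:
    """Hours needed to load total_rows at a steady insert rate."""
    return total_rows / rows_per_second / 3600


TOTAL_ROWS = 55_000_000  # company count quoted in the article
ORM_RATE = 1_200         # inserts per second reported for the ORM approach

orm_hours = import_hours(TOTAL_ROWS, ORM_RATE)
print(f"{orm_hours:.1f} hours")  # prints "12.7 hours"
```

Plugging your own row count and measured rate into the same function gives a first estimate of how long a load will block the pipeline.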

Parker noted that AI coding assistants (he used Opus 4.6) helped somewhat with optimization, but hallucinated frequently when suggesting query improvements and sometimes created nonsensical indexes. The lesson for engineering managers: AI tools accelerate development but still require experienced developers to verify suggestions, especially for performance-critical operations.

Approach 2: Direct SQL with Batches (Better, Not Good)

Removing the ORM and using psycopg2 directly with batched executemany calls improved throughput to about 4,000 inserts per second. That's a 3x improvement, bringing import time down to around 4 hours.

Still not good enough for a monthly operation. The fundamental problem: in psycopg2, executemany still sends one INSERT statement per row, so it is no faster than calling execute in a loop. Each row costs a client-server round trip, and PostgreSQL has to parse and plan each statement individually. You're paying the query planning tax 55 million times.
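
For reference, the batched pattern described above might look like the following sketch. It is not Parker's code: the `companies` table, its columns, and the helper names are hypothetical, and `conn` is assumed to be a DB-API connection such as one returned by `psycopg2.connect(...)`.

```python
from typing import Iterator, Sequence, Tuple

Row = Tuple[str, str]

# Hypothetical table and columns, used only for illustration.
INSERT_SQL = "INSERT INTO companies (cnpj, name) VALUES (%s, %s)"


def chunked(rows: Sequence[Row], size: int) -> Iterator[Sequence[Row]]:
    """Yield consecutive slices of at most `size` rows."""
    for start in range(0, len(rows), size):
        yield rows[start:start + size]


def insert_in_batches(conn, rows: Sequence[Row], batch_size: int = 1000) -> int:
    """Insert rows with executemany, committing once per batch.

    Returns the number of batches committed.
    """
    batches = 0
    cur = conn.cursor()
    try:
        for batch in chunked(rows, batch_size):
            # Still one INSERT per row under the hood; batching only
            # reduces the number of commits.
            cur.executemany(INSERT_SQL, batch)
            conn.commit()
            batches += 1
    finally:
        cur.close()
    return batches
```

Batching trims commit overhead, but every row still travels as its own INSERT, which is why this approach tops out well below what a bulk loader can do.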

Approach 3: PostgreSQL COPY Command (The Right Tool)

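The COPY command is PostgreSQL's native bulk loading mechanism. Instead of parsing and planning one INSERT per row, the server parses a single COPY statement and then reads the rows as a continuous data stream, which removes most of the per-row statement overhead. Constraints, triggers, and indexes on the target table still apply during the load.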
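
A minimal sketch of a COPY-based load from Python, assuming psycopg2 (whose cursors expose `copy_expert(sql, file)`) and a hypothetical `companies` table. The helper names are ours, and this is an illustration rather than the pipeline Parker built:

```python
import csv
import io
from typing import Iterable, Sequence

# Hypothetical table and columns, used only for illustration.
COPY_SQL = "COPY companies (cnpj, name) FROM STDIN WITH (FORMAT csv)"


def rows_to_csv(rows: Iterable[Sequence[str]]) -> io.StringIO:
    """Serialize rows into an in-memory CSV buffer that COPY can read."""
    buf = io.StringIO()
    csv.writer(buf, lineterminator="\n").writerows(rows)
    buf.seek(0)  # rewind so the reader starts at the first row
    return buf


def copy_rows(cursor, rows: Iterable[Sequence[str]]) -> None:
    """Stream rows to the server with a single COPY statement."""
    cursor.copy_expert(COPY_SQL, rows_to_csv(rows))
```

For a dataset the size of the one in this article, you would likely call `copy_rows` once per chunk or pass an open file object instead of building one large buffer in memory.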

Combined with two configuration tweaks, Parker achieved import times under 3 hours. The first was setting synchronous_commit to off, which lets a commit return before its write-ahead log records are flushed to disk. The second was increasing work_mem to 256MB, which gives PostgreSQL more memory for sort and hash operations. (If you rebuild indexes after the load, maintenance_work_mem is the setting that governs index builds.)

When to Use These Settings

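With synchronous_commit = off, a server crash can lose the most recent transactions that were reported as committed, although it does not corrupt the database. That makes the setting a reasonable choice for data loads where you can simply reimport after a crash. Don't use it for transactional data you can't recreate. For one-time migrations or refreshable datasets, it's a significant performance win with acceptable risk.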
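
One way to keep the relaxed settings away from production traffic is to apply them only to the session that runs the import. The sketch below assumes a DB-API cursor such as psycopg2's and uses PostgreSQL's `set_config` function with `is_local` set to false, so the values last for the current session only. The helper name and the settings dictionary are ours:

```python
from typing import Dict

# Values taken from the article; adjust for your own hardware.
LOAD_SETTINGS: Dict[str, str] = {
    "synchronous_commit": "off",
    "work_mem": "256MB",
}


def apply_load_settings(cursor, settings: Dict[str, str] = LOAD_SETTINGS) -> None:
    """Apply each setting to the current session only.

    The server-wide configuration is left untouched, so other
    connections keep their normal durability guarantees.
    """
    for name, value in settings.items():
        cursor.execute("SELECT set_config(%s, %s, false)", (name, value))
```

Call it once on the import connection before the load starts. When that connection closes, the settings go with it.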

What This Means for Your Data Architecture

The specific numbers from Parker's case study matter less than the patterns they reveal. Here's what CTOs and engineering managers should take away.

- Default approaches (ORMs, standard inserts) optimize for developer convenience, not throughput. That tradeoff makes sense for transactional workloads but destroys performance for bulk operations.

- PostgreSQL has specialized tools for specialized jobs. COPY exists because bulk loading is a common enough need that it warranted dedicated engineering.

- Configuration tuning for batch operations differs from production settings. Knowing when to temporarily relax durability guarantees can make previously impossible operations routine.

- The 75% time reduction compounds with frequency. If you can refresh data 4x faster, you can refresh it 4x more often.
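
The link between "75% less time" and "4x faster" is simple arithmetic, sketched below with the article's round numbers. The source reports "12+ hours" and "under 3 hours", so treat the inputs as bounds rather than exact measurements; the function names are ours.

```python
def time_reduction(before_hours: float, after_hours: float) -> float:
    """Fraction of the original run time that was eliminated."""
    return 1 - after_hours / before_hours


def speedup(before_hours: float, after_hours: float) -> float:
    """How many times faster the new run is."""
    return before_hours / after_hours


def min_rows_per_second(total_rows: int, max_hours: float) -> float:
    """Sustained rate needed to load total_rows within max_hours."""
    return total_rows / (max_hours * 3600)


print(f"{time_reduction(12, 3):.0%} less time")             # prints "75% less time"
print(f"{speedup(12, 3):.0f}x faster")                      # prints "4x faster"
print(f"{min_rows_per_second(55_000_000, 3):,.0f} rows/s")  # prints "5,093 rows/s"
```

The last line is a useful planning number: loading 55 million rows in under 3 hours requires sustaining at least about 5,100 rows per second end to end.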

This pattern applies beyond company registries. Customer data migrations, IoT sensor ingestion, financial transaction loading, log aggregation: any scenario where you're moving millions of records benefits from the same approach.

Implementation Roadmap for Engineering Teams

If your team is dealing with slow bulk imports, here's a practical path forward.

- Benchmark your current state. Measure actual inserts per second, not just total time. This gives you a baseline and helps identify bottlenecks.

- Identify whether you're ORM-bound. If you're using Django, Rails, or SQLAlchemy for bulk inserts, you're leaving performance on the table.

- Evaluate COPY feasibility. COPY works best when you can format data as CSV or binary streams. If your data requires complex transformation, you may need a preprocessing step.

- Test with relaxed durability. For refreshable datasets, try synchronous_commit = off in a staging environment. Measure the improvement.

- Build a repeatable pipeline. Document the process so monthly or weekly refreshes become routine operations, not engineering projects.
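
For the benchmarking step, a small timing wrapper is usually enough to get a rows-per-second baseline. This is a minimal sketch: `measure_rows_per_second` and `fake_load` are hypothetical names, and the loader you pass in is assumed to return the number of rows it inserted.

```python
import time
from typing import Callable


def measure_rows_per_second(load: Callable[[], int]) -> float:
    """Run load() once and return its throughput in rows per second.

    load must return the number of rows it inserted.
    """
    start = time.perf_counter()
    row_count = load()
    elapsed = time.perf_counter() - start
    return row_count / elapsed if elapsed > 0 else float("inf")


def fake_load() -> int:
    """Stand-in for a real import step; pretends to insert 1,000 rows."""
    time.sleep(0.01)
    return 1000


rate = measure_rows_per_second(fake_load)
print(f"{rate:,.0f} rows/s")
```

Swap `fake_load` for a function that runs one representative batch of your real import, and record the result for each approach you try so the comparison uses the same data and hardware.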

Frequently Asked Questions

How much does PostgreSQL bulk import optimization cost to implement?

The techniques described are configuration changes and code refactoring, not new infrastructure. Engineering time is typically 1-2 days for an experienced developer to benchmark, implement COPY-based loading, and validate results. The ROI comes from reduced compute costs and faster time-to-insight on refreshed data.

Is PostgreSQL COPY safe for production data?

COPY itself is production-safe and used widely. The synchronous_commit = off setting trades durability for speed. Only use it for data you can reimport if a crash occurs. For irreplaceable transactional data, keep default durability settings and accept slower import speeds.

How long does it take to implement COPY-based imports?

For a team already familiar with PostgreSQL, expect 2-4 days end to end including testing; the 1-2 days quoted above is the hands-on time for one experienced developer. The main work is reformatting data into COPY-compatible streams and adjusting any validation that previously happened in the ORM layer. Teams new to PostgreSQL internals should budget additional time for learning.
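
Validation that used to live in the ORM can often move into a small preprocessing step that runs before the data reaches COPY. The sketch below is one hypothetical shape for it: the two-column layout and the "both fields must be non-empty" rule are assumptions for illustration, not rules from Parker's project.

```python
from typing import Iterable, List, Sequence, Tuple

Row = Tuple[str, str]


def split_valid(
    raw_rows: Iterable[Sequence[str]],
) -> Tuple[List[Row], List[Sequence[str]]]:
    """Separate rows that are safe to load from rows that need review.

    A row is accepted when it has exactly two fields and both are
    non-empty after trimming whitespace (an illustrative rule only).
    """
    valid: List[Row] = []
    rejected: List[Sequence[str]] = []
    for row in raw_rows:
        if len(row) == 2 and row[0].strip() and row[1].strip():
            valid.append((row[0].strip(), row[1].strip()))
        else:
            rejected.append(row)
    return valid, rejected


valid, rejected = split_valid([(" 1 ", "Acme"), ("", "No ID"), ("2",)])
print(len(valid), len(rejected))  # prints "1 2"
```

Keeping the rejected rows rather than silently dropping them makes it possible to report on data quality after each refresh.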

Does this approach work with other databases?

Most databases have bulk loading equivalents. MySQL has LOAD DATA INFILE, SQL Server has BULK INSERT, and Oracle has SQL*Loader. The principle of bypassing row-by-row processing applies universally, though syntax and configuration differ.

What's the break-even point for optimizing imports?

If you're importing more than 1 million records or running imports more than monthly, optimization likely pays off within 2-3 cycles. For smaller or infrequent imports, the engineering investment may not be justified.

The Bottom Line for Business Leaders

Data is only valuable if it's current. Every hour your import pipeline runs is an hour your business intelligence lags behind reality. The techniques Parker used to import 55 million Brazilian company records in under 3 hours aren't exotic. They're PostgreSQL fundamentals that most teams overlook because the default ORM approach works well enough for small datasets.

The question for your organization: are slow imports a hidden bottleneck limiting how often you refresh critical datasets? If your team has accepted multi-hour or overnight imports as normal and still loads data row by row, an improvement on the order of 75% may be waiting to be claimed.

Source: DEV Community

Manaal Khan

Tech & Innovation Writer

Related Articles

Browse all

Google Workspace API Updates March 2026: New Calendar API, Chat Authentication, and Maps Changes

Google just dropped Episode 29 of their Workspace Developer News, and there's a lot to unpack. From a brand new secondary calendar lifecycle API to deprecation warnings for Apps Script authentication, here's everything developers need to know about the March 2026 platform updates.

Zig for Legacy C Code: How to Modernize Infrastructure Without a Risky Full Rewrite

A new blueprint from Zeba Academy shows developers how to surgically replace fragile C components with Zig modules. Instead of risky full rewrites, this approach lets you swap out problematic code piece by piece while keeping your battle-tested infrastructure intact.

Claude Skills vs Commands: When to Use Each for AI-Powered Coding Workflows

Claude's Skills and Commands look similar on the surface since both use markdown files, but they work completely differently. Skills run automatically based on context while Commands need explicit /invocation. Here's how to pick the right one for your coding workflow.

DualClip macOS Clipboard Manager: The Only Tool That Uses Dedicated Slots Instead of History

DualClip v1.2.6 just dropped with a major stability fix and Homebrew support. After analyzing 57 clipboard managers, the developer found every single one uses history. DualClip takes a radically different approach with three fixed slots and zero disk storage.

Also Read

4 Open-Source Android Apps That Fix What Google Won't

Google's default Android apps prioritize retention and data collection over user control. These four open-source alternatives restore customization, local file access, and features Google deliberately omits from the Pixel Launcher and Files app.

4 Free AI Tools That Can Replace Your Paid Subscriptions

Subscription fatigue is real. A veteran tech journalist breaks down which free AI tools handle specific tasks well enough to skip the $20/month chatbot tax. The trick is matching the right tool to the right job.

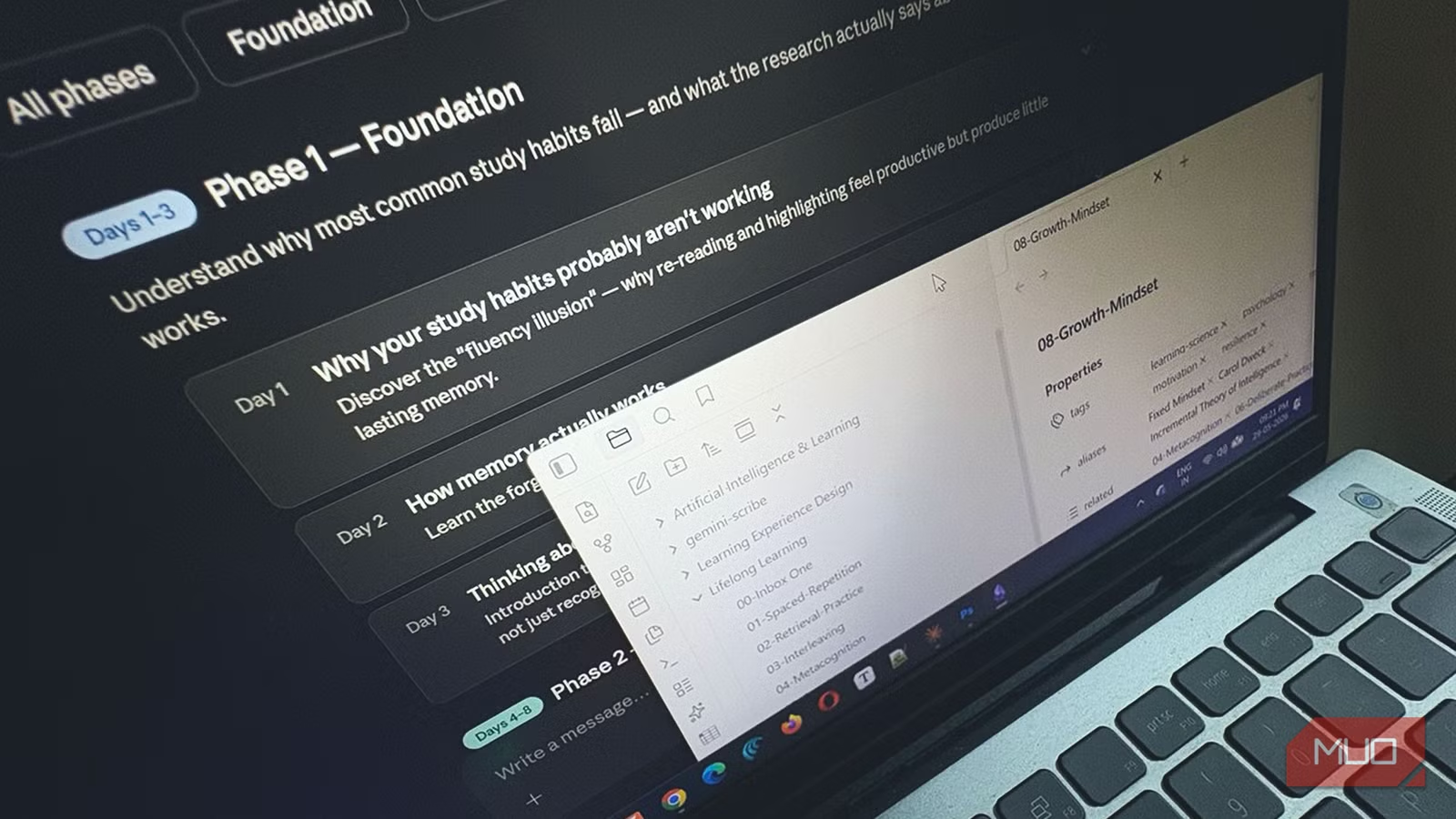

How to Connect Claude AI to Your Obsidian Vault

A productivity writer shares three methods for pairing Anthropic's Claude with Obsidian notes. The setup transforms a passive note collection into an active research environment where AI finds connections across your existing ideas.