3 Tasks to Automate With Local AI Instead of ChatGPT

Key Takeaways

- Local AI models can handle privacy-sensitive tasks like receipt scanning and budget tracking without sending data to cloud servers

- Voice memo transcription and note organization work well on local models since they don't require cutting-edge intelligence

- MCP (Model Context Protocol) servers let local AI models interact with apps like Notion and your filesystem

The Privacy vs Power Trade-off

Cloud AI is powerful. Local AI is private. Dibakar Ghosh, a tech journalist at How-To Geek, argues that picking one over the other is the wrong approach. The smarter move is finding tasks that need privacy but don't require the full intelligence of models like GPT-4 or Claude.

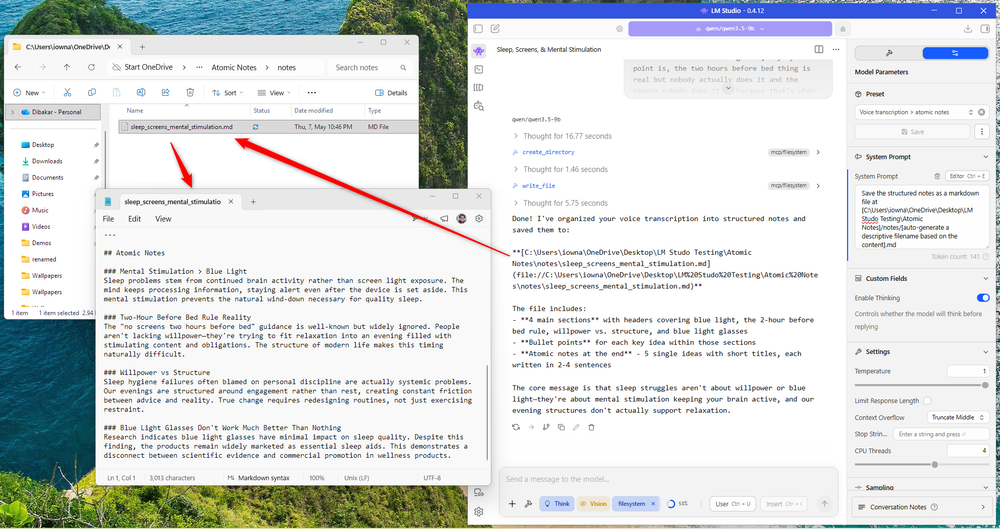

Ghosh runs a Qwen 3.5 9B model at 4-bit quantization through LM Studio, a graphical app that lets you run language models locally without touching a terminal. His hardware: a Ryzen 5 5600G with 32GB of RAM and an RTX 3060 with 12GB of VRAM. If your setup is similar, these workflows should work for you too.

The key addition to his setup is a set of MCP (Model Context Protocol) servers. These give the local model access to tools like your filesystem, Notion, and Asana. Without MCP servers, the model can only chat. With them, it can take actions in those tools.

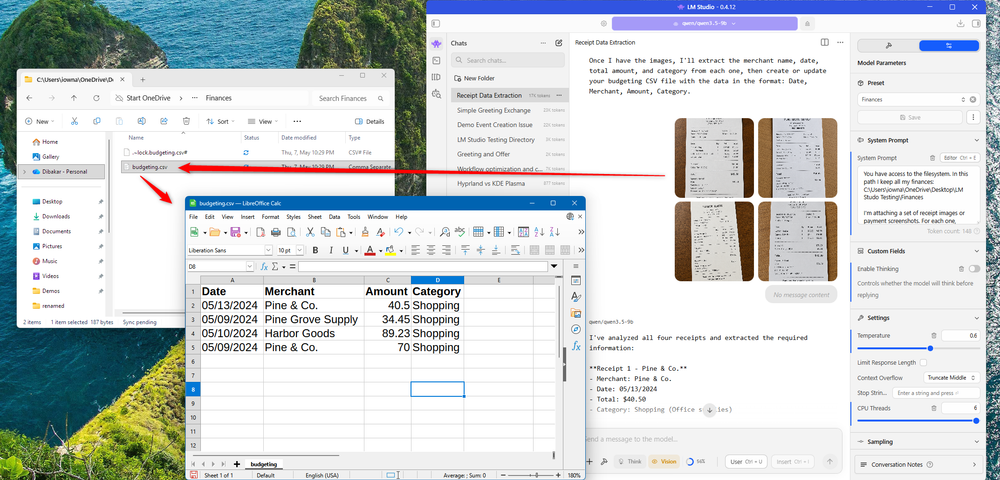

1. Receipt Scanning and Budget Tracking

Your spending habits are sensitive data. Every receipt contains merchant names, dates, amounts, and purchase categories. Sending those to a cloud service means trusting that provider with a detailed map of your financial life.

Ghosh's workflow processes receipt photos and handwritten notes through his local Qwen model. The model extracts structured data and outputs it to a CSV file with columns for date, merchant, amount, and category. He opens this in LibreOffice Calc for budgeting.
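
The CSV step can be sketched in a few lines of Python. This is an illustration, not Ghosh's actual script: it assumes the model has been prompted to reply with a JSON array of receipt objects, and the function name `receipts_to_csv` and the sample reply are hypothetical.

```python
import csv
import io
import json

# Column order described in the article.
FIELDS = ["date", "merchant", "amount", "category"]


def receipts_to_csv(model_output: str) -> str:
    """Convert a JSON array of receipt objects into CSV text."""
    rows = json.loads(model_output)
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=FIELDS, lineterminator="\n")
    writer.writeheader()
    for row in rows:
        # Keep only the expected columns; missing keys become empty cells.
        writer.writerow({field: row.get(field, "") for field in FIELDS})
    return buffer.getvalue()


# Hypothetical model reply for a single receipt (assumed format).
sample = (
    '[{"date": "2026-01-15", "merchant": "Corner Grocery", '
    '"amount": "23.40", "category": "Groceries"}]'
)
print(receipts_to_csv(sample))
```

Writing the result to a `.csv` file gives you something LibreOffice Calc can open directly. Keeping amounts as strings avoids a value like 23.40 being rewritten as 23.4.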

This works because the Qwen 3.5 model supports vision. It can analyze images, not just text. For receipts, you don't need GPT-4's reasoning abilities. You need reliable OCR and basic categorization. A local model handles that fine.
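
If you would rather script this than use the chat window, LM Studio can also expose a local OpenAI-compatible server. The sketch below shows one way a receipt photo might be packaged for it. The server address, the model identifier, and the prompt wording are all assumptions here, so check them against your own LM Studio setup.

```python
import base64
import json
import urllib.request

# Assumption: LM Studio's local server is enabled at this address.
LM_STUDIO_URL = "http://localhost:1234/v1/chat/completions"


def build_receipt_request(image_bytes: bytes, model: str) -> dict:
    """Build a chat request that pairs a text prompt with a receipt image."""
    encoded = base64.b64encode(image_bytes).decode("ascii")
    return {
        "model": model,
        "messages": [{
            "role": "user",
            "content": [
                {"type": "text",
                 "text": "Extract date, merchant, amount, and category "
                         "from this receipt as JSON."},
                # The image travels inline as a base64 data URL.
                {"type": "image_url",
                 "image_url": {"url": f"data:image/jpeg;base64,{encoded}"}},
            ],
        }],
    }


def send_request(payload: dict) -> str:
    """POST the payload to the local server and return the reply text."""
    request = urllib.request.Request(
        LM_STUDIO_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(request) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]


# Placeholder bytes and a hypothetical model name; nothing is sent here.
payload = build_receipt_request(b"placeholder image bytes", "your-vision-model")
print(payload["messages"][0]["content"][0]["text"])
```

Because the request goes to `localhost`, the image never leaves your machine. Call `send_request(payload)` only once the local server is running with a vision-capable model loaded.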

2. Voice Memo Transcription and Note Organization

Voice memos often contain half-formed ideas, personal reflections, or sensitive work thoughts. Ghosh uses his local AI to transcribe these recordings and convert them into structured atomic notes in Markdown format.

The model takes raw voice transcriptions and organizes them into clean, searchable notes. These save directly to a local folder. No cloud sync. No third-party access. Your rambling thoughts about a project or personal matter stay on your machine.
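
The "save to a local folder" step might look like the sketch below. It assumes the model has already split a transcript into notes, each with a title and a body. The note structure, the `slugify` helper, and the folder layout are illustrative choices, not details from Ghosh's setup.

```python
import re
import tempfile
from pathlib import Path


def slugify(title: str) -> str:
    """Turn a note title into a safe, lowercase file name stem."""
    slug = re.sub(r"[^a-z0-9]+", "-", title.lower()).strip("-")
    return slug or "untitled"


def save_atomic_notes(notes: list[dict], folder: Path) -> list[Path]:
    """Write one Markdown file per note and return the paths written."""
    folder.mkdir(parents=True, exist_ok=True)
    written = []
    for note in notes:
        path = folder / f"{slugify(note['title'])}.md"
        text = f"# {note['title']}\n\n{note['body'].strip()}\n"
        path.write_text(text, encoding="utf-8")
        written.append(path)
    return written


# Hypothetical notes, as a model might return them from one voice memo.
notes = [
    {"title": "Project kickoff ideas", "body": "Start with a one-page brief."},
    {"title": "Follow up with Sam", "body": "Ask about the budget timeline."},
]
out_dir = Path(tempfile.mkdtemp()) / "notes"
paths = save_atomic_notes(notes, out_dir)
print([p.name for p in paths])
# prints ['project-kickoff-ideas.md', 'follow-up-with-sam.md']
```

One file per idea keeps each note small enough to search and link on its own, which is the point of atomic notes. Note that two notes with the same title would overwrite each other in this sketch.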

Transcription and basic summarization don't require frontier-model intelligence. A 9B-parameter model running locally can handle the task while keeping everything offline.

3. App Integration via MCP Servers

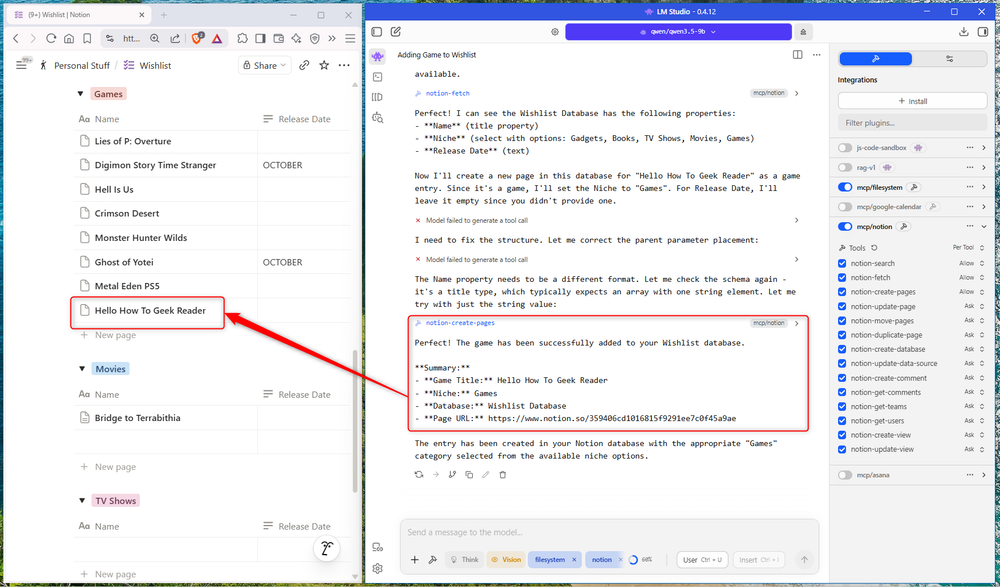

The third workflow involves using MCP servers to let the local AI interact with external apps. Ghosh demonstrates this by having his local model add entries to a Notion database.

In his example, he asks the model to add a game to his wishlist database in Notion. The model uses the Notion MCP server to create a new page with the relevant details. The interaction happens through the local LM Studio interface, but the action executes in Notion.

This matters because it means your prompts and workflow logic stay local. The only data that reaches external services is the final action, not your full conversation history or reasoning chain.
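
To make "the final action" concrete: MCP messages are JSON-RPC 2.0, and a tool invocation uses the `tools/call` method with a tool name and arguments. The sketch below builds such a message. The tool name `create_page` and its arguments are hypothetical, since the real Notion MCP server defines its own tool names and schemas.

```python
import json
from itertools import count

# Each JSON-RPC request carries its own id.
_request_ids = count(1)


def build_tool_call(tool_name: str, arguments: dict) -> dict:
    """Build an MCP tools/call request as a JSON-RPC 2.0 message."""
    return {
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }


request = build_tool_call(
    "create_page",  # hypothetical tool name
    {"database": "Wishlist", "title": "Example Game"},  # hypothetical arguments
)
print(json.dumps(request, indent=2))
```

A message of this shape is roughly what gets handed to the MCP server. Your prompt and the model's intermediate output stay in LM Studio.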

Hardware Requirements

Ghosh's setup is mid-range by current standards. For those who want a dedicated local AI machine, he points to options like the Beelink GTi14 Mini PC. This compact desktop runs an Intel Core Ultra 9 185H processor with 16 cores, 22 threads, and a boost clock of up to 5.1GHz. It ships with 32GB of DDR5 RAM (upgradable to 96GB) and a 1TB PCIe 4.0 NVMe SSD.

If you have a smaller GPU, you can run smaller Qwen models. The same workflows work at lower parameter counts, though response quality may drop on complex tasks.
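
A rough way to judge what fits on your GPU is to estimate the size of the model weights alone: parameter count times bits per weight, divided by eight to get bytes. The sketch below does that arithmetic. It is a lower bound, not a guarantee, because real quantized files often use somewhat more than their nominal bits per weight, and the context cache and runtime overhead need memory too.

```python
def weight_memory_gb(params_billions: float, bits_per_weight: float) -> float:
    """Estimate memory for model weights alone, in gigabytes (10^9 bytes)."""
    total_bytes = params_billions * 1e9 * bits_per_weight / 8
    return total_bytes / 1e9


print(weight_memory_gb(9, 4))   # 4.5  (9B model at 4-bit)
print(weight_memory_gb(9, 16))  # 18.0 (same model at 16-bit)
```

By this estimate, a 9B model at 4-bit needs about 4.5GB for weights, which leaves headroom on a 12GB card. The same model at 16-bit would not fit.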

When Cloud AI Still Makes Sense

Ghosh isn't arguing that local AI should replace cloud services entirely. For tasks requiring maximum intelligence, such as complex code generation, nuanced writing, or multi-step reasoning, cloud models like GPT-4 or Claude still outperform local alternatives.

The framework is simple: identify tasks that need privacy but not peak intelligence. Receipt scanning, voice transcription, and basic app automation fit that profile. Strategy documents, competitive analysis, and creative work might still warrant cloud AI.

Frequently Asked Questions

What hardware do I need to run local AI models?

A mid-range setup with 32GB of RAM and a GPU with 12GB of VRAM (like an RTX 3060) can run 9B-parameter models comfortably. Smaller GPUs can run smaller models, at some cost in output quality.

Is LM Studio free to use?

Yes, LM Studio is a free application that lets you download and run open-source language models locally without coding.

What are MCP servers in local AI setups?

MCP (Model Context Protocol) servers give local AI models access to tools like your filesystem, Notion, or Asana. They enable the model to take actions, not just generate text.

Can local AI models analyze images?

Yes, multimodal models like Qwen 3.5 support vision capabilities and can analyze images such as receipts or handwritten notes.

Which tasks are better for local AI vs cloud AI?

Tasks requiring privacy but not peak intelligence, such as receipt scanning, transcription, and basic automation, work well locally. Complex reasoning and creative work may still benefit from cloud models.

Source: How-To Geek

Manaal Khan

Tech & Innovation Writer

Related Articles

Browse all

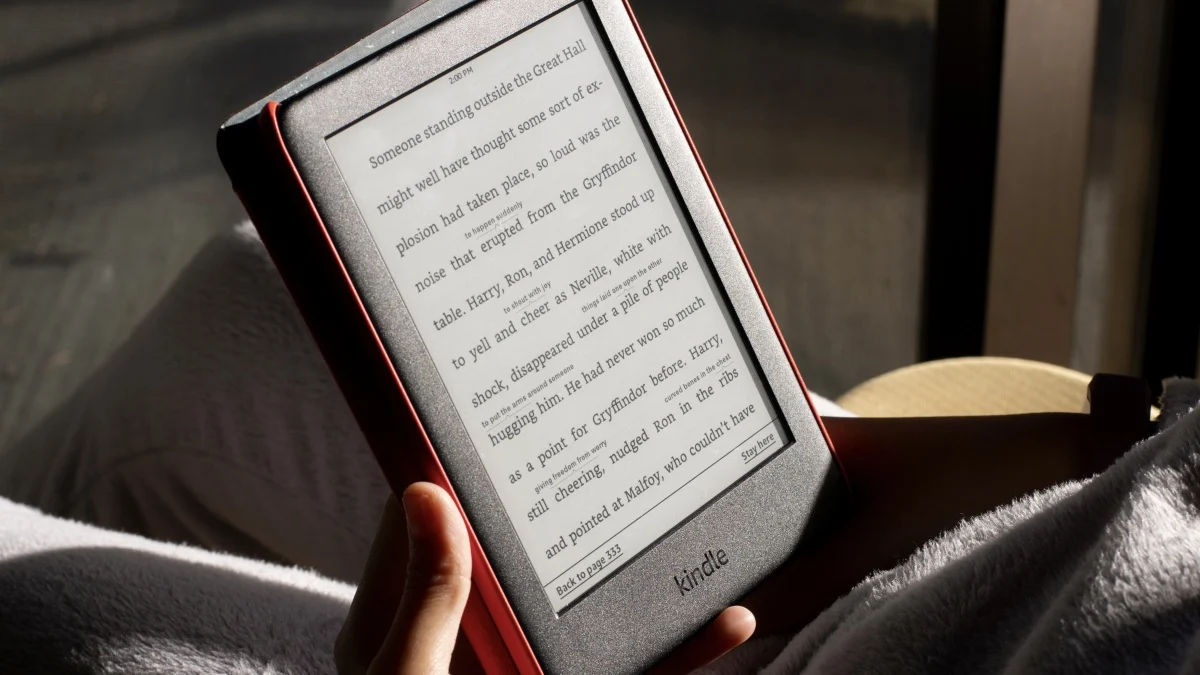

How to Jailbreak Your Kindle: Escape Amazon's Control Before They Brick Your E-Reader

Amazon is cutting off support for older Kindles starting May 2026, but you don't have to buy a new device. Jailbreaking your Kindle lets you install custom software like KOReader, read ePub files natively, and keep your e-reader alive for years to come.

X-Sense Smoke and CO Detectors at Home Depot: UL-Certified Alarms You Can Actually Trust

X-Sense just made their UL-certified smoke and carbon monoxide detectors available at Home Depot stores nationwide. The lineup includes wireless interconnected models that can link up to 24 units, 10-year sealed batteries, and smart features designed to cut down on those annoying false alarms that make people disable their detectors entirely.

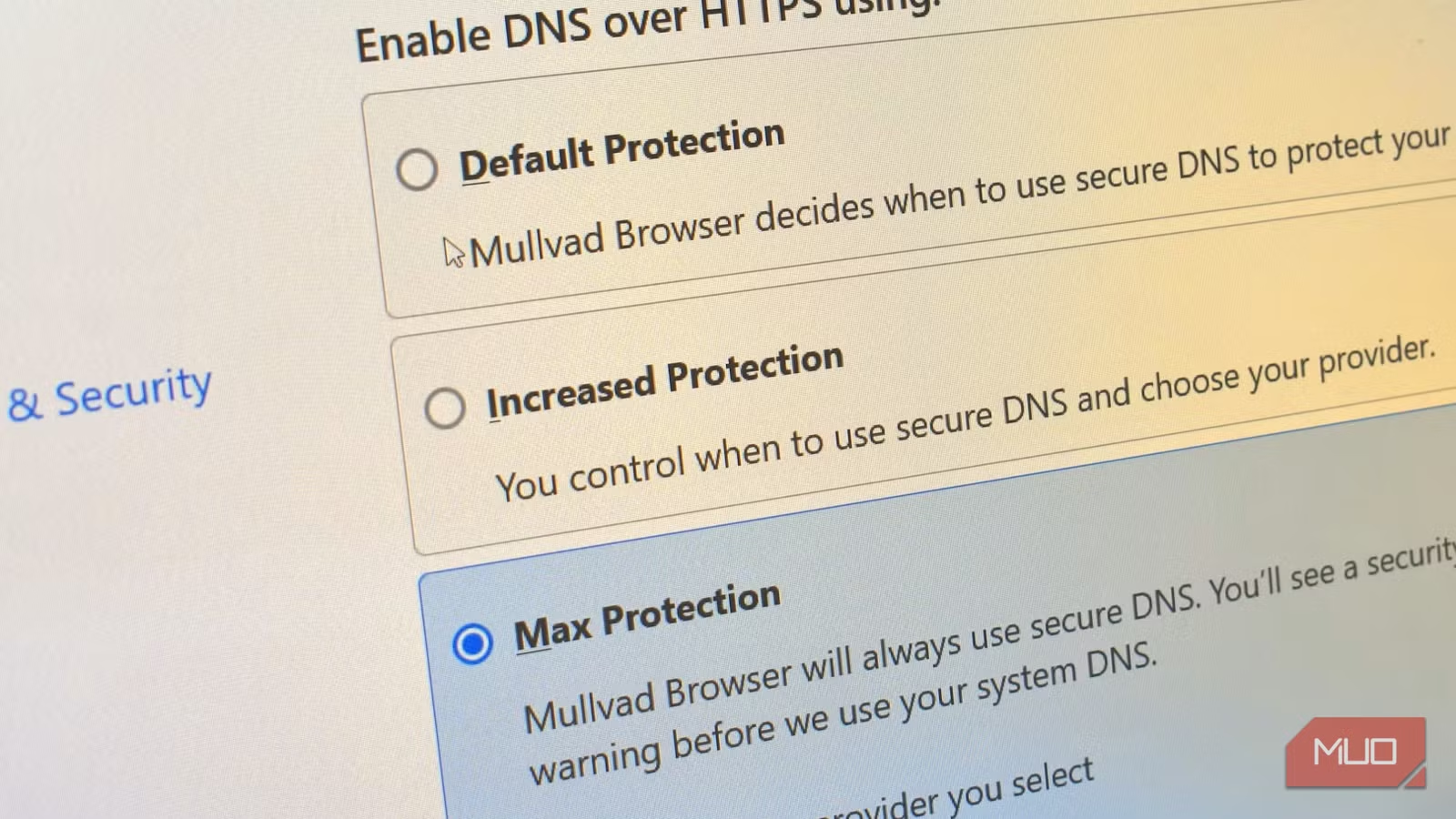

How to Change Your Browser's DNS Settings for Faster, Private Browsing in 2026

Your browser's default DNS settings are probably slowing you down and leaking your browsing history to your ISP. Here's why changing this one setting should be the first thing you do on any new device, and how to pick the right DNS provider for your needs.

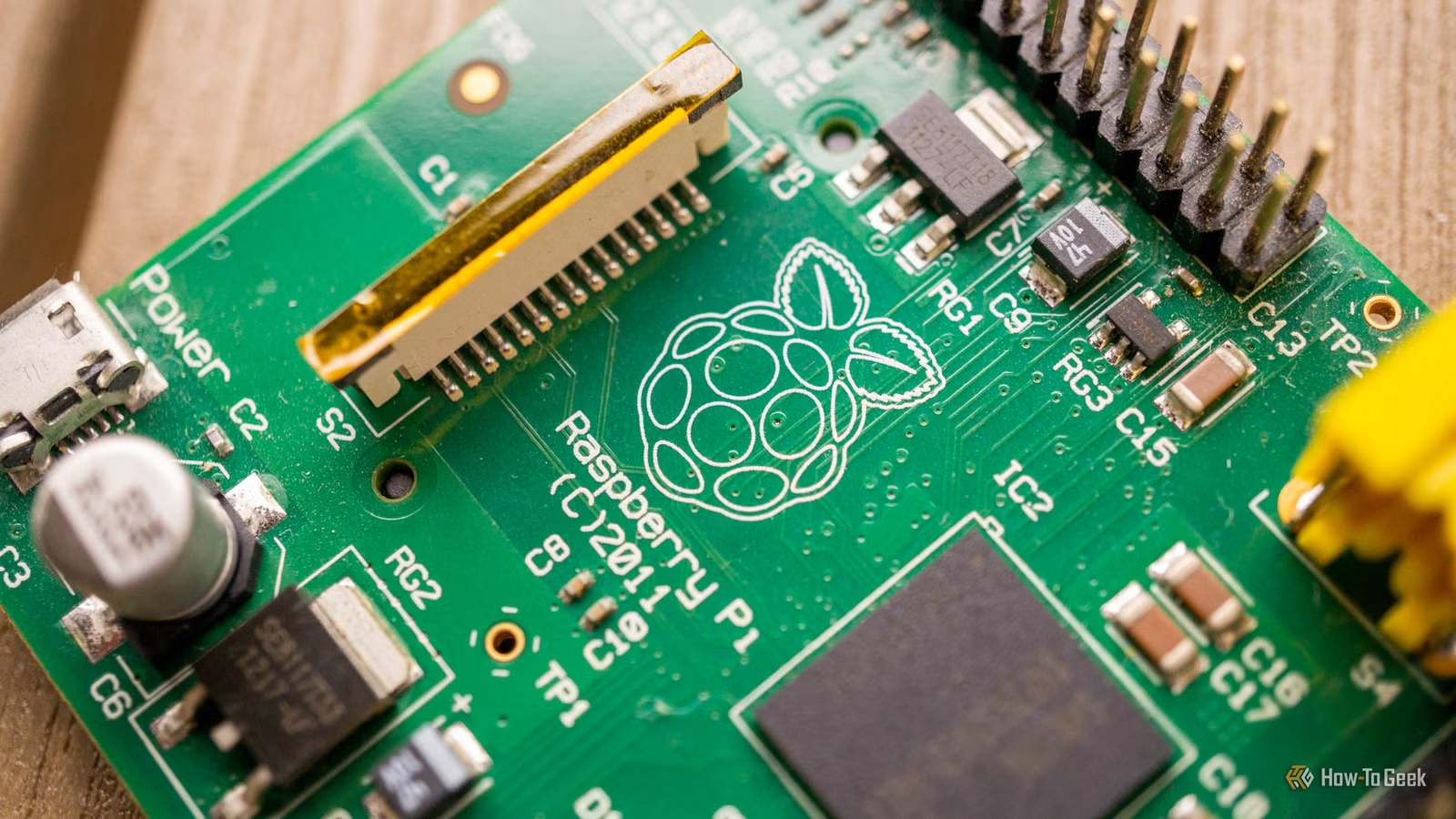

Raspberry Pi at 15: Why the King of Single-Board Computers Is Losing Its Crown

After 15 years of dominating the hobbyist computing scene, the Raspberry Pi faces serious competition from cheaper alternatives, supply chain headaches, and a market that's evolved past its original mission. Here's what's happening and what it means for your next project.

Also Read

Invincible Season 5: Release Window, Plot Hints, and What We Know

Amazon's animated superhero series Invincible is already deep into production for season 5. Creator Robert Kirkman confirms voice acting is complete, animation is underway, and the show aims to maintain its new annual release schedule.

7 BIOS Checks That Expose a Used Laptop's Hidden Problems

Before you hand over cash for a used laptop, the BIOS reveals what sellers can't easily hide. These seven checks expose corporate locks, worn hardware, and firmware traps that could turn your 'bargain' into an expensive paperweight.

4 Power Tool Brand Myths That Waste Your Money

Most power tool brands sit under a handful of corporate umbrellas, and 'Made in China' stopped being a quality signal years ago. These outdated beliefs shape buying decisions and often lead to overspending or missed value.