Vercel Breach Shows the Real Risk of Shadow AI Integrations

Key Takeaways

- OAuth connections to AI apps persist even after employees stop using them, creating long-lived attack pathways

- The Vercel breach started when Context.ai was compromised and attackers used an existing OAuth bridge into a Vercel employee's Google Workspace account

- Shadow integrations are more dangerous than employees uploading data to chatbots because they grant programmatic access

The Breach That Started With a Forgotten Trial

Security teams worry about employees pasting sensitive data into ChatGPT or Claude. That's a real concern. But it's not the biggest one.

The Vercel breach shows why. A Vercel employee connected an AI app called Context.ai to their Google Workspace account via OAuth. This created a programmatic bridge between Vercel's environment and a third party. When Context.ai was breached, attackers used that bridge to access Vercel's systems.

Vercel wasn't even a paying customer of Context.ai. The connection came from what appears to be a self-service trial of Context.ai's "AI Office Suite" product. That product was deprecated. The employee had likely stopped using it. But the OAuth connection remained active.

Logicity's Take

Why OAuth Connections Are Different From Chat Uploads

When an employee pastes code into ChatGPT, that's a point-in-time data leak. Bad, but contained. When an employee grants an AI app OAuth access to Google Workspace or Microsoft 365, they're creating something else entirely: a persistent, programmatic connection.

That connection can read email, access files, and pull data continuously. It doesn't need the employee to be actively using the app. It doesn't require the employee to be at their computer. And it doesn't disappear when the employee forgets about the app and moves on.

If the third-party vendor gets compromised, attackers inherit that access. They don't need to phish your employees or guess passwords. They have a legitimate API connection sitting there, waiting to be used.
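To make the "persistent" point concrete: in a typical OAuth 2.0 setup (RFC 6749, section 6), the vendor's backend stores a refresh token and can exchange it for a fresh access token whenever it likes, with no user involved. The sketch below only builds that request for Google's token endpoint and never sends it. The client ID, secret, and token values are placeholders, and this illustrates the general mechanism, not a confirmed account of how the Context.ai attack worked.

```python
from urllib.parse import urlencode, parse_qs
from urllib.request import Request

# Google's OAuth 2.0 token endpoint.
TOKEN_ENDPOINT = "https://oauth2.googleapis.com/token"

def build_refresh_request(client_id: str, client_secret: str, refresh_token: str) -> Request:
    """Build (but do not send) a refresh_token grant request.

    Whoever holds these three values, the vendor or an attacker who has
    breached the vendor, can run this at any time. The employee who
    originally clicked "Allow" does not need to be online.
    """
    body = urlencode({
        "grant_type": "refresh_token",
        "client_id": client_id,
        "client_secret": client_secret,
        "refresh_token": refresh_token,
    }).encode("ascii")
    return Request(
        TOKEN_ENDPOINT,
        data=body,
        headers={"Content-Type": "application/x-www-form-urlencoded"},
        method="POST",
    )

if __name__ == "__main__":
    # Placeholder values only; nothing is sent over the network.
    req = build_refresh_request("example-client-id", "example-secret", "example-refresh-token")
    print(req.get_method(), req.full_url)
    print(parse_qs(req.data.decode()))
```

This is why uninstalling or forgetting an app does nothing on its own: the refresh token lives on the vendor's servers, and only revoking the grant on the Workspace side cuts it off.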

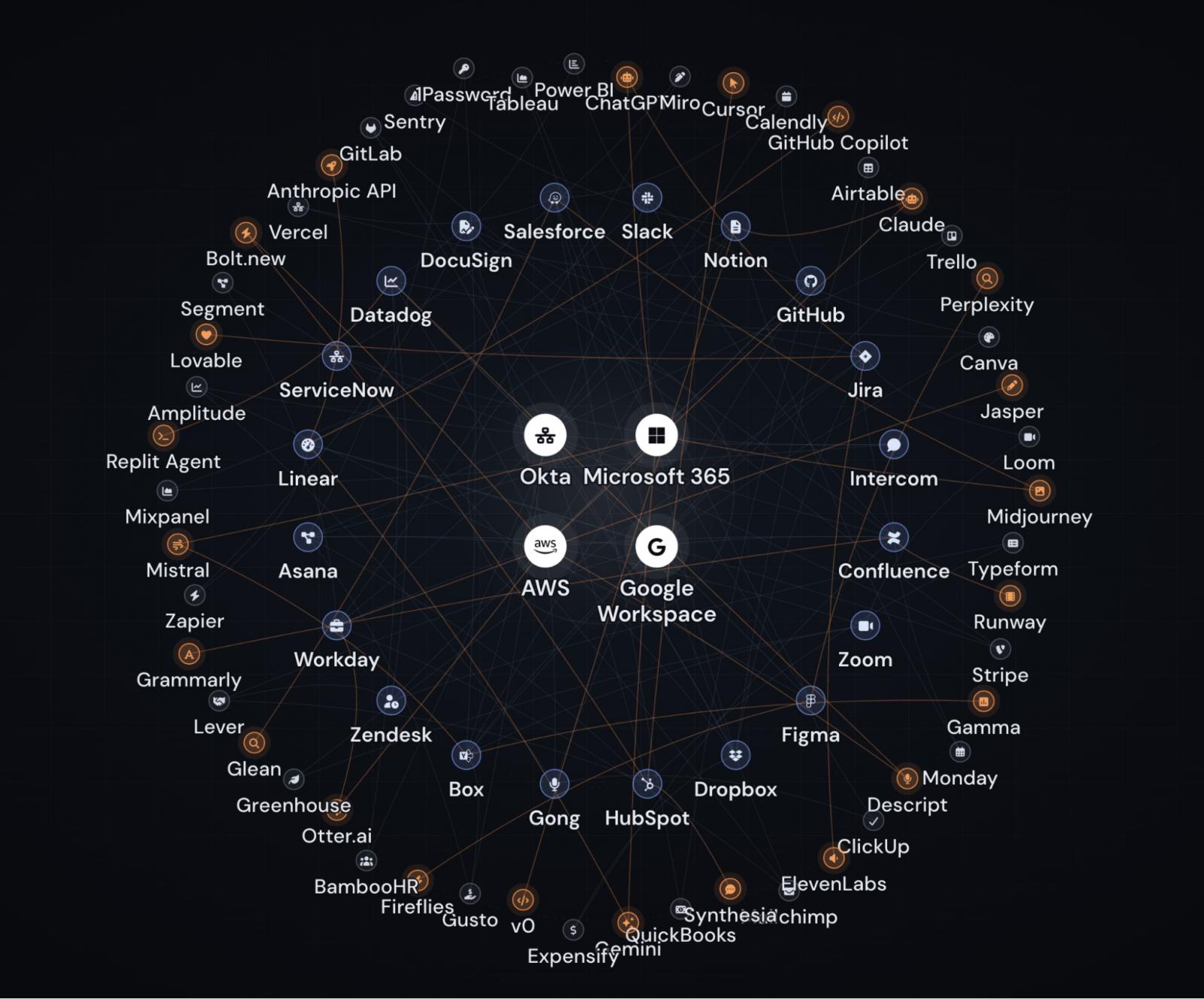

Four Types of Shadow AI to Track

The AI adoption rush has created multiple categories of shadow IT that security teams need to track:

- Shadow apps: Employees sign up for AI tools using corporate or personal accounts without IT approval. The tool might be legitimate. The company just doesn't know it's being used.

- Shadow tenants: Employees access approved apps with personal accounts, creating company data in environments outside corporate control.

- Shadow extensions: Browser extensions bundled with AI apps, or standalone third-party AI extensions, that can read browser activity and page content.

- Shadow integrations: OAuth connections between apps, even when the apps themselves are approved. An approved AI tool can be plugged directly into Salesforce with full data access without that integration ever being sanctioned.

The Vercel breach was specifically a shadow integration problem. Context.ai might have been fine as a standalone app. The risk came from connecting it directly into Google Workspace with OAuth permissions.

The Force Multiplier Effect

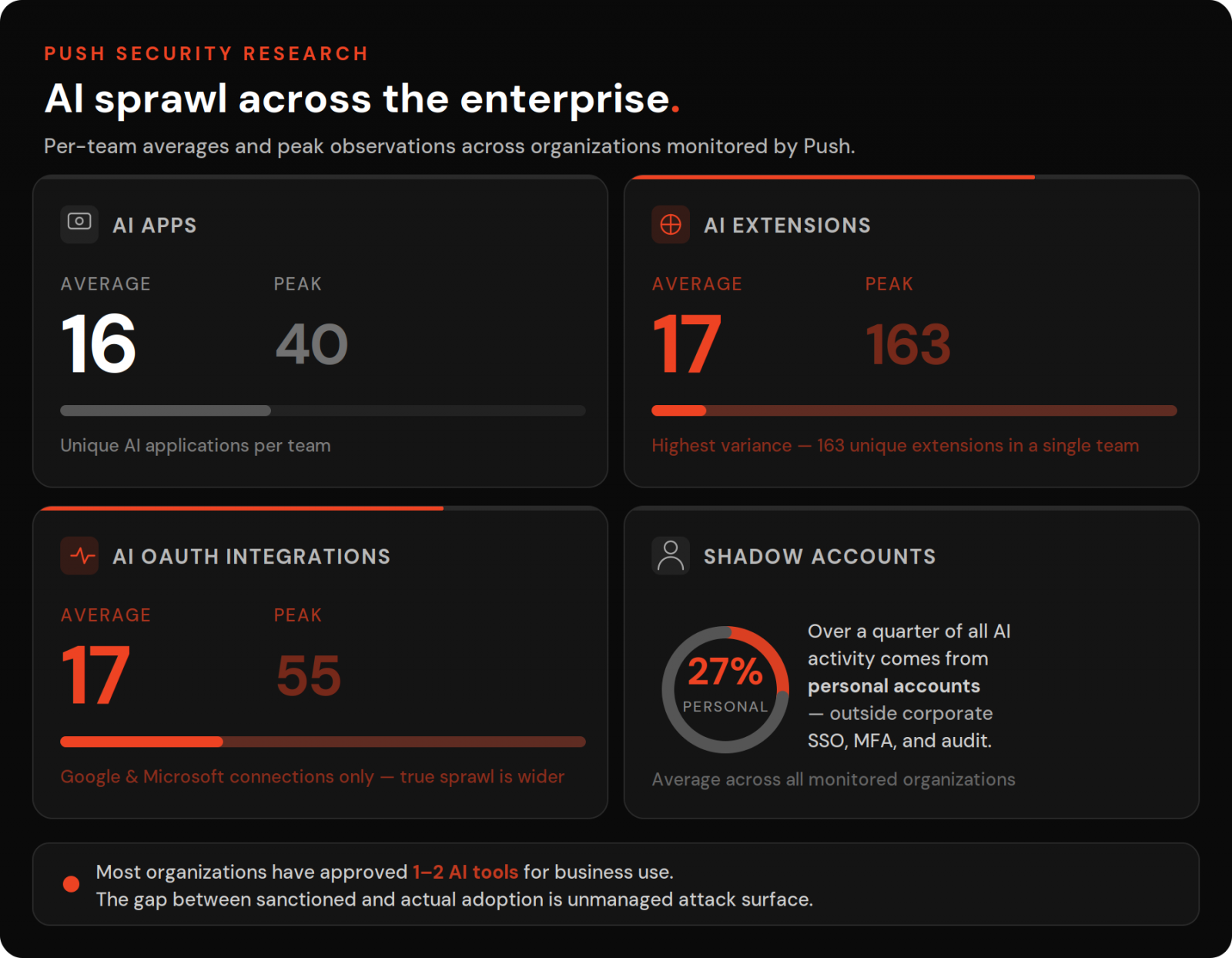

Shadow IT isn't new. Most organizations run hundreds of SaaS apps, many adopted by employees without formal approval. Security teams have dealt with this for years.

AI is different because of velocity. The pressure to adopt AI tools quickly has employees signing up for new apps weekly. Each app wants OAuth access to your core platforms. Each connection is another potential breach pathway.

The math is simple. If you have 500 employees and each connects three AI apps to your Google Workspace, you have 1,500 OAuth bridges to third parties. It takes only one of those vendors getting breached to expose your company.
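A rough way to see why the count matters: assume each connected vendor independently has some small chance p of being breached in a given year. The chance that at least one of n vendors is breached is then 1 - (1 - p)^n. Both p and the independence assumption are illustrative, not measured, and since many of the 1,500 grants will point at the same apps, n here means distinct vendors. The function name below is hypothetical.

```python
def prob_any_breach(num_vendors: int, p_breach: float) -> float:
    """Chance that at least one of num_vendors independent vendors is breached.

    Assumes every vendor has the same breach probability p_breach and that
    breaches are independent; both are simplifying assumptions.
    """
    if not 0.0 <= p_breach <= 1.0:
        raise ValueError("p_breach must be between 0 and 1")
    if num_vendors < 0:
        raise ValueError("num_vendors must be non-negative")
    return 1.0 - (1.0 - p_breach) ** num_vendors

if __name__ == "__main__":
    # Hypothetical 1% annual breach probability per vendor.
    for n in (1, 10, 100, 500):
        print(n, round(prob_any_breach(n, 0.01), 3))
```

Under that hypothetical 1% figure, 100 distinct vendors already puts the odds of at least one breach at roughly 63%.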

What the Vercel Case Got Wrong

The Context.ai connection that exposed Vercel had several warning signs that went unnoticed:

- The connected app was a consumer-grade product, not an enterprise tool

- The product had been deprecated by its vendor

- Vercel had no business relationship with Context.ai

- The OAuth connection had Google Workspace access despite being unused

Any one of these should have triggered a review. Together, they represent a common pattern: an employee trial that was granted permissions, briefly used, and then abandoned while the access remained active.
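The four warning signs above translate directly into review triggers. The sketch below assumes you already hold a per-grant record with these facts; the `OAuthGrant` fields and the 90-day idle threshold are hypothetical choices, not any platform's API.

```python
from dataclasses import dataclass
from typing import List, Optional

@dataclass
class OAuthGrant:
    # Hypothetical record; field names are illustrative, not from a real API.
    app_name: str
    consumer_grade: bool
    deprecated_by_vendor: bool
    has_business_relationship: bool
    days_since_last_use: Optional[int]  # None when usage data is unavailable

def warning_signs(grant: OAuthGrant, unused_after_days: int = 90) -> List[str]:
    """Return the review triggers that apply to one grant (empty list = none)."""
    signs: List[str] = []
    if grant.consumer_grade:
        signs.append("consumer-grade app")
    if grant.deprecated_by_vendor:
        signs.append("deprecated by vendor")
    if not grant.has_business_relationship:
        signs.append("no business relationship with vendor")
    # Unknown usage is treated as a trigger rather than as "in use".
    if grant.days_since_last_use is None or grant.days_since_last_use >= unused_after_days:
        signs.append("unused or usage unknown")
    return signs

if __name__ == "__main__":
    # A made-up grant resembling the abandoned-trial pattern described above.
    trial = OAuthGrant("example-ai-trial", True, True, False, 200)
    print(warning_signs(trial))
```

A grant matching the abandoned-trial pattern trips all four checks at once, which is exactly the combination that should have been caught.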

Fixing OAuth Sprawl

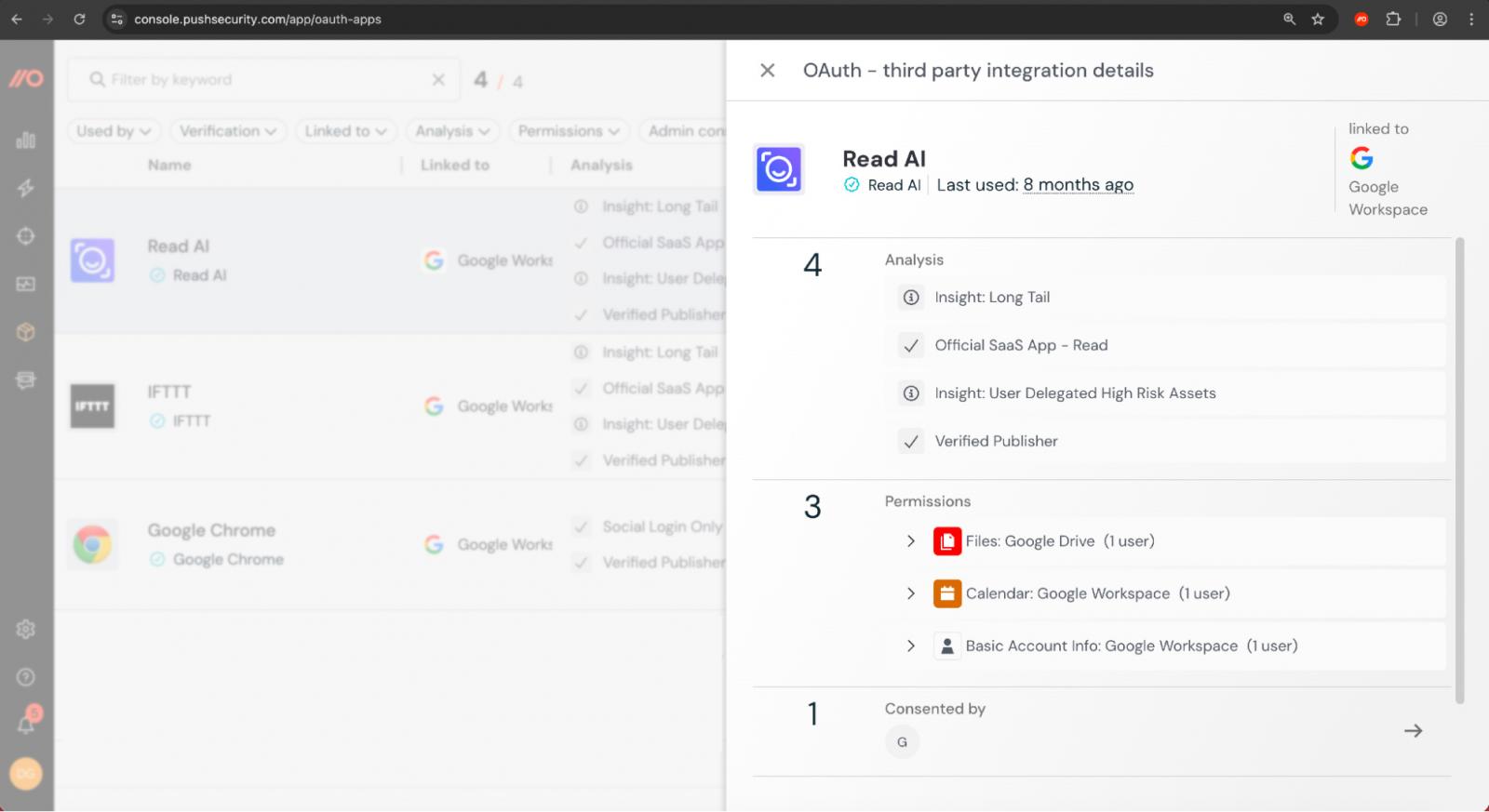

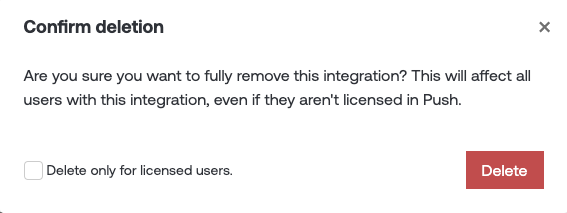

The immediate fix is visibility. You can't revoke OAuth connections you don't know exist. Security teams need to inventory all OAuth grants across Google Workspace, Microsoft 365, Salesforce, and other core platforms.
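For Google Workspace, one way to build that inventory is the Admin SDK Directory API, which can list the OAuth tokens each user has issued to third-party apps (`users.tokens.list`). The sketch assumes you pass in a Directory API client built with `googleapiclient.discovery.build("admin", "directory_v1", credentials=...)` using admin credentials, plus a list of user emails obtained separately. The function name and row shape are hypothetical.

```python
from typing import Any, Dict, Iterable, List

def inventory_google_tokens(directory: Any, user_emails: Iterable[str]) -> List[Dict[str, Any]]:
    """Collect third-party OAuth grants for each user into flat rows.

    `directory` is assumed to be an Admin SDK Directory API client built with
    admin credentials. Fetching and paginating the user list is left out.
    """
    rows: List[Dict[str, Any]] = []
    for email in user_emails:
        # users.tokens.list: the OAuth tokens this user has issued to apps.
        resp = directory.tokens().list(userKey=email).execute()
        for token in resp.get("items", []):
            rows.append({
                "user": email,
                "client_id": token.get("clientId"),
                "app": token.get("displayText"),
                "scopes": token.get("scopes", []),
            })
    return rows
```

Passing the client in, rather than building it inside the function, keeps credentials out of the inventory logic and makes it easy to test with a stub. Microsoft 365 exposes delegated grants through Microsoft Graph's `oauth2PermissionGrants` resource, so the same row shape could plausibly be reused there.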

Once you have visibility, the work becomes triage. Which connections are to approved vendors? Which have excessive permissions? Which are to deprecated or suspicious apps?
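Those three triage questions can be sketched as a small labeling function over inventory rows. It assumes each row is a dict with `client_id` and `scopes` keys, that you maintain your own sets of approved and known-deprecated or suspicious client IDs, and that "broad" means the Google scopes listed below. All of that is policy you would tune; the names are hypothetical.

```python
from typing import AbstractSet, Dict, List

# Illustrative set of broad Google scopes; adjust to your own policy.
BROAD_SCOPES = frozenset({
    "https://mail.google.com/",                          # full Gmail access
    "https://www.googleapis.com/auth/gmail.readonly",    # read all mail
    "https://www.googleapis.com/auth/drive",             # full Drive access
    "https://www.googleapis.com/auth/drive.readonly",    # read all Drive files
})

def triage(grant: Dict, approved: AbstractSet[str],
           suspicious: AbstractSet[str] = frozenset()) -> List[str]:
    """Label one grant: unapproved vendor, deprecated/suspicious app, broad scopes."""
    labels: List[str] = []
    if grant["client_id"] not in approved:
        labels.append("unapproved vendor")
    if grant["client_id"] in suspicious:
        labels.append("deprecated or suspicious app")
    broad = sorted(set(grant.get("scopes", [])) & BROAD_SCOPES)
    if broad:
        labels.append("broad scopes: " + ", ".join(broad))
    return labels or ["ok"]
```

Note that an approved vendor can still be flagged for broad scopes: approval of the app and approval of the access level are separate decisions.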

- Audit existing OAuth grants across all core SaaS platforms

- Flag connections to consumer apps, deprecated products, and unknown vendors

- Implement approval workflows for new OAuth connections to core systems

- Set automatic expiration for unused integrations

- Monitor for new connections being created without approval
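The last two steps, expiring unused integrations and spotting new unapproved ones, can be sketched as two set and date comparisons. This assumes you already have a last-used timestamp per grant (for Google Workspace, the Admin SDK Reports API's `token` activity log is one possible source) and periodic snapshots of grant keys such as user/client-ID pairs. The 90-day window and the function names are hypothetical.

```python
from datetime import datetime, timedelta
from typing import AbstractSet, Dict, List, Optional, Set

def stale_grants(last_used: Dict[str, Optional[datetime]], now: datetime,
                 max_idle: timedelta = timedelta(days=90)) -> List[str]:
    """Grant keys idle longer than max_idle, or with no recorded use at all."""
    return sorted(key for key, ts in last_used.items()
                  if ts is None or now - ts > max_idle)

def new_unapproved(previous: AbstractSet[str], current: AbstractSet[str],
                   approved: AbstractSet[str]) -> Set[str]:
    """Grant keys that appeared since the last snapshot and are not approved."""
    return set(current) - set(previous) - set(approved)
```

Both functions only report; the actual revocation (for example via the Directory API's `tokens().delete(userKey=..., clientId=...)`) is better left behind a review step so a legitimate integration is not cut off by accident.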

The goal isn't to block all AI adoption. It's to ensure that OAuth connections to your core systems are known, approved, and actively monitored.

The Bigger Pattern

The Vercel breach is one example of a pattern that will become more common. AI vendors are attractive targets for attackers precisely because they have OAuth access to many corporate environments. Compromising one AI startup can provide pathways into dozens of enterprise customers.

This makes vendor security due diligence more important, not less. But it also means accepting that some vendors will be breached regardless of their security practices. The defense is limiting what access they have and monitoring for misuse.

The employee who connected Context.ai wasn't being careless. They were trying a tool that might help them work better. That's exactly what you want employees to do. The failure was organizational: no visibility into what they connected, no process to review it, and no mechanism to expire unused grants.


Frequently Asked Questions

What is a shadow AI integration?

A shadow AI integration is an OAuth connection between an AI app and a core enterprise platform like Google Workspace or Microsoft 365 that was created without IT approval. The connection grants the AI app programmatic access to company data.

How did the Vercel breach happen?

A Vercel employee connected Context.ai's AI Office Suite to their Google Workspace account via OAuth. When Context.ai was breached, attackers used that existing OAuth connection to access Vercel's environment.

Why are OAuth connections more dangerous than uploading data to ChatGPT?

OAuth connections create persistent, programmatic access to your systems. They continue working even when employees stop using the app. If the connected vendor is breached, attackers can use that access immediately without needing to phish your employees.

How can companies prevent shadow AI integration breaches?

Companies should audit existing OAuth grants, implement approval workflows for new connections, set automatic expiration for unused integrations, and continuously monitor for new unauthorized connections.

What made the Context.ai connection particularly risky?

Context.ai's product was consumer-grade and deprecated, and Vercel had no business relationship with the vendor. Even so, the OAuth connection remained active although the app was no longer in use.


Source: BleepingComputer

Manaal Khan

Tech & Innovation Writer

Related Articles

Browse all

Kraken Crypto Exchange Extortion: Hackers Threaten to Leak Internal Videos After Insider Breach

Cryptocurrency exchange Kraken is being extorted by hackers who obtained videos of internal systems through bribed support employees. The company says no funds were compromised and refuses to pay, with only about 2,000 accounts affected. Kraken is working with federal law enforcement to prosecute everyone involved.

Windows 11 KB5083769 and KB5082052: April 2026 Patch Tuesday Brings Smart App Control Changes and Security Fixes

Microsoft's April 2026 Patch Tuesday updates are now live for Windows 11, bringing critical security patches alongside a welcome change to Smart App Control. You can finally toggle SAC on or off without wiping your entire system. The updates cover versions 23H2, 24H2, and 25H2.

Zero Trust Identity Security: 5 Ways This Framework Actually Stops Credential Theft

Stolen credentials caused 22% of breaches in 2025, making them the top attack vector. Zero Trust promises to fix this, but only when it's built around identity as the core principle. Here's how organizations can implement it properly.

Open Source PR Backlogs: Why Your GitHub Contribution Sits Unreviewed for a Year

A developer's Jellyfin pull request has been waiting over a year for merge despite two approvals, exposing a systemic crisis in open source maintenance. Queuing theory explains why backlogs grow exponentially, and 60% of maintainers have quit or considered quitting due to burnout.

Also Read

Samsung Foundry Hits 80% Yield on 4nm Chips

Samsung's 4nm chip manufacturing process has crossed the 80% yield threshold, signaling process maturity after six years of production. The milestone positions Samsung to compete more directly with TSMC for AI accelerator and automotive chip contracts.

5 Best VPNs for Gaming in 2026: Speed Tested and Ranked

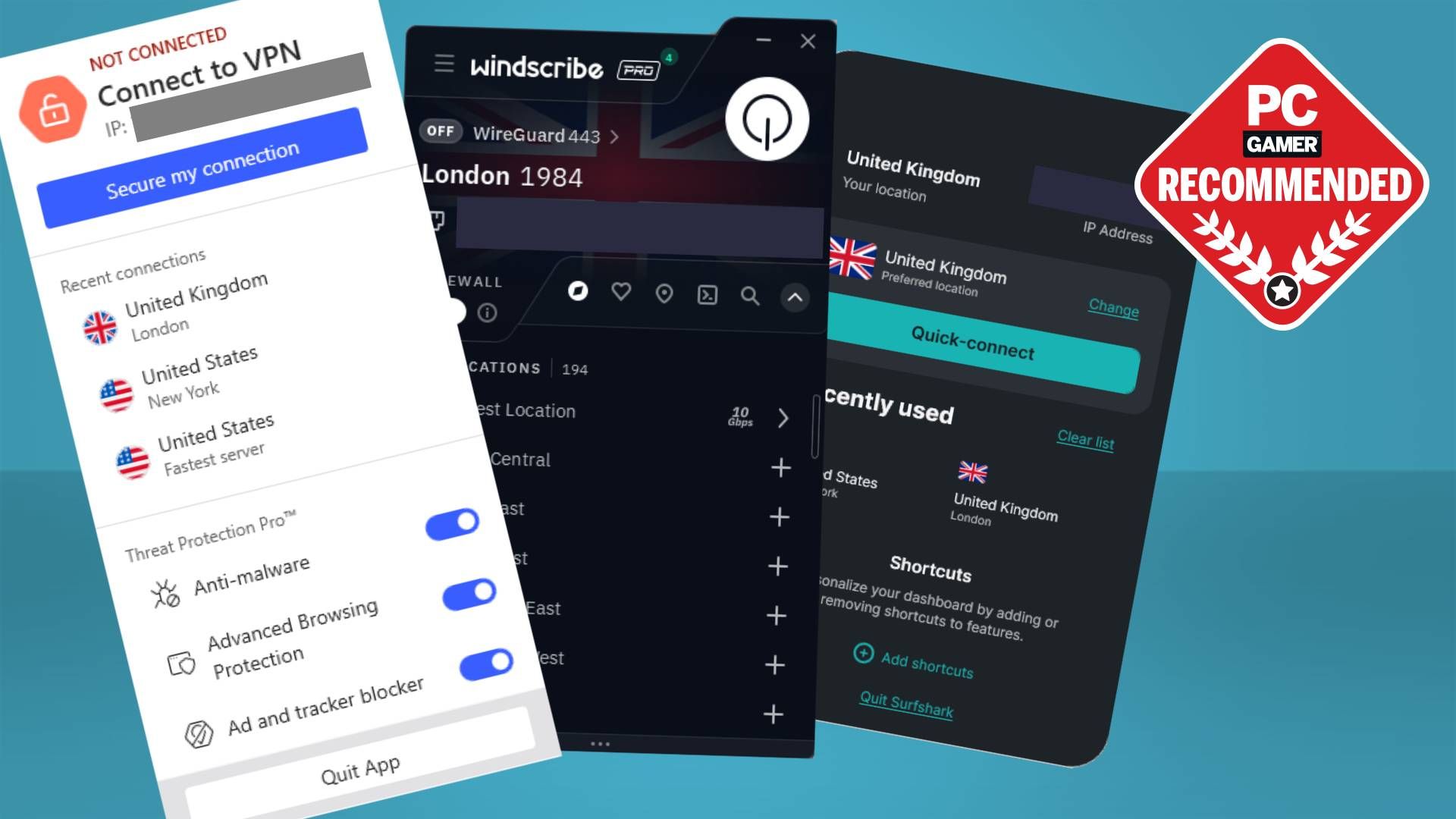

PC Gamer overhauled its VPN gaming guide with fresh benchmarks. NordVPN takes the overall crown, but Windscribe delivered the lowest ping in testing. Here's how each top pick performs for different use cases.

Why Affinity 2 Beats Photoshop for Most Users

Serif's Affinity suite went free after Canva's acquisition, offering Photoshop-level features without Adobe's $240/year subscription. For photographers, designers, and casual users, it's now the best alternative to both Photoshop and Canva.