Trump Admin Weighs Mandatory AI Model Vetting Before Release

Key Takeaways

- The proposed executive order would create an AI working group of tech executives and government officials to develop oversight procedures

- Anthropic's Mythos model, described as too dangerous for public release, appears to have triggered the policy reversal

- The system would grant government early access to models without blocking their release

A Sharp Reversal on AI Regulation

The Trump administration is considering an executive order that would establish government review of new AI models before they reach the public, according to a New York Times report citing unnamed U.S. officials. The proposed order would create an "AI working group" composed of tech executives and government officials to develop oversight procedures.

White House staff briefed leaders from Anthropic, Google, and OpenAI on the plans last week. If enacted, the policy would mark a dramatic shift from the administration's earlier position. On his first day back in office, Trump revoked a Biden-era executive order that addressed AI risks.

The timing may not be coincidental. David Sacks, who led the administration's AI deregulation push as AI czar, left the role in March. Since then, White House Chief of Staff Susie Wiles and Treasury Secretary Scott Bessent have taken more active roles in shaping AI policy.

How the Review System Would Work

The proposed approach resembles the UK's AI Security Institute model, where government bodies evaluate frontier models against safety benchmarks before and after deployment. According to officials who spoke with The New York Times, three agencies could oversee the review process: the NSA, the Office of the National Cyber Director, and the Director of National Intelligence.

One important detail: the system would grant the government early access to models without blocking their release. This isn't a gatekeeper model where companies need approval before launch. It's a preview system that gives federal agencies time to assess risks.


Anthropic's Mythos: The Catalyst

The catalyst for this policy reversal appears to be Anthropic's Mythos model. The company's marketing described Mythos as capable of finding thousands of critical software vulnerabilities, and Anthropic deemed it too dangerous for public release. That claim drew immediate government attention.

The Mythos situation comes at a tense moment in Anthropic's relationship with the federal government. The Pentagon designated Anthropic a supply chain risk after the company refused to remove guardrails on autonomous weapons and mass surveillance applications. A federal judge later called that designation "Orwellian."

The dispute stems from a collapsed $200 million Pentagon contract. Despite that conflict, the NSA has already used Mythos to assess vulnerabilities in government Microsoft software deployments. Other agencies remain cut off from Anthropic's tools.

The Bigger Picture for AI Companies

For OpenAI, Google, and Anthropic, pre-release government review creates a new compliance layer. Companies would need processes for sharing unreleased models with federal reviewers. They'd need legal frameworks for handling government feedback. And they'd need to decide how to respond if an agency raises objections.

The proposal also raises questions about smaller AI labs. Would the review requirement apply to all models, or only those above certain capability thresholds? The UK's approach focuses on "frontier" models, those at the cutting edge of capability. A similar scope limitation would spare most AI companies while targeting the handful building the most powerful systems.

Some analysts have questioned whether Mythos's capabilities match Anthropic's marketing claims. That skepticism matters here, and it cuts both ways. Marketing can inflate a model's capabilities, but if a company's own assessment of danger triggers mandatory review, there is also an incentive to downplay them. Either way, the government would need independent evaluation methods, not just company-provided documentation.

What Happens Next

The discussions are ongoing, and no executive order has been signed. The AI working group concept suggests the administration wants industry input before finalizing requirements. But the direction is clear: a government that revoked AI safety rules on its first day back in office is now building a pre-release review system.

The shift reflects a broader pattern. Frontier AI capabilities are advancing faster than many predicted. Models that can find software vulnerabilities, generate persuasive content, or assist with scientific research also create risks that cross into national security territory. The question was never whether government oversight would come, but what form it would take.

Frequently Asked Questions

Would the government be able to block AI model releases?

No. According to the current discussions, the system would grant government early access to models without blocking their release. This is a preview system, not an approval process.

Which agencies would review AI models?

Officials told The New York Times that the NSA, the Office of the National Cyber Director, and the Director of National Intelligence could oversee the review process.

Why did the Trump administration change its position on AI regulation?

The shift appears linked to Anthropic's Mythos model, which the company described as capable of finding thousands of software vulnerabilities and too dangerous for public release. Leadership changes may also have played a role, with AI czar David Sacks departing in March.

What is Anthropic's Mythos model?

Mythos is an AI model that Anthropic described as capable of finding thousands of critical software vulnerabilities. The company deemed it too dangerous for public release. The NSA has already used it to assess vulnerabilities in government Microsoft software deployments.

How does this compare to AI regulation in other countries?

The proposed approach resembles the UK's AI Security Institute model, where government bodies evaluate frontier models against safety benchmarks before and after deployment.


Source: Tom's Hardware

Manaal Khan

Tech & Innovation Writer

Related Articles

Browse all

Alienware AW2726DM Review: The $350 QD-OLED Gaming Monitor That Changes Everything

Dell's Alienware AW2726DM shatters the OLED gaming monitor price barrier at just $350, delivering 27-inch QHD resolution, 240Hz refresh rate, and Quantum Dot color that rivals monitors costing twice as much. This isn't an incremental price drop. It's a complete reset of what budget-conscious gamers can expect.

iPhone Fold Launch 2026: Apple's First Foldable Could Capture 19% Market Share Instantly

Apple's long-awaited foldable iPhone is finally coming, and analysts predict it'll rocket the company to third place in the foldable market behind Samsung and Huawei. The secret weapon? Some seriously clever material science that could solve the crease problem that's plagued every foldable phone so far.

FAA Approves Military Laser Weapons for Drone Defense: What the New Airspace Rules Mean for Border Security

The FAA has given the Pentagon full approval to use high-energy laser systems against drones in US airspace, ending a two-month standoff that started when lasers shot down party balloons mistaken for cartel drones. The decision comes after safety assessments concluded these weapons don't pose increased risk to civilian aircraft.

China Chip Subsidies Reach $142 Billion: 3.6x More Than US Spent on Semiconductor Manufacturing

A new CSIS report reveals China has poured $142 billion into semiconductor subsidies over the past decade, dwarfing US spending by a factor of 3.6. But here's the twist: despite this massive investment, Chinese chipmakers still lag years behind TSMC and struggle with abysmal yields at advanced nodes.

Also Read

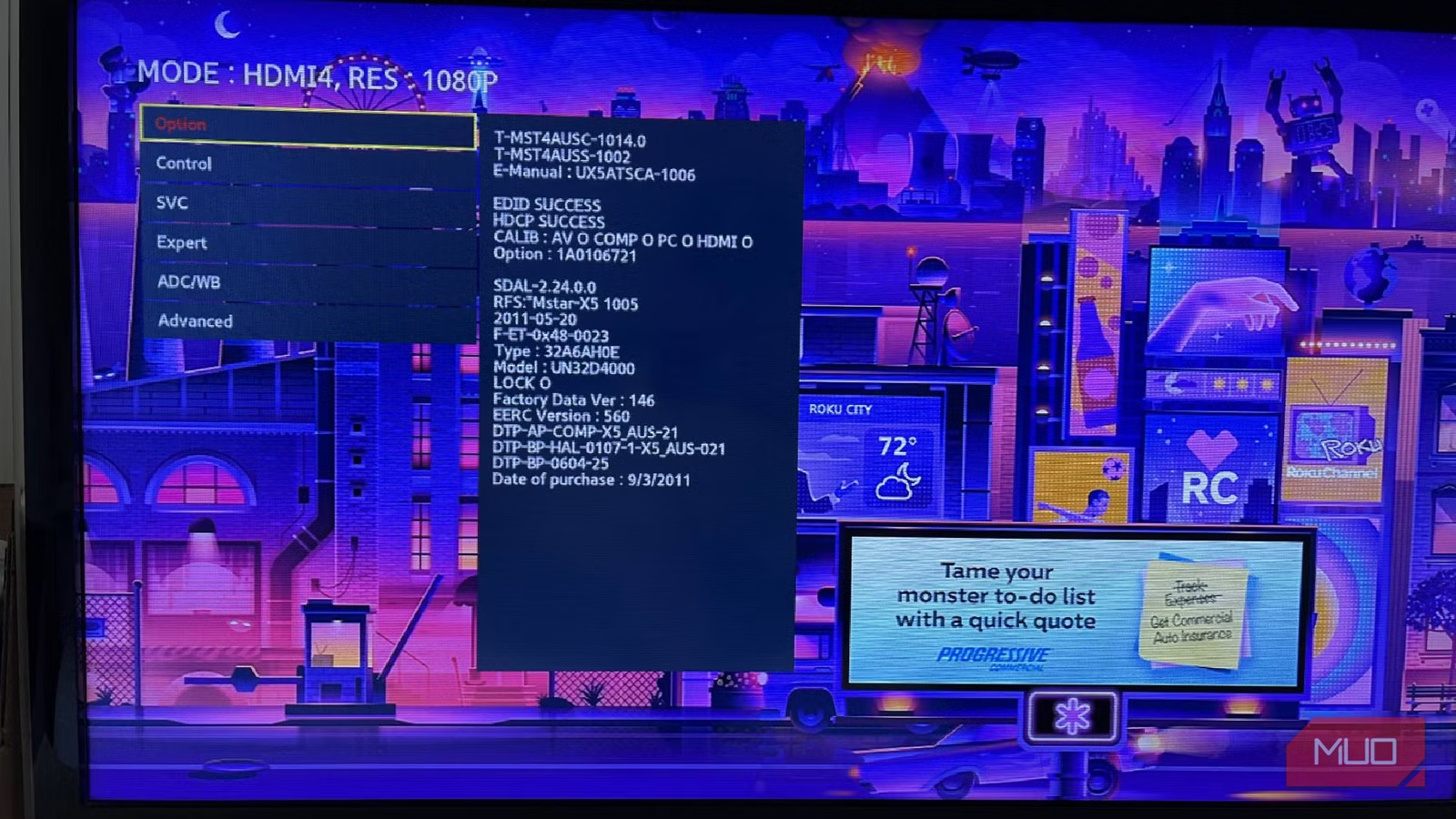

5 Things Samsung TV's Secret Service Menu Can Actually Do

Every Samsung TV has a hidden service menu that technicians use for deep diagnostics and calibration. With the right button combo, you can access factory reset options, panel settings, and diagnostic tools. But proceed with caution: one wrong setting can brick your TV.

Call of Duty 2026 Drops PS4 and Xbox One Support

Activision confirms this year's Call of Duty, rumored to be Modern Warfare 4, will not release on PlayStation 4 or Xbox One. The move marks the franchise's first full break from last-gen hardware since 2013, following Black Ops 7's disappointing fifth-place sales finish last year.

Why Customizing Android to Feel Like Pixel Wastes Your Time

A tech journalist spent hours installing third-party launchers, removing bloatware, and tweaking UI settings to make a Samsung Galaxy S24 Ultra feel like a Google Pixel. The conclusion: the effort wasn't worth it, and buying a Pixel would have been the smarter choice.