OpenAI Outage 2025: What It Means for AI-Dependent Teams

Key Takeaways

- OpenAI's partial outage affected ChatGPT, Codex, and API services, disrupting thousands of users across web and mobile

- This is OpenAI's second major outage in 2025, raising questions about reliability for enterprise adoption

- Business leaders should evaluate multi-vendor AI strategies to avoid single points of failure

According to [Inc42 Media](https://inc42.com/buzz/openai-outage-chatgpt-codex-down-for-thousands-of-users-globally/), OpenAI is facing a partial outage that has impacted operations of ChatGPT, the Codex coding platform, and API services, with thousands of users globally unable to access key features.

Read in Short

OpenAI's services went down for thousands of users globally, affecting ChatGPT conversations, login, voice mode, image generation, and the Codex coding platform. This is the company's second major outage in 2025. For businesses relying on OpenAI's API for production workflows, this incident is a reminder that AI infrastructure needs the same resilience planning as any critical system.

What Happened in the OpenAI Outage?

Reports started flooding in around 7:35 PM IST when Downdetector showed a sudden spike in complaints. At its peak, over 800 users reported problems accessing OpenAI's services. The outage wasn't a complete blackout. Instead, it was marked as a "partial outage" affecting a wide range of features.

- ChatGPT conversations failing or timing out

- Login issues across web and mobile apps

- Voice mode unavailable

- Image generation not working

- Codex (agentic coding platform) inaccessible

- API calls returning errors

India was among the most affected regions, with hundreds of complaints making up a significant share of total reports. Disruptions hit both web and mobile platforms, so users could not work around the problem by switching from one to the other.

OpenAI acknowledged it was "investigating" but has not disclosed what caused the problem. In a separate update, the company said it had applied a mitigation for an issue affecting ChatGPT Business users trying to upgrade plans or add seats. Whether the two issues are connected remains unclear.

Why Should Business Leaders Care About OpenAI Outages?

If your company has integrated ChatGPT or OpenAI's API into production workflows, this outage likely cost you money. Customer support bots went silent. Content generation pipelines stalled. Developers using Codex lost their AI pair programmer mid-task. The productivity gains you've been celebrating? They reverse the moment your AI vendor goes down.

The Real Cost of AI Downtime

Most businesses don't calculate the true cost of AI service outages. If your customer support team handles 500 tickets per hour with AI assistance, and that assistance disappears for 2 hours, you're looking at delayed responses, frustrated customers, and overworked agents. For companies using AI in revenue-generating workflows, hourly downtime costs can run into thousands of dollars.
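
The arithmetic behind that example can be sketched as a quick estimator. The 500 tickets per hour and 2-hour outage come from the scenario above; the share of throughput agents can sustain without AI and the cost per delayed ticket are hypothetical placeholders, so substitute your own figures.

```python
from dataclasses import dataclass


@dataclass
class OutageScenario:
    tickets_per_hour: int           # normal AI-assisted throughput
    outage_hours: float             # how long the AI service is unavailable
    manual_capacity_pct: int        # hypothetical: % of normal throughput agents sustain without AI
    cost_per_delayed_ticket: float  # hypothetical: USD cost of each delayed ticket (overtime, churn risk)


def delayed_tickets(s: OutageScenario) -> float:
    """Tickets that pile up because agents cannot keep pace without AI assistance."""
    expected = s.tickets_per_hour * s.outage_hours
    handled = expected * s.manual_capacity_pct / 100
    return expected - handled


def outage_cost(s: OutageScenario) -> float:
    """Estimated direct cost of the backlog created by the outage."""
    return delayed_tickets(s) * s.cost_per_delayed_ticket


scenario = OutageScenario(tickets_per_hour=500, outage_hours=2,
                          manual_capacity_pct=40, cost_per_delayed_ticket=5.0)
print(delayed_tickets(scenario))  # 600.0
print(outage_cost(scenario))      # 3000.0
```

Under these assumed inputs, a 2-hour outage leaves 600 tickets delayed at an estimated $3,000, before counting lost sales or customer churn.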

This isn't a one-time glitch. This is OpenAI's second major outage in 2025. In March, ChatGPT went down for thousands of users around 2 AM IST. OpenAI cited "elevated error rates" but never disclosed the root cause. A pattern is emerging, and it's one that enterprise buyers need to factor into their AI strategy.

How Does OpenAI's Reliability Compare to Other AI Providers?

Every cloud service experiences outages. The question is frequency, transparency, and recovery time. Here's how the major AI providers stack up based on publicly reported incidents and their communication during outages.

| Provider | Notable 2025 Outages | Transparency | Enterprise SLA Available |
|---|---|---|---|
| OpenAI (ChatGPT/API) | 2 major incidents | Limited - root cause rarely disclosed | Yes (Enterprise tier) |
| Anthropic (Claude) | 1 minor incident | Moderate - status page updates | Yes (API agreements) |
| Google (Gemini/Vertex) | 1 major incident | High - detailed postmortems | Yes (GCP SLAs apply) |
| AWS Bedrock | 0 major incidents | High - AWS-level transparency | Yes (standard AWS SLAs) |

OpenAI's enterprise tier does come with SLAs, but most businesses using ChatGPT Plus or the standard API tier don't have contractual uptime guarantees. If you're building mission-critical applications on OpenAI, you're accepting more risk than you might realize.

What Is the Business Risk of Single-Vendor AI Dependency?

The OpenAI outage highlights a growing problem: many companies have gone all-in on a single AI provider without building redundancy. This mirrors the infrastructure concentration risk that became painfully clear in a Cloudflare outage months ago, which took down ChatGPT, X, Spotify, and PayPal simultaneously.

The logic is understandable. Integrating one AI provider is hard enough. Building for multiple providers means maintaining multiple API integrations, prompt formats, and billing relationships. But the downside of concentration is exactly what we saw today: when OpenAI goes down, so does your business process.

✅ Pros of a Single AI Vendor

- Simpler integration and maintenance

- Consistent model behavior and outputs

- Easier prompt optimization

- Single vendor relationship to manage

❌ Cons of a Single AI Vendor

- Complete workflow stoppage during outages

- No leverage in pricing negotiations

- Vulnerability to vendor policy changes

- No fallback for region-specific issues

How Can Businesses Build AI Resilience?

Smart enterprise teams are starting to treat AI providers like they treat cloud infrastructure: with redundancy built in. Here's what a resilient AI strategy looks like in practice.

- Identify critical vs. non-critical AI workflows. Not every AI use case needs 99.99% uptime. Customer-facing chatbots? Critical. Internal content drafts? Less so.

- Build abstraction layers. Use middleware or internal APIs that can route requests to different AI providers. When OpenAI is down, automatically fall back to Claude or Gemini.

- Maintain prompt parity. Keep prompts optimized for at least two providers. This takes work but ensures you can switch quickly.

- Monitor AI service health. Don't wait for user complaints. Set up automated monitoring that alerts your ops team before Downdetector knows.

- Negotiate enterprise SLAs. If AI is business-critical, pay for enterprise tiers with contractual uptime guarantees and dedicated support.
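
The abstraction-layer and fallback ideas above can be sketched in a few lines. This is a minimal, provider-agnostic illustration under stated assumptions: each vendor is wrapped in a hypothetical adapter function that takes a prompt, returns text, and raises `ProviderError` on timeouts or 5xx responses. Wiring real adapters to the OpenAI, Anthropic, or Google SDKs is deliberately left out rather than guessed at.

```python
from typing import Callable, Sequence


class ProviderError(Exception):
    """Raised by an adapter when its vendor call fails (timeout, 5xx, rate limit, etc.)."""


# A provider is a (name, adapter) pair; the adapter maps a prompt to completion text.
Provider = tuple[str, Callable[[str], str]]


def complete_with_fallback(prompt: str, providers: Sequence[Provider]) -> tuple[str, str]:
    """Try providers in priority order and return (provider_name, text) from the first success."""
    errors: list[str] = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except ProviderError as exc:
            errors.append(f"{name}: {exc}")  # remember why this provider failed, then try the next
    raise ProviderError("all providers failed: " + "; ".join(errors))


# Hypothetical stub adapters standing in for real SDK wrappers.
def openai_down(prompt: str) -> str:
    raise ProviderError("503 Service Unavailable")


def claude_ok(prompt: str) -> str:
    return f"[claude] {prompt}"


name, text = complete_with_fallback("Summarise ticket #123",
                                    [("openai", openai_down), ("claude", claude_ok)])
print(name, "->", text)  # claude -> [claude] Summarise ticket #123
```

Passing the priority order in as a list, rather than hard-coding it, lets you demote a provider from configuration during an incident. A production version would also add per-provider timeouts and log which provider served each request.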

The investment in redundancy might seem like overkill today. But as AI becomes more embedded in core business processes, the cost of downtime will only grow. Better to build resilience now than scramble during the next outage.

What Should You Do Right Now?

If you're reading this while your ChatGPT integrations are failing, here's your immediate action plan.

- Check OpenAI's status page (status.openai.com) for official updates

- Communicate with affected teams that this is a vendor issue, not an internal failure

- Document which workflows were impacted and for how long

- If you have enterprise support, open a ticket to establish a paper trail

- After recovery, schedule a meeting to discuss AI redundancy strategy

This outage will resolve; outages always do. But the conversation about AI infrastructure resilience shouldn't end when the services come back online.

The Bigger Picture: AI Infrastructure Is Still Immature

We're in the early innings of enterprise AI adoption. The infrastructure that powers these services is scaling faster than anyone predicted. OpenAI went from a research lab to processing hundreds of millions of queries daily in just a few years. Growing pains are inevitable.

“The companies that will win in the AI era aren't just the ones adopting AI fastest. They're the ones building sustainable, resilient AI operations that work even when things go wrong.”

— Enterprise AI Strategy Framework, 2025

For business leaders, this means adjusting expectations. AI isn't magic. It's infrastructure. And like all infrastructure, it requires redundancy planning, vendor diversification, and operational maturity. The OpenAI outage is a reminder that we're not there yet, but we need to start building toward it.

Logicity's Take

We build AI-powered workflows for clients using the Claude API, OpenAI, and open-source models. Today's outage reinforced something we've been telling clients for months: never build production systems on a single AI provider without a fallback. In our experience shipping AI agents and n8n automation workflows, the abstraction-layer approach works. We typically build internal routing logic that can switch between Claude and OpenAI based on availability and cost. It adds roughly 20% to initial development time but saves far more during incidents like this. For Indian startups especially, where customer support windows are built around IST hours, an outage at 7:35 PM IST can hit right when volume peaks. If your AI chatbot goes down during that window, you're not just losing productivity; you're losing customers who expected instant responses. Our advice: treat AI vendors the way you treat payment gateways. You wouldn't run a business on a single payment processor without a backup. AI infrastructure deserves the same respect.
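
Routing "based on availability and cost" can be reduced to a simple rule: among providers that currently pass health checks, pick the cheapest. The sketch below is an illustration, not a description of Logicity's implementation. It assumes you already track health (for example, with your own monitoring) and per-provider prices; the provider names and prices in the example are hypothetical placeholders, not real rates.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ProviderStatus:
    name: str
    healthy: bool             # result of your own recent health checks
    usd_per_1k_tokens: float  # hypothetical blended price; use your contracted rates


def pick_provider(statuses: list[ProviderStatus]) -> str:
    """Return the name of the cheapest healthy provider; raise if none are healthy."""
    healthy = [s for s in statuses if s.healthy]
    if not healthy:
        raise RuntimeError("no healthy AI provider available")
    return min(healthy, key=lambda s: s.usd_per_1k_tokens).name


statuses = [
    ProviderStatus("openai", healthy=False, usd_per_1k_tokens=1.0),  # down during the incident
    ProviderStatus("claude", healthy=True, usd_per_1k_tokens=2.0),
    ProviderStatus("self-hosted", healthy=True, usd_per_1k_tokens=3.0),
]
print(pick_provider(statuses))  # claude
```

When OpenAI recovers and its health flag flips back to `True`, the same rule sends traffic back to it automatically if it is the cheapest option.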

Frequently Asked Questions

How long do OpenAI outages typically last?

Based on recent incidents, OpenAI outages typically resolve within 2-4 hours. The March 2025 outage was resolved within a few hours, and partial outages like today's tend to recover faster as specific features come back online incrementally. However, full recovery with stable performance can take longer.

Does OpenAI offer refunds or credits for outages?

OpenAI's enterprise tier includes SLAs with potential credits for downtime. Standard API and ChatGPT Plus users don't have contractual uptime guarantees, so refunds aren't typically offered for partial outages. Check your specific agreement if you're on an enterprise plan.

What's the cost of building AI redundancy with multiple providers?

Building multi-provider AI redundancy typically adds 15-30% to development costs and requires maintaining prompt libraries for each provider. However, the operational cost savings during outages often justify this investment within 12-18 months for companies with business-critical AI workflows.
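
The payback claim comes down to simple division once you plug in your own numbers. The sketch below assumes failover avoids the full hourly cost of downtime and that maintaining a second prompt library has a fixed monthly cost; the 20% overhead sits inside the 15-30% range above, while every other input is a hypothetical placeholder.

```python
import math


def payback_months(base_dev_cost: float, overhead_pct: float,
                   outage_hours_per_month: float, cost_per_outage_hour: float,
                   monthly_maintenance: float) -> int:
    """Months until avoided downtime losses repay the extra cost of building redundancy."""
    extra_build_cost = base_dev_cost * overhead_pct / 100
    # Assumes failover avoids the entire hourly cost of each outage.
    net_monthly_savings = outage_hours_per_month * cost_per_outage_hour - monthly_maintenance
    if net_monthly_savings <= 0:
        raise ValueError("redundancy never pays back under these inputs")
    return math.ceil(extra_build_cost / net_monthly_savings)


print(payback_months(base_dev_cost=100_000, overhead_pct=20,
                     outage_hours_per_month=1, cost_per_outage_hour=2_000,
                     monthly_maintenance=500))  # 14
```

With lower outage exposure, the same formula shows the payback period stretching well past 18 months, which is a useful sanity check before committing to a second provider.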

Should we switch from OpenAI to a more reliable provider?

Switching providers entirely isn't necessarily the answer. All major AI providers experience occasional outages. The better strategy is building redundancy so you can automatically route requests to alternate providers during incidents. This keeps you on OpenAI for normal operations while ensuring business continuity.

How do we monitor AI service health proactively?

Set up automated monitoring that pings your AI endpoints every few minutes and alerts your ops team when response times spike or errors occur. Tools like Better Uptime, Datadog, or custom n8n workflows can detect issues before they impact users. Also subscribe to OpenAI's status page for official updates.
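
A bare-bones version of that monitoring loop can be written with Python's standard library. It is a sketch under assumptions: the URL you pass in is a hypothetical health endpoint on your own AI gateway (calling a vendor API directly would also need authentication), and the alert is a print statement you would replace with a pager, Slack, or n8n webhook call.

```python
import time
import urllib.request
from collections import deque


def probe(url: str, timeout_s: float = 10.0) -> tuple[bool, float]:
    """Send one GET request; return (succeeded, latency_in_seconds)."""
    start = time.monotonic()
    try:
        with urllib.request.urlopen(url, timeout=timeout_s) as resp:
            ok = 200 <= resp.status < 300
    except OSError:  # URLError, HTTPError (4xx/5xx) and socket timeouts all derive from OSError
        ok = False
    return ok, time.monotonic() - start


class HealthMonitor:
    """Signal an alert after `threshold` consecutive bad checks (failed or too slow)."""

    def __init__(self, threshold: int = 3, max_latency_s: float = 5.0):
        self.threshold = threshold
        self.max_latency_s = max_latency_s
        self.recent = deque(maxlen=threshold)  # keeps only the last `threshold` results

    def record(self, ok: bool, latency_s: float) -> bool:
        """Record one check; return True if every check in the window was bad."""
        bad = (not ok) or latency_s > self.max_latency_s
        self.recent.append(bad)
        return len(self.recent) == self.threshold and all(self.recent)


def run(url: str, interval_s: float = 180.0) -> None:
    """Probe `url` every `interval_s` seconds; the alert repeats each interval while unhealthy."""
    monitor = HealthMonitor()
    while True:
        ok, latency = probe(url)
        if monitor.record(ok, latency):
            print(f"ALERT: {url} unhealthy for {monitor.threshold} consecutive checks")
        time.sleep(interval_s)
```

Requiring several consecutive bad checks before alerting trades a few minutes of detection delay for far fewer false alarms from a single slow response.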

Need Help Implementing This?

Logicity helps businesses build resilient AI infrastructure with multi-provider redundancy, automated failover, and production-grade monitoring. Whether you're starting your AI journey or hardening existing workflows, we can help you avoid the pain of single-vendor dependency. Get in touch to discuss your AI resilience strategy.

Source: Inc42 Media / Lokesh Choudhary

Huma Shazia

Senior AI & Tech Writer

Related Articles

Browse all

Indian Startup IPOs 2026: ₹47,000 Cr Pipeline Reshapes Exit Strategy

India's startup IPO market is set for its biggest year yet, with unicorns like Flipkart, Zepto, and OYO planning to raise over ₹47,000 Cr. But public market investors are demanding profitability over hype, forcing founders to rethink their approach to going public.

Griffin Retreat 2026: $200Bn Founder Network Lessons

Inc42's Griffin Retreat gathered 100 founders representing $200 billion in valuation for closed-door strategy sessions. Here's what business leaders can learn from India's most exclusive founder gathering about building networks, scaling companies, and navigating the shift from growth to institutional building.

Loopworm Insect Protein: How This Bengaluru Startup Cuts Biologics Costs by 80%

Loopworm is using silkworms and black soldier flies to manufacture protein biologics and animal feed ingredients, potentially slashing pharmaceutical manufacturing costs to one-fifth of traditional methods. The startup reported ₹4.5 Cr revenue in FY25 and projects ₹15-18 Cr for FY26.

TraqCheck $8 Million Series A: AI Recruitment Agents Nina and Trace Target European Expansion

Indian enterprise tech startup TraqCheck just closed an $8 million Series A round to scale its AI-powered recruitment agents across Europe. The company, which started with background verification, is now building autonomous agents that source candidates, initiate conversations, and connect them directly with hiring managers.

Also Read

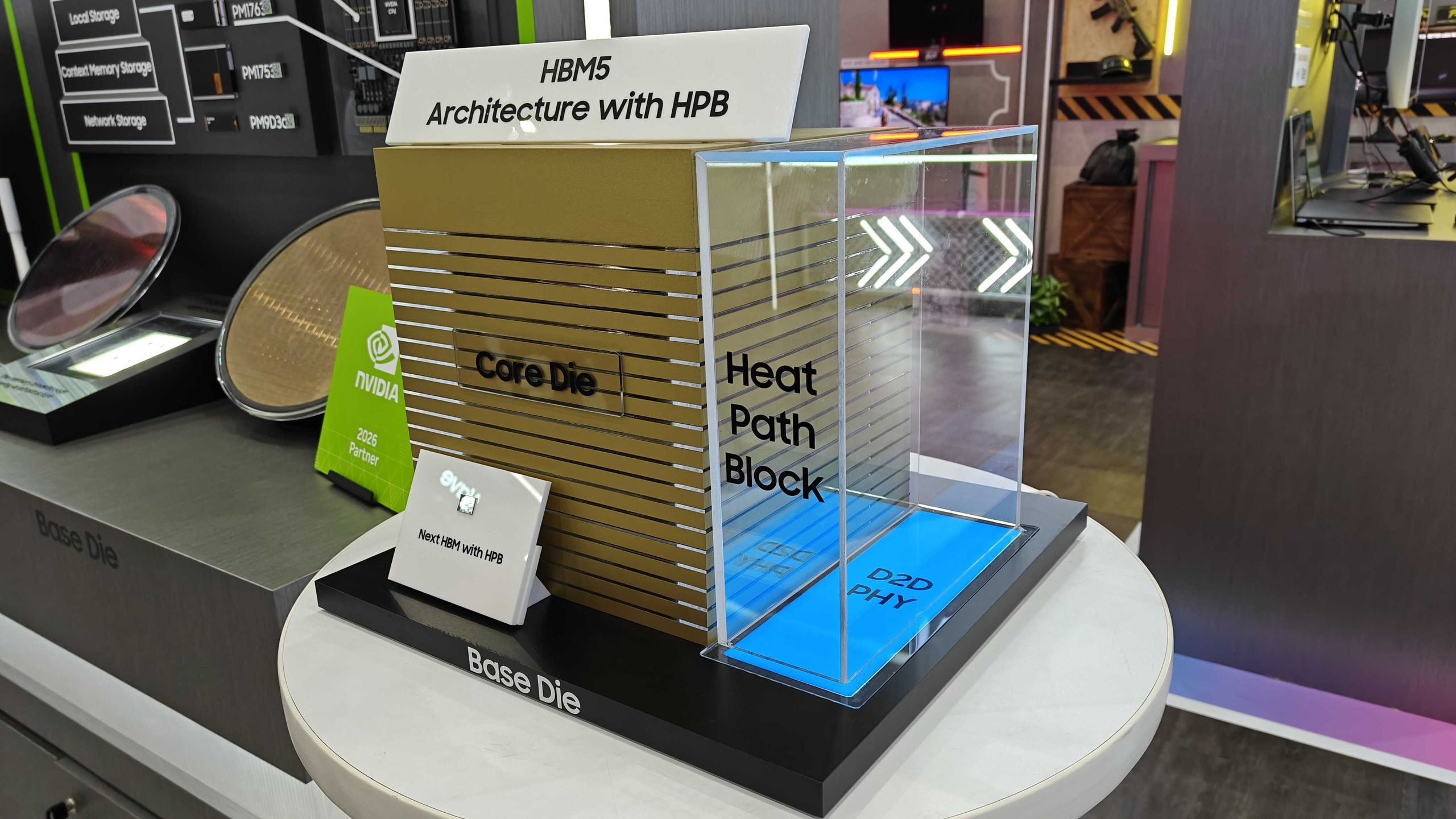

Samsung Shows HBM5 Mockup With Heat Path Block Cooling

Samsung unveiled its first physical HBM5 memory mockup at Computex 2026, featuring a new thermal design called Heat Path Block. The company confirmed it will manufacture HBM5's base die on its 2nm process, setting up a direct thermal engineering competition with SK hynix.

CISA Warns of Active Exploits Targeting Android, Linux Flaws

The U.S. Cybersecurity and Infrastructure Security Agency added two vulnerabilities to its Known Exploited Vulnerabilities catalog. Federal agencies must patch by June 5, 2026. The flaws affect Android 14-16 and multiple Linux kernel versions.

6 Ways to Stay Cool Indoors This Summer Without Breaking the Bank

As summer electricity bills hit a projected 12-year high of $784, homeowners are rethinking indoor cooling strategies. From supercooling techniques to strategic window management, here's how to survive the heat without destroying your budget.