Anthropic Adds 'Dreaming' to Claude Agents for Error Learning

Key Takeaways

- Dreaming analyzes up to 100 past sessions to build organized memory from patterns and errors

- Outcomes lets a separate evaluator grade agent outputs against fixed criteria, with up to 20 revision attempts

- Multiagent Orchestration supports up to 20 specialized agents and up to 25 threads running in parallel

How Dreaming Works

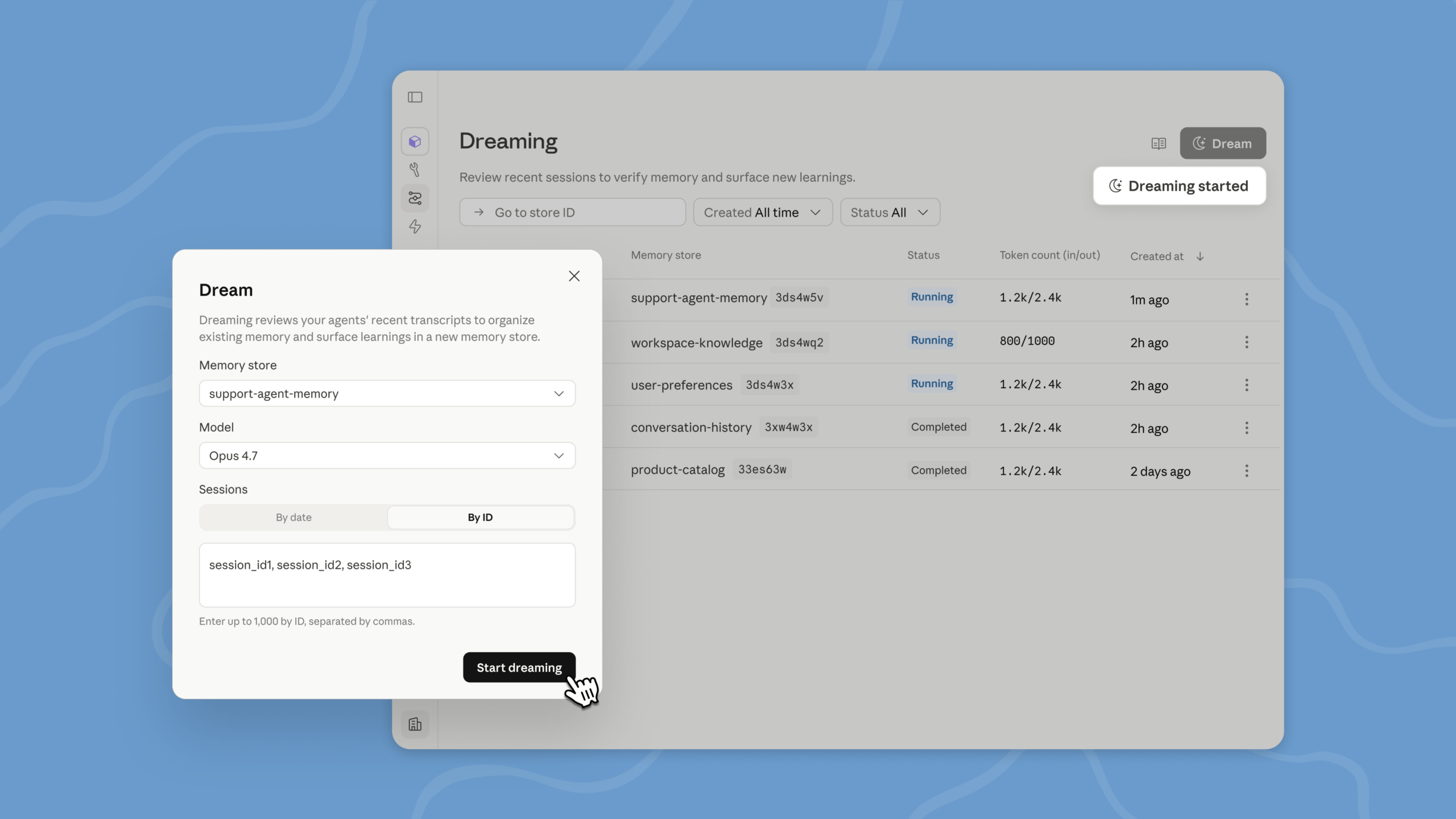

Anthropic launched its Claude Managed Agents platform in April. Now it is adding Dreaming, a system that lets AI agents review their own history and learn from it. The feature runs as an asynchronous job. It reads an existing memory store and can process up to 100 past sessions.

The process removes duplicate and outdated entries, then builds a new, organized memory. The original memory store stays intact. In effect, the agent periodically reflects on what went wrong and what worked.
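The cleanup step can be illustrated with a small sketch. This is not Anthropic's implementation or API: the `MemoryEntry` type, its `key` and `updated` fields, and the `consolidate` function are hypothetical names invented here, and "keep the newest entry per topic" is only one plausible reading of removing duplicates and outdated entries.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class MemoryEntry:
    key: str      # hypothetical topic identifier
    text: str     # what the agent remembered
    updated: int  # hypothetical timestamp; larger means newer


def consolidate(entries: List[MemoryEntry]) -> List[MemoryEntry]:
    """Build a new list with one entry per key, keeping the newest.

    The input list is never modified, mirroring the article's point
    that the original memory stays intact.
    """
    latest: Dict[str, MemoryEntry] = {}
    for entry in entries:
        current = latest.get(entry.key)
        if current is None or entry.updated > current.updated:
            latest[entry.key] = entry
    return sorted(latest.values(), key=lambda e: e.key)


original = [
    MemoryEntry("csv-export", "Use comma delimiter", 1),
    MemoryEntry("csv-export", "Use semicolon delimiter for EU locale", 3),
    MemoryEntry("retry", "Back off after HTTP 429", 2),
    MemoryEntry("retry", "Back off after HTTP 429", 2),  # exact duplicate
]
organized = consolidate(original)
```

Here `organized` holds two entries while `original` still holds all four, so the old store could be kept for comparison or rollback.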

Dreaming supports Claude Opus 4.7 and Claude Sonnet 4.6. Billing follows standard API token pricing. Developers can access the Dreaming interface through the Claude Console, where they select a memory store, pick a model, specify which sessions to analyze, and start the process.

Dreaming is currently available as a research preview. Developers can request access through a form on Anthropic's website.

Outcomes: A Separate Grader for Agent Work

Outcomes is moving from research preview to public beta. The feature lets developers define a rubric with specific success criteria. For example: 'The CSV file contains a price column with numerical values.'
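To make the example criterion concrete, here is a minimal sketch of what it would mean as a deterministic check. The Outcomes grader is a separate evaluator, not a script like this; the function name `price_column_is_numeric` and the rule that an empty file fails are assumptions made for illustration.

```python
import csv
import io


def price_column_is_numeric(csv_text: str) -> bool:
    """Return True if the CSV has a 'price' column whose values all parse as numbers."""
    reader = csv.DictReader(io.StringIO(csv_text))
    if reader.fieldnames is None or "price" not in reader.fieldnames:
        return False
    rows = list(reader)
    if not rows:
        # Assumption: a header with no data rows does not satisfy the criterion.
        return False
    for row in rows:
        try:
            float(row["price"])
        except (TypeError, ValueError):
            # TypeError covers a missing cell (None); ValueError covers non-numeric text.
            return False
    return True
```

A criterion phrased this precisely is easy to grade either way, which is the point of writing rubric items as specific, checkable statements.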

A separate evaluator, called a grader, then checks the agent's output against these criteria. The grader runs in its own context window, isolated from the agent's reasoning. This separation matters. It prevents the grader from being influenced by how the agent justified its work.

If the output does not meet the criteria, the grader identifies the gaps and the agent revises its work. By default, the agent can retry up to three times; the limit can be raised to a maximum of 20 attempts.
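The grade-and-revise cycle can be sketched as a simple loop. This is a hedged illustration, not the platform's API: `run_with_grader`, `produce`, and `grade` are hypothetical names, and the sketch assumes the limit counts revisions after the first attempt, which the source does not spell out.

```python
from typing import Callable, List, Tuple

DEFAULT_RETRIES = 3   # default from the article
MAX_RETRIES = 20      # maximum from the article


def run_with_grader(
    produce: Callable[[List[str]], object],
    grade: Callable[[object], List[str]],
    retries: int = DEFAULT_RETRIES,
) -> Tuple[object, List[str], int]:
    """Run produce, grade the result, and revise until no gaps remain
    or the retry budget is spent.

    Returns (final output, remaining gaps, revisions used).
    """
    if not 0 <= retries <= MAX_RETRIES:
        raise ValueError(f"retries must be between 0 and {MAX_RETRIES}")
    output = produce([])  # first attempt, no feedback yet
    revisions = 0
    while True:
        gaps = grade(output)  # the grader sees only the output
        if not gaps or revisions == retries:
            return output, gaps, revisions
        revisions += 1
        output = produce(gaps)  # the agent revises using the reported gaps


def toy_agent(gaps: List[str]) -> List[str]:
    # Toy stand-in for an agent: adds whatever columns were reported missing.
    return ["item"] + gaps


def toy_grader(output: List[str]) -> List[str]:
    # Toy stand-in for a grader: reports required columns that are absent.
    return [col for col in ("price",) if col not in output]


result, remaining, used = run_with_grader(toy_agent, toy_grader)
```

Note that `grade` receives only the output, never the agent's reasoning, which loosely mirrors the isolation between grader and agent described above.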

Multiagent Orchestration: Parallel Specialized Agents

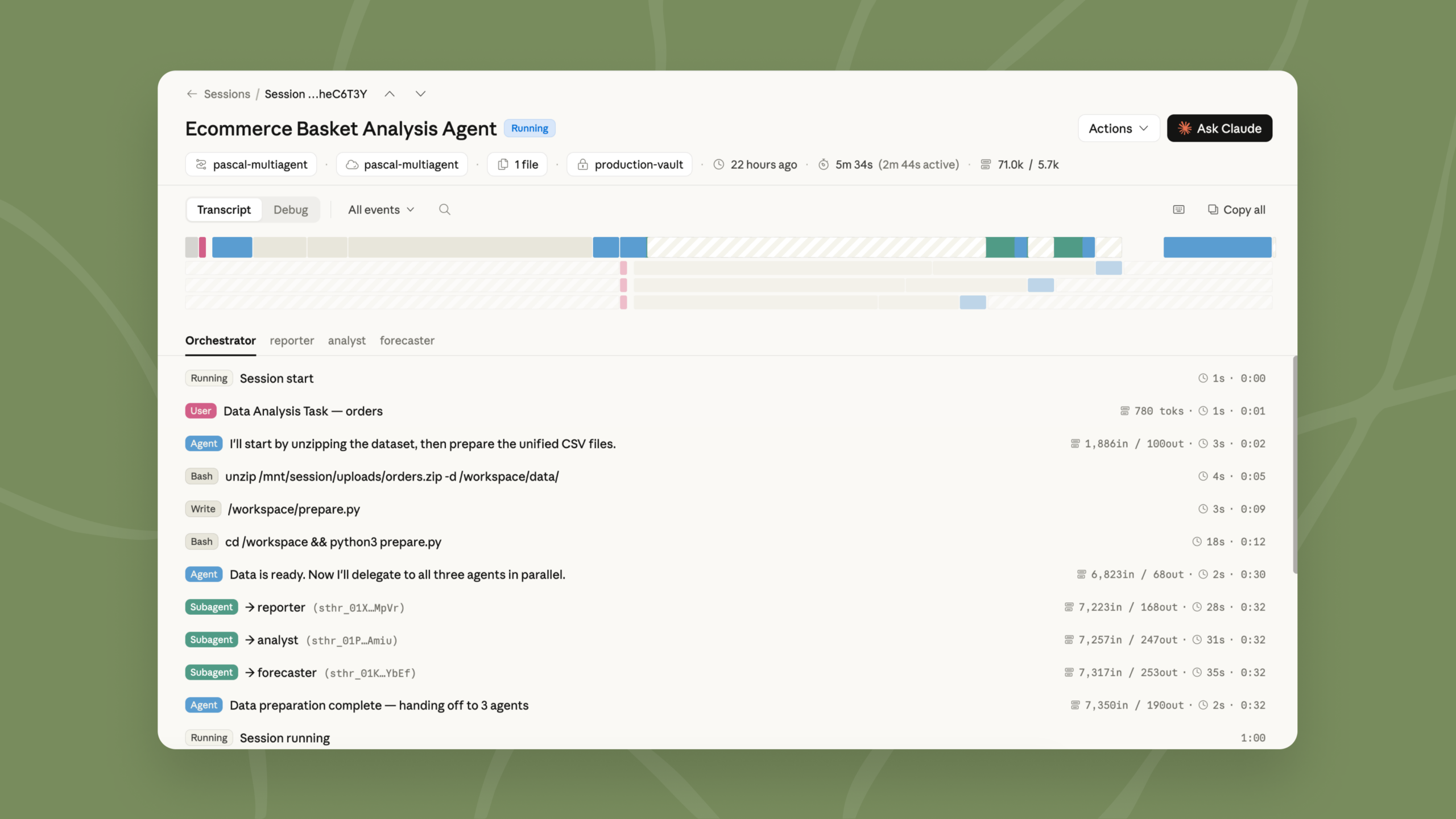

Multiagent Orchestration is also moving to public beta. In this setup, a lead agent, called a coordinator, manages several specialized agents. Each agent runs in its own thread with an isolated context and has its own model, system prompt, and dedicated tools. All agents share the same file system.

The coordinator can hand out tasks in parallel. For instance, it might delegate code review to one agent and test creation to another, running both at the same time. The system supports up to 20 different agents and a maximum of 25 threads running simultaneously.
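The delegation pattern resembles a fan-out over a bounded thread pool. The sketch below uses Python's standard `concurrent.futures` to show the idea; the `Agent` class, the `coordinate` function, and the toy handlers are hypothetical and do not represent the Managed Agents API. Only the two limits (20 agents, 25 threads) come from the article.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass
from typing import Callable, Dict

MAX_AGENTS = 20   # limit from the article
MAX_THREADS = 25  # limit from the article


@dataclass
class Agent:
    name: str
    system_prompt: str             # each agent has its own prompt
    handle: Callable[[str], str]   # hypothetical stand-in for running the agent


def coordinate(agents: Dict[str, Agent], tasks: Dict[str, str]) -> Dict[str, str]:
    """Send each task to its named agent in parallel and collect the results."""
    if len(agents) > MAX_AGENTS:
        raise ValueError(f"at most {MAX_AGENTS} agents are supported")
    unknown = set(tasks) - set(agents)
    if unknown:
        raise KeyError(f"no agent registered for: {sorted(unknown)}")
    workers = max(1, min(MAX_THREADS, len(tasks)))
    with ThreadPoolExecutor(max_workers=workers) as pool:
        futures = {
            name: pool.submit(agents[name].handle, task)
            for name, task in tasks.items()
        }
        # result() blocks until that agent's task has finished
        return {name: future.result() for name, future in futures.items()}


agents = {
    "reviewer": Agent("reviewer", "Review code.", lambda t: f"reviewed {t}"),
    "tester": Agent("tester", "Write tests.", lambda t: f"tests for {t}"),
}
results = coordinate(agents, {"reviewer": "parser.py", "tester": "parser.py"})
```

Both toy agents receive the same file name, echoing the article's example of code review and test creation running at the same time against shared files.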

What This Means for Agent Reliability

These three features address a core problem with AI agents: they make mistakes, and they do not remember them. Dreaming tackles the memory problem. Outcomes adds a quality check. Multiagent Orchestration lets complex tasks be broken into pieces handled by specialists.

Memory is part of the same public beta. It works alongside these features as part of Anthropic's Managed Agents platform. Full documentation is available on Anthropic's website and in the Claude Console.

Frequently Asked Questions

What is Claude's Dreaming feature?

Dreaming is a process that reviews up to 100 past agent sessions, identifies patterns like recurring errors or successful workflows, and builds an organized memory that agents can reference in future sessions.

Which Claude models support Dreaming?

Dreaming currently supports Claude Opus 4.7 and Claude Sonnet 4.6. Billing follows standard API token pricing.

How does Outcomes work in Claude Managed Agents?

Developers define success criteria in a rubric. A separate evaluator, called a grader, checks the agent's output against those criteria in its own context window. If the output falls short, the agent revises its work, up to three times by default and 20 times at most.

What are the limits for Multiagent Orchestration?

The system supports up to 20 different specialized agents and a maximum of 25 threads running simultaneously. All agents share the same file system.

How can developers access the Dreaming feature?

Dreaming is available as a research preview. Developers can request access through a form on Anthropic's website. Outcomes and Multiagent Orchestration are in public beta.

Source: The Decoder / Matthias Bastian

Manaal Khan

Tech & Innovation Writer
