$200 Nvidia V100 Hack Outperforms Modern GPUs in AI

Key Takeaways

- A $100 Tesla V100 with a $100 adapter outperforms an RTX 3060 and RX 7800 XT in LLM inference

- The V100 achieved 130 tokens per second versus 90 for the RX 7800 XT in Ollama benchmarks

- Older data center GPUs with high VRAM offer better value for local AI workloads than modern consumer cards

Server GPU meets consumer PC

Running large language models locally requires serious VRAM. Modern consumer GPUs with 16GB or more cost upward of $500. But YouTuber Hardware Haven found a workaround that costs $200 and outperforms cards three times its price.

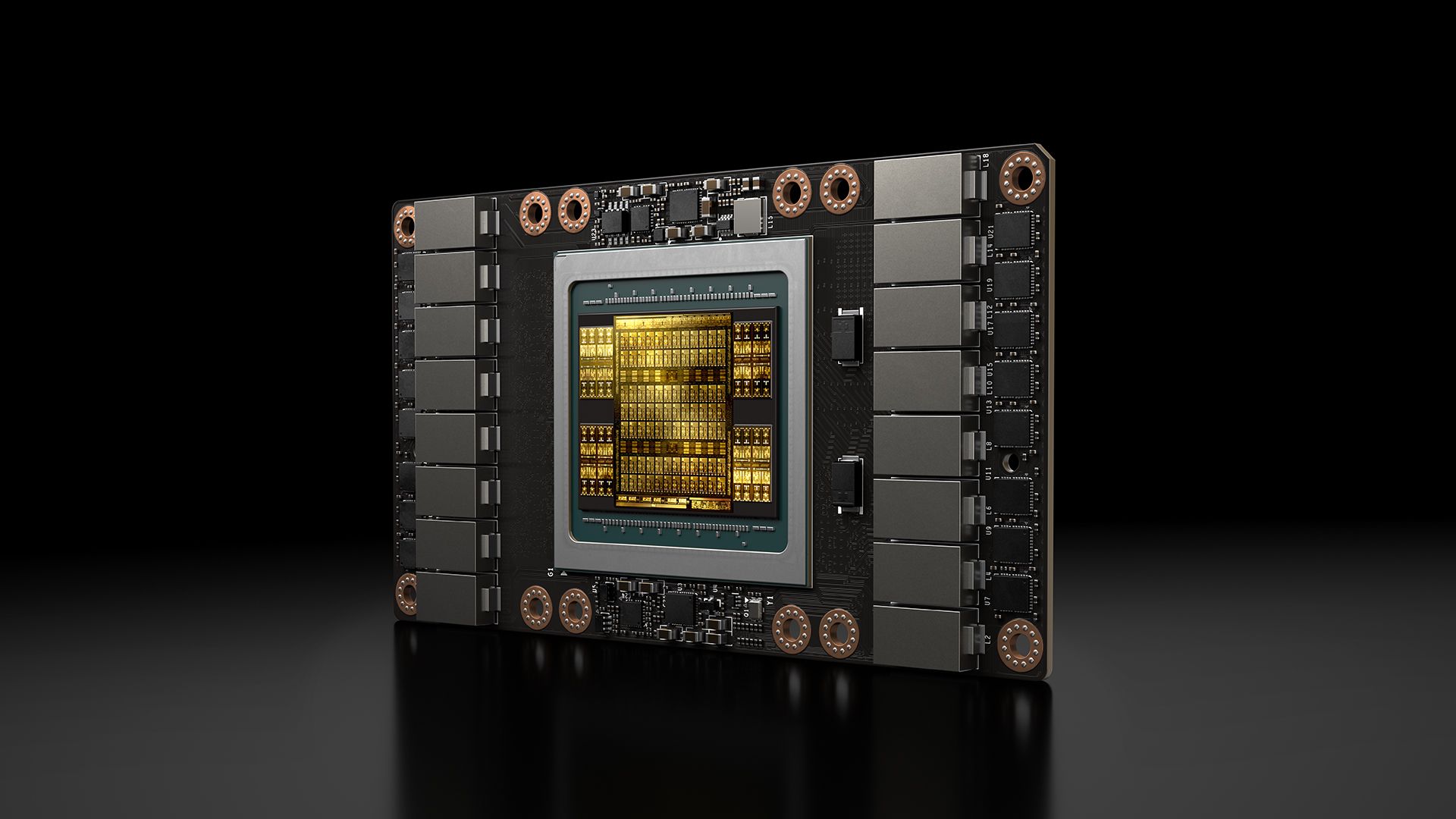

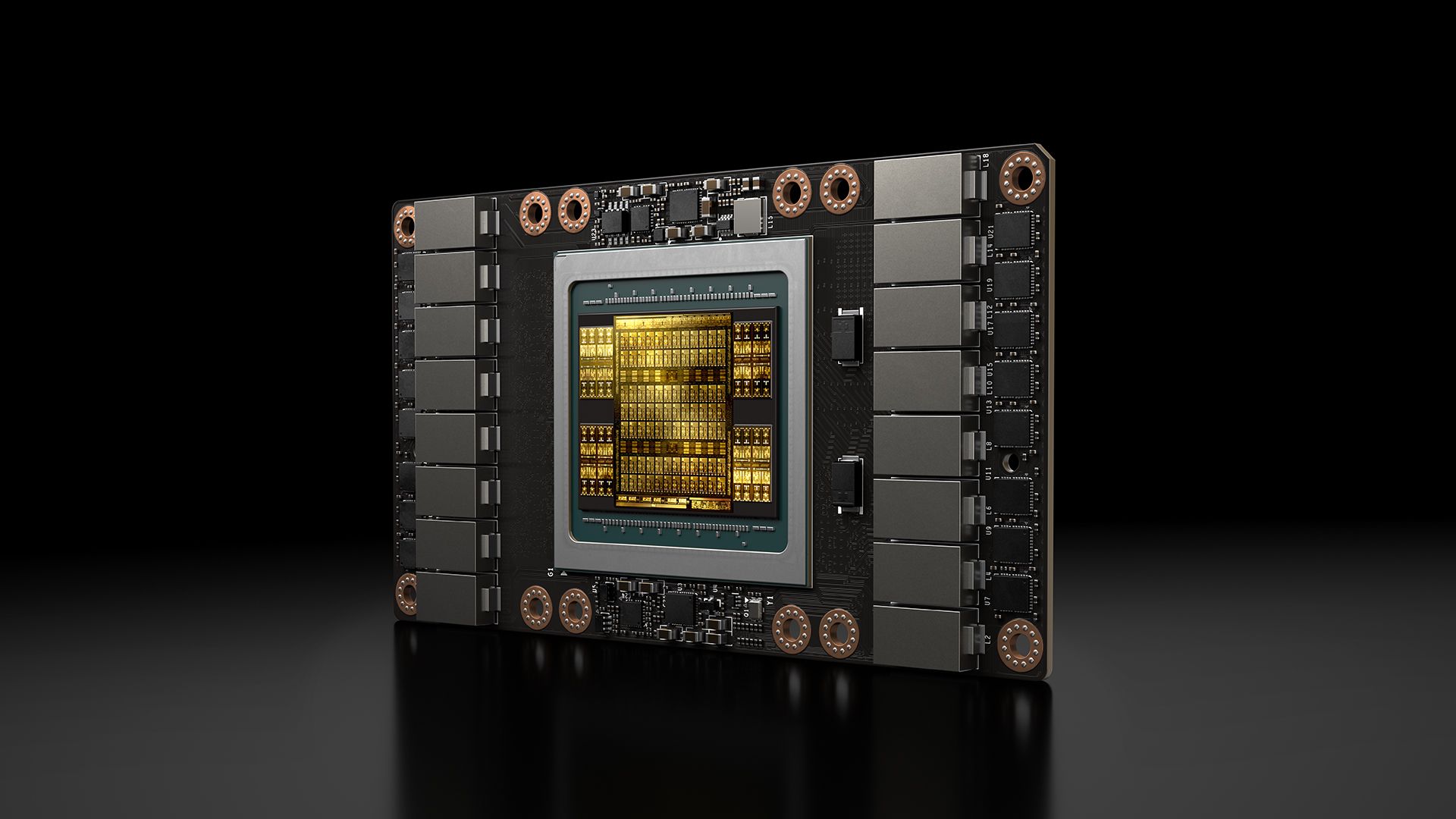

The project starts with an Nvidia Tesla V100, a data center GPU from 2017. This isn't a standard PCIe card. It uses the SMX2 socket, a mezzanine connector designed for rack-scale server deployments. The GPU mounts flat against a specialized baseboard and screws down like a CPU.

Hardware Haven bought the V100 for $100 on the secondhand market. A matching SMX-to-PCIe x16 adapter cost another $100. Total investment: $200 for a GPU with 16GB of HBM2 memory and 900 GB/s of bandwidth.

Custom cooling required

The adapter card doesn't include cooling. Server GPUs rely on chassis airflow, not onboard fans. The V100 itself is just a heatsink mounted on a PCB.

Hardware Haven solved this by designing a custom air duct and 3D printing it. An 80mm Noctua fan attaches to one end, drawing fresh air across the heatsink. The adapter provides two 8-pin PCIe power connectors and three 4-pin PWM headers for fan control.

One limitation: the adapter lacks a secondary SMX socket for NVLink, Nvidia's high-speed GPU interconnect. Adapters with NVLink support exist but cost significantly more.

Benchmark results

With the V100 installed in a standard Ryzen system, Hardware Haven ran LLM inference tests. One catch: the V100 has no display output. You need a CPU with integrated graphics to actually use the computer.

Using Ollama with the gpt-oss-20b model, the V100 generated 130 tokens per second. The YouTuber's daily driver, an AMD Radeon RX 7800 XT, managed only 90 tokens per second. Both cards have 16GB of VRAM. The 7800 XT is newer with supposedly more efficient silicon. But Nvidia's CUDA ecosystem and software support give it a clear edge in AI workloads.

To test Nvidia versus Nvidia, Hardware Haven compared the V100 against an RTX 3060 12GB. Running Google's gemma4:e4b model, the V100 hit 108 tokens per second. The RTX 3060 managed 76 tokens per second.

| GPU | VRAM | Price | Tokens/sec (gpt-oss-20b) | Power Draw |

|---|---|---|---|---|

| Nvidia V100 (modded) | 16GB HBM2 | $200 | 130 | 293W |

| AMD RX 7800 XT | 16GB GDDR6 | ~$450 | 90 | N/A |

| Nvidia RTX 3060 12GB | 12GB GDDR6 | ~$300 | 76 | 235W |

Power and efficiency trade-offs

The V100 does consume more power. It drew 293W in the gemma4 test compared to 235W for the RTX 3060. That's about 25% more electricity for 42% more performance.

For continuous use cases like inference servers, the power cost matters. For hobbyists running occasional LLM queries, the $200 entry price makes the V100 hard to beat.

Why old data center GPUs work

The V100 launched in 2017 for over $10,000. It was built for machine learning from the start. While consumer GPUs prioritize gaming performance, the V100 was designed around high VRAM bandwidth and tensor operations.

HBM2 memory gives the V100 900 GB/s of bandwidth. A modern RTX 4060 has 272 GB/s. For LLM inference, where the GPU constantly shuffles massive model weights, bandwidth often matters more than raw compute.

As companies upgrade their data centers, older cards like the V100 flood the secondhand market. The SMX form factor makes them incompatible with consumer PCs by default. That gap between supply and demand is exactly what drives prices down to $100.

Logicity's Take

Practical considerations

This isn't a plug-and-play solution. You need to source a working V100, find a compatible adapter, design or print cooling, and troubleshoot driver issues. Server GPUs sometimes require specific firmware or BIOS settings.

There's also no warranty. These cards have already served years in data centers. Failure rates are unknown, and replacements require sourcing another used unit.

Still, for hobbyists and small teams experimenting with local LLMs, the economics are compelling. A $200 GPU that outperforms a $450 consumer card changes the math on building AI infrastructure.

Related AI research news

Frequently Asked Questions

Can I use a Tesla V100 in a regular gaming PC?

Yes, with an SMX-to-PCIe adapter. You also need custom cooling since the V100 lacks onboard fans. Note that it has no display output, so you need a CPU with integrated graphics or a second GPU for video.

How much VRAM does the Nvidia V100 have?

The V100 comes in 16GB and 32GB variants. Both use HBM2 memory with 900 GB/s bandwidth, significantly faster than consumer GDDR6.

Is the V100 good for running local LLMs?

Yes. In benchmarks, a $200 V100 achieved 130 tokens per second in Ollama, outperforming both an RTX 3060 and RX 7800 XT in LLM inference.

Where can I buy a used Tesla V100?

Used V100s appear on eBay, AliExpress, and specialized server hardware resellers. Prices vary from $100 to $300 depending on condition and memory variant.

What's the power consumption of the V100?

The V100 drew 293W during AI inference tests. That's higher than an RTX 3060's 235W, but the V100 delivered 42% more performance.

Need Help Implementing This?

Source: Latest from Tom's Hardware

Huma Shazia

Senior AI & Tech Writer

Related Articles

Browse all

Alienware AW2726DM Review: The $350 QD-OLED Gaming Monitor That Changes Everything

Dell's Alienware AW2726DM shatters the OLED gaming monitor price barrier at just $350, delivering 27-inch QHD resolution, 240Hz refresh rate, and Quantum Dot color that rivals monitors costing twice as much. This isn't an incremental price drop. It's a complete reset of what budget-conscious gamers can expect.

iPhone Fold Launch 2026: Apple's First Foldable Could Capture 19% Market Share Instantly

Apple's long-awaited foldable iPhone is finally coming, and analysts predict it'll rocket the company to third place in the foldable market behind Samsung and Huawei. The secret weapon? Some seriously clever material science that could solve the crease problem that's plagued every foldable phone so far.

FAA Approves Military Laser Weapons for Drone Defense: What the New Airspace Rules Mean for Border Security

The FAA has given the Pentagon full approval to use high-energy laser systems against drones in US airspace, ending a two-month standoff that started when lasers shot down party balloons mistaken for cartel drones. The decision comes after safety assessments concluded these weapons don't pose increased risk to civilian aircraft.

China Chip Subsidies Reach $142 Billion: 3.6x More Than US Spent on Semiconductor Manufacturing

A new CSIS report reveals China has poured $142 billion into semiconductor subsidies over the past decade, dwarfing US spending by a factor of 3.6. But here's the twist: despite this massive investment, Chinese chipmakers still lag years behind TSMC and struggle with abysmal yields at advanced nodes.

Also Read

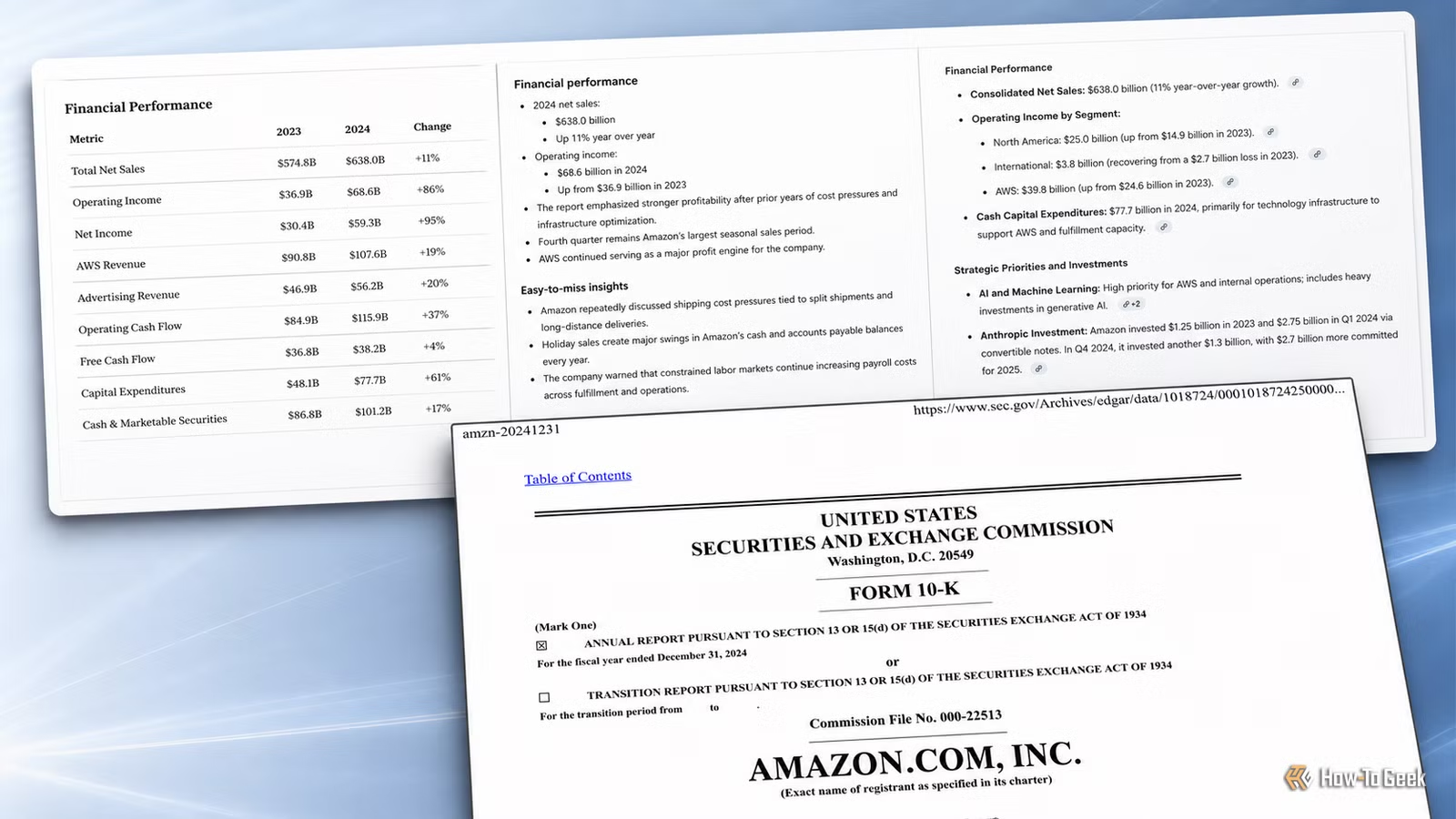

Claude vs ChatGPT vs Gemini: Which Summarizes PDFs Best?

A tech journalist tested all three major AI chatbots on the same 121-page Amazon investor filing using identical prompts. The results showed clear differences in how each tool handles long document summarization, with one emerging as the most reliable for professional use.

Samsung Galaxy S26 Drops Up to €500, iPhone Air Hits €850

European retailers are slashing prices on Samsung's S26 lineup as the company shifts focus to its foldables. The Galaxy S26+ 512GB now sells for under €1,000, while Apple's iPhone Air has dropped another €40 since last week.

Windows 11 Pro Has a Disposable PC Built In

Windows Sandbox lets you run a complete, isolated Windows environment that wipes itself clean when you close it. It's perfect for testing suspicious files, sketchy apps, or risky scripts without touching your main system.