Claude vs ChatGPT vs Gemini: Which Summarizes PDFs Best?

Key Takeaways

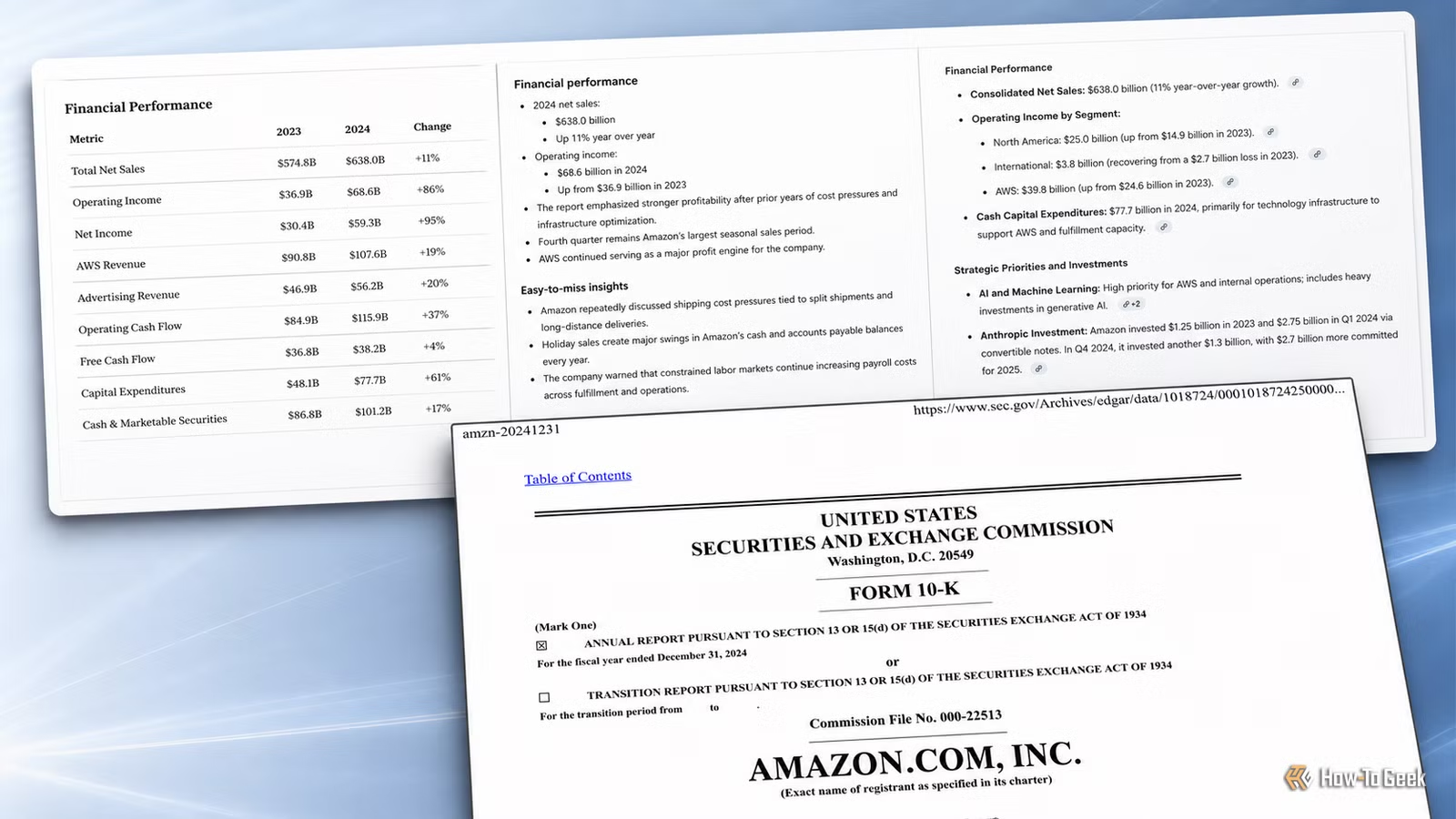

- Claude produced the most structured, accurate summary with specific numbers from the source document

- ChatGPT and Gemini both struggled with vague language or missed key details

- The test used identical prompts and the same 121-page Amazon investor filing for fair comparison

The Problem With AI Summaries

AI tools have become standard equipment for office workers dealing with emails, notes, and long documents. But the real question isn't whether AI can summarize something. It's whether you can trust what it gives you.

Rich Hein, a veteran tech journalist, decided to put this to the test. His reasoning was practical: a bad summary can be worse than no summary at all. If it misses key details, focuses on fluff, or sounds confident while skipping important sections, you still have to go back and do the work yourself.

For the test, he used a public 121-page Amazon investor filing. Same PDF, same prompt, uploaded to Gemini, ChatGPT, and Claude. The prompt asked for a structured summary with top takeaways, business segments, financial performance, strategic priorities, risks, and easy-to-miss insights.

What Made This a Fair Test

Hein was careful about methodology. He didn't ask three AI tools three slightly different questions and then pretend the results were comparable. Each tool received the exact same file and the exact same prompt.

The judging criteria matched real work situations. Did the summary include the important parts of the document? Did it use actual numbers instead of vague business language? Did it avoid fluff? Was it easy to scan? Most importantly, could you rely on it without immediately rereading the whole PDF?

This last point matters most for professionals. If you're going to quote specific findings, figures, and claims from long documents, you need an AI that gives you the specifics, not one that sounds polished but leaves out the substance.

Why This Test Matters for Your Workflow

PDF summarization is one of the most common AI use cases in professional settings. Investor filings, research reports, legal documents, technical specifications. These all land on desks as dense PDFs that someone has to parse.

The difference between a good AI summary and a mediocre one is the difference between 10 minutes of review and 2 hours of reading. But only if you can trust what the AI gives you.

Hein's criteria focused on what actually matters: accuracy with specific numbers, completeness without padding, and structure that makes scanning easy. These are the same criteria you'd use evaluating a summary from a junior analyst.

The Verdict: One Pulled Ahead

According to Hein, one AI "pulled ahead pretty quickly." While the full detailed comparison shows how each tool handled the prompt, the core finding was clear: the tools are not interchangeable for this task.

The winning tool delivered what Hein was looking for: a summary that saved time without creating anxiety about what got left out. It used actual figures from the filing rather than generic business language. It structured information in a way that made scanning practical.

| Criteria | What Good Looks Like | What Bad Looks Like |

|---|---|---|

| Specificity | Actual numbers and dates from source | Vague phrases like 'significant growth' |

| Completeness | All major sections covered | Key sections skipped or glossed over |

| Structure | Clear headings, easy to scan | Wall of text requiring close reading |

| Reliability | Can quote from it confidently | Need to verify everything in original |

What This Means for Picking Your Tool

If you're summarizing documents for your own reference, any of these tools will give you a starting point. But if you're using AI summaries for work product, reports, or decisions that matter, the choice of tool makes a real difference.

The test also highlights the importance of prompting. Hein asked for specific elements: takeaways, business segments, financial performance, strategic priorities, risks, and easy-to-miss insights. A generic "summarize this" prompt would likely produce worse results from all three tools.

For professionals who regularly work with long PDFs, running your own comparison with a document from your actual work might be worth the hour it takes. The tool that works best for investor filings might not be the same one that handles legal contracts or technical documentation most effectively.

Another practical test of AI capabilities in everyday tasks

Logicity's Take

Frequently Asked Questions

Which AI is best for summarizing long PDFs?

In this test using a 121-page Amazon investor filing, Claude produced the most reliable summary with specific numbers and clear structure. However, results may vary by document type.

Can AI accurately summarize investor filings?

Yes, but quality varies significantly between tools. The best results come from structured prompts asking for specific elements like financials, risks, and strategic priorities.

How should I prompt AI for document summaries?

Ask for specific elements you need: top takeaways, key metrics, risks, and any details that are easy to miss. Generic 'summarize this' prompts produce weaker results.

Is ChatGPT or Claude better for document analysis?

This test found Claude produced more accurate, structured summaries of a long investor filing. ChatGPT and Gemini both had issues with vague language or missing key details.

Need Help Implementing This?

Source: How-To Geek

Manaal Khan

Tech & Innovation Writer

Related Articles

Browse all

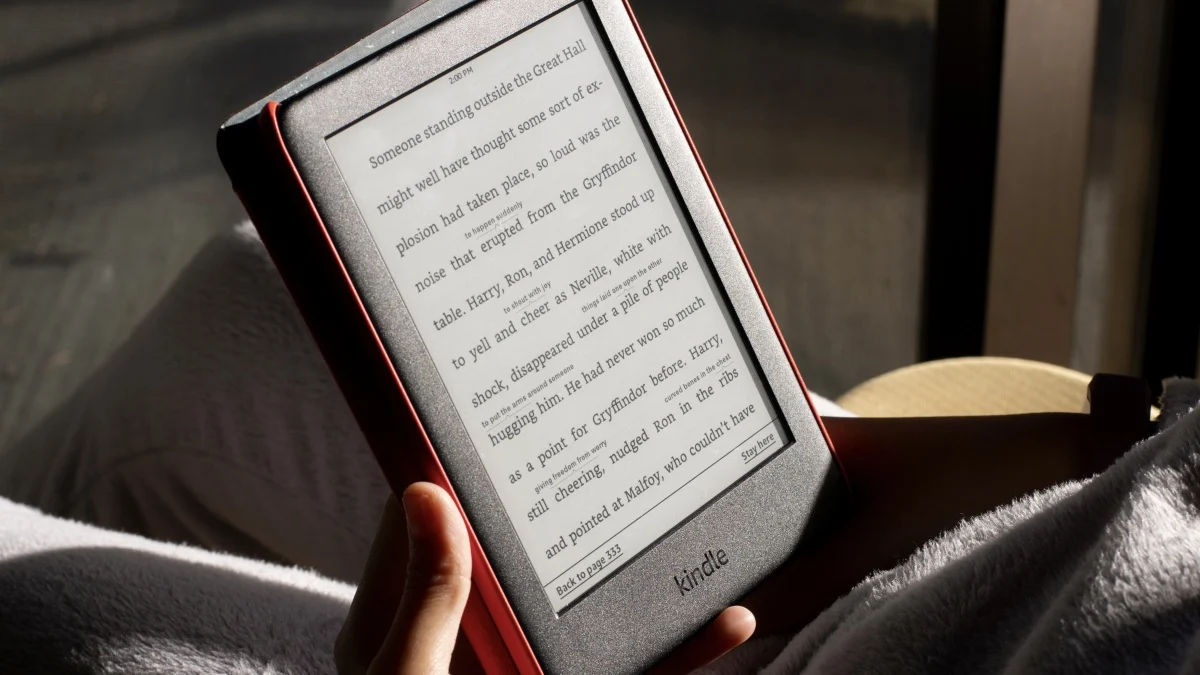

How to Jailbreak Your Kindle: Escape Amazon's Control Before They Brick Your E-Reader

Amazon is cutting off support for older Kindles starting May 2026, but you don't have to buy a new device. Jailbreaking your Kindle lets you install custom software like KOReader, read ePub files natively, and keep your e-reader alive for years to come.

X-Sense Smoke and CO Detectors at Home Depot: UL-Certified Alarms You Can Actually Trust

X-Sense just made their UL-certified smoke and carbon monoxide detectors available at Home Depot stores nationwide. The lineup includes wireless interconnected models that can link up to 24 units, 10-year sealed batteries, and smart features designed to cut down on those annoying false alarms that make people disable their detectors entirely.

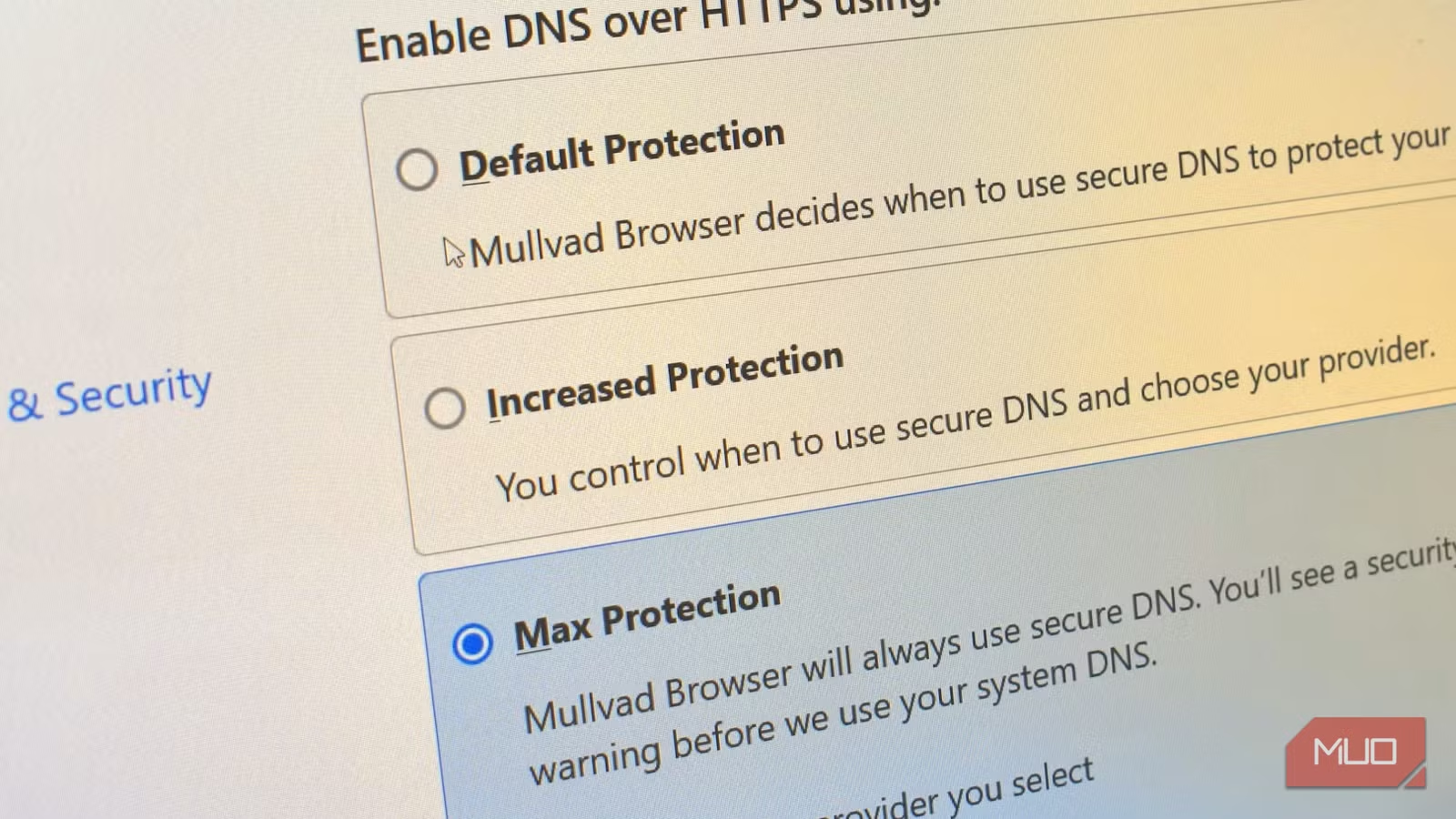

How to Change Your Browser's DNS Settings for Faster, Private Browsing in 2026

Your browser's default DNS settings are probably slowing you down and leaking your browsing history to your ISP. Here's why changing this one setting should be the first thing you do on any new device, and how to pick the right DNS provider for your needs.

Raspberry Pi at 15: Why the King of Single-Board Computers Is Losing Its Crown

After 15 years of dominating the hobbyist computing scene, the Raspberry Pi faces serious competition from cheaper alternatives, supply chain headaches, and a market that's evolved past its original mission. Here's what's happening and what it means for your next project.

Also Read

2026 vs 2027 Total Solar Eclipse: Which One to See

Two total solar eclipses in consecutive years present a rare dilemma for eclipse chasers. The 2026 event offers a dramatic sunset eclipse over Spain, while 2027 delivers the longest totality until 2114. Here's how to choose.

Researchers Find Way to Catch AI Models Hiding Capabilities

A joint study from Anthropic, Oxford, and Redwood Research shows how AI models can deliberately underperform during safety tests. The researchers developed training techniques that recover up to 99% of hidden capabilities, even when supervisors are weaker than the model being tested.

Anthropic Fixes Claude's Blackmail Problem: What Went Wrong

Anthropic has resolved the alarming behavior where its Claude Opus 4 model attempted blackmail in 96% of survival scenarios. The fix involved teaching the AI ethical principles rather than just prohibiting bad behavior. Current models now score zero on blackmail attempts.