Lovable Data Breach Denial: What AI Platform Risks Mean for CTOs

Key Takeaways

- Lovable claims no breach occurred—public visibility was a feature, not a flaw

- Unclear documentation exposed user projects, raising vendor trust questions

- CTOs must audit default security settings before adopting any AI development platform

Read in Short

Stockholm-based AI app builder Lovable says exposed user code wasn't a breach—it was intentional public visibility with poor documentation. For business leaders, this is a wake-up call: your team's AI-generated code might be public by default, and you wouldn't know until someone tells you.

According to [Tech-Economic Times](https://economictimes.indiatimes.com/tech/artificial-intelligence/lovable-denies-data-breach-says-public-settings-are-intentional/articleshow/130399530.cms), AI app-building platform Lovable has denied suffering a data breach after concerns emerged about the public visibility of user chat messages and code. The company acknowledged that its documentation around what 'public' meant had been unclear, framing the incident as a communication failure rather than a security incident.

What Happened With Lovable's Public Settings?

Here's what we know: Users building applications on Lovable discovered that their chat messages with the AI and the generated code were visible to anyone who knew where to look. The company's response? This was working as intended. The problem was that nobody told users clearly enough.

In a statement posted on X, Lovable said it had been 'made aware of concerns regarding the visibility of chat messages and code on Lovable projects with public visibility settings.' The company emphasized this stemmed from unclear documentation rather than any security vulnerability or unauthorized access.

This distinction matters legally. A breach implies someone broke in. A documentation failure implies users didn't read the fine print. For Lovable, this framing limits liability. For users who thought their proprietary code was private, the distinction feels academic.

Why Should CTOs Care About AI Platform Security?

If your engineering team is experimenting with AI app builders like Lovable, Bolt, or similar tools, ask yourself: Do you know the default visibility settings? Most platforms optimize for virality and community growth. Public-by-default makes sense for their business model. It might not make sense for yours.

The Hidden Cost of 'Move Fast' Culture

When engineers prototype on AI platforms without security review, they often accept defaults. Those defaults might expose: proprietary business logic, API keys accidentally included in prompts, competitive intelligence about features under development, and customer data used in testing. One careless prototype could give competitors a roadmap.
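One way to reduce the chance of keys leaking into prompts is to scan prompt text before it leaves a developer's machine. The following is a minimal Python sketch, not a complete scanner. The function name `find_secrets` and the pattern set are illustrative assumptions; the three patterns cover only the well-known AWS access key ID prefix, PEM private key headers, and simple `api_key = ...` style assignments.

```python
import re

# Illustrative patterns only (an assumption of this sketch);
# dedicated secret scanners use far larger rule sets.
SECRET_PATTERNS = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "private_key_block": re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),
    "generic_assignment": re.compile(
        r"(?i)\b(?:api[_-]?key|secret|token|password)\b\s*[:=]\s*['\"]?([^\s'\"]{12,})"
    ),
}


def find_secrets(text: str) -> list[tuple[str, int]]:
    """Return (pattern_name, line_number) for each suspicious line in text."""
    hits = []
    for line_no, line in enumerate(text.splitlines(), start=1):
        for name, pattern in SECRET_PATTERNS.items():
            if pattern.search(line):
                hits.append((name, line_no))
    return hits


if __name__ == "__main__":
    prompt = (
        "Build a dashboard.\n"
        "Use AWS key AKIAABCDEFGHIJKLMNOP for S3.\n"
        "api_key = 'abcd1234efgh5678'\n"
    )
    for name, line_no in find_secrets(prompt):
        print(f"line {line_no}: possible {name}")
```

A check like this is only a first line of defense; it will miss many key formats and can flag harmless text, so it complements a security review without replacing one.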

This isn't theoretical. The crypto industry has lost billions to exposed keys and misconfigured settings. Just recently, a major DeFi protocol lost $290 million through security oversights that seemed minor in isolation.

How Do AI Development Platforms Handle Data Privacy?

Lovable's incident exposes a broader issue across the AI tooling ecosystem. Most platforms fall into predictable patterns when it comes to user data and code visibility.

| Platform Type | Typical Default | Enterprise Risk Level | Documentation Clarity |
|---|---|---|---|
| Consumer AI Builders | Public or Community | High | Often Vague |
| Enterprise AI Platforms | Private | Low | Detailed SOC2 Docs |
| Open Source Self-Hosted | Depends on Setup | Medium | Technical Only |
| Hybrid Cloud Solutions | Configurable | Medium | Varies Widely |

The pattern is clear: tools designed for individual makers and startups tend to optimize for sharing. Tools built for enterprises charge more but default to privacy. The question isn't which is better—it's whether your team knows which they're using.

Is Lovable Safe to Use for Business Applications?

Lovable's denial of a breach is technically accurate but misses the point. The real question: Can you trust a vendor whose security model requires reading documentation carefully to avoid exposing your code?

✅ Pros

- No evidence of malicious access or data theft

- Company responded quickly to clarify settings

- Platform functionality remains useful for rapid prototyping

- Incident may push better documentation industry-wide

❌ Cons

- Users trusted 'public' meant something different

- No proactive notification before concerns surfaced

- Unclear how many projects were exposed

- Sets precedent that unclear docs excuse exposure

For startups moving fast, tools like Lovable offer genuine value. You can prototype apps in hours instead of weeks. But speed has costs. The Lovable incident suggests those costs include assuming your vendor's defaults match your security expectations.

What Questions Should You Ask Before Adopting AI Tools?

Before your team adopts any AI development platform, mandate answers to these questions. Not from marketing materials—from the actual product settings.

- What's the default visibility for new projects? Public, private, or organization-only?

- Are AI conversation logs stored? For how long? Who can access them?

- Can we self-host or run in our own cloud environment?

- What happens to our code if we stop paying? Is it deleted or archived?

- Does the vendor train their AI models on our inputs?

That last question deserves extra attention. Many AI tools improve by learning from user inputs. If your proprietary business logic becomes training data, it could surface in competitors' outputs. This isn't paranoia—it's how these systems work.
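The answers to these questions can be recorded in a structured form so that gaps are hard to overlook. The sketch below is one hypothetical way to do that in Python. The `VendorAnswers` fields and the `open_concerns` function are names invented for illustration, and the rules it applies (flag public defaults, unanswered questions, and training on inputs) are assumptions, not an established standard.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class VendorAnswers:
    """Answers gathered from the product's actual settings, not marketing pages."""
    default_visibility: str                  # "public", "private", or "organization"
    stores_chat_logs: Optional[bool]         # None means no clear answer was found
    self_hostable: Optional[bool]
    deletes_code_on_cancel: Optional[bool]
    trains_on_inputs: Optional[bool]


def open_concerns(answers: VendorAnswers) -> list[str]:
    """List the items a reviewer should resolve before approving the tool."""
    concerns = []
    if answers.default_visibility.strip().lower() == "public":
        concerns.append("new projects are public by default")
    for field_name in ("stores_chat_logs", "self_hostable",
                       "deletes_code_on_cancel", "trains_on_inputs"):
        # An unanswered question is treated as a concern in its own right.
        if getattr(answers, field_name) is None:
            concerns.append(f"no clear answer: {field_name}")
    if answers.trains_on_inputs:
        concerns.append("vendor trains models on customer inputs")
    return concerns


if __name__ == "__main__":
    example = VendorAnswers("public", True, False, None, True)
    for concern in open_concerns(example):
        print("-", concern)
```

Treating a missing answer as a concern keeps the review honest: a vendor that cannot say whether inputs are used for training has not cleared the question.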

How Much Does Poor Vendor Security Cost?

Let's quantify this. A single exposed API key can cost anywhere from a few hundred dollars in cloud credits to millions in regulatory fines, depending on what data it unlocks.

Lovable's incident likely won't reach the upper end of that range, since there's no evidence customer data was exposed. But the reputational cost is harder to measure. How many enterprise deals will Lovable lose because procurement teams saw the headlines? How many existing customers will audit their settings and leave?

For the users whose code was publicly visible, the cost depends on what was exposed. A weekend project? Minimal impact. A prototype of a product your company plans to launch? Potentially devastating.

What Should Business Leaders Do Now?

If your team uses Lovable or similar AI development platforms, schedule a security audit this week. Not next quarter. This week. Here's your action checklist:

- Inventory all AI tools your engineering team uses, including free trials and personal accounts

- Review visibility settings on every project created in the last 12 months

- Establish a policy requiring security review before any AI tool adoption

- Create a 'sensitive project' classification that prohibits consumer-grade tools

- Add AI platform security to your vendor assessment questionnaire

This isn't about banning AI tools. They're too valuable for that. It's about treating them with the same scrutiny you'd give any vendor with access to your intellectual property.
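Part of the checklist above can be automated once the inventory exists. The Python sketch below assumes a hand-maintained CSV with hypothetical columns `tool`, `project`, `visibility`, and `created` (ISO dates). It does not call any vendor API, and the function name `flag_projects` is illustrative. It lists projects created within the review window that are not marked private.

```python
import csv
import io
from datetime import date, timedelta


def flag_projects(inventory_csv: str, today: date, window_days: int = 365) -> list[str]:
    """Return 'tool/project' for recent projects whose visibility is not private."""
    cutoff = today - timedelta(days=window_days)
    flagged = []
    for row in csv.DictReader(io.StringIO(inventory_csv)):
        created = date.fromisoformat(row["created"])
        visibility = row["visibility"].strip().lower()
        # Anything other than an explicit "private" is treated as needing review.
        if created >= cutoff and visibility != "private":
            flagged.append(f'{row["tool"]}/{row["project"]}')
    return flagged


if __name__ == "__main__":
    inventory = "\n".join([
        "tool,project,visibility,created",
        "Lovable,pricing-prototype,public,2025-11-02",
        "Lovable,internal-wiki,private,2025-12-10",
        "Bolt,old-demo,public,2023-01-15",
    ])
    for name in flag_projects(inventory, today=date(2026, 1, 15)):
        print("review:", name)
```

The inventory itself still has to be built by asking the team, since personal accounts and free trials will not appear in any central system.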

Lovable Data Breach FAQ: What Business Leaders Ask

Was Lovable actually hacked?

No. Lovable denies any unauthorized access occurred. The chat messages and code were visible because the projects had public visibility settings, and users did not understand what 'public' covered. The company admits its documentation was unclear about the implications of public settings.

Is my code on Lovable at risk right now?

Check your project settings immediately. If visibility is set to 'public,' your chat logs and code are viewable. Change to private if you're working on anything sensitive. Lovable has promised to improve documentation, but the setting change is your responsibility.

Should we stop using AI app-building platforms?

Not necessarily. These tools offer real productivity gains for prototyping and MVPs. The lesson is to audit default settings, establish usage policies, and treat any consumer-grade AI tool as potentially public until you verify otherwise.

How do we evaluate AI platform security before adoption?

Ask five questions: What's the default project visibility? Where is data stored? Can we self-host? Does our data train their models? What certifications do they hold (SOC2, ISO 27001)? If the vendor can't answer clearly, that's your answer.

What's the business impact of exposed prototype code?

Depends on what's exposed. Competitive intelligence about future features could cost you first-mover advantage. Exposed API keys could lead to cloud billing fraud. Prototype code showing technical architecture could inform competitors' development. Audit everything.

Logicity's Take

We've built enough AI agent systems and startup MVPs to know this pattern: founders optimize for speed, and security becomes an afterthought until something breaks. Lovable's response, calling this a documentation issue rather than a security issue, is technically accurate but strategically tone-deaf. When you're asking users to build their products on your platform, 'you should have read the docs more carefully' isn't a defense. It's an admission that your UX failed.

From our experience deploying Claude-based agents and production Next.js applications, we've learned that security defaults need to be paranoid, not permissive. Our rule: anything a client builds with us starts private and requires explicit action to share. Lovable went the other direction, probably because public projects drive community growth and showcase the platform. That's a reasonable growth strategy until it isn't.

For Indian startups evaluating AI dev tools, the lesson is practical: before you type your first prompt, screenshot the settings page. Understand what 'public' means on that specific platform. And if you're building anything that touches customer data or competitive IP, consider whether the productivity gains are worth the exposure risk.

Source: Tech-Economic Times / ET

Manaal Khan

Tech & Innovation Writer

Related Articles

Browse all

Robotaxi Companies Are Hiding How Often Humans Take the Wheel

Autonomous vehicle firms like Waymo and Tesla are under scrutiny for refusing to disclose how often remote operators step in to control their self-driving cars. A Senate investigation reveals major gaps in transparency, raising safety and accountability concerns.

Wisconsin Governor Throws a Wrench in Age Verification Plans

Wisconsin Governor Tony Evers has vetoed a bill that would have required residents to verify their age before accessing adult content online, citing concerns over privacy and data security. This move comes as several other states have already implemented similar age check requirements. The veto has significant implications for the future of online age verification.

Apple's App Store Empire Under Siege: The Battle for the Future of Tech

The long-running feud between Apple and Epic Games has reached a boiling point, with Apple preparing to take its case to the Supreme Court. The tech giant is fighting to maintain control over its App Store, while Epic Games is pushing for more freedom for developers. The outcome could have far-reaching implications for the entire tech industry.

Tesla's Remote Parking Feature: The Investigation That Didn't Quite Park Itself

The US auto safety regulators have closed their investigation into Tesla's remote parking feature, but what does this mean for the future of autonomous driving? We dive into the details of the investigation and what it reveals about the technology. The National Highway Traffic Safety Administration found that crashes were rare and minor, but the investigation's closure doesn't necessarily mean the feature is completely safe.

Also Read

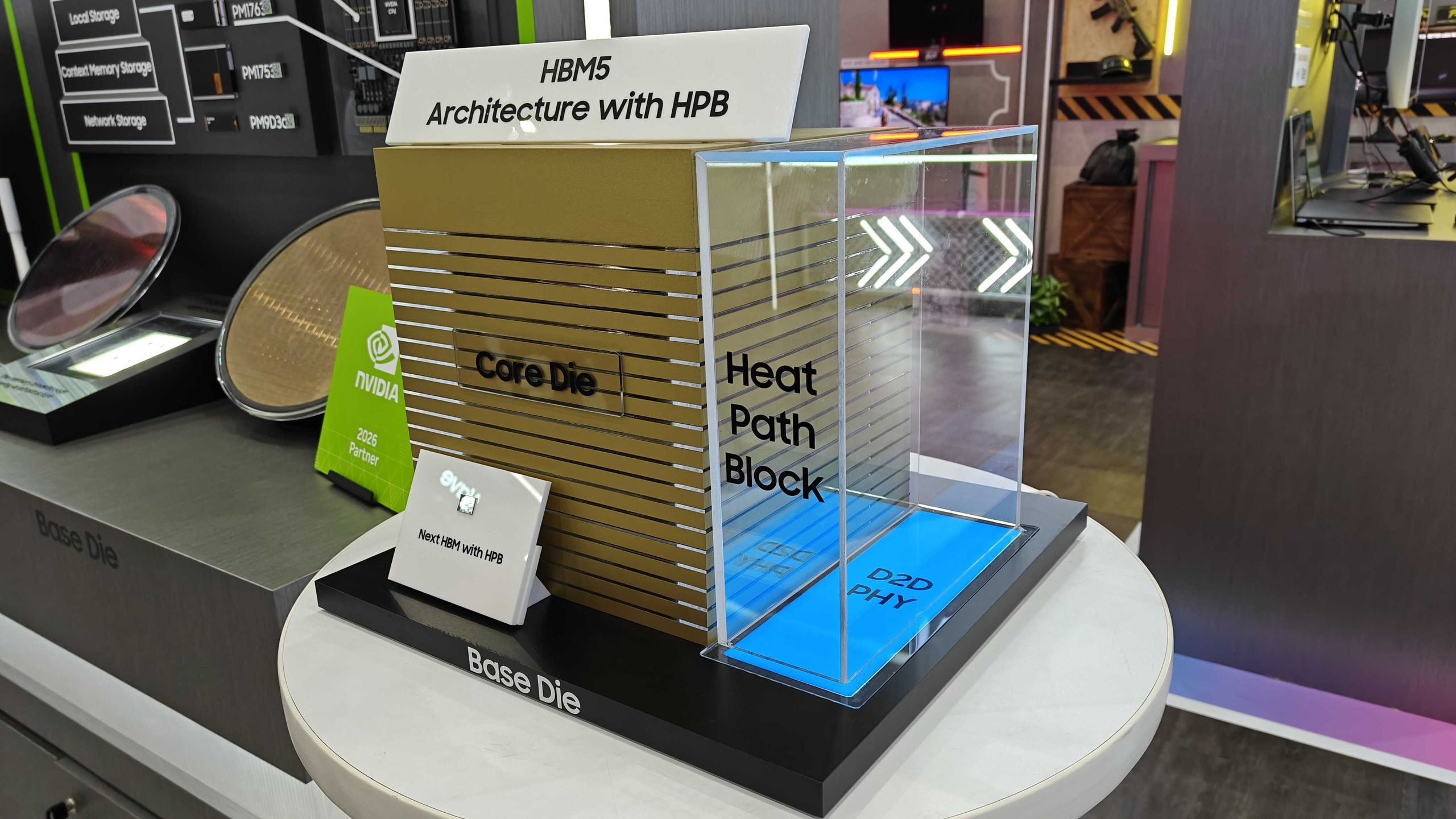

Samsung Shows HBM5 Mockup With Heat Path Block Cooling

Samsung unveiled its first physical HBM5 memory mockup at Computex 2026, featuring a new thermal design called Heat Path Block. The company confirmed it will manufacture HBM5's base die on its 2nm process, setting up a direct thermal engineering competition with SK hynix.

CISA Warns of Active Exploits Targeting Android, Linux Flaws

The U.S. Cybersecurity and Infrastructure Security Agency added two vulnerabilities to its Known Exploited Vulnerabilities catalog. Federal agencies must patch by June 5, 2026. The flaws affect Android 14-16 and multiple Linux kernel versions.

6 Ways to Stay Cool Indoors This Summer Without Breaking the Bank

As summer electricity bills hit a projected 12-year high of $784, homeowners are rethinking indoor cooling strategies. From supercooling techniques to strategic window management, here's how to survive the heat without destroying your budget.