Google Finds First AI-Developed Zero-Day Exploit in the Wild

Key Takeaways

- Google documented what appears to be the first zero-day exploit created with AI assistance, targeting 2FA in a web administration tool

- Malware now modifies its own source code in real time to evade detection

- An Android backdoor called PROMPTSPY uses Google Gemini to screenshot phones and simulate user interactions

The Google Threat Intelligence Group (GTIG) has published a report documenting what appears to be the first zero-day exploit developed with AI assistance. The exploit, a Python script targeting two-factor authentication in a web-based system administration tool, showed clear signs of AI-generated code.

But the zero-day is just one finding in a broader report that paints a concerning picture. Attackers are now deploying malware that rewrites its own source code while running. They're using Google's own Gemini cloud service to build backdoors that can take screenshots of your phone and tap buttons on your behalf. The game has changed.

How AI Found the Zero-Day

The exploit in question abuses a logic flaw in a "popular open-source, web-based system administration tool," according to GTIG. The code bore what researchers describe as "all the hallmarks of AI usage." It bypasses two-factor authentication entirely.

GTIG notes that large language models struggle with complex enterprise authorization flows. The systems are too convoluted for AI to navigate reliably. But LLMs excel at something else: contextual reasoning. They can read source code, understand what a developer intended to implement, compare that to what actually got implemented, and spot the gap.

In other words, AI found the bug not by brute-forcing attack vectors but by reading the code and noticing that the logic didn't match the developer's apparent goal. It found an unconsidered corner case that humans missed.
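To make the "intent versus implementation" gap concrete, here is a minimal sketch of the kind of logic flaw this describes. It is purely hypothetical and is not the bug from the GTIG report, whose details are not given here. The `User` class and the `login_flawed` and `login_fixed` functions are invented for illustration, and the plaintext password and fixed OTP value stand in for real credential handling.

```python
# Hypothetical illustration only: NOT the flaw described in the GTIG report.
import hmac
from dataclasses import dataclass
from typing import Optional


@dataclass
class User:
    name: str
    password: str      # toy plaintext value; real systems store a hash
    otp_enabled: bool
    expected_otp: str  # stand-in for a real TOTP computation


def login_flawed(user: User, password: str, otp: Optional[str]) -> bool:
    """Intent: require a valid OTP whenever the account has 2FA enabled."""
    if not hmac.compare_digest(user.password, password):
        return False
    # Corner case: the OTP is validated only if the client sends one,
    # so a request that omits the field skips the second factor.
    if otp is not None:
        return hmac.compare_digest(user.expected_otp, otp)
    return True


def login_fixed(user: User, password: str, otp: Optional[str]) -> bool:
    """The decision is driven by the account setting, not by the request."""
    if not hmac.compare_digest(user.password, password):
        return False
    if user.otp_enabled:
        return otp is not None and hmac.compare_digest(user.expected_otp, otp)
    return True


alice = User("alice", "pw", otp_enabled=True, expected_otp="123456")
print(login_flawed(alice, "pw", None))  # second factor skipped
print(login_fixed(alice, "pw", None))   # second factor enforced
```

Nothing in the flawed version looks broken line by line. The problem only appears when the docstring's stated goal is compared against the branch that decides whether the check runs, which is the kind of reading the report credits to LLMs.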

Malware That Rewrites Itself

The GTIG report identifies malware families that can modify their own source code and create exploit payloads dynamically. Some generate decoy code to throw off security researchers. Examples cited include malware families called CANFAIL and LONGSTREAM.

These aren't static files that signature-based antivirus can catch. The code morphs as it runs. It adds filler code to obscure attack logic. It layers indirection so the true intent stays hidden. Traditional security software has a much harder time detecting or containing something that keeps changing shape.
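A small, harmless sketch shows why filler code defeats exact-match signatures. It assumes the simplest possible signature, a SHA-256 hash of the file contents. Real antivirus engines use richer patterns, and the two snippets below are benign stand-ins, not samples from the report.

```python
import contextlib
import hashlib
import io


def signature(code: str) -> str:
    """Simplest possible 'signature': a hash of the exact source text."""
    return hashlib.sha256(code.encode("utf-8")).hexdigest()


def run_output(code: str) -> str:
    """Execute a snippet and return whatever it prints."""
    buffer = io.StringIO()
    with contextlib.redirect_stdout(buffer):
        exec(code, {})
    return buffer.getvalue()


ORIGINAL = "total = sum(range(10))\nprint(total)\n"
# Same behavior, with an unused filler variable and a comment added.
VARIANT = "_pad = 0  # filler\ntotal = sum(range(10))\nprint(total)\n"

KNOWN_BAD = {signature(ORIGINAL)}


def matches_signature(code: str) -> bool:
    return signature(code) in KNOWN_BAD


print(matches_signature(ORIGINAL))                   # the known sample is caught
print(matches_signature(VARIANT))                    # the padded variant is not
print(run_output(ORIGINAL) == run_output(VARIANT))   # yet both behave the same
```

One added line changes the hash completely while leaving the behavior untouched. Malware that regenerates its own filler on every run produces a fresh variant each time.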

AI-driven malware can now alter its own source code in real time and adjust its attack strategy mid-execution to evade detection. According to the report, the same adaptability extends beyond malware to phishing and network attacks.

Gemini-Powered Backdoors

One of the more unsettling findings involves an Android backdoor called PROMPTSPY. It uses Google Gemini's cloud API (not the on-device version) to manipulate infected phones.

The backdoor takes screenshots and uses AI to understand what UI elements are displayed. It then simulates user interactions, tapping buttons and navigating menus on the victim's behalf. The capabilities include capturing PIN and pattern authentication and intercepting taps on the Uninstall button so victims can't remove the malware.

This represents a shift from traditional malware that exploits technical vulnerabilities. PROMPTSPY exploits the phone's own interface, acting like a remote user who can see the screen and tap anything.

AI-Generated Phishing at Scale

The report also documents how attackers use AI to generate organizational charts for target companies and craft custom phishing emails. The AI collects real information from news articles, LinkedIn profiles, and press releases to make the messages convincing.

This isn't a new technique. Spear phishing has always used personal details to increase success rates. But AI dramatically reduces the time and skill required. One attacker with AI tools can now do what previously required a team of researchers manually gathering intelligence.

What This Means for Defense

The GTIG findings suggest that detection strategies need to evolve. Signature-based detection struggles against self-modifying code. Static analysis fails when the malware generates new payloads dynamically.

Behavioral analysis becomes more important. Instead of asking "does this code match a known threat," security systems need to ask "is this code doing something suspicious, regardless of what it looks like." That's harder and more resource-intensive.
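As a rough sketch of that second question, the snippet below scores processes by what they do rather than what their code looks like. The event names, weights, and threshold are all assumptions made up for illustration. A production system would draw on real telemetry and far more nuanced rules.

```python
from dataclasses import dataclass
from typing import Dict, Iterable, Set


@dataclass(frozen=True)
class Event:
    process: str
    action: str  # hypothetical labels such as "write_own_code"


# Hypothetical weights: higher means more suspicious.
SUSPICION_WEIGHTS: Dict[str, int] = {
    "write_own_code": 5,   # process rewrites its own source or binary
    "simulate_input": 4,   # process injects taps or keystrokes
    "screen_capture": 3,
    "net_connect": 1,
}
ALERT_THRESHOLD = 8  # assumed cutoff for raising an alert


def score(events: Iterable[Event]) -> Dict[str, int]:
    """Sum the suspicion weight of every observed action per process."""
    totals: Dict[str, int] = {}
    for event in events:
        weight = SUSPICION_WEIGHTS.get(event.action, 0)
        totals[event.process] = totals.get(event.process, 0) + weight
    return totals


def flagged(events: Iterable[Event]) -> Set[str]:
    """Return processes whose combined behavior crosses the threshold."""
    return {name for name, total in score(events).items()
            if total >= ALERT_THRESHOLD}


observed = [
    Event("updater", "write_own_code"),
    Event("updater", "simulate_input"),
    Event("browser", "net_connect"),
    Event("browser", "net_connect"),
    Event("browser", "net_connect"),
]
print(flagged(observed))
```

The scoring never inspects the code itself, so padding or rewriting the source does not change the result. The cost is that every process has to be observed continuously, which is where the extra resource use comes from.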

For the zero-day problem specifically, the finding suggests that AI-assisted code review should become standard practice. If attackers are using AI to find logic flaws, defenders need to do the same before the code ships.

Logicity's Take

This report marks a turning point, not because AI-assisted attacks are new but because we now have documented evidence of AI being used to find and exploit a zero-day in a production system. The defenders are playing catch-up. Companies relying on yesterday's security stack are now exposed to threats that evolve faster than their detection systems can adapt.

Frequently Asked Questions

What is the first AI-developed zero-day exploit?

According to Google's Threat Intelligence Group, it's a Python script that bypasses two-factor authentication in a popular open-source web administration tool. The code shows clear indicators of AI assistance and exploits a logic flaw that AI identified through contextual reasoning.

How does self-morphing malware evade detection?

The malware modifies its own source code while running, generates decoy code, and creates exploit payloads dynamically. This constant shape-shifting makes signature-based detection far less effective because the code rarely matches known threat patterns.

What is PROMPTSPY and how does it work?

PROMPTSPY is an Android backdoor that uses Google Gemini's cloud API to analyze screenshots and simulate user interactions. It can capture PIN and pattern authentication, navigate the phone's UI, and intercept attempts to uninstall it.

Can AI tools be used to defend against these attacks?

Yes. The same contextual reasoning that helps AI find vulnerabilities can be used in defensive code review. Companies should consider AI-assisted security audits to catch logic flaws before attackers do.


Source: Tom's Hardware

Manaal Khan

Tech & Innovation Writer

Related Articles

Browse all

Alienware AW2726DM Review: The $350 QD-OLED Gaming Monitor That Changes Everything

Dell's Alienware AW2726DM shatters the OLED gaming monitor price barrier at just $350, delivering 27-inch QHD resolution, 240Hz refresh rate, and Quantum Dot color that rivals monitors costing twice as much. This isn't an incremental price drop. It's a complete reset of what budget-conscious gamers can expect.

iPhone Fold Launch 2026: Apple's First Foldable Could Capture 19% Market Share Instantly

Apple's long-awaited foldable iPhone is finally coming, and analysts predict it'll rocket the company to third place in the foldable market behind Samsung and Huawei. The secret weapon? Some seriously clever material science that could solve the crease problem that's plagued every foldable phone so far.

FAA Approves Military Laser Weapons for Drone Defense: What the New Airspace Rules Mean for Border Security

The FAA has given the Pentagon full approval to use high-energy laser systems against drones in US airspace, ending a two-month standoff that started when lasers shot down party balloons mistaken for cartel drones. The decision comes after safety assessments concluded these weapons don't pose increased risk to civilian aircraft.

China Chip Subsidies Reach $142 Billion: 3.6x More Than US Spent on Semiconductor Manufacturing

A new CSIS report reveals China has poured $142 billion into semiconductor subsidies over the past decade, dwarfing US spending by a factor of 3.6. But here's the twist: despite this massive investment, Chinese chipmakers still lag years behind TSMC and struggle with abysmal yields at advanced nodes.

Also Read

8 Ways Your VPN Is Leaking Data Right Now

VPNs promise privacy, but most users don't realize their DNS requests, browser fingerprints, and real IP addresses can still be exposed. A How-To Geek analysis breaks down the eight most common VPN leaks and how to fix them.

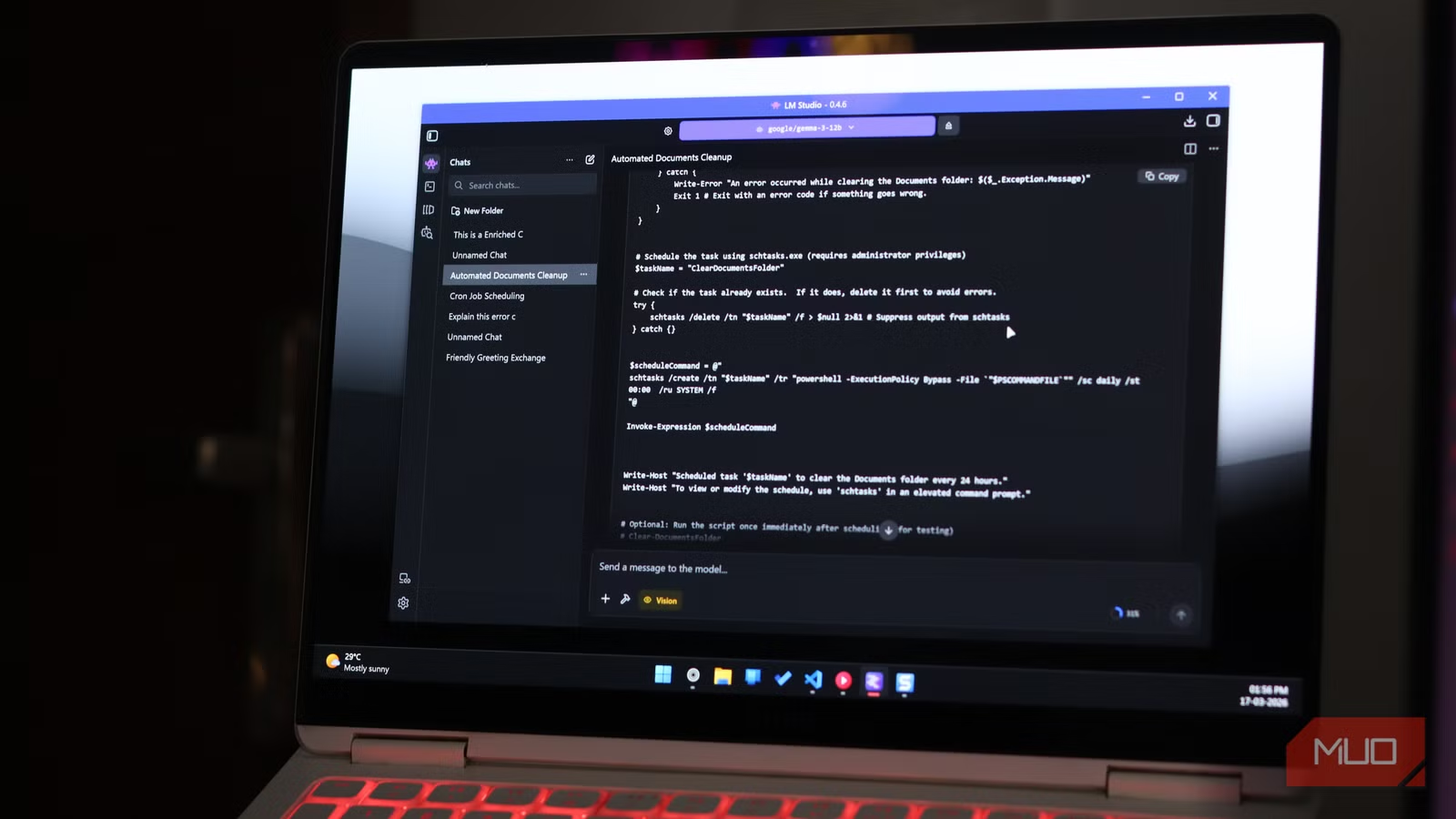

Why I Ditched ChatGPT for a Local LLM on My Laptop

A tech writer explains why he canceled his AI subscription and switched to running LLMs locally with LM Studio. The setup costs nothing after initial hardware, runs faster than expected, and solves the privacy concerns that come with cloud-based AI.

Sam Altman Faces Congressional Probe Over Investment Conflicts

The House Oversight Committee has demanded Sam Altman testify by May 22 about potential conflicts of interest involving his personal investments. Six Republican attorneys general are also pushing the SEC to investigate whether the OpenAI CEO pressured the company to invest in startups where he holds stakes.