ChatGPT Now Alerts a Trusted Friend If You're at Risk

Key Takeaways

- ChatGPT users can now nominate one adult who OpenAI may contact if the system detects serious self-harm risk

- A trained human team reviews each case before any notification goes out, and OpenAI says it aims to complete that review in under one hour

- OpenAI says more than 1 million of its 800 million weekly users express suicidal thoughts in conversations

What Is Trusted Contact?

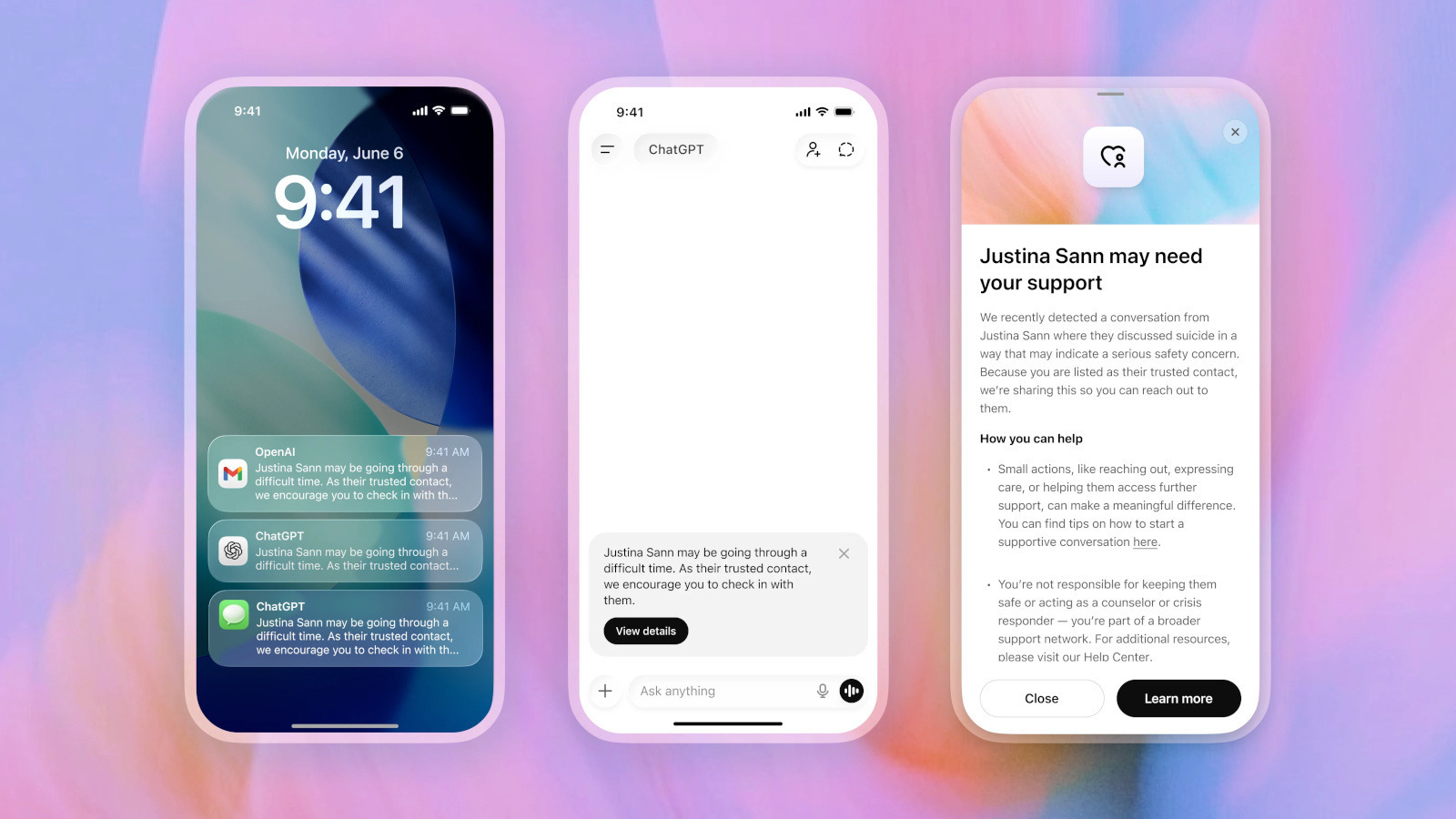

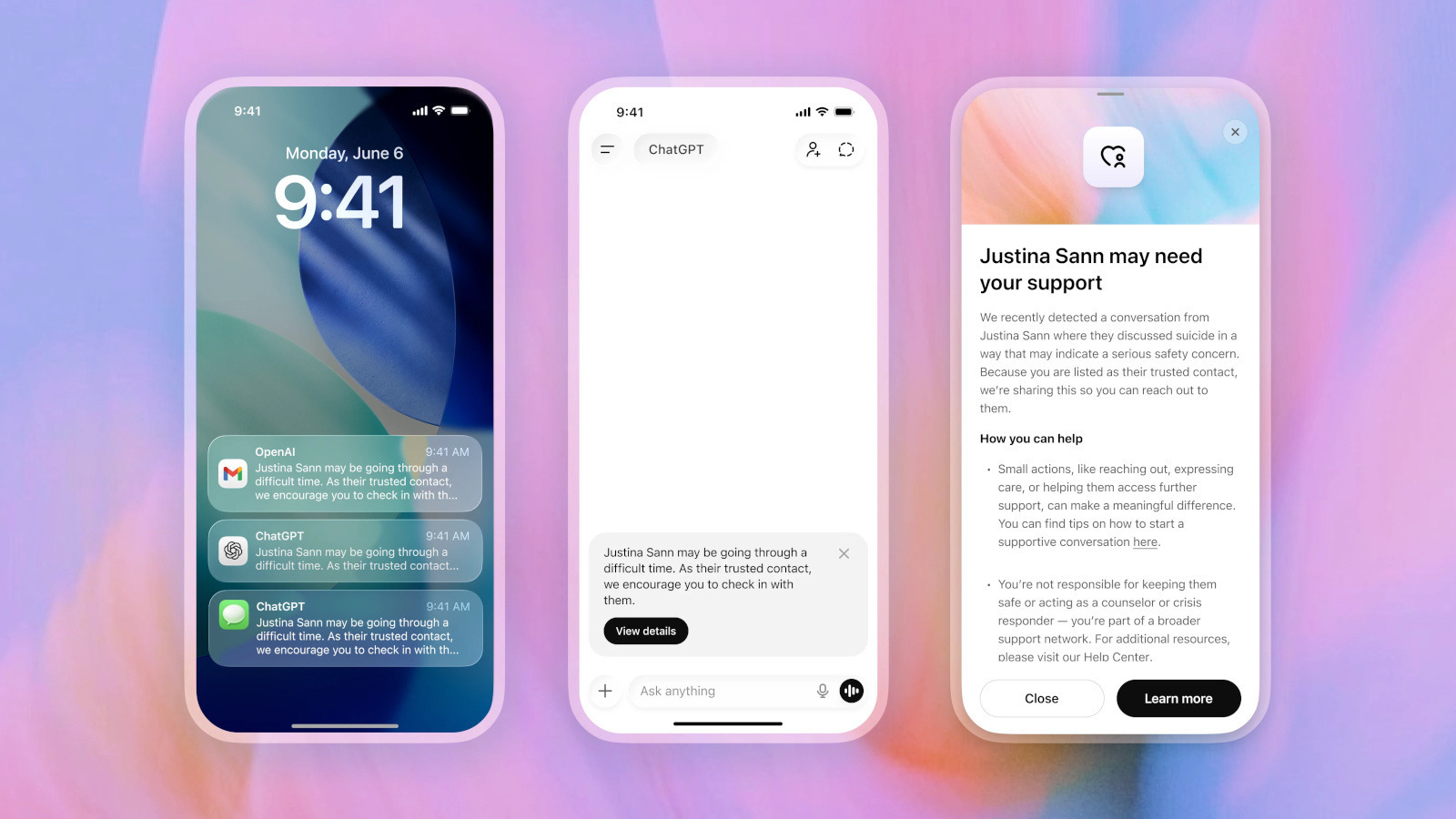

OpenAI announced Trusted Contact on May 8, 2026. The feature lets ChatGPT users 18 and older nominate one adult friend or family member. If OpenAI's system detects a serious possibility that the user might hurt themselves, the company can reach out to that person.

Users add a contact through ChatGPT's settings. The nominated person receives an invitation and has one week to accept. If they don't respond, the user can pick someone else.

The system isn't a simple trigger. When ChatGPT flags a concerning conversation, a "small team of specially trained people" reviews the situation. Only if they determine there's genuine risk does the contact receive an email, text message, or in-app notification.

How the Alert Works

Before enabling Trusted Contact, ChatGPT warns users that the company may notify their contact if it detects serious self-harm risk. When a concerning conversation happens, the system first encourages the user to reach out to their friend directly. It even suggests conversation starters.

If the trained review team decides an alert is necessary, the contact receives a message: "[The user] may be going through a difficult time. As their Trusted Contact, we encourage you to check in with them."

The contact can view more details, including that OpenAI detected a conversation about suicide. But the company won't share transcripts, so the content of the conversation itself stays private.

“While no system is perfect, and a notification to a Trusted Contact may not always reflect exactly what someone is experiencing, every notification undergoes trained human review before it is sent, and we strive to review these safety notifications in under one hour.”

— OpenAI announcement

Why OpenAI Built This Now

The feature arrives after a difficult year for OpenAI's reputation on mental health. In 2025, the company faced a wrongful death lawsuit alleging that ChatGPT helped a teenager plan his suicide after he discussed four previous attempts with the chatbot.

A BBC investigation published in November 2025 found that in at least one case, ChatGPT advised a user on methods to end her life. OpenAI told the BBC it had improved how the chatbot responds to people in distress since that investigation.

The company has acknowledged the scale of the problem. By OpenAI's own count, more than a million people each week express suicidal thoughts in ChatGPT conversations. Against 800 million weekly users, that's roughly one in 800.

ChatGPT as Digital Therapist

More people have been turning to ChatGPT for mental health support. The chatbot is available 24/7, doesn't judge, and costs less than therapy. For many users, especially those without access to professional help, it fills a gap.

But AI chatbots aren't trained therapists. They can miss warning signs, give harmful advice, or respond in ways that make things worse. Trusted Contact is OpenAI's attempt to add a human safety net around an AI system that millions use for emotional support.

The feature builds on ChatGPT's existing parental controls, extending the concept of oversight to adult users who opt in.

Limitations to Consider

Trusted Contact is opt-in. Users who don't enable it won't have this safety net. And users in crisis may not think to set it up in advance.

The system relies on AI detection plus human review. Both can fail. OpenAI acknowledges this: "no system is perfect." A notification might come too late, or not at all.

There's also a privacy tradeoff. Some users may avoid honest conversations with ChatGPT if they know someone might be contacted. The feature works only for people willing to share that vulnerability.

If You Need Help

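If you or someone you know is experiencing suicidal thoughts, call or text the 988 Suicide & Crisis Lifeline at 988 in the US (formerly the National Suicide Prevention Lifeline; the older number, 1-800-273-8255, still connects to it). The line is open 24/7, and online chat is available if phone isn't an option.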

Frequently Asked Questions

How do I enable Trusted Contact on ChatGPT?

Go to ChatGPT settings and add one adult contact. They'll receive an invitation and have one week to accept it.

Will my Trusted Contact see my ChatGPT conversations?

No. OpenAI will tell them a concerning conversation was detected, but it won't share transcripts.

Is the alert automatic or does a human review it?

A trained human team reviews each case before any notification is sent. OpenAI aims to complete reviews within one hour.

Can I have more than one Trusted Contact?

No. For now, you can nominate only one adult contact at a time.

Is Trusted Contact available to users under 18?

No. The feature is only available to adults 18 and older. Younger users have separate parental controls.

Source: Engadget

Huma Shazia

Senior AI & Tech Writer

Related Articles

Browse all

Robotaxi Companies Are Hiding How Often Humans Take the Wheel

Autonomous vehicle firms like Waymo and Tesla are under scrutiny for refusing to disclose how often remote operators step in to control their self-driving cars. A Senate investigation reveals major gaps in transparency, raising safety and accountability concerns.

Wisconsin Governor Throws a Wrench in Age Verification Plans

Wisconsin Governor Tony Evers has vetoed a bill that would have required residents to verify their age before accessing adult content online, citing concerns over privacy and data security. This move comes as several other states have already implemented similar age check requirements. The veto has significant implications for the future of online age verification.

Apple's App Store Empire Under Siege: The Battle for the Future of Tech

The long-running feud between Apple and Epic Games has reached a boiling point, with Apple preparing to take its case to the Supreme Court. The tech giant is fighting to maintain control over its App Store, while Epic Games is pushing for more freedom for developers. The outcome could have far-reaching implications for the entire tech industry.

Tesla's Remote Parking Feature: The Investigation That Didn't Quite Park Itself

The US auto safety regulators have closed their investigation into Tesla's remote parking feature, but what does this mean for the future of autonomous driving? We dive into the details of the investigation and what it reveals about the technology. The National Highway Traffic Safety Administration found that crashes were rare and minor, but the investigation's closure doesn't necessarily mean the feature is completely safe.

Also Read

Pentagon Releases 161 Declassified UFO Files With 30 Videos

The Pentagon published its first batch of declassified UAP files on May 8, responding to President Trump's February directive. The release includes 161 files with nearly 30 videos showing unidentified objects captured by military sensors, plus eyewitness accounts from Apollo astronauts.

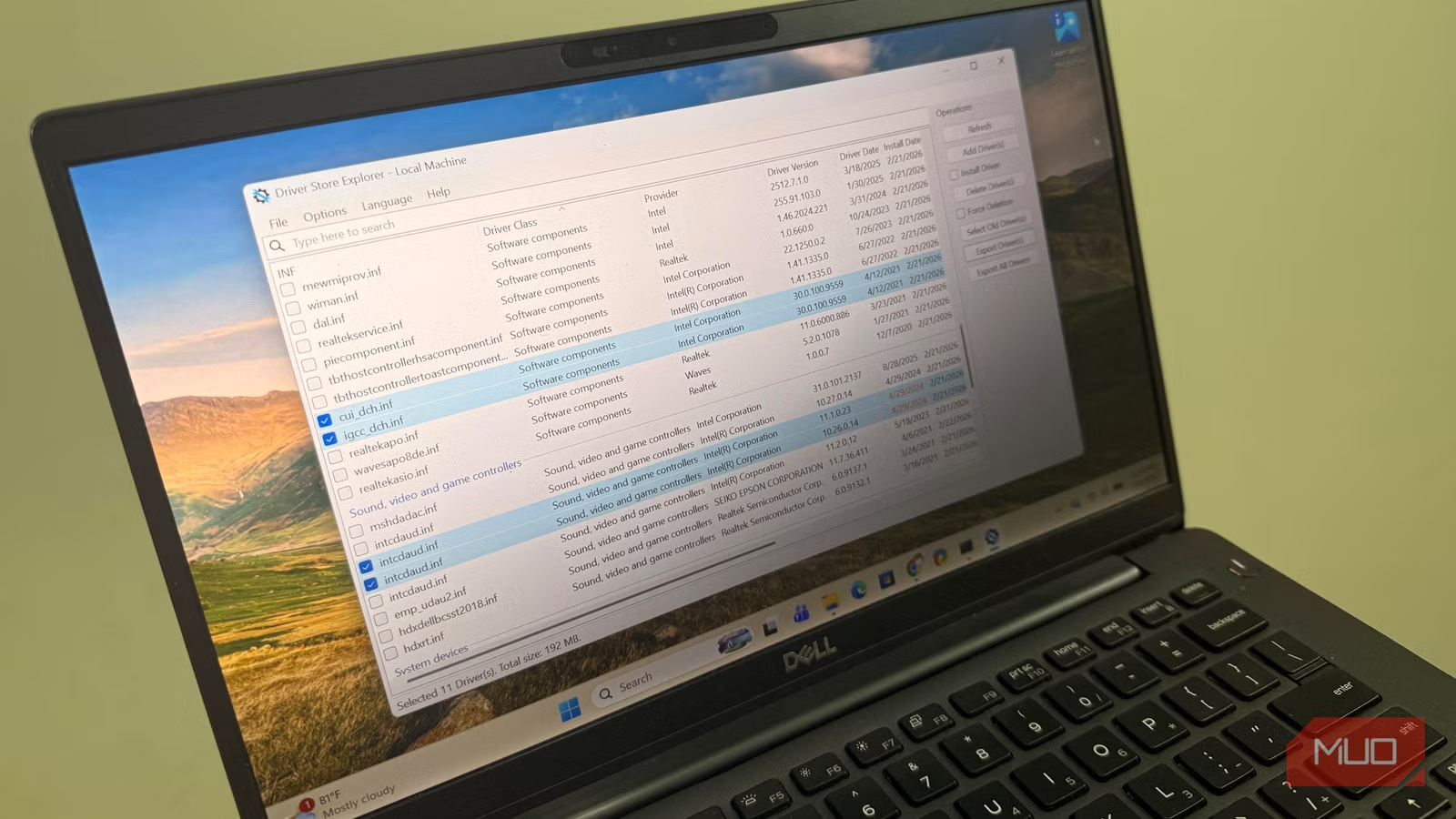

How to Clear Old Windows Drivers Wasting Your SSD Space

Windows stores every driver you've ever installed but never cleans up old versions. This hidden folder can grow to 30GB on gaming PCs. Here's how to safely reclaim that space.

Diablo 4 Gold Bug Gives Players 900% Boost

A Horadric seal item in Diablo 4: Lord of Hatred is giving players a 900% gold bonus. This appears to be a decimal point error. Players are exploiting it to earn billions of gold per hour before Blizzard patches it.