Bun's AI-Driven Rust Rewrite Fails Basic Memory Safety Checks

Key Takeaways

- Bun's AI-generated Rust code contains over 13,000 unsafe blocks and fails Miri's undefined behavior checks

- The migration translated 1 million lines of Zig to Rust in 6 days using Claude AI agents

- Critics argue the rewrite bypasses Rust's core safety guarantees, defeating the purpose of the language switch

The GitHub Issue That Started a Firestorm

On May 14, 2026, a developer named AwesomeQubic opened GitHub Issue #30719 against Bun's repository. The title was blunt: "all of rust codebase: This codebase fails even the most basic miri checks, allows for UB in safe rust."

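Miri is an interpreter maintained by the Rust project that detects many kinds of undefined behavior while a program or its tests run. A Miri failure means an executed code path performed an operation the language leaves undefined, so the compiled program can crash, corrupt memory, or produce unpredictable results. These aren't style complaints. They're fundamental safety violations.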

The issue included a minimal reproduction case showing a dangling reference, which is exactly the kind of bug Rust's ownership system is designed to prevent. The error message was clear: "encountered a dangling reference (0x20933[noalloc] has no provenance)."
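To make the phrase "UB in safe Rust" concrete, here is a minimal sketch of the general pattern. It is a hypothetical illustration, not the reproduction from the issue: the function names are invented, and the address is reused from the quoted error message only as an example value. A function with a safe signature hides an unsafe dereference, so a caller who never writes unsafe can still trigger undefined behavior.

```rust
// Hypothetical illustration; NOT the reproduction case from the issue.

/// UNSOUND: the signature is safe, yet any caller can pass an address
/// that points at nothing and receive a dangling reference.
#[allow(dead_code)]
fn as_ref_unsound<'a>(addr: usize) -> &'a u32 {
    // An integer cast carries no provenance, so unless `addr` happens to be
    // a live, aligned u32, the resulting reference is dangling.
    unsafe { &*(addr as *const u32) }
}

/// Sound alternative: the function itself is `unsafe`, so the contract is
/// visible at every call site. A null pointer yields `None`.
///
/// # Safety
/// `ptr` must be null, or valid, aligned, and live for the lifetime `'a`.
unsafe fn deref_with_contract<'a>(ptr: *const u32) -> Option<&'a u32> {
    // SAFETY: upheld by the caller, per the contract above.
    unsafe { ptr.as_ref() }
}

fn main() {
    let value = 7u32;
    // SAFETY: `&value` is valid and outlives the returned reference.
    let r = unsafe { deref_with_contract(&value) };
    assert_eq!(r, Some(&7));

    // `as_ref_unsound(0x20933)` would compile here with no `unsafe` at the
    // call site, which is exactly the problem: it is undefined behavior.
}
```

Running a program like this under `cargo +nightly miri run` with the unsound call enabled is the kind of check that reports a dangling reference; the sound version passes because the obligation is pushed to the caller.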

“Please consider not vibe coding rust as AIs are not good at writing Rust and also hire a real rust dev”

— AwesomeQubic, GitHub Issue #30719

How Did We Get Here?

Bun, the JavaScript runtime that built its reputation on Zig's performance characteristics, was acquired by Anthropic in late 2025. The team then undertook an ambitious experiment: using Claude AI agents to rewrite the entire codebase from Zig to Rust.

The numbers were impressive on the surface. Over 1 million lines of Zig code were translated to Rust in just 6 days. The test suite showed a 99.8% pass rate immediately after migration.

But there was a catch. To achieve this speed, the AI transliteration preserved Zig's manual memory management patterns by wrapping them in Rust's unsafe blocks. Over 13,000 of them.

Bun founder Jarred Sumner had been open about the team's AI-first development approach. "We haven't been typing code ourselves for many months now," he stated. He also acknowledged the rewrite was experimental, saying there was a "high chance" it would be thrown away.

Why This Matters Beyond Bun

The controversy exposes a fundamental tension in AI-assisted programming. Rust's central value proposition is memory safety enforced at compile time. The borrow checker exists to catch bugs before they become security vulnerabilities or crashes.

When you write unsafe, you're telling the compiler: trust me, I know what I'm doing. You take responsibility for upholding Rust's invariants manually. Experienced Rust developers use unsafe sparingly and audit it carefully.
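As a rough sketch of what "sparingly and audited" tends to look like, the example below keeps a single unsafe operation behind a safe function and documents the invariant that justifies it. The function name `ascii_upper` is hypothetical and not taken from Bun; the standard library call `String::from_utf8_unchecked` is real.

```rust
/// Uppercases an ASCII-only string. Returns `None` for non-ASCII input.
fn ascii_upper(input: &str) -> Option<String> {
    // Establish the invariant first: every byte is ASCII.
    if !input.is_ascii() {
        return None;
    }
    let bytes: Vec<u8> = input.bytes().map(|b| b.to_ascii_uppercase()).collect();
    // SAFETY: all bytes were ASCII before the mapping, `to_ascii_uppercase`
    // maps ASCII to ASCII, and any ASCII sequence is valid UTF-8.
    Some(unsafe { String::from_utf8_unchecked(bytes) })
}

fn main() {
    assert_eq!(ascii_upper("bun"), Some("BUN".to_string()));
    assert_eq!(ascii_upper("café"), None);
}
```

The point of the design is that no caller can break the invariant: the check and the unsafe call live in the same function, so a reviewer can audit the block in isolation.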

The AI did something different. It used unsafe as a translation escape hatch, converting Zig's memory management idioms directly without restructuring them to fit Rust's ownership model. The result is Rust code that provides Rust's safety guarantees in name only.
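The difference between transliterating and restructuring can be sketched with a toy function. Both versions below are hypothetical and do not come from Bun's codebase. The first keeps a pointer-plus-length calling convention, which leaves the validity proof to every caller; the second uses a slice, so the compiler tracks the bounds and lifetime.

```rust
/// Transliterated style: pointer and length travel separately.
///
/// # Safety
/// `ptr` must be valid for reading `len` consecutive `u32` values.
unsafe fn sum_raw(ptr: *const u32, len: usize) -> u32 {
    let mut total = 0u32;
    for i in 0..len {
        // SAFETY: the caller promises `ptr` is valid for `len` reads.
        total = total.wrapping_add(unsafe { *ptr.add(i) });
    }
    total
}

/// Restructured style: the slice carries its own length and lifetime,
/// so no `unsafe` is needed and no caller can pass a bad pointer.
fn sum_slice(items: &[u32]) -> u32 {
    items.iter().fold(0u32, |acc, x| acc.wrapping_add(*x))
}

fn main() {
    let data = [1u32, 2, 3];
    // SAFETY: `data.as_ptr()` is valid for exactly `data.len()` reads.
    let raw = unsafe { sum_raw(data.as_ptr(), data.len()) };
    assert_eq!(raw, sum_slice(&data));
}
```

Both functions compute the same result on valid input. Only the second one makes invalid input impossible to express, which is the guarantee a direct translation gives up.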

The "Vibe Coding" Debate

Hacker News commenters reached for an existing label for this approach: "vibe coding," a term Andrej Karpathy coined in early 2025. The critique is that passing tests doesn't prove code is correct. Tests only catch the behaviors you thought to test for. Undefined behavior can lurk in paths that execute rarely or only under specific conditions.
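A small sketch shows how a green test suite and latent undefined behavior can coexist. The lookup table and function names are hypothetical. The unchecked version behaves correctly for every input a typical test would try, and is undefined only for a value nobody tested.

```rust
static LABELS: [&str; 3] = ["ok", "warn", "error"];

/// Latent bug: nothing guarantees `code < 3`. Inputs 0..=2 work, so tests
/// that only use valid codes pass; any larger value is undefined behavior.
fn label_unchecked(code: usize) -> &'static str {
    unsafe { *LABELS.get_unchecked(code) }
}

/// Checked version: out-of-range codes become `None` instead of UB.
fn label_checked(code: usize) -> Option<&'static str> {
    LABELS.get(code).copied()
}

fn main() {
    // The "test suite": every expected code agrees between both versions.
    for code in 0..3 {
        assert_eq!(label_unchecked(code), label_checked(code).unwrap());
    }
    // The untested path: the checked version reports it safely.
    assert_eq!(label_checked(7), None);
    // `label_unchecked(7)` is deliberately not called; it would be UB.
}
```

A pass rate measured only over inputs like `0..3` says nothing about `7`, which is why a high pass rate and a Miri failure are not contradictory.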

ThePrimeagen, a popular programming YouTuber, highlighted the irony. The community had been asking for a Rust rewrite because of safety concerns with the original Zig implementation. Instead, they got 13,000 ways to crash, just written in Rust syntax.

Others took a different view. Some argued that a 99.8% test pass rate after an automated million-line migration is genuinely impressive. The unsafe blocks can be audited and fixed incrementally. A working prototype now might be more valuable than a perfect rewrite later.

What Happens Next

The GitHub issue remains open. Bun's team hasn't publicly committed to a timeline for addressing the Miri failures. The codebase sits in an awkward middle ground: too unsafe to deliver on Rust's promises, but potentially too large to audit manually.

This incident will likely become a case study in the limits of AI code generation. Claude Code, the tool used for the migration, reportedly generated $2.5 billion in annualized revenue as of February 2026. Its users are betting on AI for production systems. This story shows what can go wrong.

Frequently Asked Questions

What is Miri and why do its checks matter?

Miri is an interpreter maintained by the Rust project that detects many kinds of undefined behavior while a program or its tests run. Code that fails Miri checks can crash, corrupt memory, or behave unpredictably in ways that ordinary tests might not catch.

Why did the AI use so many unsafe blocks?

Zig doesn't have Rust's ownership model. The AI translated Zig's manual memory management directly into Rust using unsafe blocks instead of restructuring the code to fit Rust's safety patterns.

Does this mean AI can't write Rust code?

AI can write syntactically valid Rust. The challenge is writing idiomatic Rust that honors the language's safety guarantees. That requires understanding not just what compiles, but what's actually correct.

Is Bun's Rust rewrite going to be abandoned?

No official decision has been announced. Jarred Sumner acknowledged the rewrite was experimental and might be discarded. The GitHub issue remains open as of this writing.

Source: Hacker News: Best

Manaal Khan

Tech & Innovation Writer

Related Articles

Browse all

Robotaxi Companies Are Hiding How Often Humans Take the Wheel

Autonomous vehicle firms like Waymo and Tesla are under scrutiny for refusing to disclose how often remote operators step in to control their self-driving cars. A Senate investigation reveals major gaps in transparency, raising safety and accountability concerns.

Wisconsin Governor Throws a Wrench in Age Verification Plans

Wisconsin Governor Tony Evers has vetoed a bill that would have required residents to verify their age before accessing adult content online, citing concerns over privacy and data security. This move comes as several other states have already implemented similar age check requirements. The veto has significant implications for the future of online age verification.

Apple's App Store Empire Under Siege: The Battle for the Future of Tech

The long-running feud between Apple and Epic Games has reached a boiling point, with Apple preparing to take its case to the Supreme Court. The tech giant is fighting to maintain control over its App Store, while Epic Games is pushing for more freedom for developers. The outcome could have far-reaching implications for the entire tech industry.

Tesla's Remote Parking Feature: The Investigation That Didn't Quite Park Itself

The US auto safety regulators have closed their investigation into Tesla's remote parking feature, but what does this mean for the future of autonomous driving? We dive into the details of the investigation and what it reveals about the technology. The National Highway Traffic Safety Administration found that crashes were rare and minor, but the investigation's closure doesn't necessarily mean the feature is completely safe.

Also Read

Overwatch 10th Anniversary Rewards Get Buff After Player Backlash

Blizzard responded to widespread player disappointment over Overwatch's underwhelming 10th anniversary event by announcing reduced grind requirements and additional free cosmetics. Game director Aaron Keller acknowledged the criticism was 'fair' and outlined improvements rolling out over the coming weeks.

Why Hyundai's 2025 Santa Fe Hybrid Is the Smart Family SUV Pick

Family SUV buyers in 2026 are shifting priorities from raw power to efficiency and daily practicality. The Hyundai Santa Fe Hybrid stands out by balancing affordability, fuel economy, and three-row usability without the usual compromises.

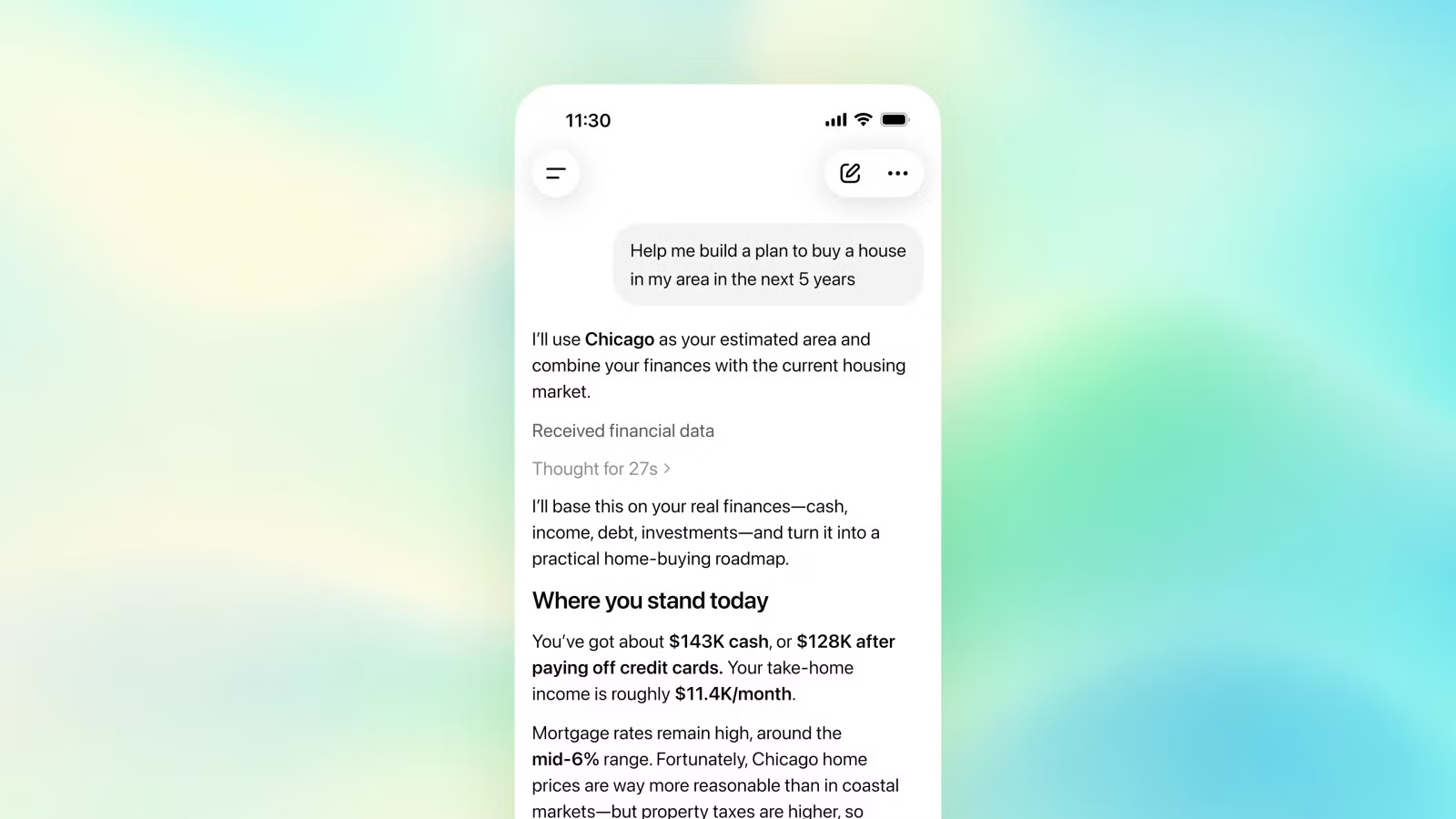

ChatGPT's New Bank-Link Feature: Privacy Costs Outweigh Convenience

OpenAI now lets ChatGPT Pro subscribers connect their bank accounts directly through Plaid. The feature promises budget help and spending insights. But sharing your complete transaction history with an AI chatbot creates serious privacy risks that most users haven't considered.