AI Vibe Coding Creates Hidden Risks for Home Assistant Users

Key Takeaways

- AI vibe coding tools like Claude Code and Codex let non-programmers create Home Assistant integrations

- Community-shared AI-generated integrations may contain hidden security vulnerabilities

- These integrations often request sensitive data like API keys and login credentials

The rise of AI coding assistants has unlocked a new era for smart home enthusiasts. Tools like Anthropic's Claude Code and OpenAI's Codex now let anyone describe what they want in plain English and get working code in return. For Home Assistant users, this means custom integrations are no longer limited to those who can write Python.

But there's a catch. These AI-generated integrations, often shared freely across Home Assistant forums and communities, may carry security risks that aren't obvious at first glance. Once installed, they run inside Home Assistant itself with no sandbox, so they can reach your entire smart home ecosystem.

How vibe coding changed Home Assistant development

Home Assistant integrations are written in Python. Traditionally, creating one required programming knowledge and familiarity with the platform's architecture. That barrier kept the number of custom components relatively small and the quality relatively consistent.

Vibe coding flipped that dynamic. If you want an integration that doesn't exist, you describe what it should do to Claude Code or Codex in natural language, and the AI writes a working component.

The AI tools have plenty of source material to work with. Thousands of open-source Home Assistant integrations exist, and the AI can reference these when generating new code. The result is that anyone with an idea can now build and share integrations with the community.

Some members of the Home Assistant community are already doing exactly this. Forum posts offer custom components that promise useful functionality. Installation takes moments. The integration appears to work as advertised.

The security problems lurking beneath the surface

Here's where things get risky. An integration that works isn't the same as an integration that's safe. AI-generated code can contain serious security flaws that aren't visible to the average user.

Many integrations request sensitive information to function. Login credentials. API keys. Access tokens. Official Home Assistant integrations ask for these too, so users don't think twice about providing them. But with AI-generated code from unknown authors, you're trusting that the person who prompted the AI, and the AI itself, handled your credentials safely.

The person who created the integration may not understand the code they're sharing. They described what they wanted. The AI delivered something that seemed to work. But without the ability to audit the code, they can't verify what else it might be doing.

Logicity's Take

Why AI-generated code has unique vulnerabilities

AI coding tools are trained on vast repositories of existing code. That includes both good practices and bad ones. The AI doesn't inherently know the difference. It generates what statistically fits the pattern of the request.

Common issues in AI-generated integrations include:

- Credentials stored in plain text rather than encrypted

- API keys logged to files that could be accessed remotely

- Missing input validation that allows injection attacks

- Overly broad permissions that expose more than necessary

- Outdated security patterns copied from old source code
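The logging problem in the list above is easy to picture. The Python sketch below is illustrative only. The `redact` and `log_setup` helpers and the `SENSITIVE_KEYS` set are hypothetical names, not part of Home Assistant's API. The code masks secret-looking values before a configuration dictionary reaches the log, and this is the pattern a reviewer would look for in place of logging the raw config. Home Assistant's own diagnostics platform ships a comparable helper, `async_redact_data`, for diagnostics dumps.

```python
import logging

# Keys treated as secrets in this sketch (an assumption, not an official list).
SENSITIVE_KEYS = {"password", "api_key", "access_token", "token", "username"}

_LOGGER = logging.getLogger(__name__)


def redact(data: dict) -> dict:
    """Return a copy of `data` with sensitive values masked, recursing into nested dicts."""
    clean = {}
    for key, value in data.items():
        if str(key).lower() in SENSITIVE_KEYS:
            clean[key] = "**REDACTED**"
        elif isinstance(value, dict):
            clean[key] = redact(value)
        else:
            clean[key] = value
    return clean


def log_setup(config: dict) -> None:
    """Log the configuration an integration was set up with, minus its secrets."""
    # Risky pattern to avoid: _LOGGER.debug("Setting up with %s", config)
    # would write the raw API key into Home Assistant's log file.
    _LOGGER.debug("Setting up with %s", redact(config))
```

For example, `redact({"host": "192.168.1.10", "api_key": "abc123"})` keeps the host and replaces the key with `**REDACTED**`, leaving the original dictionary untouched.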

A human developer with security training would catch many of these issues during code review. But vibe coders often can't perform that review. They don't know what to look for. The AI told them the code works, and it does. That's enough for them to share it.
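Automated checks can cover a small part of that review. The sketch below assumes Python 3.9+ and uses only the standard library's `ast` module. The function names and the lists of risky calls and secret-looking names are illustrative assumptions, not an official Home Assistant checklist. It flags `eval`/`exec`-style calls, shell-outs through `os` or `subprocess`, and log calls whose arguments reference a variable named like a password, key, or token. It will miss plenty and raise false positives, so a clean result means "nothing obvious," not "safe."

```python
import ast
from pathlib import Path

# Illustrative assumptions, not an official Home Assistant review checklist.
RISKY_CALLS = {"eval", "exec", "compile", "__import__"}
RISKY_ATTRS = {
    ("os", "system"),
    ("os", "popen"),
    ("subprocess", "run"),
    ("subprocess", "Popen"),
    ("subprocess", "call"),
    ("pickle", "loads"),
}
SECRET_WORDS = ("password", "api_key", "token", "secret")
LOG_METHODS = {"debug", "info", "warning", "error", "exception", "critical"}


def _mentions_secret(node: ast.AST) -> bool:
    """True if any variable or attribute name inside `node` looks like a secret."""
    for sub in ast.walk(node):
        if isinstance(sub, ast.Name):
            name = sub.id
        elif isinstance(sub, ast.Attribute):
            name = sub.attr
        else:
            continue
        if any(word in name.lower() for word in SECRET_WORDS):
            return True
    return False


def find_red_flags(source: str) -> list[str]:
    """Return warnings for suspicious calls in one Python source file, sorted by line."""
    found: list[tuple[int, str]] = []
    for node in ast.walk(ast.parse(source)):
        if not isinstance(node, ast.Call):
            continue
        func = node.func
        if isinstance(func, ast.Name) and func.id in RISKY_CALLS:
            found.append((node.lineno, f"call to {func.id}()"))
        elif isinstance(func, ast.Attribute):
            owner = func.value.id if isinstance(func.value, ast.Name) else None
            if (owner, func.attr) in RISKY_ATTRS:
                found.append((node.lineno, f"call to {owner}.{func.attr}()"))
            elif func.attr in LOG_METHODS and any(_mentions_secret(a) for a in node.args):
                # String literals are ignored; only names such as `api_key` count.
                found.append((node.lineno, "possible secret in log call"))
    return [f"line {line}: {message}" for line, message in sorted(found)]


def scan_integration(folder: str) -> dict[str, list[str]]:
    """Run find_red_flags over every .py file under a custom integration folder."""
    results = {}
    for path in sorted(Path(folder).rglob("*.py")):
        flags = find_red_flags(path.read_text(encoding="utf-8"))
        if flags:
            results[str(path)] = flags
    return results
```

A typical use would be `scan_integration("custom_components/example")`, where `example` is a placeholder folder name. Run it before restarting Home Assistant, then read each flagged line by hand.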

What's actually at stake

Your Home Assistant instance likely controls devices throughout your home. Smart locks. Security cameras. Garage doors. Climate systems. A compromised integration could give an attacker visibility into your daily patterns, the ability to unlock doors, or access to camera feeds.

Beyond direct control, integrations often connect to external services. A poorly coded integration might expose credentials for your cloud accounts, home network details, or personal information stored in connected services.

How to protect yourself

The safest approach is to stick with official integrations from the Home Assistant ecosystem. These go through review processes and have active maintainers who patch security issues.

If you want to use community integrations, look for those with active development histories, multiple contributors, and visible security discussions. A single-author repository with no issues or pull requests is a red flag.
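The checklist in that paragraph can be written down as a simple heuristic. This is a hypothetical sketch. `RepoSnapshot` and `red_flags` are made-up names, and the values are ones you would read off the repository page yourself. The 180-day cutoff for "active development" is an assumed number, since no threshold is given here.

```python
from dataclasses import dataclass

# Assumed cutoff for "active development"; pick your own.
STALE_AFTER_DAYS = 180


@dataclass
class RepoSnapshot:
    """Facts read by hand from a community integration's repository page."""
    contributors: int
    issues_and_prs: int
    days_since_last_commit: int
    has_security_discussion: bool


def red_flags(repo: RepoSnapshot) -> list[str]:
    """List the warning signs described above; an empty list means none were found."""
    flags = []
    if repo.contributors <= 1:
        if repo.issues_and_prs == 0:
            flags.append("single author with no issues or pull requests")
        else:
            flags.append("single contributor")
    if repo.days_since_last_commit > STALE_AFTER_DAYS:
        flags.append(f"no commits in over {STALE_AFTER_DAYS} days")
    if not repo.has_security_discussion:
        flags.append("no visible security discussion")
    return flags
```

An empty result is not proof that an integration is safe. It only means none of these particular warning signs showed up.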

For your own vibe-coded projects, treat them as experiments rather than production systems. Run them in isolated environments. Don't connect them to critical infrastructure like locks or alarms. And never share code you can't personally verify.

The bigger picture

Vibe coding isn't going away. It's making software development accessible to millions of people who couldn't participate before. That's genuinely valuable. But it's also creating a new category of risk: functional code written by people who don't understand what they've created.

For smart home platforms like Home Assistant, the community will need new norms around AI-generated contributions. Better review processes. Security scanning tools. Clear labeling of vibe-coded vs. traditionally developed integrations. Until those systems exist, users need to be more cautious about what they install.

Frequently Asked Questions

What is vibe coding?

Vibe coding refers to using AI tools like Claude Code or Codex to generate working code by describing what you want in plain English, without needing programming knowledge.

Are all AI-generated Home Assistant integrations dangerous?

No. Many work fine and are created by people who understand the output. The risk comes from code that hasn't been reviewed by someone who can identify security flaws.

How can I tell if a community integration is safe?

Look for integrations with multiple contributors, active development history, visible security discussions, and responsive maintainers. Single-author projects with no review history are riskier.

Can official Home Assistant integrations have security issues too?

Yes, but they go through review processes and have established channels for reporting and patching vulnerabilities. Community-shared vibe-coded integrations often lack these safeguards.

Should I stop using Home Assistant custom components entirely?

Not necessarily. Custom components extend Home Assistant's capabilities significantly. Just be selective about sources and avoid installing code from unknown authors who may not understand what they've generated.

Source: How-To Geek

Huma Shazia

Senior AI & Tech Writer

Related Articles

Browse all

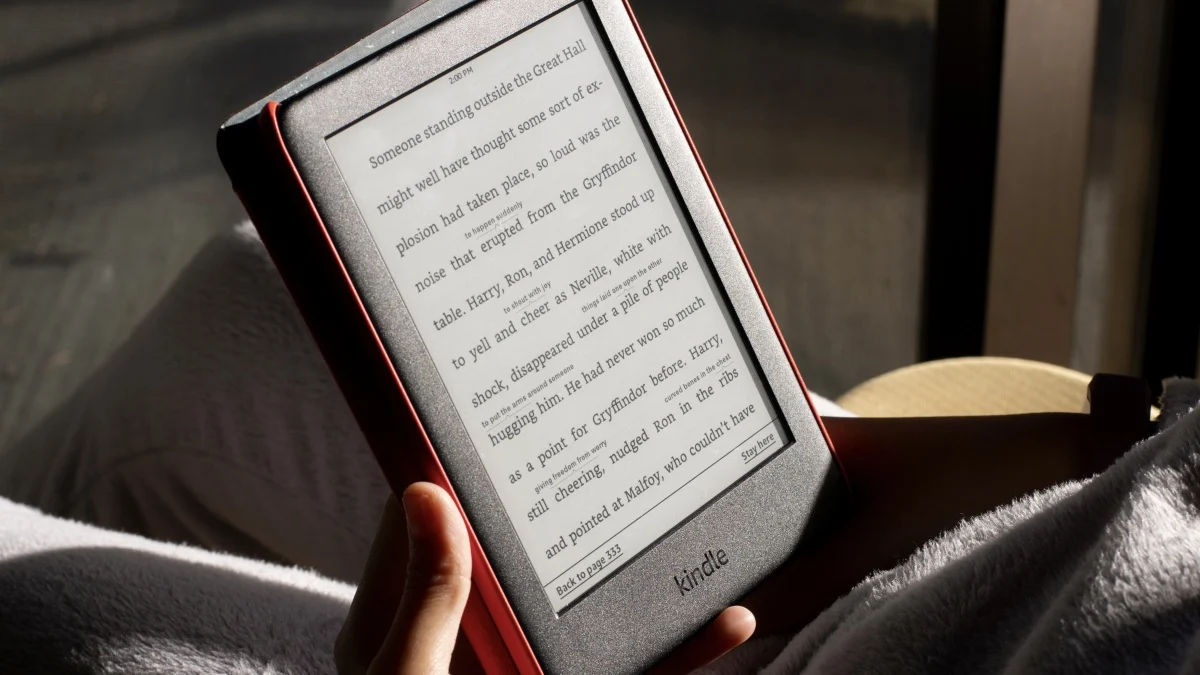

How to Jailbreak Your Kindle: Escape Amazon's Control Before They Brick Your E-Reader

Amazon is cutting off support for older Kindles starting May 2026, but you don't have to buy a new device. Jailbreaking your Kindle lets you install custom software like KOReader, read ePub files natively, and keep your e-reader alive for years to come.

X-Sense Smoke and CO Detectors at Home Depot: UL-Certified Alarms You Can Actually Trust

X-Sense just made their UL-certified smoke and carbon monoxide detectors available at Home Depot stores nationwide. The lineup includes wireless interconnected models that can link up to 24 units, 10-year sealed batteries, and smart features designed to cut down on those annoying false alarms that make people disable their detectors entirely.

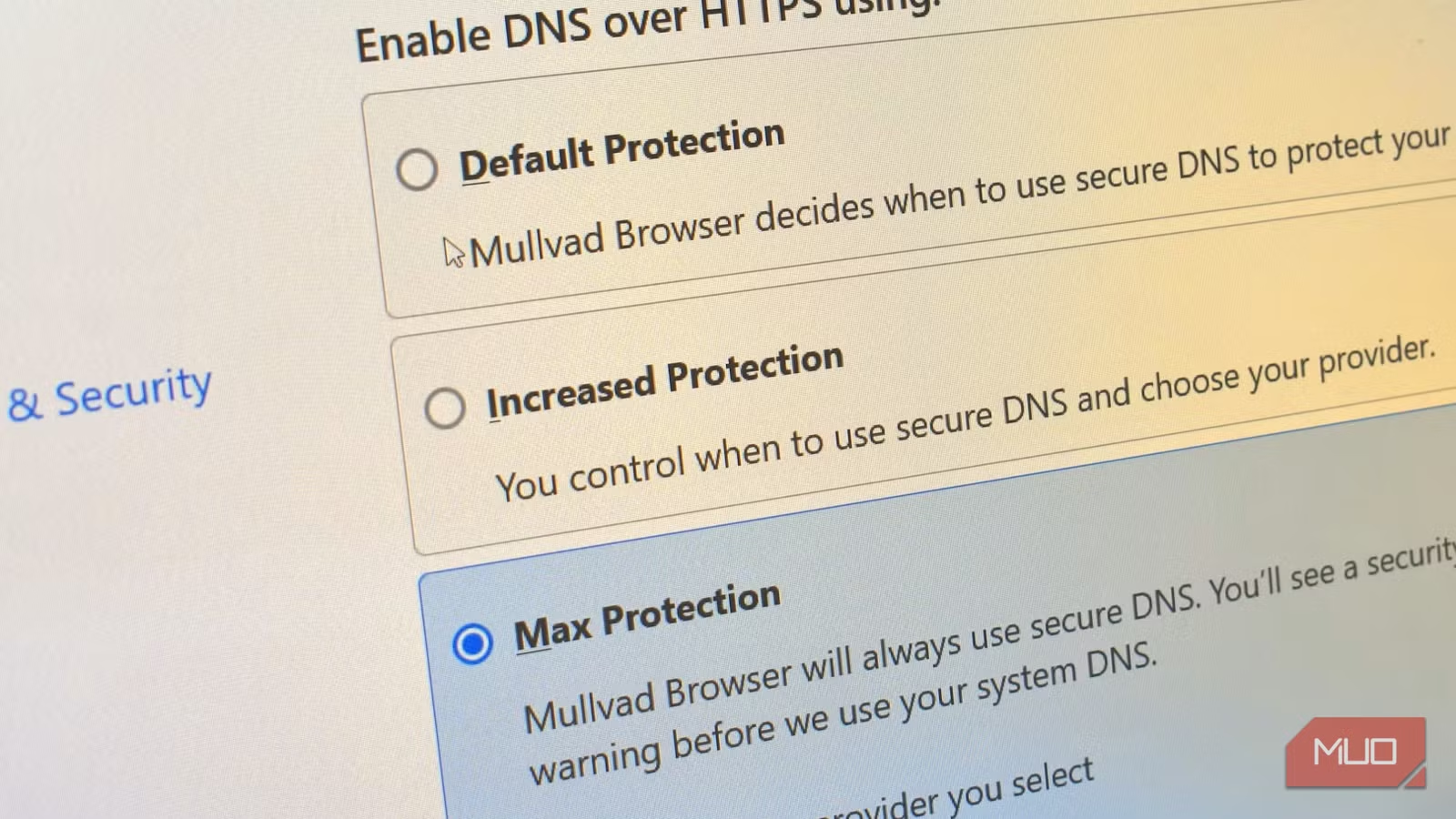

How to Change Your Browser's DNS Settings for Faster, Private Browsing in 2026

Your browser's default DNS settings are probably slowing you down and leaking your browsing history to your ISP. Here's why changing this one setting should be the first thing you do on any new device, and how to pick the right DNS provider for your needs.

Raspberry Pi at 15: Why the King of Single-Board Computers Is Losing Its Crown

After 15 years of dominating the hobbyist computing scene, the Raspberry Pi faces serious competition from cheaper alternatives, supply chain headaches, and a market that's evolved past its original mission. Here's what's happening and what it means for your next project.

Also Read

Why ChatGPT Feels Worse: You Outgrew It

A longtime ChatGPT user explains why the AI chatbot now feels limiting. After four years of daily use, the problem isn't that ChatGPT degraded. It's that power users developed needs it was never designed to meet.

Netflix Delays Narnia for Full Theatrical Release in 2027

Netflix pushed Greta Gerwig's 'The Magician's Nephew' from Thanksgiving 2026 to February 2027, expanding from a limited IMAX run to a full global theatrical window. The film won't hit streaming until April 2027, marking Netflix's most ambitious cinema commitment yet.

Can Python Predict a Spotify Hit? One Analysis of 30,000 Songs

A data journalist used Python and a Kaggle dataset of 30,000 Spotify tracks to explore what makes a song popular. The project demonstrates how accessible data science tools can help anyone analyze music trends.