Xiaomi's MiMo-V2.5-Pro Builds a Compiler in 4.3 Hours

Key Takeaways

- MiMo-V2.5-Pro completed a university-level compiler project in 4.3 hours with 672 tool calls

- The model uses 40-60% fewer tokens than Claude Opus 4.6 or Gemini 3.1 Pro for similar tasks

- With 1.02 trillion parameters and a 1 million token context window, it can run autonomously for over 11 hours

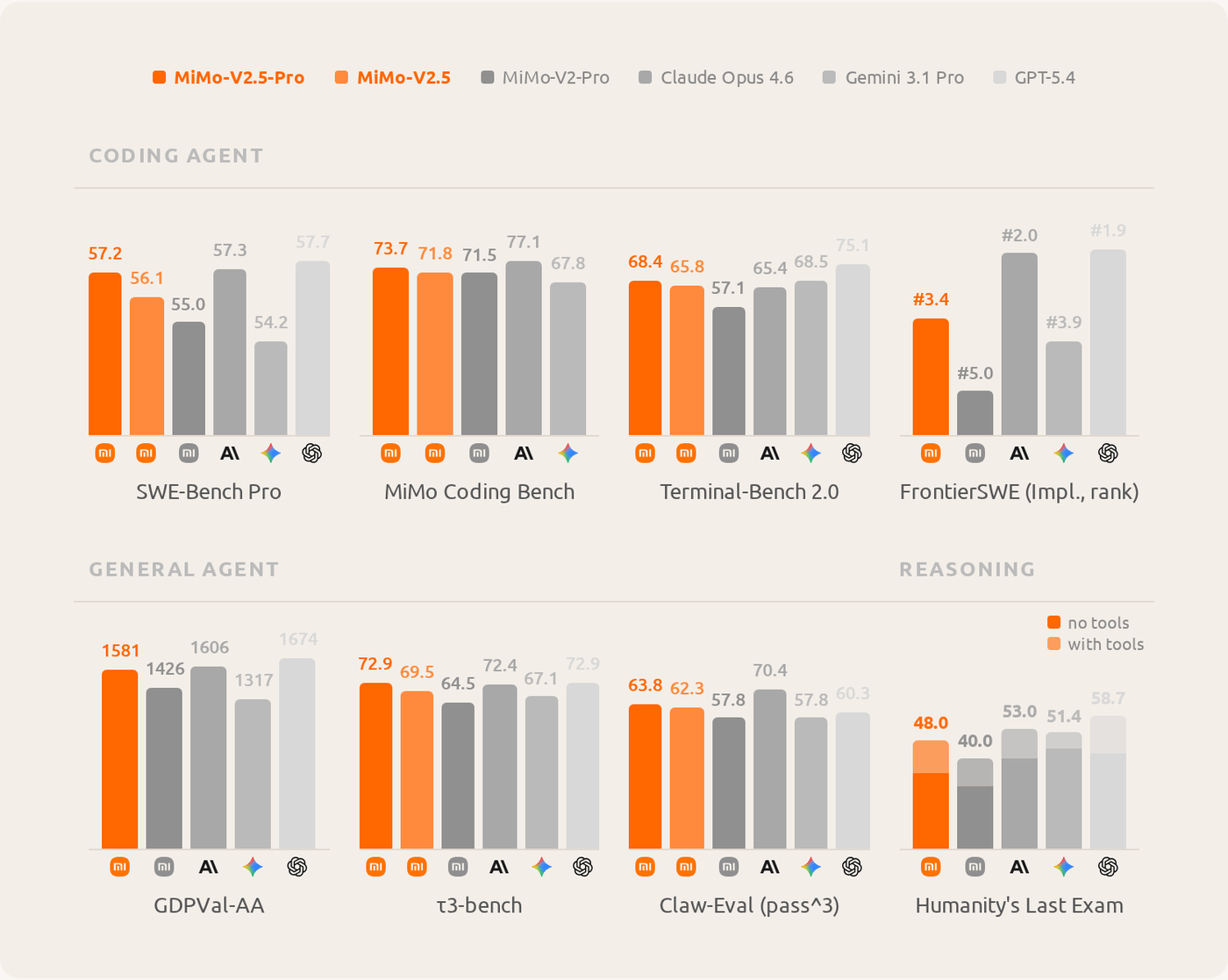

Xiaomi's AI lab has released MiMo-V2.5-Pro, an open-weight model that completed a university compiler project in 4.3 hours. The company says it lands close to Anthropic's Claude Opus 4.6 on coding benchmarks while burning through far fewer tokens.

The model is a mixture-of-experts architecture. It contains 1.02 trillion total parameters but activates only 42 billion per request. This design lets it handle tasks that run for hours without choking on compute costs.
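To put the two parameter counts in proportion, here is a small arithmetic sketch. It uses only the figures reported above; the function name is illustrative and not part of any Xiaomi tooling.

```python
# Figures as reported by Xiaomi in the article.
TOTAL_PARAMS = 1_020_000_000_000   # 1.02 trillion total parameters
ACTIVE_PARAMS = 42_000_000_000     # 42 billion activated per request


def active_fraction(active: int, total: int) -> float:
    """Share of the model's parameters used for a single request."""
    return active / total


fraction = active_fraction(ACTIVE_PARAMS, TOTAL_PARAMS)
print(f"Active share per request: {fraction:.1%}")
```

Roughly one parameter in 24 is active for any given request, which is where the compute savings of a mixture-of-experts design come from.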

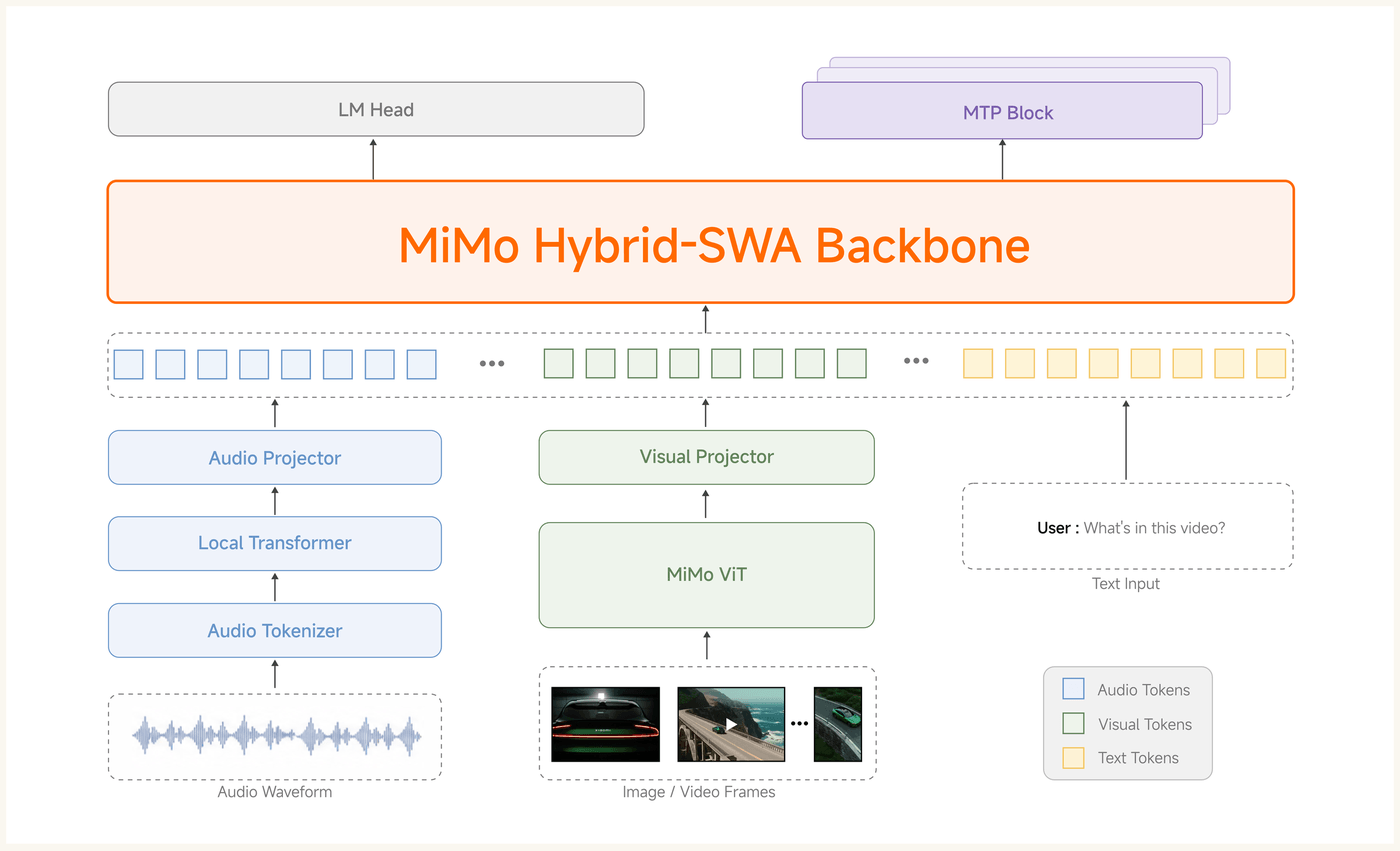

How the Architecture Works

MiMo-V2.5-Pro processes audio, images, and text through separate encoders. Each encoder translates its input into a format the language model can understand. All three feed into the same backbone, letting the model reason across modalities.
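The article does not describe how the encoders are built or how their outputs are combined. As a purely illustrative toy, assuming the common approach of mapping every modality to vectors of the same width and concatenating them into one sequence, the idea can be sketched like this. All function names, the vector width, and the encoding rules are hypothetical stand-ins, not Xiaomi's implementation.

```python
from typing import List

Vector = List[float]
EMBED_DIM = 4  # toy width; a real model would use thousands of dimensions


def encode_text(text: str) -> List[Vector]:
    """Toy text encoder: one vector per whitespace-separated word."""
    return [[float(len(word))] * EMBED_DIM for word in text.split()]


def encode_image(pixels: List[List[int]]) -> List[Vector]:
    """Toy image encoder: one vector per pixel row (stand-in for patches)."""
    return [[sum(row) / len(row)] * EMBED_DIM for row in pixels]


def encode_audio(samples: List[float], frame: int = 2) -> List[Vector]:
    """Toy audio encoder: one vector per fixed-size frame of samples."""
    frames = [samples[i:i + frame] for i in range(0, len(samples), frame)]
    return [[sum(f) / len(f)] * EMBED_DIM for f in frames]


def build_sequence(text: str, pixels: List[List[int]],
                   samples: List[float]) -> List[Vector]:
    """Concatenate all modalities into one sequence for a shared backbone."""
    return encode_text(text) + encode_image(pixels) + encode_audio(samples)


sequence = build_sequence("fix the bug", [[0, 255], [128, 128]],
                          [0.1, 0.3, 0.5, 0.7])
print(len(sequence), "vectors, each of width", EMBED_DIM)
```

The point of the sketch is only that, once every input is expressed as vectors of the same width, a single backbone can attend over text, image, and audio positions together.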

The context window is among the largest available. The main version handles up to 1 million tokens at once. A base version without additional training caps out at 256,000 tokens. For comparison, Claude's current context window sits at 200,000 tokens.

The Compiler Demo

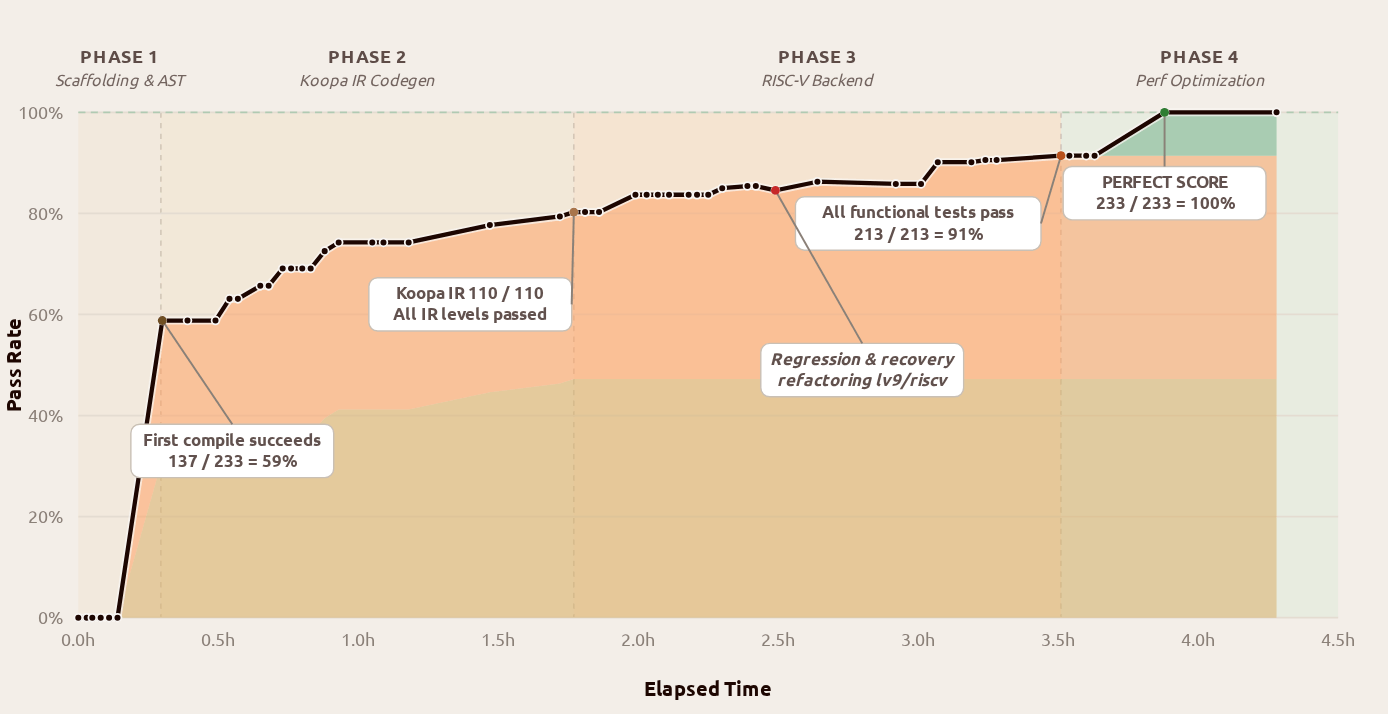

Xiaomi demonstrated the model's capabilities with three coding challenges. The headline demo involved building a compiler from a Peking University computer science course.

MiMo-V2.5-Pro worked through the compiler in four phases. Its first compile run already passed 137 of 233 tests. By the end, it hit 233 of 233, a perfect score on the hidden test suite.

The process took 4.3 hours and 672 tool calls. Xiaomi says the approach mattered as much as the result. The model first laid out the entire pipeline as scaffolding, then worked through each stage layer by layer. When a refactoring phase introduced a regression, the model diagnosed and fixed it on its own.

11 Hours of Autonomous Coding

The second demo pushed the model harder. MiMo-V2.5-Pro wrote a desktop video editor from just a few prompts. The final codebase hit roughly 8,000 lines.

This task ran for 11.5 hours with about 1,870 tool calls. The model worked without human intervention throughout, planning, writing, debugging, and refining the code on its own.
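For a sense of pace, the reported durations and tool-call counts from the first two demos can be turned into a rate. This is a back-of-the-envelope sketch using the article's figures; the video editor count is approximate in the source, so its rate is approximate too.

```python
# (hours, tool calls) as reported for each demo.
DEMOS = {
    "compiler": (4.3, 672),
    "video editor": (11.5, 1870),  # "about 1,870" in the source
}


def calls_per_hour(hours: float, calls: int) -> float:
    """Average number of tool calls per hour of runtime."""
    return calls / hours


for name, (hours, calls) in DEMOS.items():
    rate = calls_per_hour(hours, calls)
    seconds_between = 3600 / rate
    print(f"{name}: {rate:.0f} calls/hour, one every {seconds_between:.0f} s")
```

Both runs work out to roughly 160 tool calls per hour, or one call every 22 to 23 seconds on average.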

For the third demo, Xiaomi connected the model to a circuit simulator through Claude Code. The task was designing a voltage regulator. Within an hour, the result hit all six technical specifications.

Token Efficiency Claims

Xiaomi says MiMo-V2.5-Pro requires 40 to 60 percent fewer tokens than Claude Opus 4.6 or Gemini 3.1 Pro for comparable tasks. Fewer tokens mean lower API costs and faster completion times.
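A rough sketch of what that range would mean for a bill follows. The baseline token count and the price are hypothetical placeholders, and the calculation assumes the same per-token price for both models, which will not hold across real providers.

```python
# Hypothetical inputs chosen for illustration only.
BASELINE_TOKENS = 10_000_000     # tokens a comparison model might use on a task
PRICE_PER_MILLION_USD = 10.0     # assumed flat price per million tokens


def tokens_after_reduction(baseline_tokens: int, reduction: float) -> float:
    """Tokens used if the task needs `reduction` (0.0 to 1.0) fewer tokens."""
    return baseline_tokens * (1.0 - reduction)


def cost_usd(tokens: float, price_per_million: float) -> float:
    """Cost of a given token count at a flat per-million price."""
    return tokens / 1_000_000 * price_per_million


baseline_cost = cost_usd(BASELINE_TOKENS, PRICE_PER_MILLION_USD)
print(f"Baseline: ${baseline_cost:.2f}")
for reduction in (0.40, 0.60):  # the range Xiaomi claims
    tokens = tokens_after_reduction(BASELINE_TOKENS, reduction)
    cost = cost_usd(tokens, PRICE_PER_MILLION_USD)
    print(f"{reduction:.0%} fewer tokens: ${cost:.2f}")
```

Under these assumptions a task that costs $100 at baseline would cost between $40 and $60, so the saving scales directly with the claimed token reduction.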

These are internal benchmarks, not independent tests. The efficiency gap, if it holds in real-world use, would matter for companies running long autonomous tasks where token costs add up fast.

Open Weights vs Closed APIs

MiMo-V2.5-Pro is released with open weights. This means developers can download and run the model on their own hardware rather than paying per-token API fees.

Running a 1 trillion parameter model requires serious compute. Most organizations would need multi-GPU clusters. But for companies with that infrastructure, open weights offer control over data, customization options, and predictable costs.
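To see why, consider a rough estimate of the memory needed just to hold the weights. The article does not say what numeric precision the weights ship in, so the bytes-per-parameter values below are assumptions, as is the 80 GB accelerator size. The estimate ignores the key-value cache, activations, and any runtime overhead, so real requirements would be higher.

```python
import math

TOTAL_PARAMS = 1_020_000_000_000  # 1.02 trillion, as reported
GPU_MEMORY_GB = 80                # hypothetical accelerator size

# Assumed storage precisions; the released format is not stated in the article.
BYTES_PER_PARAM = {"16-bit": 2.0, "8-bit": 1.0, "4-bit": 0.5}


def weights_gb(params: int, bytes_per_param: float) -> float:
    """Memory for the weights alone, in decimal gigabytes."""
    return params * bytes_per_param / 1e9


def min_gpus(total_gb: float, gpu_gb: float) -> int:
    """Smallest GPU count whose combined memory fits the weights."""
    return math.ceil(total_gb / gpu_gb)


for label, size in BYTES_PER_PARAM.items():
    gb = weights_gb(TOTAL_PARAMS, size)
    print(f"{label}: {gb:,.0f} GB, at least {min_gpus(gb, GPU_MEMORY_GB)} GPUs")
```

Even at an aggressive 4-bit precision, the weights alone would span several high-end accelerators under these assumptions.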

What This Means for AI Coding Tools

Current AI coding assistants like GitHub Copilot or Cursor work well for short tasks: autocompleting a function, explaining a code block, fixing a bug. They struggle with multi-hour projects that require sustained planning.

MiMo-V2.5-Pro is built for the opposite use case. Its 1 million token context and mixture-of-experts efficiency let it tackle projects that take hours and thousands of tool calls. If the demos reflect real capability, this is a different category of coding assistant.

Frequently Asked Questions

What is MiMo-V2.5-Pro?

MiMo-V2.5-Pro is Xiaomi's new open-weight AI model with 1.02 trillion parameters, designed for long-running autonomous coding tasks.

How does MiMo-V2.5-Pro compare to Claude Opus?

According to Xiaomi's internal benchmarks, MiMo-V2.5-Pro lands close to Claude Opus 4.6 on coding tasks while using 40-60% fewer tokens.

Can I run MiMo-V2.5-Pro locally?

Yes, the model has open weights. However, running a 1 trillion parameter model requires significant GPU infrastructure.

What is the context window size for MiMo-V2.5-Pro?

The main version handles up to 1 million tokens. The base version, without additional training, caps out at 256,000 tokens.

What tasks has MiMo-V2.5-Pro completed in demos?

Xiaomi showed it building a complete compiler in 4.3 hours, writing an 8,000-line video editor in 11.5 hours, and designing a voltage regulator circuit in under an hour.

Source: The Decoder / Jonathan Kemper

Manaal Khan

Tech & Innovation Writer
