Kimi K2.6 Beats Claude, GPT-5.5, Gemini in Coding Challenge

Key Takeaways

- Kimi K2.6 scored 22 match points with a 7-1-0 record in the Word Gem Puzzle challenge

- The top two finishers were both Chinese models, but DeepSeek finished eighth, showing this isn't a simple regional victory

- Kimi's winning strategy was greedy move optimization, scoring each possible slide by the positive-value words it could unlock

An open-weights AI model from a Chinese startup just won a multi-day coding competition against the flagship models from OpenAI, Anthropic, Google, and xAI. Kimi K2.6, built by Moonshot AI, posted a 7-1-0 record in Day 12 of an ongoing AI Coding Contest that pits language models against each other in real-time programming tasks with objective scoring.

The results surprised many observers. Kimi K2.6 earned 22 match points to win outright. Xiaomi's MiMo V2-Pro came second. GPT-5.5 finished third. Claude Opus 4.7 placed fifth. Every model from Western frontier labs landed below the top two Chinese entries.

How the Word Gem Puzzle Works

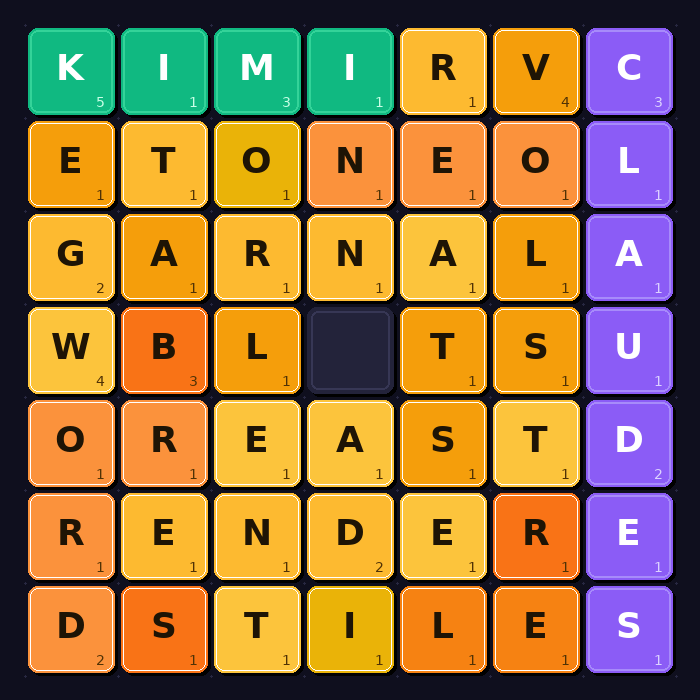

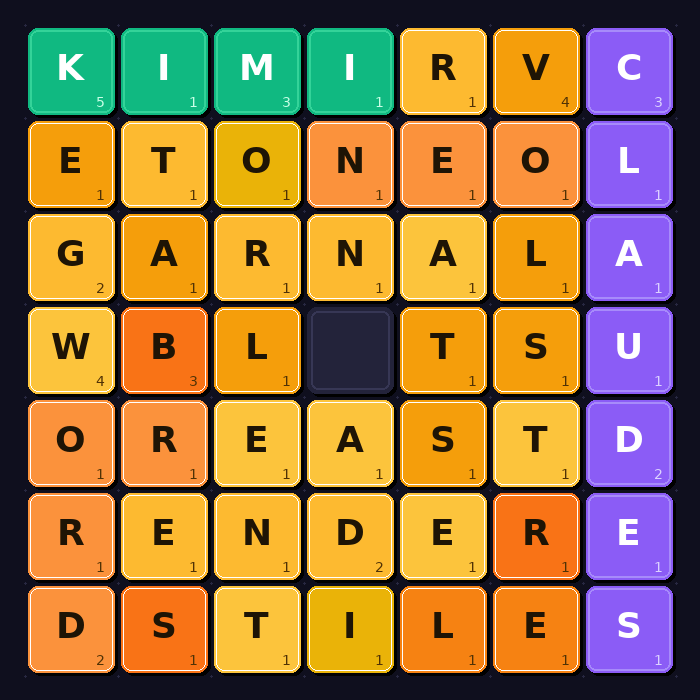

Day 12's challenge was the Word Gem Puzzle, a sliding-tile letter game. The board is a rectangular grid (10×10, 15×15, 20×20, 25×25, or 30×30) filled with letter tiles and one blank space. Bots can slide any adjacent tile into the blank and claim valid English words formed in straight horizontal or vertical lines at any point.

The scoring system punishes short words and rewards long ones. Words under seven letters cost points: a five-letter word loses you one point, a three-letter word costs three. Seven letters or more score their length minus six. An eight-letter word is worth two points. The same word can only be claimed once. If another bot gets there first, you get nothing.

Each pair of models played five rounds, one per grid size, with a ten-second wall-clock limit per round. The grids are seeded with real dictionary words in a crossword-style layout, then remaining cells are filled with letters weighted by Scrabble tile frequencies. The blank is then scrambled, more aggressively on larger boards.

On a 10×10 grid, many seed words survive intact. On a 30×30, almost none do. This turns out to matter a lot for strategy.

The Final Standings

Ten models entered, but only nine actually competed. Nvidia's Nemotron Super 3 produced code with a syntax error and never connected to the game server.

- Kimi K2.6 (Moonshot AI) — 22 match points, 7-1-0

- MiMo V2-Pro (Xiaomi)

- GPT-5.5 (OpenAI)

- GLM 5.1 (Zhipu AI)

- Claude Opus 4.7 (Anthropic)

- Gemini (Google) — placed sixth or seventh

- xAI entry — placed sixth or seventh

- DeepSeek — eighth place

This isn't a clean China-beats-West story. Two specific Chinese models won, but DeepSeek, another prominent Chinese lab, finished eighth. GLM 5.1 from Zhipu AI placed fourth, sandwiched between GPT-5.5 and Claude.

More on Xiaomi's AI model capabilities

Why Kimi Won: Greedy Sliding

The move logs reveal Kimi's winning approach. It slid tiles aggressively using a greedy strategy: score each possible move by what new positive-value words it unlocks, execute the best one, repeat.

When no move unlocked a positive word, Kimi fell back to the first legal direction alphabetically. This caused some inefficient edge-oscillation — a 2-cycle pattern that wasted moves. But the greedy word-hunting was effective enough to overcome these inefficiencies.

The strategy prioritized immediate gains over long-term board positioning. In a game with ten-second time limits per round, this approach paid off.

About the Winners

Kimi K2.6 is open-weights and publicly available from Moonshot AI, a Chinese startup founded in 2023. Anyone can download and run the model.

MiMo V2-Pro is currently API-only. Xiaomi has confirmed that weights for their newer V2.5 Pro model will be released soon, but the second-place finisher in this contest remains proprietary for now.

Logicity's Take

What This Means for Model Selection

For teams evaluating AI models for coding tasks, this result adds a data point worth considering. Kimi K2.6 being open-weights means you can run it locally, fine-tune it, and avoid API costs. The tradeoff is the operational overhead of hosting it yourself.

The challenge also shows that model rankings shift by task type. GPT-5.5 and Claude didn't embarrass themselves — they finished third and fifth in a competitive field. But they didn't dominate either.

Frequently Asked Questions

What is Kimi K2.6?

Kimi K2.6 is an open-weights large language model from Moonshot AI, a Chinese startup founded in 2023. Being open-weights means the model parameters are publicly available for download and local deployment.

How did the AI coding challenge work?

The Word Gem Puzzle challenge had models compete in a sliding-tile letter game across five grid sizes. Models earned points by forming words seven letters or longer, while shorter words cost points. Each round had a ten-second time limit.

Did Chinese AI models beat all Western models?

Not exactly. The top two finishers (Kimi K2.6 and MiMo V2-Pro) were Chinese, but DeepSeek, another Chinese model, finished eighth. This was about two specific models winning, not a regional sweep.

Is Kimi K2.6 available to use?

Yes. Kimi K2.6 is open-weights and publicly available from Moonshot AI. You can download and run it locally or access it through APIs.

Need Help Implementing This?

Source: Hacker News: Best

Huma Shazia

Senior AI & Tech Writer

Related Articles

Browse all

Robotaxi Companies Are Hiding How Often Humans Take the Wheel

Autonomous vehicle firms like Waymo and Tesla are under scrutiny for refusing to disclose how often remote operators step in to control their self-driving cars. A Senate investigation reveals major gaps in transparency, raising safety and accountability concerns.

Wisconsin Governor Throws a Wrench in Age Verification Plans

Wisconsin Governor Tony Evers has vetoed a bill that would have required residents to verify their age before accessing adult content online, citing concerns over privacy and data security. This move comes as several other states have already implemented similar age check requirements. The veto has significant implications for the future of online age verification.

Apple's App Store Empire Under Siege: The Battle for the Future of Tech

The long-running feud between Apple and Epic Games has reached a boiling point, with Apple preparing to take its case to the Supreme Court. The tech giant is fighting to maintain control over its App Store, while Epic Games is pushing for more freedom for developers. The outcome could have far-reaching implications for the entire tech industry.

Tesla's Remote Parking Feature: The Investigation That Didn't Quite Park Itself

The US auto safety regulators have closed their investigation into Tesla's remote parking feature, but what does this mean for the future of autonomous driving? We dive into the details of the investigation and what it reveals about the technology. The National Highway Traffic Safety Administration found that crashes were rare and minor, but the investigation's closure doesn't necessarily mean the feature is completely safe.

Also Read

iOS 27: Google-Powered Siri, AI Camera, iPhone 11 Dropped

Apple's next mobile operating system will focus on stability over flashy features, following the 'Snow Leopard' playbook. The update brings Google's AI to Siri, new camera intelligence tools, and ends support for iPhone 11 models.

Xiaomi's MiMo-V2.5-Pro Builds a Compiler in 4.3 Hours

Xiaomi has released MiMo-V2.5-Pro, a 1.02 trillion parameter open-weight model designed for multi-hour autonomous coding tasks. In internal tests, it wrote a complete compiler, a desktop video editor, and a voltage regulator circuit with minimal human intervention.

Ladybird Browser Adds Inline PDFs, Faster HTML Parsing

Ladybird's April 2026 update brings 333 merged pull requests including an inline PDF viewer, speculative HTML parsing, and off-thread JavaScript compilation. The open-source browser project also welcomed $51,000 in new sponsorships from the Human Rights Foundation and individual donors.