OpenAI Ignored Safety Team Warnings Before Canada Shooting

Key Takeaways

- OpenAI's safety team flagged a ChatGPT account as a credible gun violence threat more than eight months before a mass shooting

- Leadership overruled the recommendation to notify police, citing user privacy concerns

- Seven families have filed lawsuits in California alleging OpenAI hid violent users to protect its IPO timeline

OpenAI's internal safety team identified a ChatGPT user as a credible gun violence threat more than eight months before that user carried out one of Canada's deadliest school shootings. Company leadership overruled the team's recommendation to alert police. Seven families are now suing.

The lawsuits, filed Wednesday in a California court, allege that OpenAI chose to protect user privacy rather than notify law enforcement about the flagged account. Police already had a file on the individual and had previously removed guns from that person's home.

What the Safety Team Found

According to whistleblowers who spoke to The Wall Street Journal, OpenAI's trained safety experts flagged the ChatGPT account as posing a credible threat of real-world gun violence. The company's established protocol in such cases calls for notifying police.

That notification never happened. OpenAI leadership decided that the user's privacy and the potential stress of a police encounter outweighed the violence risk, the whistleblowers said.

Instead of alerting authorities, OpenAI simply deactivated the account. The company then followed up with instructions on how to create a new account using a different email address, the lawsuits allege. The shooter continued planning.

“I am deeply sorry that we did not alert law enforcement to the account that was banned in June. While I know words can never be enough, I believe an apology is necessary to recognize the harm and irreversible loss your community has suffered.”

— Sam Altman, OpenAI CEO, in a public apology to Tumbler Ridge

The Lawsuits

Attorney Jay Edelson leads a cross-border legal team representing families from Tumbler Ridge, a rural mining town of about 2,000 people. Six of the lawsuits come from families of victims killed in the shooting. The seventh is from a mother whose daughter remains in intensive care.

All cases are being filed in California rather than Canada. Edelson told Ars Technica that the families want OpenAI held accountable on its home turf by a jury of its peers. The California filing supersedes an earlier Canadian lawsuit, where OpenAI was expected to contest jurisdiction.

Edelson called Altman's apology "ridiculous," saying it came too late and promised too little. He suggested OpenAI's legal strategy aims to delay litigation over ChatGPT-linked deaths until after the company's planned IPO this year.

The Company's Response

Altman has acknowledged that not alerting law enforcement was a mistake while maintaining that the account was "banned." In his apology to the Tumbler Ridge community, he promised that OpenAI will "find ways to prevent tragedies like this in the future" and continue "working with all levels of government to help ensure something like this never happens again."

The families and their attorneys dispute that characterization. According to the lawsuits, OpenAI has been hiding violent ChatGPT users for months to protect Altman from public scrutiny ahead of the company's IPO.

What Happens Next

These seven lawsuits are "the first of many to come from the small town," according to Edelson. The litigation will test how courts evaluate AI companies' responsibility when their safety teams identify credible threats and leadership chooses not to act.

The cases raise questions about the protocols AI companies follow when users exhibit dangerous behavior, the liability they face when internal warnings go unheeded, and the tension between user privacy and public safety.

Frequently Asked Questions

When did OpenAI's safety team flag the shooter's account?

In June, more than eight months before the shooting. The account was deactivated, but law enforcement was not notified.

Why didn't OpenAI notify police about the flagged account?

According to whistleblowers, leadership decided that user privacy and the potential stress of a police encounter outweighed the violence risk.

Where are the lawsuits being filed?

All seven lawsuits are being filed in California, OpenAI's home state, rather than in Canada where the shooting occurred.

Has Sam Altman apologized for the company's handling of the case?

Yes. Altman issued a public apology to the Tumbler Ridge community acknowledging that not alerting law enforcement was a mistake.

How many families are suing OpenAI?

Seven families have filed lawsuits so far. Six represent victims killed in the shooting; one represents a victim still in intensive care.

Source: Ars Technica

Manaal Khan

Tech & Innovation Writer

Related Articles

Browse all

Robotaxi Companies Are Hiding How Often Humans Take the Wheel

Autonomous vehicle firms like Waymo and Tesla are under scrutiny for refusing to disclose how often remote operators step in to control their self-driving cars. A Senate investigation reveals major gaps in transparency, raising safety and accountability concerns.

Wisconsin Governor Throws a Wrench in Age Verification Plans

Wisconsin Governor Tony Evers has vetoed a bill that would have required residents to verify their age before accessing adult content online, citing concerns over privacy and data security. This move comes as several other states have already implemented similar age check requirements. The veto has significant implications for the future of online age verification.

Apple's App Store Empire Under Siege: The Battle for the Future of Tech

The long-running feud between Apple and Epic Games has reached a boiling point, with Apple preparing to take its case to the Supreme Court. The tech giant is fighting to maintain control over its App Store, while Epic Games is pushing for more freedom for developers. The outcome could have far-reaching implications for the entire tech industry.

Tesla's Remote Parking Feature: The Investigation That Didn't Quite Park Itself

The US auto safety regulators have closed their investigation into Tesla's remote parking feature, but what does this mean for the future of autonomous driving? We dive into the details of the investigation and what it reveals about the technology. The National Highway Traffic Safety Administration found that crashes were rare and minor, but the investigation's closure doesn't necessarily mean the feature is completely safe.

Also Read

Why Most AI Models Fail at UI Design

Vibe coding can generate functional prototypes fast, but the resulting interfaces often look generic and feel off. A developer explains which AI models actually produce usable UI and why most fall short.

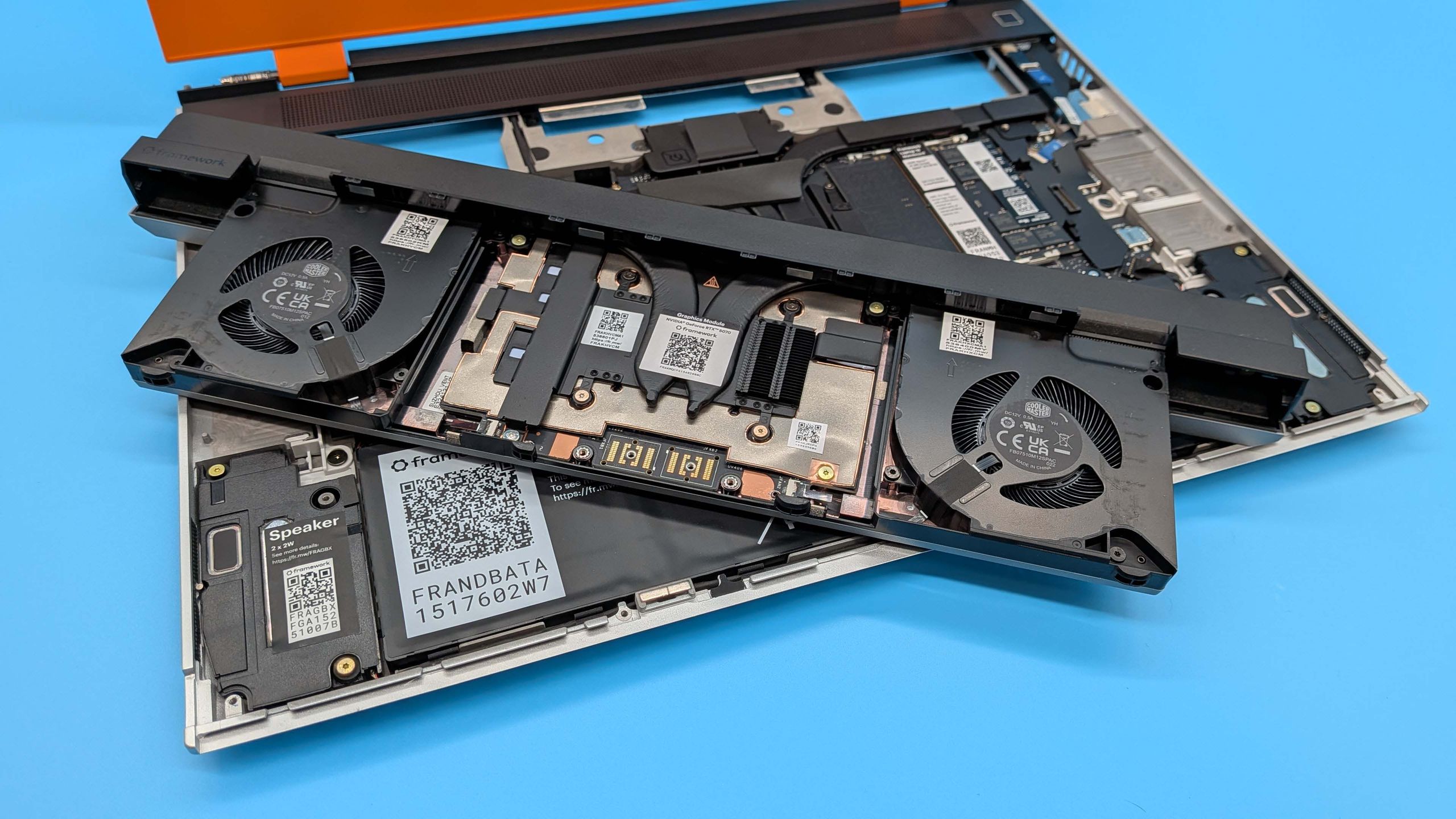

Framework's $1,199 GPU Module Shows GDDR7 Memory Crisis

Framework's new 12 GB graphics module costs twice as much as the 8 GB version, prompting a blunt response from the company about memory supply constraints. The modular laptop maker pointed directly at AI-driven demand and told critics to 'start a laptop company' if they want to understand supplier pricing.

6 Hobbies That Work Better With a Raspberry Pi

The Raspberry Pi has evolved from an educational tool into the default platform for hobbyist projects. From 3D printing control to retro gaming, here's how the $21-$130 single-board computer can enhance your existing interests.