Microsoft Unleashes 100+ AI Agents to Hunt Windows Bugs

Key Takeaways

- MDASH uses 100+ specialized AI agents that argue with each other about whether vulnerabilities are real

- The system found 16 new Windows vulnerabilities, disclosed on Patch Tuesday, May 12, 2026, including 4 critical remote code execution flaws

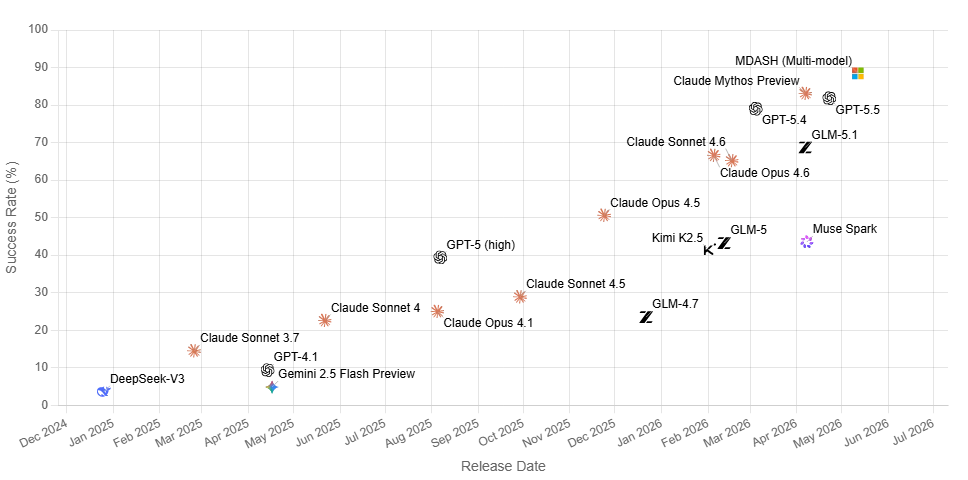

- MDASH scored 88.45% on the CyberGym benchmark, the highest result to date

What Is MDASH?

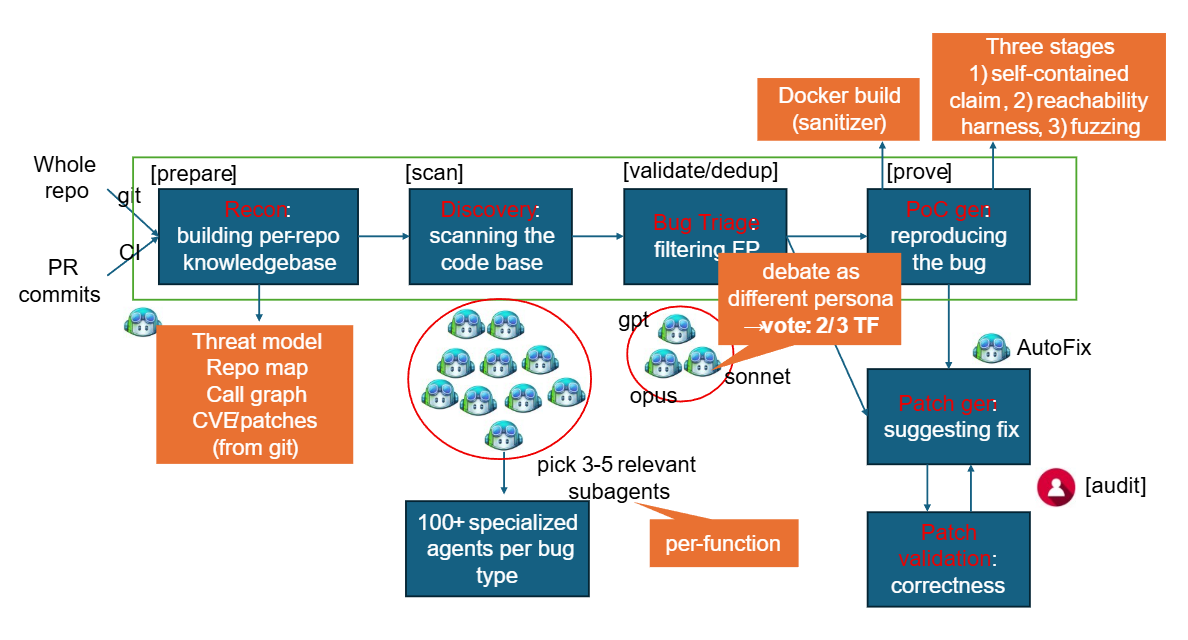

Microsoft has built a security system that sounds like a courtroom drama. Called MDASH (Multi-Model Agentic Scanning Harness), it orchestrates more than 100 specialized AI agents across multiple AI models. These agents don't just scan code. They argue with each other about what they find.

Unlike single-model approaches, MDASH runs what Microsoft describes as an ensemble of frontier and distilled models. The system is model-agnostic. When a new AI model comes out, Microsoft can test it against previous ones just by changing configuration settings.
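Microsoft hasn't published how that configuration works, so the following is only a minimal sketch of the idea. The registry, the `auditor_model` key, and both toy "models" are hypothetical stand-ins: a model is chosen by name from a config, and the same labeled samples are scored against whichever one is selected.

```python
from typing import Callable, Dict, List, Tuple

# Hypothetical stand-ins for real models: each takes a code snippet and
# returns True if it judges the snippet to be vulnerable.
def model_a(snippet: str) -> bool:
    return "memcpy" in snippet

def model_b(snippet: str) -> bool:
    return "memcpy" in snippet and "len_checked" not in snippet

# Hypothetical registry mapping a config name to a model.
MODEL_REGISTRY: Dict[str, Callable[[str], bool]] = {
    "model-a": model_a,
    "model-b": model_b,
}

def accuracy(config: Dict[str, str], samples: List[Tuple[str, bool]]) -> float:
    """Score whichever model the config names against labeled samples."""
    model = MODEL_REGISTRY[config["auditor_model"]]
    hits = sum(model(snippet) == label for snippet, label in samples)
    return hits / len(samples)

# Toy labeled data: (snippet, is_vulnerable)
SAMPLES = [
    ("memcpy(dst, src, n);", True),
    ("len_checked(n); memcpy(dst, src, n);", False),
    ("return 0;", False),
]

# Swapping models is a one-line config change; the harness stays the same.
old_score = accuracy({"auditor_model": "model-a"}, SAMPLES)
new_score = accuracy({"auditor_model": "model-b"}, SAMPLES)
print(f"model-a: {old_score:.2f}, model-b: {new_score:.2f}")
```

The point of the design is that the evaluation code never names a specific model, so comparing a newcomer against its predecessors needs no code changes.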

How AI Agents Debate Each Other

MDASH works in four stages. In the first, it analyzes source code and maps out potential attack surfaces. In the second, specialized auditor agents scan that code for suspicious areas.

The third stage is where things get interesting. A second group of agents, which Microsoft calls "debaters," argue for and against the exploitability of each finding. Think of it as prosecution versus defense, but for code vulnerabilities. In the final stage, once duplicates are merged, Evidence Leader agents try to trigger each vulnerability with specific inputs.
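The flow described above can be sketched in a few lines of Python. This is an illustration only, not Microsoft's implementation: every function name, the `Finding` type, and the string-matching "agents" are hypothetical, and real agents would be model calls rather than simple rules.

```python
from dataclasses import dataclass

@dataclass
class Finding:
    location: str
    description: str
    votes_for: int = 0
    votes_against: int = 0
    reproduced: bool = False

def map_attack_surface(source_files):
    """Stage 1: keep files that handle untrusted input (toy rule: calls recv)."""
    return [name for name, text in source_files.items() if "recv" in text]

def audit(source_files, surface):
    """Stage 2: auditors flag suspicious lines (toy rule: any memcpy)."""
    findings = []
    for name in surface:
        for lineno, line in enumerate(source_files[name].splitlines(), start=1):
            if "memcpy" in line:
                findings.append(Finding(f"{name}:{lineno}", "possible unchecked copy"))
    return findings

def debate(finding, debaters):
    """Stage 3: debaters vote for or against exploitability; majority wins."""
    for debater in debaters:
        if debater(finding):
            finding.votes_for += 1
        else:
            finding.votes_against += 1
    return finding.votes_for > finding.votes_against

def deduplicate(findings):
    """Merge findings that point at the same location."""
    unique = {}
    for finding in findings:
        unique.setdefault(finding.location, finding)
    return list(unique.values())

def validate(finding, try_trigger):
    """Stage 4: an evidence agent tries to trigger the bug with a crafted input."""
    finding.reproduced = try_trigger(finding)
    return finding.reproduced

def run_pipeline(source_files, debaters, try_trigger):
    surface = map_attack_surface(source_files)
    candidates = audit(source_files, surface)
    survivors = [f for f in candidates if debate(f, debaters)]
    return [f for f in deduplicate(survivors) if validate(f, try_trigger)]

if __name__ == "__main__":
    files = {
        "net.c": "n = recv(s, buf, len);\nmemcpy(dst, buf, n);\n",
        "ui.c": "memcpy(a, b, 4);\n",
    }
    debaters = [lambda f: True, lambda f: True, lambda f: False]
    for finding in run_pipeline(files, debaters, lambda f: True):
        print(finding.location, finding.description)
```

In this toy run, only the copy in `net.c` survives: `ui.c` never reaches the auditors because it isn't on the mapped attack surface, and the remaining finding wins its debate two votes to one before being reproduced.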

The system also accepts plugins that let security experts feed in domain-specific knowledge. Kernel calling conventions, IPC trust boundaries, and other technical details that no foundation model would know on its own can be added to improve detection.
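What such a plugin interface might look like is not public. As a rough sketch under that caveat, a plugin can be modeled as a function that inspects a code snippet and returns a note for the agents. The `register` decorator, the two example plugins, and their matching rules are all invented for illustration.

```python
from typing import Callable, List, Optional

# A plugin inspects a code snippet and returns a note for the agents, or None.
Plugin = Callable[[str], Optional[str]]
PLUGINS: List[Plugin] = []

def register(plugin: Plugin) -> Plugin:
    """Add a plugin to the (hypothetical) global plugin list."""
    PLUGINS.append(plugin)
    return plugin

@register
def ipc_trust_boundary(snippet: str) -> Optional[str]:
    # Toy rule: anything named like an IPC handler is attacker-reachable.
    if "ipc_handler" in snippet:
        return "Input crosses an IPC trust boundary; treat the caller as untrusted."
    return None

@register
def kernel_calling_convention(snippet: str) -> Optional[str]:
    # Toy rule: illustrative only, not a statement about any real kernel.
    if "syscall_entry" in snippet:
        return "Arguments arrive from user mode; pointers must be validated."
    return None

def build_agent_context(snippet: str) -> dict:
    """Attach every applicable expert note to the code an agent will review."""
    notes = [note for plugin in PLUGINS if (note := plugin(snippet)) is not None]
    return {"code": snippet, "domain_notes": notes}

context = build_agent_context("void ipc_handler(msg *m) { parse(m); }")
print(context["domain_notes"])
```

Keeping expert knowledge in small, separate functions means a specialist can add a rule without touching the scanning agents themselves.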

The Vulnerabilities Found

Microsoft classifies four of the 16 discovered vulnerabilities as critical. These include remote code execution flaws in:

- tcpip.sys kernel component

- IKEv2 service (ikeext.dll)

- netlogon.dll

- dnsapi.dll

Ten of the 16 vulnerabilities affect kernel mode. Most are accessible from the network without authentication, which makes them particularly dangerous.

Microsoft points out that its own code base is especially hard to audit. Windows, Hyper-V, and Azure are proprietary, so their source code isn't part of the public training data that AI models learn from. That makes automated scanning more challenging and, arguably, more necessary.

Benchmark Performance Comes With a Caveat

On the public CyberGym benchmark, which contains 1,507 real vulnerabilities, MDASH scored 88.45%. That's the top result on the leaderboard, roughly five percentage points ahead of the next best model.
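To put the percentage in absolute terms, here is a quick back-of-the-envelope calculation. It assumes the score is simply the share of the 1,507 benchmark instances that were solved, which the article does not confirm; under that assumption, 88.45% works out to about 1,333 instances.

```python
TOTAL_INSTANCES = 1507   # benchmark size quoted in the article
SCORE_PERCENT = 88.45    # MDASH score quoted in the article

# Assumption: score = solved instances / total instances.
solved = round(TOTAL_INSTANCES * SCORE_PERCENT / 100)
implied_percent = round(100 * solved / TOTAL_INSTANCES, 2)
missed = TOTAL_INSTANCES - solved

print(solved, implied_percent, missed)
```

Rounding back from the whole-number count reproduces 88.45%, which is at least consistent with the assumption.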

But there's a catch. Microsoft acknowledges the comparison is misleading. MDASH is an entire framework with 100+ agents working together, while the leaderboard entries it is compared against are individual models. Those models would likely score higher if wrapped in a similar multi-agent framework.

Microsoft hasn't disclosed which specific AI models power MDASH. That makes independent evaluation difficult.

Why This Matters for Security Teams

Automated vulnerability detection isn't new. Static analysis tools have existed for decades. What's different here is the adversarial approach. Having AI agents argue with each other about vulnerabilities mimics how human security teams operate, with red teams finding flaws and blue teams trying to determine if they're exploitable.

The plugin architecture also matters. Security teams often have specialized knowledge about their systems that general-purpose tools miss. Being able to inject that knowledge into the scanning process could reduce false positives and catch context-specific vulnerabilities.

Frequently Asked Questions

What is Microsoft MDASH?

MDASH (Multi-Model Agentic Scanning Harness) is Microsoft's AI-powered security system that uses more than 100 specialized AI agents to automatically detect software vulnerabilities. The agents work in stages, with some scanning code and others debating whether findings are exploitable.

How many vulnerabilities has MDASH found?

MDASH discovered 16 new vulnerabilities (CVEs) in the Windows networking and authentication stack, reported on Patch Tuesday, May 12, 2026. Four of these are classified as critical, including remote code execution flaws.

What AI models does MDASH use?

Microsoft hasn't disclosed which specific AI models power MDASH. The company describes it as using an ensemble of frontier and distilled models, and the system is model-agnostic, meaning new models can be swapped in through configuration changes.

Is MDASH available for other companies to use?

Microsoft hasn't announced whether MDASH will be made available as a product or service. Currently, it appears to be an internal tool used for finding vulnerabilities in Microsoft's own products like Windows, Hyper-V, and Azure.

Source: The Decoder / Matthias Bastian

Manaal Khan

Tech & Innovation Writer
