Italy Closes AI Probes After Hallucination Disclaimers Agreed

Key Takeaways

- Italy's AGCM closed investigations into DeepSeek, Mistral AI, and Scaleup Yazilim Hizmetleri after accepting binding commitments

- All three companies must add permanent disclaimers about AI hallucination risks to their websites and apps

- DeepSeek agreed to invest in technology to reduce hallucinations while acknowledging current tech cannot fully prevent them

What Happened

Italy's antitrust authority, the AGCM, announced Thursday it has closed investigations into three AI companies over alleged unfair commercial practices. The regulator accepted binding commitments from China's DeepSeek, France's Mistral AI SAS, and Turkey's Scaleup Yazilim Hizmetleri.

The probes focused on AI hallucinations, the industry term for generative AI output that is inaccurate or misleading. The AGCM, which also enforces consumer rights in Italy, determined that users needed clearer warnings about these limitations.

The Commitments

All three companies agreed to better inform users about hallucination risks through their websites and apps. They will add permanent disclaimers to their chatbot services.

DeepSeek went further. The Chinese AI company agreed to invest in technology that reduces the risk of hallucinations. At the same time, DeepSeek acknowledged that current technology cannot prevent hallucinations entirely. This admission matters. It's rare for an AI company to state limitations so directly in a regulatory settlement.

Scaleup's NOVA AI chatbot faces a specific disclosure requirement. The cross-platform service provides a single interface for accessing multiple chatbots. Under the agreement, NOVA AI must clearly tell consumers that it does not aggregate or process responses from these underlying chatbots. It simply passes them through.

Why This Matters

Italy is emerging as an aggressive regulator of AI companies in Europe. The country's data protection authority, the Garante, previously took action against OpenAI over ChatGPT's data practices. This round of enforcement signals that hallucination risks are now a consumer protection issue, not just a technical limitation.

The settlements create a template. Companies can avoid prolonged investigations by committing to transparency measures. Permanent disclaimers cost little to implement. They shift some legal risk from company to user by establishing that the user was warned.


The Three Companies

DeepSeek has attracted significant attention since launching its competitive AI models at a fraction of the cost of Western alternatives. The Chinese company's rapid growth has made it a natural target for regulatory scrutiny in multiple jurisdictions.

Mistral AI, based in France, is one of Europe's most prominent AI startups. Founded by former Google and Meta researchers, the company has positioned itself as a European alternative to American AI giants. Italian regulatory compliance matters for its broader EU market access.

Scaleup Yazilim Hizmetleri is less well known. The Turkish company operates NOVA AI, which offers a single point of access to multiple chatbot services. Because users reach several third-party chatbots through one interface, the service raised specific transparency concerns about where responses come from.

What Comes Next

The commitments are binding. If any of the three companies fails to implement the promised disclaimers and transparency measures, the AGCM can reopen investigations and pursue enforcement actions.

Other AI companies operating in Italy should take note. The AGCM has demonstrated it will investigate hallucination risks as potential violations of consumer protection law. Companies without clear hallucination disclaimers may face similar scrutiny.



Frequently Asked Questions

What is an AI hallucination?

An AI hallucination occurs when a generative AI system produces inaccurate, misleading, or entirely fabricated content. The AI presents false information confidently, as if it were true.

Why did Italy investigate DeepSeek, Mistral AI, and Scaleup?

Italy's AGCM investigated these companies for allegedly unfair commercial practices related to AI hallucinations. The regulator determined that users were not adequately informed about the risk of receiving inaccurate content.

What must these AI companies do now?

All three must add permanent disclaimers about hallucination risks to their websites, apps, and chatbot services. DeepSeek must also invest in technology to reduce hallucinations.

Does this affect other AI companies operating in Italy?

Yes. The settlements establish that hallucination risks are a consumer protection issue in Italy. AI companies without clear disclaimers may face similar investigations from the AGCM.

Can current AI technology prevent hallucinations?

No. DeepSeek acknowledged in its commitments that current technology cannot entirely prevent hallucinations. This limitation applies broadly to generative AI systems.


Source: Tech-Economic Times / ET

Manaal Khan

Tech & Innovation Writer

Related Articles

Browse all

Robotaxi Companies Are Hiding How Often Humans Take the Wheel

Autonomous vehicle firms like Waymo and Tesla are under scrutiny for refusing to disclose how often remote operators step in to control their self-driving cars. A Senate investigation reveals major gaps in transparency, raising safety and accountability concerns.

Wisconsin Governor Throws a Wrench in Age Verification Plans

Wisconsin Governor Tony Evers has vetoed a bill that would have required residents to verify their age before accessing adult content online, citing concerns over privacy and data security. This move comes as several other states have already implemented similar age check requirements. The veto has significant implications for the future of online age verification.

Apple's App Store Empire Under Siege: The Battle for the Future of Tech

The long-running feud between Apple and Epic Games has reached a boiling point, with Apple preparing to take its case to the Supreme Court. The tech giant is fighting to maintain control over its App Store, while Epic Games is pushing for more freedom for developers. The outcome could have far-reaching implications for the entire tech industry.

Tesla's Remote Parking Feature: The Investigation That Didn't Quite Park Itself

The US auto safety regulators have closed their investigation into Tesla's remote parking feature, but what does this mean for the future of autonomous driving? We dive into the details of the investigation and what it reveals about the technology. The National Highway Traffic Safety Administration found that crashes were rare and minor, but the investigation's closure doesn't necessarily mean the feature is completely safe.

Also Read

5 Tasks Where Old 1GB USB Drives Beat Modern Storage

That dusty 1GB thumb drive in your drawer isn't obsolete. For BIOS updates, portable tools, and emergency recovery, smaller drives often work better than their modern counterparts. Here's why you shouldn't throw them away.

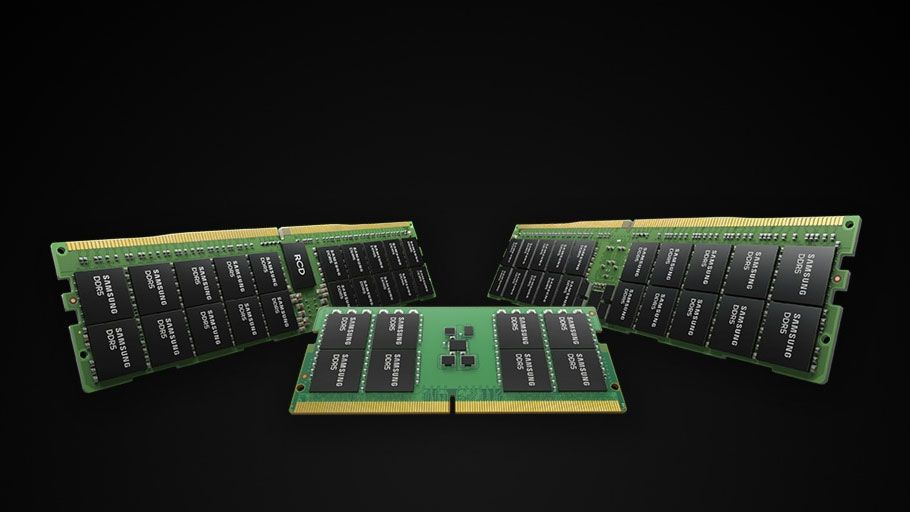

AI Memory Shortage Could Last Until 2027, Samsung and SK Hynix Warn

Samsung and SK Hynix, controlling over 90% of global DRAM production with Micron, are warning of memory shortages extending through 2027 and possibly to 2030. The crunch is driven by explosive demand for HBM chips used in AI accelerators, with customers already reserving supply years in advance.

NIELIT Director Calls AI Banking Flaw Discovery a Global Alert

Sheetal Chopra, Director at India's NIELIT, says the recent AI-led discovery of 27-year-old banking vulnerabilities should alarm financial institutions worldwide, not just in the US. Speaking at a CII event, she emphasized the need for rapid technology adoption and data security measures across the sector.