Google, Microsoft, xAI Agree to Pre-Release AI Testing by US

Key Takeaways

- All major US frontier AI labs now participate in voluntary pre-release government evaluations

- CAISI has completed over 40 model assessments, including unreleased state-of-the-art systems

- The agreements come as the Trump administration considers making pre-release AI reviews mandatory

Google, Microsoft, and Elon Musk's xAI agreed today to give the US Commerce Department access to their AI models before public release. The move brings every major American frontier AI lab under a single voluntary evaluation framework.

OpenAI and Anthropic already had evaluation partnerships dating to 2024. Both companies renegotiated their deals to align with priorities in Trump's AI Action Plan, according to the Commerce Department.

The agreements are with CAISI, the Center for AI Standards and Innovation. It operates within NIST and has completed more than 40 model assessments to date. Some of those evaluations covered unreleased state-of-the-art systems.

Same Function, New Name

CAISI started life as the AI Safety Institute under Biden in 2023. The Trump administration renamed it last June. Commerce Secretary Howard Lutnick called the rebrand a move away from regulation "used under the guise of national security."

The rhetoric shifted. The actual work did not. The center still evaluates frontier models for cybersecurity, biosecurity, and chemical weapons risks.

“These expanded industry collaborations help us scale our work in the public interest at a critical moment.”

— Chris Fall, CAISI director

Fall took over the center after a brief leadership crisis. Collin Burns, a former Anthropic and OpenAI researcher, was pushed out just four days into the job. The Washington Post reported that White House officials worried about Burns's Anthropic ties, given the administration's ongoing dispute with the company. Burns had relocated across the country and given up Anthropic equity to take the position.

Anthropic's Complicated Relationship

Anthropic's renegotiated CAISI deal sits alongside an otherwise hostile relationship with the federal government. The Pentagon designated Anthropic a supply chain risk in March after the company refused to lower guardrails on autonomous weapons.

A federal judge later called that Pentagon move "Orwellian." But the damage continues. Defense Secretary Pete Hegseth and Trump have both outlined a six-month phaseout period for government use of Anthropic's tools. Two active lawsuits remain unresolved.

Mandatory Reviews Under Consideration

The new agreements are voluntary. That could change. Reports emerged one day before this announcement that the Trump administration was considering mandatory pre-release review processes for AI models.

Trump's AI Action Plan, announced in July last year, directs CAISI to serve as part of an "AI evaluations ecosystem" and lead national security-related model assessments. It also instructs regulators to explore using evaluations when applying existing law to AI systems.

The center still lacks permanent legal standing. Some lawmakers have introduced draft legislation to codify it, but nothing has passed.

Logicity's Take

What This Means for AI Development

For AI labs, the agreements signal a willingness to accept government scrutiny in exchange for political cover. Pre-release evaluations let companies point to federal review when facing public criticism about safety.

For enterprises building on these models, the evaluations add a layer of third-party validation. CAISI focuses on specific risks: cybersecurity, biosecurity, chemical weapons. It is not evaluating general product quality or business suitability.

Frequently Asked Questions

What is CAISI and what does it do?

CAISI is the Center for AI Standards and Innovation, a unit within NIST that evaluates frontier AI models for cybersecurity, biosecurity, and chemical weapons risks before public release.

Which AI companies are participating in pre-release government testing?

Google, Microsoft, xAI, OpenAI, and Anthropic have all signed agreements to let CAISI evaluate their AI models before release.

Are pre-release AI evaluations mandatory in the US?

Currently no. The CAISI agreements are voluntary. However, the Trump administration is reportedly considering mandatory pre-release review processes.

What happened to the AI Safety Institute?

The Trump administration renamed it CAISI in June 2025. The core function, evaluating AI models for specific security risks, remained the same.

Why does Anthropic have a hostile relationship with the federal government?

The Pentagon designated Anthropic a supply chain risk after it refused to lower guardrails on autonomous weapons. A federal judge called that designation "Orwellian," but a six-month phaseout of Anthropic tools in government continues.

Source: Latest from Tom's Hardware

Huma Shazia

Senior AI & Tech Writer

Related Articles

Browse all

Alienware AW2726DM Review: The $350 QD-OLED Gaming Monitor That Changes Everything

Dell's Alienware AW2726DM shatters the OLED gaming monitor price barrier at just $350, delivering 27-inch QHD resolution, 240Hz refresh rate, and Quantum Dot color that rivals monitors costing twice as much. This isn't an incremental price drop. It's a complete reset of what budget-conscious gamers can expect.

iPhone Fold Launch 2026: Apple's First Foldable Could Capture 19% Market Share Instantly

Apple's long-awaited foldable iPhone is finally coming, and analysts predict it'll rocket the company to third place in the foldable market behind Samsung and Huawei. The secret weapon? Some seriously clever material science that could solve the crease problem that's plagued every foldable phone so far.

FAA Approves Military Laser Weapons for Drone Defense: What the New Airspace Rules Mean for Border Security

The FAA has given the Pentagon full approval to use high-energy laser systems against drones in US airspace, ending a two-month standoff that started when lasers shot down party balloons mistaken for cartel drones. The decision comes after safety assessments concluded these weapons don't pose increased risk to civilian aircraft.

China Chip Subsidies Reach $142 Billion: 3.6x More Than US Spent on Semiconductor Manufacturing

A new CSIS report reveals China has poured $142 billion into semiconductor subsidies over the past decade, dwarfing US spending by a factor of 3.6. But here's the twist: despite this massive investment, Chinese chipmakers still lag years behind TSMC and struggle with abysmal yields at advanced nodes.

Also Read

8 Underrated Netflix Shows to Binge Before They End

Netflix produces more content than anyone can track, which means quality shows often fly under the radar. Several underrated series are returning for new seasons in 2026, making now the perfect time to catch up on dramas, comedies, and sci-fi gems you may have missed.

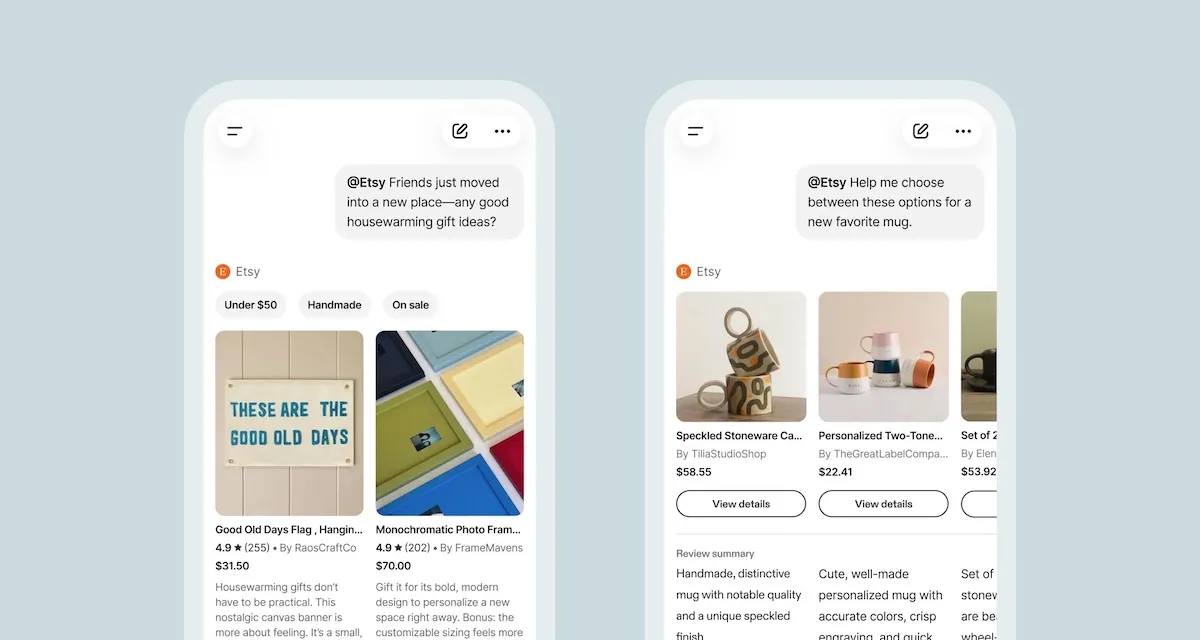

Etsy Launches Native App Inside ChatGPT for AI Shopping

Etsy now lets ChatGPT users tag @Etsy to search its 100 million listings using natural language. The move follows a failed Instant Checkout experiment and comes amid a broader AI push including a gift assistant and seller tools.

Samsung Galaxy Z Fold8 and Wide Fold Leak in One UI 9

Images of Samsung's next foldables surfaced inside a One UI 9 build, showing the Galaxy Z Fold8 and the new Galaxy Wide Fold. The Wide Fold brings a shorter, wider form factor with a spacious cover screen, while the Z Fold8 sticks close to the current design.