AI Data Privacy for Business: Protect Sensitive Info in ChatGPT

Key Takeaways

- Common PDF redaction methods provide zero actual protection—your data is still extractable

- A single data breach costs $4.45M on average according to IBM's 2023 Cost of a Data Breach Report, making proper AI data handling a board-level concern

- Free and low-cost redaction tools exist that permanently destroy sensitive text from documents

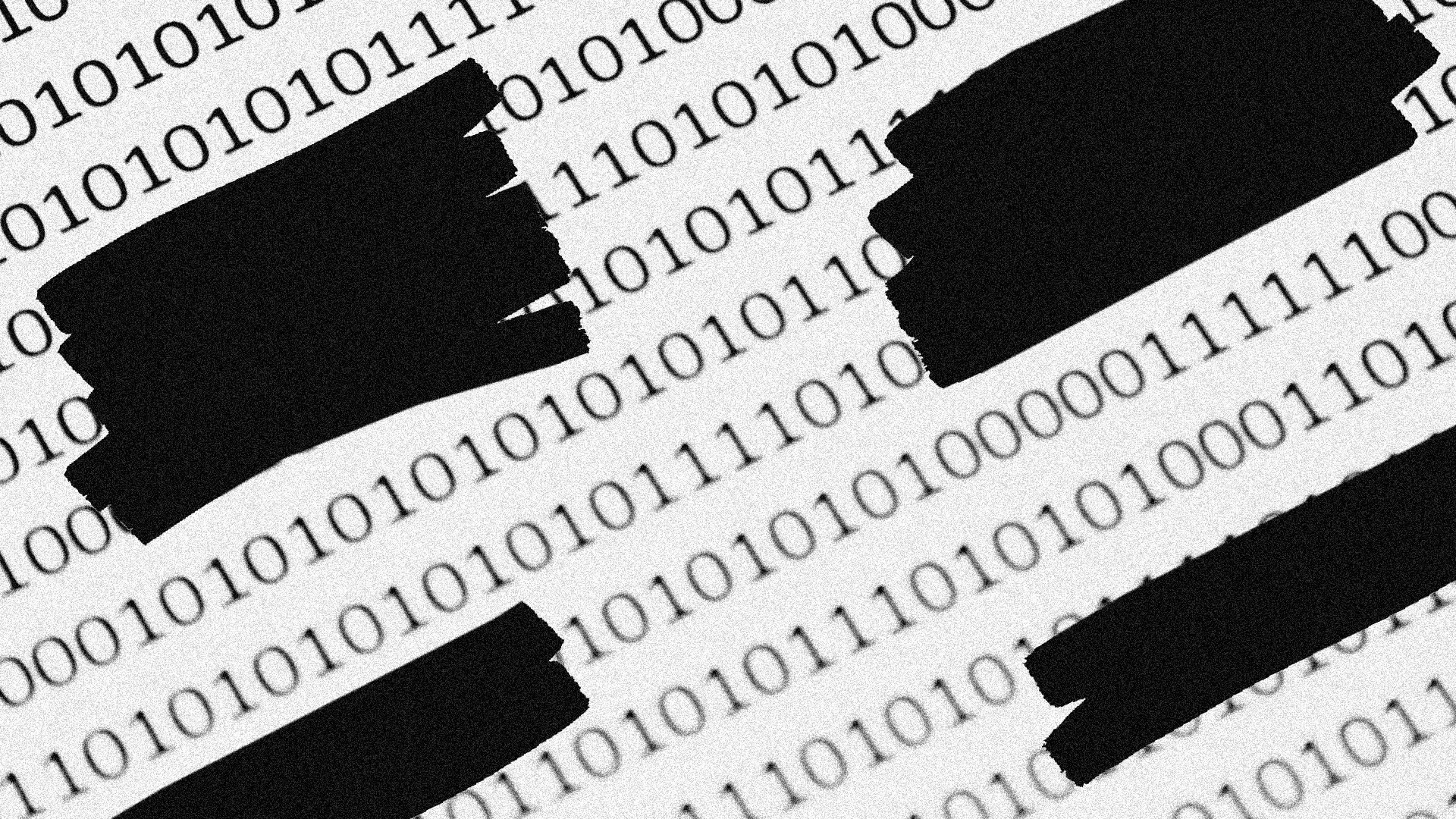

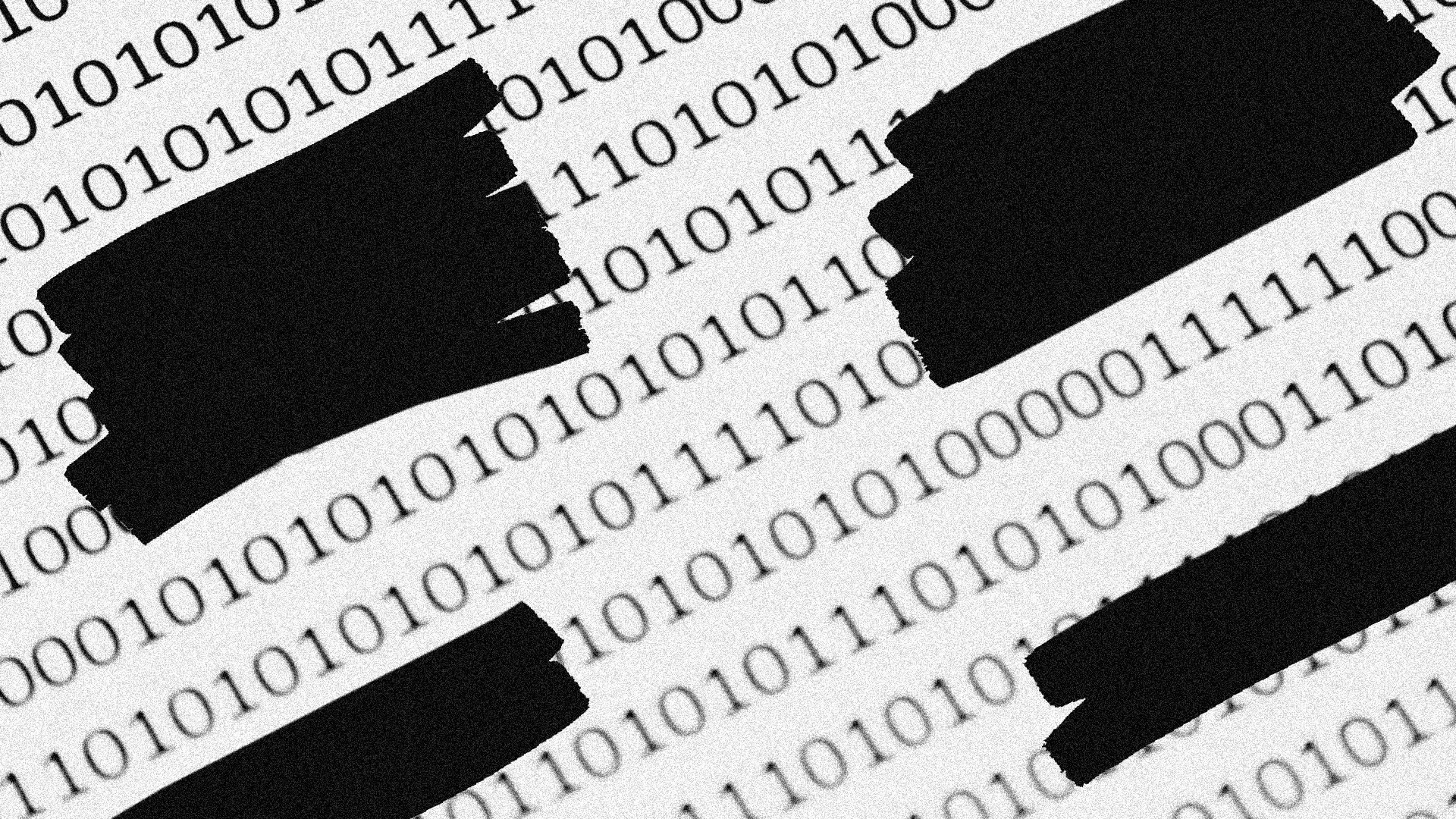

According to [Fast Company](https://www.fastcompany.com/91527262/how-to-redact-sensitive-info-chatgpt-ai-chatbot-pdf-hide-upload-mac-windows-upload-privacy), most professionals using AI chatbots to analyze sensitive documents are inadvertently exposing their data because common redaction methods like drawing black bars over text provide no actual protection—the underlying information remains fully extractable.

Here's a scenario playing out in your company right now. Someone in finance uploads a bank statement to ChatGPT for quick analysis. They've carefully drawn black boxes over account numbers using their PDF reader. They feel secure. They're not. That 'redacted' data can be recovered with a simple copy-paste. And it's now sitting on OpenAI's servers.

AI data privacy isn't a technical problem for your IT team to solve. It's a business risk that demands executive attention. With 77% of companies now using or exploring AI tools according to McKinsey, the volume of sensitive documents flowing through chatbots is exploding. Your contracts, medical records, financial statements, and customer data are all potential targets.

Why Common PDF Redaction Methods Fail Completely

Let's be direct: if your team is using markup tools to 'redact' documents before uploading them to AI chatbots, you have zero protection. This isn't a minor vulnerability. It's like locking your front door while leaving all the windows open.

The technical reason is simple. When you draw a black bar or use a highlighter tool in most PDF readers, you're adding a visual layer on top of the text. The original characters remain in the document's code. Anyone with basic PDF editing skills can remove that layer in seconds. Several attorneys general and lawyers have learned this lesson publicly, with redacted court documents revealing confidential information that was supposed to be hidden.
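
To make the "visual layer" point concrete, here is a minimal sketch in Python. It assumes a toy, uncompressed page content stream and a black box stored as appended drawing commands; real PDFs usually compress their streams and often store markup as separate annotation objects, so treat the names `page_stream`, `black_box`, and `extract_text` as illustrative only.

```python
import re

# Toy, uncompressed PDF page content (an assumption for illustration).
# BT ... ET is a text block; Tj shows the string in parentheses.
page_stream = b"BT /F1 12 Tf 72 700 Td (Account: 12345678) Tj ET\n"

# A drawn "black box" only adds painting commands: set the fill color to
# black (rg), define a rectangle (re), and fill it (f). The text operator
# underneath is left untouched.
black_box = b"0 0 0 rg 70 695 200 16 re f\n"
visually_redacted = page_stream + black_box


def extract_text(stream: bytes) -> list[str]:
    """Return every literal string shown with the Tj operator."""
    return [m.decode("latin-1") for m in re.findall(rb"\((.*?)\)\s*Tj", stream)]


print(extract_text(visually_redacted))  # ['Account: 12345678']
```

The box changes what a person sees on screen, but anything that reads the text operators, including copy-paste and a chatbot's PDF parser, still gets the account number.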

The Business Risk in Plain Terms

Think of visual redaction like placing electrical tape over a line of text. It hides the words from view, but peeling off the tape reveals everything underneath. When you upload a 'redacted' document to ChatGPT, you're handing over the tape-covered document—not a truly protected version. AI companies may store this data, use it for training, or expose it in future breaches.

What AI Companies Actually Do With Your Uploaded Data

Most AI companies claim they anonymize user data before using it to train models. OpenAI, Anthropic, and Google all have privacy policies stating some version of this. The catch? You're taking them at their word. There's no independent verification. No third-party audit you can review. No guarantee that anonymization is foolproof.

For business leaders, this creates a dual risk. First, your data could be incorporated into training sets that influence AI responses to competitors. Second, if these companies suffer a breach, your improperly redacted documents could end up on the dark web with all sensitive information intact.

- With chat history turned off, OpenAI has said it still retains new conversations for 30 days for abuse monitoring before deleting them

- Enterprise tiers offer better data isolation, but cost significantly more

- Most companies using AI tools have no formal policy for document uploads

- Regulatory frameworks like GDPR and HIPAA don't have clear guidance for AI chatbot interactions

How to Actually Protect Sensitive Data Before AI Upload

True redaction doesn't hide text. It destroys it. The correct approach uses tools specifically designed to remove underlying data from a PDF's internal code. Once properly redacted, the sensitive text literally doesn't exist in the document anymore. There's nothing to extract.
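
As a rough illustration of "destroying" rather than hiding, the sketch below deletes the text-showing operation itself from a toy, uncompressed content stream. This is a simplified model, not how any particular redaction product is implemented; `redact_stream` and `extract_text` are hypothetical helpers, and a real tool would also handle compressed streams, fonts, metadata, and the visible black box.

```python
import re

# Matches a literal string followed by the Tj (show text) operator.
TEXT_SHOW = re.compile(rb"\((.*?)\)\s*Tj")


def redact_stream(stream: bytes, sensitive: bytes) -> bytes:
    """Delete every text-showing operation whose string contains `sensitive`."""
    def drop_if_sensitive(match: re.Match) -> bytes:
        return b"" if sensitive in match.group(1) else match.group(0)
    return TEXT_SHOW.sub(drop_if_sensitive, stream)


def extract_text(stream: bytes) -> list[str]:
    """Return every literal string still shown with Tj."""
    return [m.decode("latin-1") for m in TEXT_SHOW.findall(stream)]


# Toy two-line page (an assumption for illustration).
page_stream = (
    b"BT /F1 12 Tf 72 700 Td (Account: 12345678) Tj ET\n"
    b"BT /F1 12 Tf 72 680 Td (Balance: 950.00) Tj ET\n"
)

redacted = redact_stream(page_stream, b"12345678")
print(extract_text(redacted))  # ['Balance: 950.00']
```

After this step the account number is absent from the bytes of the stream, so there is nothing left for an extractor to recover.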

| Method | Protection Level | Cost | Best For |
|---|---|---|---|
| Visual markup (highlighter, pen) | None: text fully recoverable | Free | Never use for sensitive data |
| Adobe Acrobat Pro redaction tool | Complete: destroys underlying text | $22.99/month | Enterprises with Adobe licenses |
| PDF-XChange Editor | Complete: permanent removal | $56 one-time | SMBs needing occasional redaction |
| macOS Preview with flatten | Partial: depends on execution | Free | Quick personal use only |
| Online redaction tools | Varies: avoid for sensitive data | Free to low-cost | Non-confidential documents only |

Adobe Acrobat Pro's redaction feature is the gold standard for enterprises. It removes the underlying text rather than just covering it, and its sanitize option can additionally scrub metadata and hidden layers. For companies already paying for Creative Cloud, this is the obvious choice. The tool marks areas for redaction first, letting you review before permanently applying changes.

Building an AI Data Privacy Policy for Your Organization

Tools alone won't protect you. You need a policy that your people actually follow. Most companies have acceptable use policies for email and cloud storage. Surprisingly few have clear guidelines for AI chatbot interactions.

- Classify document sensitivity levels and define which can never be uploaded to AI tools

- Mandate proper redaction tools for any sensitive documents that must be analyzed via AI

- Require enterprise AI tiers (like ChatGPT Enterprise or Claude for Business) for any business use

- Train employees quarterly on data handling—most breaches stem from ignorance, not malice

- Audit AI tool usage through IT monitoring to identify policy violations before they become breaches
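
One way to make the first three rules enforceable is to encode them as a simple gate that tooling can call before an upload. The sketch below is only an illustration: the sensitivity levels, the two tiers, and the `may_upload` rules are assumptions, and your own classification scheme will differ.

```python
from enum import IntEnum


class Sensitivity(IntEnum):
    """Hypothetical classification levels; adapt to your own policy."""
    PUBLIC = 0
    INTERNAL = 1
    CONFIDENTIAL = 2   # e.g. contracts, financial statements
    RESTRICTED = 3     # e.g. patient records; never uploaded


class Tier(IntEnum):
    CONSUMER = 0
    ENTERPRISE = 1


def may_upload(level: Sensitivity, tier: Tier, properly_redacted: bool) -> bool:
    """Return True if policy allows sending the document to the AI tool."""
    if level is Sensitivity.RESTRICTED:
        return False                                   # never leaves the company
    if level is Sensitivity.CONFIDENTIAL:
        return tier is Tier.ENTERPRISE and properly_redacted
    if level is Sensitivity.INTERNAL:
        return tier is Tier.ENTERPRISE                 # business use needs enterprise tier
    return True                                        # public material is unrestricted


print(may_upload(Sensitivity.CONFIDENTIAL, Tier.CONSUMER, True))    # False
print(may_upload(Sensitivity.CONFIDENTIAL, Tier.ENTERPRISE, True))  # True
```

Keeping the rules in one small function makes them easy to audit and to change when the policy is revised.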

The investment in AI agents and automation is accelerating across every industry. As companies explore these tools, the gap between AI capabilities and data protection policies is widening. The enterprises that close this gap first will have a competitive advantage in regulated industries.

The Compliance Dimension: HIPAA, GDPR, and AI Tools

Here's where it gets uncomfortable for regulated industries. HIPAA doesn't explicitly address AI chatbot usage. Neither does GDPR. Regulators are playing catch-up with technology that's moving faster than legislation. This ambiguity doesn't protect you. If patient records or customer PII ends up exposed through improper AI tool usage, regulators will find a way to hold you accountable.

Healthcare organizations face the highest stakes. A medical practice uploading patient records to ChatGPT for quick summaries—even with attempted redaction—could face HIPAA violations exceeding $50,000 per incident. Financial services firms dealing with customer account data face similar exposure under GLBA and state privacy laws.

The smart move is treating AI chatbot interactions with the same rigor you'd apply to emailing sensitive documents externally. Would you email an unencrypted customer file to an external party with no data processing agreement? That's effectively what uncontrolled AI tool usage represents.

Enterprise AI Tiers: Are They Worth the Premium?

OpenAI's ChatGPT Enterprise, Anthropic's Claude for Business, and Google's Gemini for Workspace all offer enhanced data protections. The pricing reflects this: ChatGPT Enterprise is sold by custom quote, typically starting around $60 per user per month. Is it worth it?

✅ Pros

- Data is not used for model training

- SOC 2 compliance and enterprise-grade encryption

- Admin controls for usage monitoring and policy enforcement

- Dedicated support and higher rate limits

- Custom data retention policies

❌ Cons

- Significant cost increase over free or Plus tiers

- Implementation requires IT involvement

- Still requires proper redaction for highest-sensitivity documents

- Doesn't eliminate all regulatory ambiguity

- May still face breach risks from AI company side

For companies with more than 50 employees regularly using AI tools, enterprise tiers usually make financial sense when you factor in breach costs and compliance risks. For smaller teams, the calculus is trickier. Proper redaction practices combined with consumer-tier plans may suffice, but you're accepting more risk.
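
The "financial sense" claim can be checked with back-of-the-envelope arithmetic. The sketch below uses the figures quoted in this article (50 users, roughly $60 per user per month, $4.45M average breach cost) and assumes the alternative is a free tier; it is a simplification that ignores partial losses, fines, and implementation costs.

```python
USERS = 50
PRICE_PER_USER_MONTH = 60.0         # rough figure quoted in this article
AVERAGE_BREACH_COST = 4_450_000.0   # average breach cost quoted in this article

# Yearly spend on the enterprise tier for the whole team.
annual_cost = USERS * PRICE_PER_USER_MONTH * 12

# How much the yearly probability of a breach must drop for the
# subscription to pay for itself in expected-value terms.
break_even_risk_reduction = annual_cost / AVERAGE_BREACH_COST

print(f"Annual enterprise-tier cost: ${annual_cost:,.0f}")                 # $36,000
print(f"Break-even risk reduction: {break_even_risk_reduction:.2%}")       # 0.81%
```

Under these assumptions, the enterprise tier breaks even if it lowers the yearly chance of an average-sized breach by less than one percentage point.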

AI Data Privacy FAQ: What Business Leaders Ask

How much does proper PDF redaction cost for a business?

Adobe Acrobat Pro at $22.99/month per user is the most reliable solution. For occasional use, PDF-XChange Editor at $56 one-time is cost-effective. Factor in training time of 1-2 hours per employee for proper usage.

Is ChatGPT Enterprise worth the cost for AI data privacy?

For companies with 50+ regular AI users handling sensitive data, yes. The average data breach costs $4.45M. ChatGPT Enterprise at roughly $60/user/month provides contractual data protections, SOC 2 compliance, and audit trails that consumer plans lack.

Can I use AI chatbots for HIPAA-covered information?

Not without significant precautions. Most consumer AI tools don't offer BAAs (Business Associate Agreements). Even enterprise tiers require proper redaction of PHI. Consult your compliance officer before any patient data touches an AI system.

How long does it take to implement an AI data privacy policy?

A basic policy can be drafted in 1-2 weeks. Full implementation including tool deployment, training, and monitoring typically takes 2-3 months. Regulated industries should budget additional time for legal review.

What happens if an employee accidentally uploads sensitive data to ChatGPT?

Contact OpenAI support immediately to request data deletion. Document the incident for compliance records. Review whether the exposure triggers breach notification requirements under applicable regulations. Then strengthen your controls to prevent recurrence.

Logicity's Take

We build AI agents and automation systems for businesses daily, and this issue hits close to home. When we deploy Claude-based agents or n8n workflows for clients, data handling is the first conversation we have, not an afterthought. The reality is that most Indian startups and SMBs are adopting AI tools faster than their security practices can keep up. We've seen companies upload customer databases to ChatGPT for 'quick analysis' with zero redaction.

The fix isn't complicated, but it requires intentionality. For our clients, we build data sanitization steps directly into automation workflows. Before any document hits an AI API, sensitive fields get stripped programmatically. It's not paranoia; it's basic hygiene.

If you're a CTO or founder evaluating AI adoption, start with your data classification. Know what's sensitive, train your team on proper redaction, and invest in enterprise tiers for anything involving customer or financial data. The productivity gains from AI tools are real, but so are the risks. The companies that get this balance right will outcompete those that either avoid AI entirely or adopt it recklessly.
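
A programmatic sanitization step of the kind described here could look roughly like the following. This is a minimal sketch, not Logicity's actual pipeline: the two regular expressions and the `sanitize` helper are assumptions, and pattern matching alone will miss names, addresses, and other free-text identifiers.

```python
import re

# Illustrative patterns only; a production filter needs a broader rule set.
PATTERNS = {
    "EMAIL": re.compile(r"\b[\w.+-]+@[\w-]+(?:\.[\w-]+)+\b"),
    # 8 to 19 digits, optionally separated by single spaces or hyphens.
    "CARD_OR_ACCOUNT": re.compile(r"\b\d(?:[ -]?\d){7,18}\b"),
}


def sanitize(text: str) -> str:
    """Replace each match with a bracketed placeholder before sending text to an AI API."""
    for label, pattern in PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text


sample = "Refund jane.doe@example.com, account 1234 5678 9012, amount 42."
print(sanitize(sample))
# Refund [EMAIL], account [CARD_OR_ACCOUNT], amount 42.
```

Placeholders keep the sentence structure intact, so the model can still reason about the request without ever seeing the underlying values.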

Need Help Implementing This?

Logicity builds secure AI automation systems and can help your team deploy enterprise-grade data handling practices. From Claude API integrations with built-in sanitization to comprehensive AI usage policies, we help businesses capture AI's benefits without the exposure. Get in touch to discuss your AI data privacy needs.

Source: Fast Company / Michael Grothaus

Manaal Khan

Tech & Innovation Writer
