This Robot Just Learned How to Fold Your Laundry and Fix Vacuums — And It’s Shockingly Good

Generalist's new AI-powered robot system, GEN-1, reaches a 99% success rate on delicate real-world tasks such as folding clothes and servicing robot vacuums. It is pretrained on a massive dataset of human movement and, unlike older models, adapts on the fly, even recovering from mistakes it was never trained to handle.

Key Takeaways

- GEN-1 robot achieves 99% success in complex physical tasks like folding and packing

- Trained on over 500,000 hours of human movement data captured via wearable 'data hands'

- Can adapt to disruptions and improvise solutions not in its original training

- Learns robot-specific skills in under an hour after pretraining

- Represents a leap in general-purpose robotics using scalable AI models

In This Article

- The Robot That Just Got Shockingly Good at Being Human

- How GEN-1 Learned to Move Like a Human

- Improvising Like a Pro: The Robot That Recovers From Mistakes

- Why This Changes Everything for Robotics

The Robot That Just Got Shockingly Good at Being Human

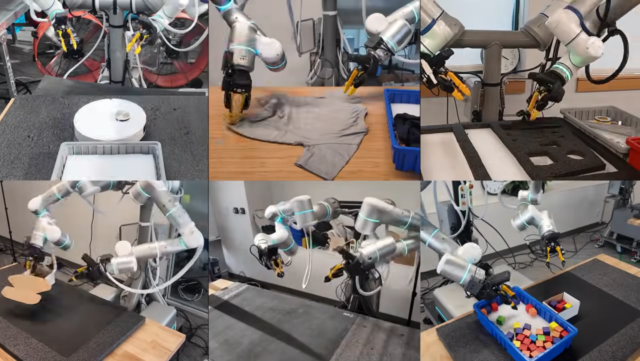

Robots have long struggled with tasks that feel second nature to us — folding a shirt, tucking a toy into a bag, or fixing a small appliance. But a new AI-powered system called GEN-1 is changing that, showing it can not only handle these delicate jobs but master them with near-perfect accuracy.

- Developed by robotics AI company Generalist, GEN-1 marks a major leap in physical machine learning

- It performs tasks like packing phones, folding boxes, and servicing robot vacuums with 99% reliability

- Unlike older robots that rely on rigid programming, GEN-1 learns and adapts like a human

How GEN-1 Learned to Move Like a Human

Most AI models train on internet text, but robots need to understand motion, touch, and spatial reasoning. So how do you teach a machine the subtle art of folding laundry or placing cash neatly in a wallet? Generalist found a clever workaround.

- Generalist used wearable 'data hands' (sensor-laden pincers) to record how humans move during everyday tasks

- The company collected more than 500,000 hours of real-world physical interaction data, amounting to petabytes

- This human motion data forms the foundation of GEN-1’s pretraining, helping it understand natural manipulation

Improvising Like a Pro: The Robot That Recovers From Mistakes

Traditional robots fail the moment something goes off-script. But GEN-1 doesn’t just follow instructions — it thinks on its feet, adjusting in real time when things go wrong, even if it’s never seen that exact problem before.

- Can respond to disruptions outside its training, like re-folding a shirt that’s been moved mid-task

- Was shown shaking a plastic bag to help a stuffed animal slide in, a move no one had programmed

- Uses two hands to reposition slipped washers, demonstrating advanced dexterity and planning

Why This Changes Everything for Robotics

GEN-1 isn’t just another upgrade — it’s a sign that general-purpose robots are finally stepping out of labs and into real life. This could reshape how we think about automation in homes, factories, and repair shops.

- Achieves 99% success in delicate tasks at triple the speed of its predecessor, GEN-0

- Adapts to new robot bodies in under an hour, making deployment fast and scalable

- Moves beyond single-task robots toward truly flexible, intelligent machines

“Nobody has programmed the robot to make mistakes, therefore nobody has programmed the robot to recover from mistakes. And that just happens for free.”

— Felix Wang, Generalist Engineer

Final Thoughts

GEN-1 isn’t just better at folding boxes — it’s redefining what robots can learn and how they adapt. With human-like improvisation and self-correction, we’re inching closer to a future where robots aren’t just tools, but intuitive partners in daily life. The age of generalist robots might finally be here.

Sources & Credits

Originally reported by Ars Technica

Huma Shazia

Senior AI & Tech Writer
