Hackers Are Complaining About AI Slop on Cybercrime Forums

Key Takeaways

- Researchers analyzed 97,895 AI-related conversations on cybercrime forums, posted between ChatGPT's launch in November 2022 and the end of 2024

- Forum users complain about bullet-pointed AI explainers flooding their communities and undermining skilled members

- Cybercriminals have shifted from AI enthusiasm to skepticism as low-quality posts proliferate

"I'm disappointed that you are working to incorporate AI garbage into the site. No one is asking for this."

That complaint could have come from any frustrated user of a social media platform, productivity app, or news site. But this anonymous poster was complaining about a cybercrime forum's plans to add more generative AI features. The irony is unmistakable: even scammers, fraudsters, and hackers are tired of AI slop.

Nearly 100,000 AI Conversations Analyzed

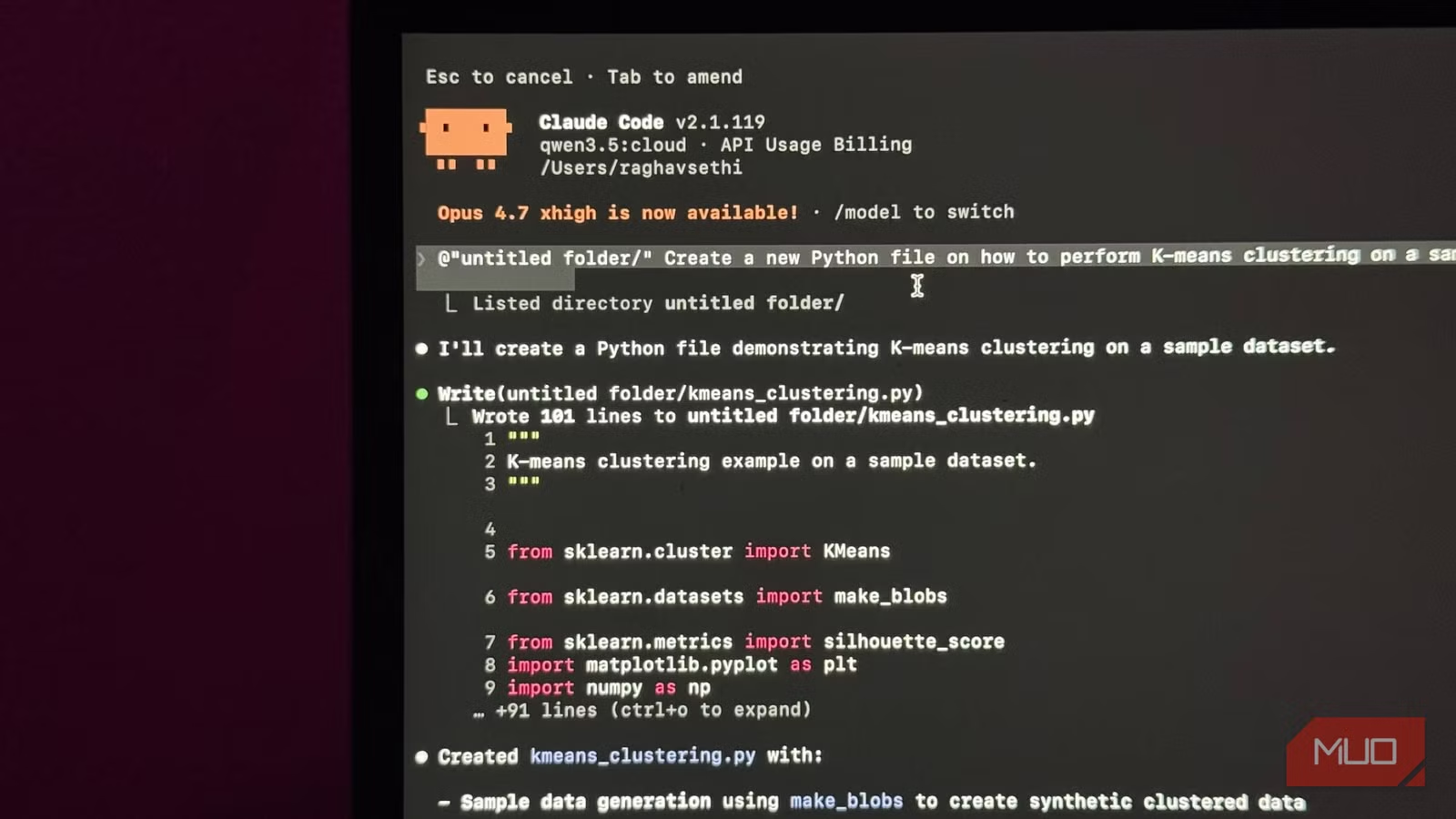

Researchers from the University of Edinburgh, University of Cambridge, and University of Strathclyde recently studied how low-level cybercriminals use AI. They analyzed 97,895 AI-related conversations on cybercrime forums from ChatGPT's launch in November 2022 through the end of 2024.

What they found surprised them. Forum members have shifted from initial enthusiasm about AI to growing skepticism. Their complaints will sound familiar to anyone who has watched AI-generated content spread across the internet:

- People dumping "bullet-pointed explainers" of basic cybersecurity concepts

- A flood of low-quality posts clogging up discussions

- Concerns about Google's AI search overviews driving down forum traffic

Social Spaces Under Threat

Cybercrime forums are not just marketplaces for stolen data and hacking services. They function as communities where users build reputations, compete in writing contests, and yes, post about their rivals. That social fabric is being damaged by AI-generated content.

“These are essentially social spaces. They really hate other people using [AI] on the forums. I think a lot of them are a bit ambivalent about AI because it undermines their claim to be a skilled person.”

— Ben Collier, security researcher and senior lecturer at the University of Edinburgh

The reputation economy on these forums depends on demonstrating actual expertise. When newcomers use ChatGPT to generate hacking tutorials, they gain status they did not earn. This annoys longtime members who built their credibility through genuine knowledge.

Real Complaints From Real Hackers

Posts on Hack Forums, a community for people interested in hacking techniques, show the frustration directly.

“I see a lot of members using AI for making their threads/posts and it pisses me off since they don't even take the time to write a simple sentence or two.”

— Hack Forums user

The complaint echoes what teachers say about student essays, what editors say about submitted articles, and what Reddit moderators say about comments. The difference is that these communities exist to facilitate crime. Frustration with AI slop, it seems, is shared even by communities that have little else in common.

The Skill Question

One reason for the backlash is existential. Many people in these forums see themselves as skilled practitioners of a craft, even if that craft is fraud or hacking. AI threatens that identity.

If anyone can generate a passable phishing email or malware explainer using ChatGPT, what distinguishes the expert from the amateur? The forums have always had a hierarchy based on demonstrated skill. AI flattens that hierarchy in ways members find uncomfortable.

Logicity's Take

What This Means for Security

The findings suggest that AI has not transformed cybercrime as dramatically as some feared. Low-level criminals are using AI, but the results are often mediocre. The same tools that let anyone generate a hacking tutorial also make it obvious when content lacks real expertise.

For security teams, this is useful intelligence. AI-assisted attacks are likely to be more common but often less sophisticated. The truly dangerous actors are probably not the ones generating bullet-point explainers on forums.

Frequently Asked Questions

Are cybercriminals actually complaining about AI?

Yes. Researchers analyzed 97,895 AI-related conversations on cybercrime forums and found significant pushback against AI-generated content and features.

Why do hackers dislike AI-generated content on their forums?

Forum members see AI posts as low-quality slop that floods their communities and allows unskilled people to gain unearned reputations.

Has AI made cybercrime more dangerous?

The research suggests AI has not transformed low-level cybercrime as much as feared. The same quality problems affecting AI content elsewhere appear in criminal forums too.

Which universities conducted this research?

The study involved researchers from the University of Edinburgh, University of Cambridge, and University of Strathclyde.


Source: Feed: Artificial Intelligence Latest / Matt Burgess

Manaal Khan

Tech & Innovation Writer
