Why ChatGPT Feels Worse: You Outgrew It

Key Takeaways

- ChatGPT's rigid conversation structure makes it difficult to branch discussions or return to earlier prompts

- The chatbot's analysis remains surface-level for nuanced or creative tasks despite years of updates

- Claude and other alternatives handle context shifting more fluidly for complex workflows

The ChatGPT honeymoon is over

When OpenAI launched ChatGPT in late 2022, it felt like magic. College students used it to grind through assignments. Professionals turned to it for brainstorming. Everyone discovered a new way to be productive. But nearly four years later, something has shifted.

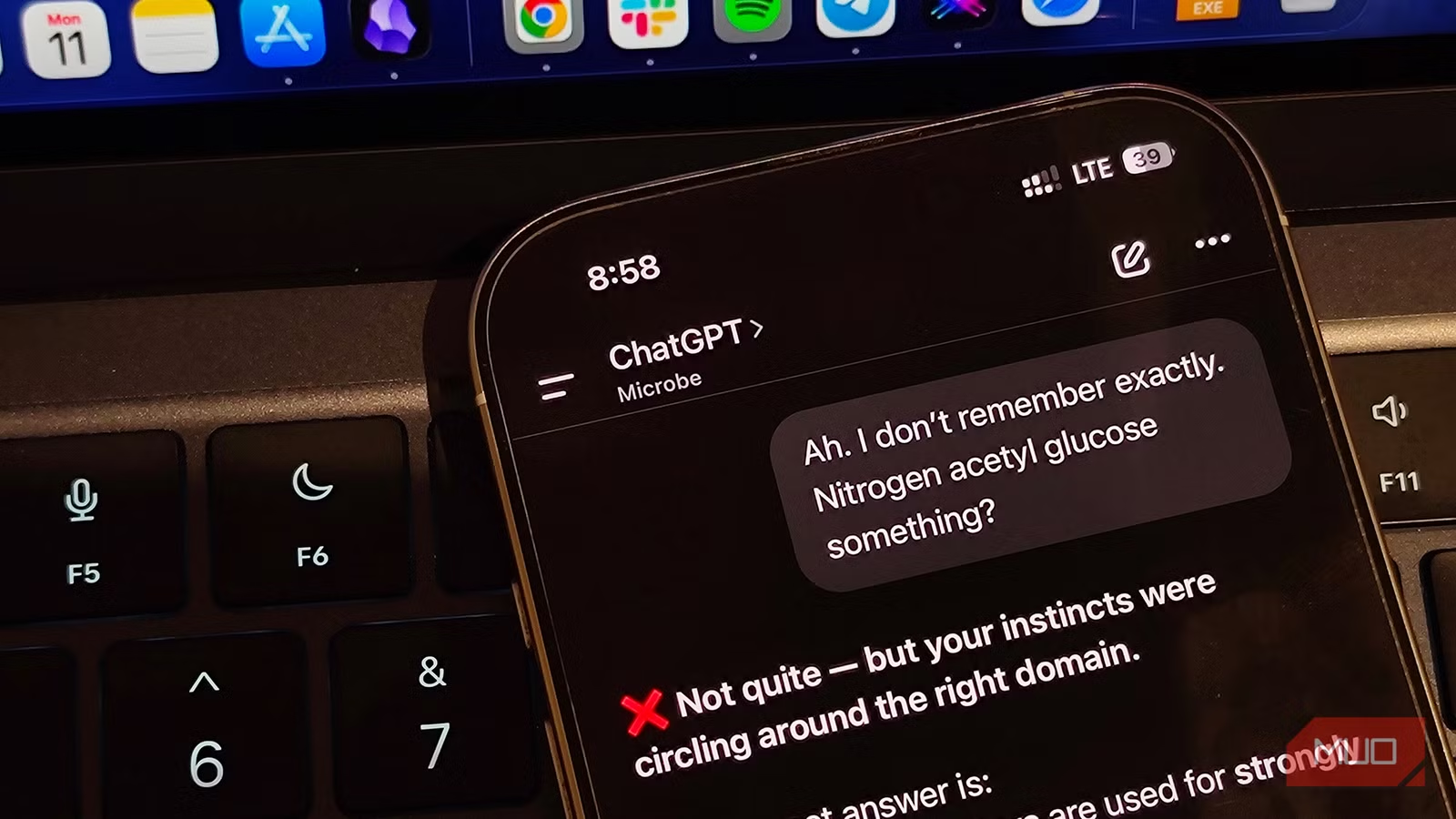

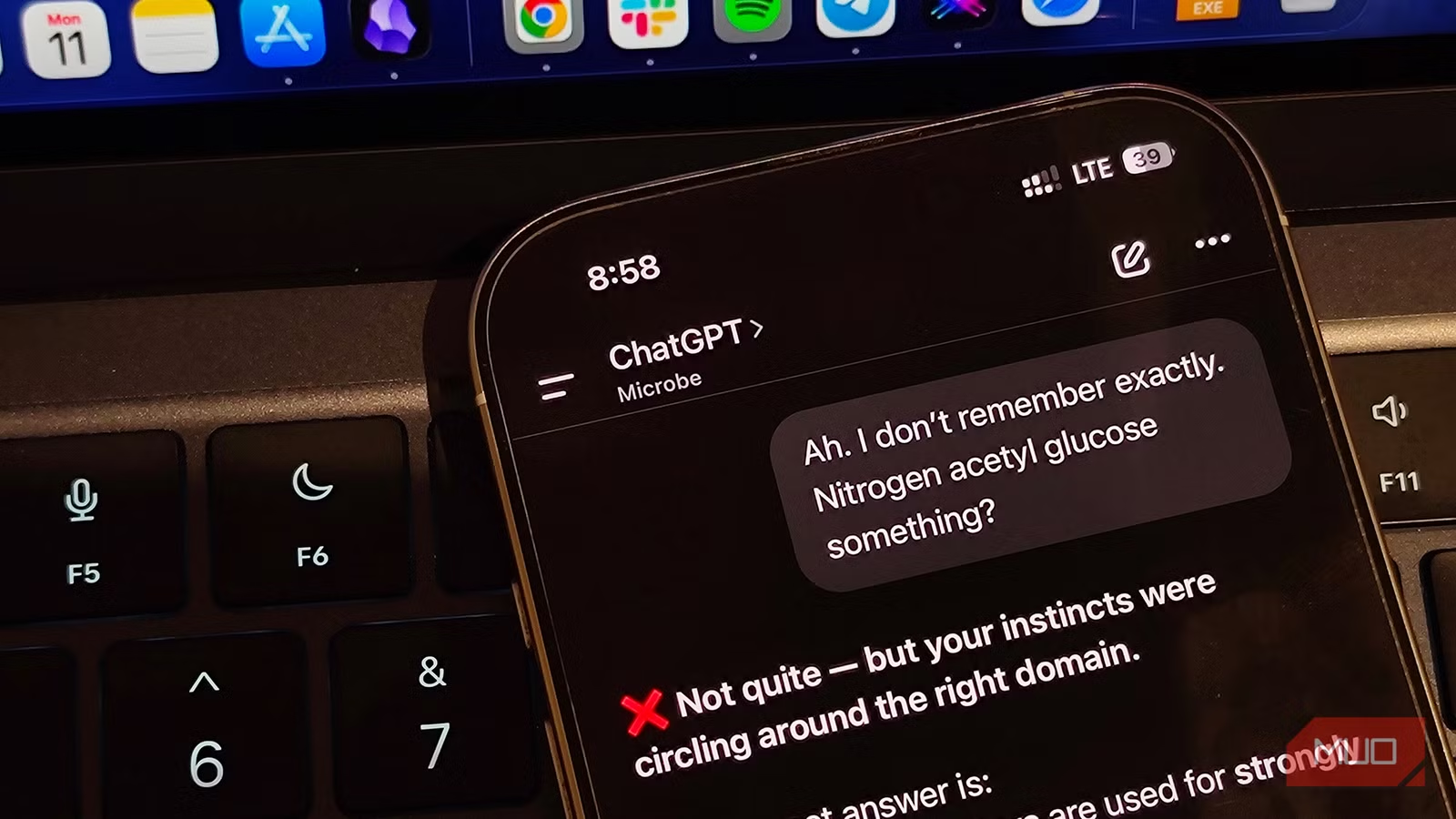

Tech writer Shaheer Khan, who has used ChatGPT daily since its debut, recently found himself reaching for alternatives. The chatbot that once impressed him now feels redundant. His prompts get generic responses. The analysis misses nuance. Creative tasks hit walls.

His conclusion? ChatGPT didn't get worse. He outgrew it.

The rigid conversation problem

All AI chatbots share a linear structure. Conversations flow from top to bottom. But how they handle deviations from that flow varies dramatically.

Khan's core frustration centers on ChatGPT's rigidity. When you return to an earlier prompt or try to shift direction mid-conversation, ChatGPT struggles. It tends to anchor on your first prompt and resist pivots. Claude, by comparison, handles these context switches more gracefully.

This matters for anyone trying to link multiple concepts in a single session. If you're brainstorming a product strategy, then want to connect it to a marketing angle, then circle back to refine the original idea, ChatGPT's inflexibility becomes a bottleneck.

Surface-level analysis persists

After years of model updates and additional training data, you'd expect ChatGPT to handle nuanced prompts well. Khan found the opposite. The analysis stays shallow. Complex prompts get flattened into generic responses. The model misses the point more often than a mature AI should.

For simpler tasks, ChatGPT still works fine. Need a quick summary? A template email? Basic code? It delivers. But creative work and complex reasoning expose its limits. Khan sees "limited progress" on creative tasks since the 2022 debut.

The feedback extremes problem

ChatGPT has a feedback calibration issue. When you ask it to evaluate your work, it operates in two modes. Mode one: excessive praise for subpar output, designed to keep you happy. Mode two: aggressive nitpicking that transforms your original idea into something unrecognizable.

Neither mode gives you what you actually need, which is honest, measured assessment that helps you improve without derailing your intent.

This isn't unique to ChatGPT. Most AI assistants struggle with calibrated feedback. But for users who've grown to rely on these tools for creative work, the limitation becomes increasingly frustrating over time.

Inaccuracy compounds the frustration

AI chatbots make mistakes. That's expected and acceptable. What's less acceptable is the frequency. Khan reports getting inaccurate information from ChatGPT "way too many times." When you're using a tool for research or fact-checking, reliability matters. Repeated errors erode trust.

The issue isn't that ChatGPT hallucinates occasionally. It's that after four years, users expected to see more progress on accuracy than they have. The gap between expectation and reality drives the perception that something got worse.


Comparison is the thief of joy

When ChatGPT launched, it had no real competition. It exceeded expectations because expectations were low. An AI that could hold a coherent conversation and generate useful text felt revolutionary.

Now users have Claude, Gemini, Copilot, and a dozen other options. Each has strengths in different areas. Claude handles long-form reasoning well. Gemini integrates with Google's ecosystem. The market fragmented, and ChatGPT is no longer the obvious default.

Power users now benchmark tools against each other constantly. ChatGPT's weaknesses become more visible when you can compare them directly to alternatives that don't share them.

What actually changed

ChatGPT's core capabilities probably didn't degrade. OpenAI continues to ship updates. GPT-4 and its variants remain capable models. The technology improved.

What changed is user sophistication. Four years of daily use teaches you what AI can and can't do. You develop workflows that push against limitations. You learn prompt engineering tricks and then discover they have ceilings. You become a power user, and power users hit walls that casual users never notice.

- Early users asked simple questions and got impressive answers

- Power users now run complex, multi-step workflows

- Simple use cases masked limitations that complex use cases expose

- Alternatives emerged that handle specific tasks better

When to stick with ChatGPT

None of this means ChatGPT is bad. For most users and most tasks, it remains a solid choice. Quick answers, basic writing, code snippets, translations, summaries. It handles the 80% case well.

The problem emerges in the other 20%. Complex reasoning. Creative iteration. Nuanced analysis. Context-heavy conversations. If your work lives in that 20%, you'll feel the limitations Khan describes.


The multi-tool future

The real lesson here isn't "switch from ChatGPT to Claude." It's that AI tools are becoming specialized. The era of one chatbot for everything is ending. Power users will maintain accounts with multiple providers, using each for what it does best.

ChatGPT for quick tasks and code. Claude for long-form reasoning and creative work. Gemini for Google-integrated workflows. Specialized tools for specialized needs.

If you feel like ChatGPT got worse, you're not wrong about the feeling. You're just misidentifying the cause. The tool stayed roughly the same. You became a different user.


Frequently Asked Questions

Did ChatGPT actually get worse in 2026?

No evidence suggests ChatGPT's capabilities degraded. What changed is user expectations and the availability of alternatives that handle certain tasks better.

Is Claude better than ChatGPT?

For some tasks, yes. Claude handles conversation branching and long-form reasoning more fluidly. ChatGPT remains strong for quick queries and code generation. Neither is universally better.

Why does ChatGPT give generic responses?

In Khan's experience, ChatGPT tends to anchor on your first prompt and produce broadly applicable answers, so nuanced prompts often get simplified. He reads this as a limitation of how the tool handles conversations, not a one-off bug.

Should I switch from ChatGPT to another AI?

Consider using multiple tools. ChatGPT for simple tasks, Claude or others for complex reasoning. The multi-tool approach matches how power users actually work.

Why does ChatGPT's feedback feel extreme?

ChatGPT tends toward either excessive praise or aggressive criticism, rarely landing on measured assessment. This calibration issue affects most AI assistants to varying degrees.


Source: MakeUseOf

Huma Shazia

Senior AI & Tech Writer
