IBM DRAM Breakthrough: Memory Tech That Built Modern Computing

Key Takeaways

- IBM's 1-megabit DRAM held 16 times as much data as the 64-kilobit chips that were standard at the time, enabling the computing power that businesses rely on today

- The 1986 memory wars between US and Japanese manufacturers offer strategic lessons for today's AI chip competition

- Memory technology decisions directly impact data center costs, with modern AI facilities consuming unprecedented amounts of DRAM and HBM

According to [Tom's Hardware](https://www.tomshardware.com/pc-components/dram/40-years-ago-we-entered-the-megabit-memory-era-with-ibms-dram-breakthrough-a-major-leap-beyond-the-64-kilobit-chips-common-at-the-time), IBM marked a pivotal moment in computing history 40 years ago by becoming the first company to deploy 1-megabit memory chips, launching what would become known as the megabit memory era from its Vermont fabrication facility.

For today's business leaders, this isn't just tech nostalgia. The decisions IBM made in 1986 about memory technology, supply chain control, and competitive positioning closely mirror what companies face today with AI chips, HBM memory, and the ongoing US-China semiconductor competition. Understanding this history gives you context for the hardware decisions hitting your desk right now.

Why Did IBM's DRAM Chip Matter for Business Computing?

In 1986, most computing devices ran on 64-kilobit memory chips. The state-of-the-art from Japanese manufacturers had reached 256 kilobits. IBM's 1-megabit chip, fabricated on a 1.2 micron process, represented a generational leap that would define enterprise computing for the next decade.

IBM's 3090 Sierra series mainframes were the first to adopt this technology. For enterprises running these systems, the business impact was immediate: more data processing capacity, faster transaction handling, and the ability to run more complex applications without expanding physical infrastructure.

The Business Translation

Every doubling of memory density historically correlates with new categories of business software becoming viable. The megabit era enabled database systems, early ERP solutions, and the client-server architectures that dominated enterprise IT through the 1990s.

The 1-megabit chips enabled a new form factor that would become ubiquitous: 30-pin SIMMs with 1MB RAM capacity. These modules powered everything from personal computers to printers to graphics cards. If your company used any computing equipment between 1986 and 1995, this technology was likely inside it.
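The density figures in this section reduce to simple arithmetic. The Python sketch below works through the 16x ratio between 64-kilobit and 1-megabit chips, the 4x ratio against 256-kilobit chips, and the number of 1-megabit chips needed to fill a 1MB module. It assumes binary units (1 kilobit = 1,024 bits) and counts data chips only, ignoring any extra parity chip; the function names are illustrative and do not come from the source.

```python
KILOBIT = 1024            # bits, binary convention (an assumption)
MEGABIT = 1024 * KILOBIT  # 1,048,576 bits


def density_ratio(new_chip_bits: int, old_chip_bits: int) -> float:
    """How many times more bits the new chip holds than the old one."""
    return new_chip_bits / old_chip_bits


def chips_per_module(module_bytes: int, chip_bits: int) -> int:
    """Data chips needed to reach a module capacity (parity chips ignored)."""
    module_bits = module_bytes * 8
    return -(-module_bits // chip_bits)  # ceiling division


if __name__ == "__main__":
    print(density_ratio(MEGABIT, 64 * KILOBIT))     # 16.0
    print(density_ratio(MEGABIT, 256 * KILOBIT))    # 4.0
    print(chips_per_module(1024 * 1024, MEGABIT))   # 8
```

Under these assumptions, a 1MB module needs eight 1-megabit data chips, where the same capacity built from 64-kilobit chips would need 128.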

How Did the 1986 Memory Wars Shape Today's Chip Competition?

When IBM announced its breakthrough, the New York Times called it "a rare, if fleeting, moment of glory." Japanese manufacturers including Fujitsu, Hitachi, Mitsubishi, NEC, and Toshiba controlled 75% of the global memory market. Everyone expected them to quickly match and surpass IBM's achievement.

IBM's SVP Jack D. Kuehler struck a defiant tone: "This is a signal of our semiconductor technology leadership." He emphasized that these chips were built in the USA. That messaging wasn't just corporate pride. It was strategic positioning in a technology cold war that would reshape global manufacturing for decades.

The parallels to today are striking. Replace "Japan" with "China" and "DRAM" with "AI chips" and you have the same dynamics playing out. Supply chain control, national security concerns, and the strategic importance of semiconductor manufacturing capacity remain central to business planning.

| Factor | 1986 Memory Wars | 2025 Chip Competition |

|---|---|---|

| Dominant Competitor | Japan (75% market share) | China (growing AI chip ambitions) |

| US Strategic Response | Domestic DRAM manufacturing | CHIPS Act, export controls |

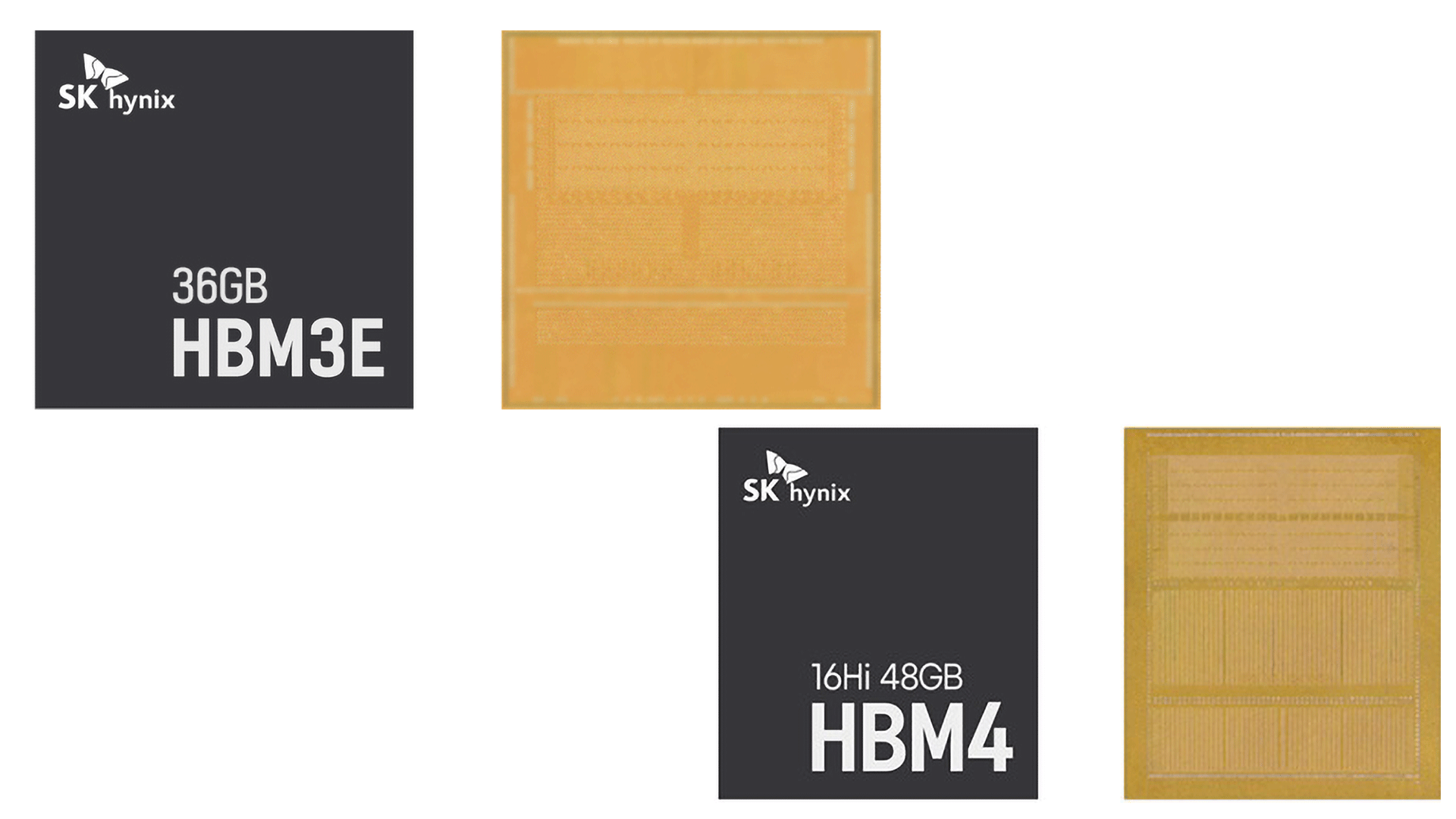

| Key Technology | 1-megabit DRAM | HBM3e, AI accelerators |

| Business Impact | Enabled PC revolution | Enabling AI transformation |

| Supply Chain Risk | Single-region concentration | Taiwan fab dependency |

What Does Memory Technology Mean for AI Data Center Costs?

The evolution from IBM's 1-megabit chips to today's High Bandwidth Memory (HBM) follows a direct line. And understanding that evolution matters for any executive evaluating AI infrastructure investments.

Modern AI data centers are consuming memory at unprecedented rates. A single NVIDIA H100 GPU carries 80GB of HBM3. Training large language models can require thousands of these GPUs working in parallel. The memory alone in these clusters represents billions of dollars in hardware costs.
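To put those figures in proportion, here is a rough Python sketch of the arithmetic. The 80GB-per-GPU figure comes from the paragraph above; the 4,096-GPU cluster size is a hypothetical example, the function names are illustrative, and 80GB is treated as 80 GiB (binary units) for simplicity. Prices are deliberately left out because the source gives none.

```python
MEGABIT = 2**20          # bits in one 1986-era 1-megabit chip (binary units)
GIB = 2**30              # bytes in one GiB
HBM_PER_GPU_GIB = 80     # per-GPU figure from the text, treated as GiB (assumption)


def cluster_hbm_tib(gpu_count: int, gib_per_gpu: int = HBM_PER_GPU_GIB) -> float:
    """Total HBM across a cluster, in TiB."""
    return gpu_count * gib_per_gpu / 1024


def megabit_chip_equivalents(gib: int) -> int:
    """How many 1-megabit chips would hold the same number of bits."""
    return gib * GIB * 8 // MEGABIT


if __name__ == "__main__":
    # Hypothetical 4,096-GPU training cluster
    print(cluster_hbm_tib(4096))                        # 320.0
    print(megabit_chip_equivalents(HBM_PER_GPU_GIB))    # 655360
```

Under these assumptions, one GPU's memory equals 655,360 of IBM's 1986 chips, and a 4,096-GPU cluster holds 320 TiB of HBM.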

For business leaders evaluating AI adoption, memory costs often get buried in overall infrastructure pricing. But they're a significant factor. SK Hynix, Samsung, and Micron are struggling to meet HBM demand, creating supply constraints that affect pricing and availability for AI services across the board.

The hardware infrastructure behind an AI platform affects its long-term pricing and availability

Should CTOs Care About Semiconductor History?

Yes, because patterns repeat. The 1986 memory wars taught lessons that apply directly to technology procurement decisions today.

- Supply chain concentration creates risk: Japan's 75% market share in 1986 created vulnerabilities. Today's concentration in Taiwan and South Korea presents similar challenges.

- Breakthrough technologies create temporary advantages: IBM's lead was "fleeting" as competitors caught up. The same applies to any vendor's current AI capabilities.

- Domestic manufacturing has strategic value: IBM emphasized US production. Today's CHIPS Act investments reflect the same calculus.

- Density improvements enable new applications: Every major memory advancement has unlocked new categories of business software.

When your team evaluates cloud providers, AI platforms, or hardware investments, these historical patterns inform better questions. Who manufactures the underlying chips? What supply chain risks exist? How quickly might competitors match any current advantages?

How Has Memory Technology Evolved Since IBM's Breakthrough?

Since IBM's 1-megabit chip, each successive memory generation has brought higher density and roughly a doubling of performance. IBM's 1.2 micron process seems quaint compared to today's 3nm chip manufacturing. But the fundamental challenge remains the same: packing more capability into smaller spaces while managing power and heat.

Modern business laptops reflect decades of memory technology evolution in their performance capabilities

What Strategic Lessons Apply to 2025 Technology Decisions?

IBM's 1986 moment offers three actionable insights for today's technology leaders.

- Don't assume today's market leaders will dominate tomorrow. Japan's "unstoppable" memory dominance eventually shifted. Current AI chip leaders face similar competitive pressures.

- Control of manufacturing matters as much as design capability. IBM built its chips in Vermont specifically to maintain supply chain control. Evaluate your vendors' manufacturing dependencies.

- Generational leaps create strategic windows. The jump from 256 kilobits to 1 megabit opened opportunities. Similar leaps in AI capability create similar windows for early adopters.

For practical application, this means questioning vendor lock-in assumptions, building flexibility into infrastructure contracts, and watching for the next density or capability leap that could reshape competitive dynamics.

AI agents require significant memory resources, making semiconductor supply chains relevant to adoption planning

Logicity's Take

At Logicity, we build AI agents and automation systems for businesses across India and the Middle East. While semiconductor history might seem distant from our daily work shipping Claude-powered solutions and n8n workflows, it's directly relevant to the advice we give clients.

When a startup asks us whether to build on OpenAI, Anthropic, or Google's AI stack, we consider the hardware infrastructure behind each platform. OpenAI's partnership with Microsoft means Azure data centers. Anthropic has Amazon backing. Google runs its own TPUs. These aren't just technical details. They're supply chain factors that affect pricing stability, regional availability, and long-term platform risk.

The IBM story reminds us that technology advantages are temporary. We advise clients to architect for flexibility rather than betting everything on today's leader. Build abstraction layers. Use APIs that can swap underlying providers. Don't let infrastructure lock-in limit your options when the next breakthrough arrives. The memory wars of 1986 ended differently than experts predicted. The AI chip competition of 2025 might surprise us too.
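As one way to picture the "abstraction layer" advice, here is a minimal Python sketch. It is not Logicity's implementation and calls no real vendor SDK: `TextModel`, `ProviderA`, `ProviderB`, `get_model`, and `summarize` are all hypothetical names, and the two provider classes are stubs standing in for whatever client library a team actually uses.

```python
from typing import Protocol


class TextModel(Protocol):
    """The only interface application code is allowed to depend on."""

    def complete(self, prompt: str) -> str: ...


class ProviderA:
    """Hypothetical stub; a real adapter would wrap one vendor's client."""

    def complete(self, prompt: str) -> str:
        return f"[provider-a] {prompt}"


class ProviderB:
    """Hypothetical stub for a second vendor."""

    def complete(self, prompt: str) -> str:
        return f"[provider-b] {prompt}"


# Registry keyed by a config value, so switching vendors is a config change.
PROVIDERS: dict[str, TextModel] = {"a": ProviderA(), "b": ProviderB()}


def get_model(name: str) -> TextModel:
    """Look up a provider by name, failing loudly on unknown names."""
    if name not in PROVIDERS:
        raise ValueError(f"unknown provider: {name!r}")
    return PROVIDERS[name]


def summarize(text: str, model: TextModel) -> str:
    """Business logic written against the interface, not a vendor."""
    return model.complete(f"Summarize: {text}")


if __name__ == "__main__":
    print(summarize("Q3 report", get_model("a")))
    print(summarize("Q3 report", get_model("b")))
```

Because `summarize` only knows about `TextModel`, moving to a different vendor means writing one new adapter class and changing a configuration value, while the calling code stays untouched.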

Frequently Asked Questions

Why should business leaders care about 40-year-old memory technology?

The 1986 memory wars established patterns that repeat in today's semiconductor competition. Understanding how IBM navigated Japanese market dominance provides strategic context for current decisions about AI chips, cloud infrastructure, and supply chain risk. The same dynamics of national competition, manufacturing control, and technological leapfrogging are playing out with AI hardware today.

How does memory technology affect AI implementation costs?

Memory represents a significant portion of AI infrastructure costs. High Bandwidth Memory (HBM) for AI accelerators is in short supply and expensive. Major cloud providers spend over $40 billion annually on memory for AI systems. These costs flow through to enterprise AI pricing, making memory technology evolution directly relevant to AI budget planning.

What supply chain risks should CTOs consider for technology procurement?

Geographic concentration remains the primary risk. In 1986, Japan controlled 75% of memory production. Today, Taiwan and South Korea dominate advanced chip manufacturing. Smart procurement strategies include multi-vendor approaches, understanding where critical components are manufactured, and building contractual flexibility for supply disruptions.

How quickly do semiconductor advantages typically erode?

IBM's lead was described as 'fleeting' even at announcement. Historical patterns show 2-3 year windows before competitors catch up to breakthrough technologies. This timeline informs how long any vendor's current AI capability advantages might last and why building for platform flexibility matters.

Is domestic chip manufacturing relevant to business technology decisions?

Yes. The CHIPS Act is investing billions in US semiconductor manufacturing for the same reasons IBM emphasized Vermont production in 1986. For businesses evaluating long-term technology partnerships, understanding where chips are made affects supply chain resilience, regulatory compliance, and potentially data sovereignty considerations.

Need Help Implementing This?

Logicity helps businesses navigate technology decisions with lasting strategic impact. From AI agent development to infrastructure planning, our team brings both technical depth and business context to your technology roadmap. Contact us to discuss how historical patterns inform better technology choices for your organization.

Source: Tom's Hardware

Manaal Khan

Tech & Innovation Writer
