AI Workspace Tools 2026: Own Your AI, Cut Context-Switching 40%

Key Takeaways

- Personal AI workspaces can reduce developers' context-switching overhead, by an estimated 30-40%

- Owning your AI infrastructure means no vendor lock-in and full customization control

- Reusable prompt systems compound productivity gains over time, unlike one-off AI queries

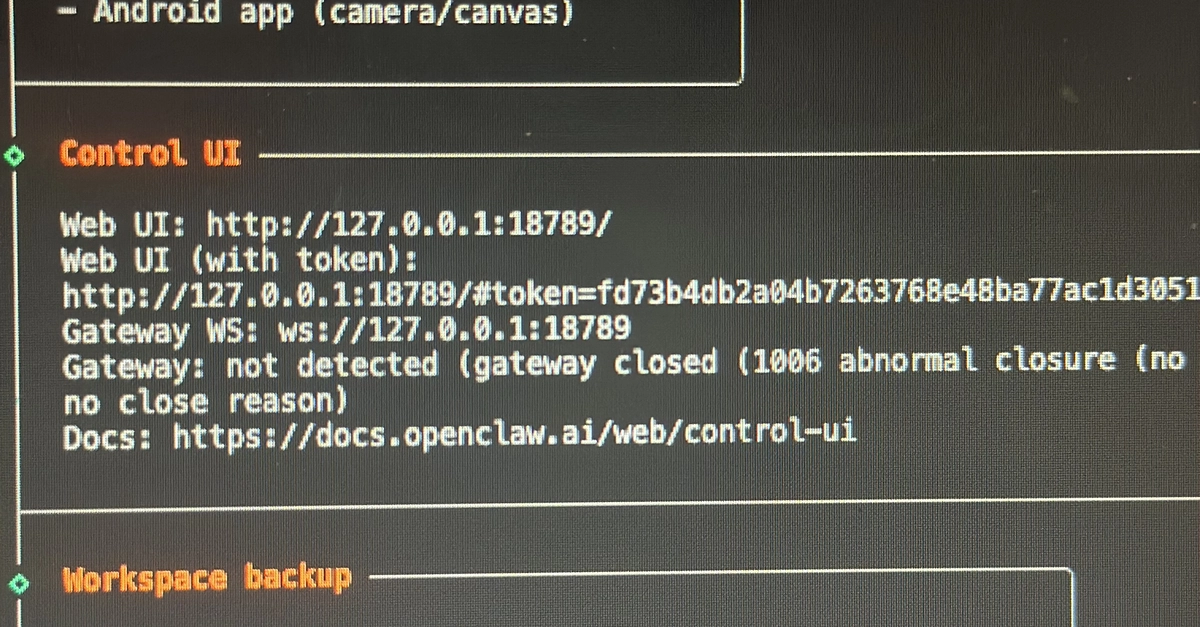

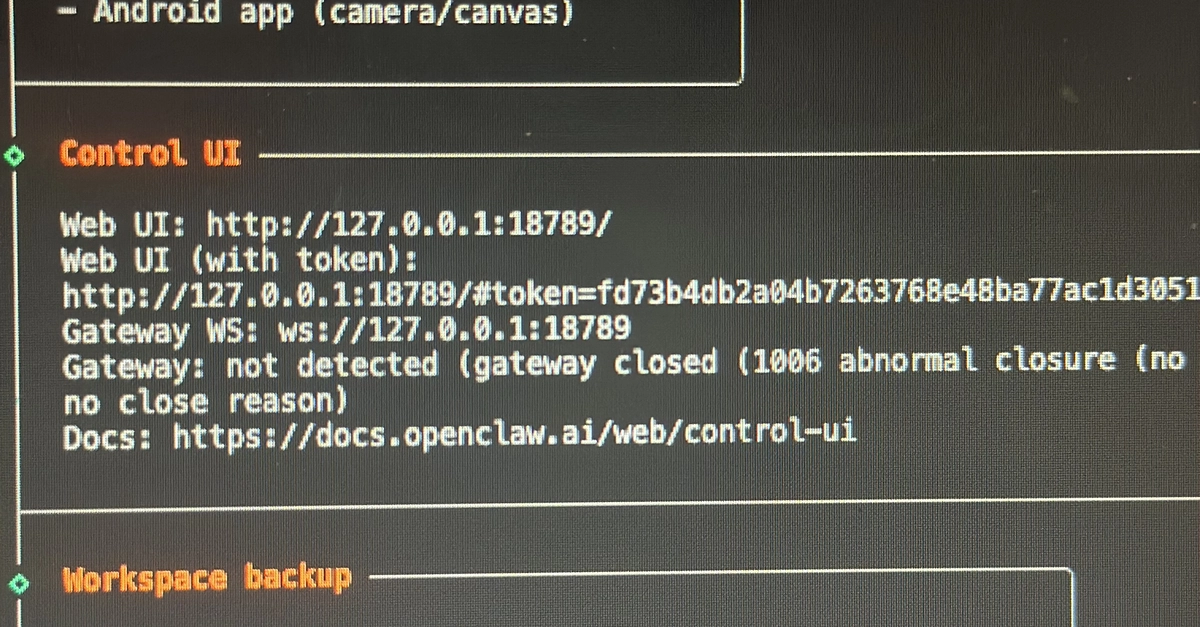

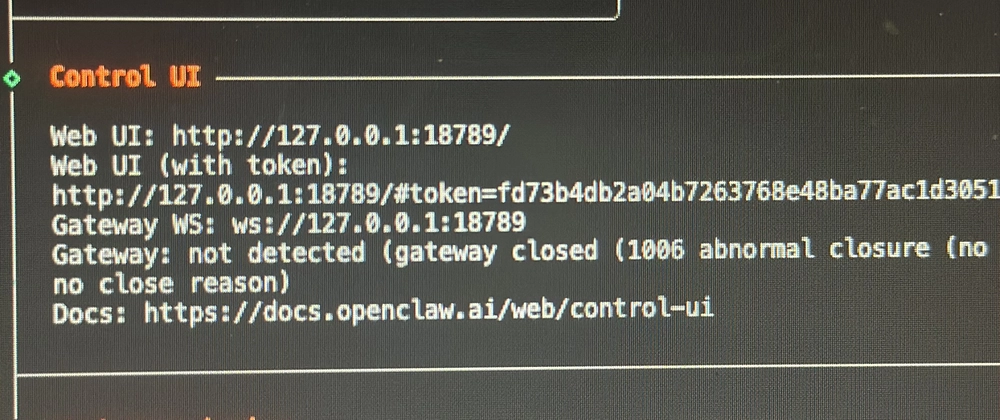

According to a post on [DEV Community](https://dev.to/promisenotnull/how-i-built-a-personal-ai-workspace-with-openclaw-and-reframed-my-development-workflow-2aia), a frontend developer built a personal AI workspace with OpenClaw that changed how he approaches repetitive development tasks, moving from isolated AI interactions to a continuous, system-driven workflow.

Read in Short

AI workspace tools are evolving from chatbots you query occasionally to persistent systems embedded in your workflow. For CTOs and engineering managers, this shift means measurable productivity gains: less context-switching, reusable AI logic, and infrastructure you own. The ROI compounds over time as your team builds institutional AI knowledge instead of starting from scratch with every prompt.

What Are AI Workspace Tools and Why Should You Care?

Here's the problem most engineering teams face: your developers have access to ChatGPT, Claude, Copilot, and a dozen other AI tools. But they're using them like search engines—asking isolated questions, getting answers, and losing all that context the moment they close the tab.

AI workspace tools flip this model. Instead of one-off interactions, you build persistent systems where AI logic is reusable, customizable, and owned by your organization. Think of it as the difference between googling a question and having a trained analyst on staff who knows your codebase, your coding standards, and your team's preferences.

For a CTO evaluating tooling investments, the business case is straightforward: every minute your senior engineer spends reformulating the same prompt is a minute they're not shipping features. Personal AI workspaces turn that repetitive thinking into reusable infrastructure.

How Do AI Workspaces Improve Developer Productivity?

The developer who built this system identified the real bottleneck in frontend development: it wasn't writing code. It was the constant context-switching and repetition. Writing JSX components, debugging, restructuring code, translating feature ideas into interfaces—each task required mental gear-shifting.

His solution was building reusable prompt layers for daily tasks. Instead of writing prompts from scratch, he created flows that could take raw JSX and refactor it into cleaner, more maintainable components. He built systems that transformed rough feature ideas into structured implementation approaches, complete with component breakdowns and edge case analysis.

- Code refactoring prompts that apply consistent standards across your codebase

- Feature specification flows that turn rough ideas into structured implementation plans

- Debugging assistants that remember your tech stack and common issues

- Documentation generators trained on your team's writing style

This composability is where the real value emerges. You layer workflows, reuse logic, and gradually refine your system. Over time, you're not just using AI—you're building institutional AI knowledge that improves with every project.
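The layering described above can be sketched in a few lines of Python. This is a minimal illustration, not OpenClaw's API or the developer's actual setup: the `PromptLayer` class, the `compose` helper, and the wording of each layer are hypothetical names invented for this example.

```python
from __future__ import annotations

from dataclasses import dataclass
from string import Template


@dataclass(frozen=True)
class PromptLayer:
    """One reusable piece of prompt logic with named placeholders (hypothetical)."""

    name: str
    template: str

    def render(self, **values: str) -> str:
        # substitute() raises KeyError when a placeholder has no value,
        # so an incomplete prompt fails loudly instead of silently.
        return Template(self.template).substitute(**values)


def compose(layers: list[PromptLayer], **values: str) -> str:
    """Stack layers in order into a single prompt string."""
    return "\n\n".join(layer.render(**values) for layer in layers)


# Illustrative layers; the wording is an assumption, not taken from the article.
TEAM_STANDARDS = PromptLayer(
    "team_standards",
    "Follow these conventions: $conventions",
)
REFACTOR_JSX = PromptLayer(
    "refactor_jsx",
    "Refactor this JSX into smaller, more maintainable components:\n$code",
)
PLAN_FEATURE = PromptLayer(
    "plan_feature",
    "Turn this feature idea into a component breakdown with edge cases:\n$idea",
)

if __name__ == "__main__":
    prompt = compose(
        [TEAM_STANDARDS, REFACTOR_JSX],
        conventions="function components, named exports",
        code="<div onClick={go}>Go</div>",
    )
    print(prompt)
```

The same `TEAM_STANDARDS` layer can be stacked in front of `PLAN_FEATURE` or any later layer, which is the point of composability: a convention is written once and reused everywhere.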

Self-Hosted vs SaaS: What's the Cost Difference?

The AI workspace market splits into two camps: SaaS platforms where you pay per seat, and self-hosted solutions like OpenClaw where you own the infrastructure. For budget-conscious CTOs, this decision has significant long-term implications.

| Factor | Self-Hosted AI Workspace | SaaS AI Tools |
|---|---|---|
| Upfront Cost | $500-2,000 setup | $0 (subscription model) |
| Monthly Cost (10 devs) | $50-200 (API costs) | $500-2,000 |
| Customization | Full control | Limited to features offered |
| Data Privacy | On your infrastructure | Third-party servers |
| Maintenance | Your team handles it | Vendor handles it |
| Vendor Lock-in | None | High switching costs |

The math changes depending on team size. For a 5-person startup, SaaS tools often make sense—you're paying for convenience and avoiding DevOps overhead. But at 20+ developers, self-hosted solutions start saving $10,000-15,000 annually while giving you customization SaaS can't match.
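To make that math concrete, here is a small sketch that computes break-even time and first-year savings from the 10-developer figures in the table. The function names are hypothetical, and the model is deliberately simple: it assumes flat monthly costs and ignores the engineer time spent on setup and maintenance.

```python
def break_even_months(
    setup_cost: float, saas_monthly: float, self_hosted_monthly: float
) -> float:
    """Months until the one-time setup cost is repaid by lower monthly spend."""
    savings = saas_monthly - self_hosted_monthly
    if savings <= 0:
        return float("inf")  # self-hosting never pays back on these inputs
    return setup_cost / savings


def first_year_savings(
    setup_cost: float, saas_monthly: float, self_hosted_monthly: float
) -> float:
    """Net savings over the first 12 months, after paying for setup."""
    return 12 * (saas_monthly - self_hosted_monthly) - setup_cost


if __name__ == "__main__":
    # Optimistic pairing: cheapest setup and API bill vs. the priciest SaaS plan.
    best = break_even_months(setup_cost=500, saas_monthly=2000, self_hosted_monthly=50)
    # Pessimistic pairing: priciest setup and API bill vs. the cheapest SaaS plan.
    worst = break_even_months(setup_cost=2000, saas_monthly=500, self_hosted_monthly=200)
    print(f"break-even: {best:.1f} to {worst:.1f} months")
```

On the table's own numbers, the pessimistic pairing breaks even in about 6.7 months and the optimistic pairing in well under one month. Staff time for setup and maintenance is not counted here and would lengthen the payback period.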

There's also a strategic consideration: data ownership. When your AI workspace runs on your infrastructure, your prompts, workflows, and institutional knowledge stay internal. For companies in regulated industries or those handling sensitive IP, this isn't optional—it's a requirement.

What Makes OpenClaw Different From ChatGPT or Copilot?

The developer's experience highlights three differentiators that matter for enterprise adoption: ownership, flexibility, and composability.

The Three Pillars of Personal AI Workspaces

- **Ownership**: You define how the system behaves and evolves. No rigid interfaces or fixed feature sets.

- **Flexibility**: The tool doesn't assume your use case. Whether you're building developer tools, automating tasks, or experimenting, it adapts.

- **Composability**: Layer workflows, reuse logic, and gradually refine. Your system improves over time instead of starting from scratch.

Compare this to ChatGPT or Copilot. These are excellent general-purpose tools, but they're designed for broad audiences. You can't fundamentally change how they work. Any persistent workflow that encodes your team's conventions is limited to the customization options the vendor exposes. And you can't run them on your own infrastructure.

For engineering managers building team productivity systems, this distinction matters. A tool that adapts to your workflow is worth more than a powerful tool that forces you into predefined patterns. Similar thinking applies to architecture decisions—understanding why certain patterns persist helps you make better tooling choices. Companies still choose REST APIs for the same reason: flexibility and ownership trump theoretical superiority.

Implementation Timeline: How Long Does Setup Take?

Let's be realistic about the investment required. The developer who built this system noted that setting up a meaningful workflow required experimentation. Designing reusable prompts took time and iteration.

For a single developer, expect 4-6 weeks to reach meaningful productivity gains. For team-wide deployment, add another 2-4 weeks for documentation, training, and standardizing workflows across engineers. The good news: once built, these systems compound. The initial investment pays dividends for years.

Challenges and Honest Limitations

Personal AI workspaces aren't a magic solution. The developer's experience included real challenges worth considering before you commit resources.

✅ Pros

- Full customization and ownership of AI infrastructure

- No vendor lock-in or subscription creep

- Compound productivity gains from reusable workflows

- Data stays on your infrastructure

- Adapts to your team's specific needs

❌ Cons

- Requires upfront time investment to design effective workflows

- DevOps overhead for self-hosted solutions

- Learning curve for teams used to plug-and-play tools

- API costs can spike with heavy usage

- Requires ongoing maintenance and iteration

The biggest challenge is the mindset shift. Most developers think of AI as a question-answering tool. Building an AI workspace requires thinking about AI as infrastructure: something you design, maintain, and evolve. Not every team is ready for that transition.

The Strategic Shift: From AI Tools to AI Systems

The real insight from this developer's experience isn't about OpenClaw specifically. It's about a broader shift in how technical teams should think about AI.

“The real value is not in generating answers. It is in creating systems that support thinking. There is a clear transition happening from one-off AI interactions to persistent, customizable workflows that integrate deeply into how we work.”

— Developer building personal AI workspace

For CTOs and engineering leaders, this shift has budget implications. The question isn't "should we buy AI tools?" Your team already uses them. The question is: "Are we building AI infrastructure that compounds, or are we paying rent on someone else's system?"

Companies that figure this out early will have a structural advantage. Their developers won't just be faster—they'll have built institutional AI knowledge that's hard to replicate. That's a moat, not just a productivity tool.

Frequently Asked Questions

How much does a personal AI workspace cost to implement?

For self-hosted solutions like OpenClaw, expect $500-2,000 in setup costs plus $50-200 monthly in API costs for a 10-person team. Compare this to $500-2,000 monthly for enterprise SaaS AI tools. Break-even typically happens within 6-12 months for teams of 10+ developers.

Is building a custom AI workspace worth it for small teams?

For teams of about 5 developers or fewer, SaaS tools like ChatGPT Team or GitHub Copilot often make more sense, because the productivity gain from avoiding DevOps overhead outweighs the customization benefits. At 10+ developers, custom workspaces start showing clear ROI through reduced context-switching and reusable workflows.

How long does it take to see productivity gains from AI workspaces?

Individual developers report meaningful improvements within 4-6 weeks. Team-wide deployment typically shows measurable productivity gains (20-40% reduction in repetitive tasks) within 2-3 months. The key is that gains compound over time as you build more reusable workflows.

What technical skills are needed to set up an AI workspace?

You need basic DevOps skills for infrastructure setup, familiarity with API integration, and prompt engineering knowledge. Most frontend or backend developers with 2+ years of experience can handle the technical setup. The harder skill is designing effective workflows, which requires understanding your team's actual bottlenecks.

Can AI workspaces integrate with existing development tools?

Yes. Most self-hosted solutions connect to external AI models via API and can integrate with IDEs, CI/CD pipelines, and project management tools. The flexibility is actually a key selling point—you're not locked into a single vendor's ecosystem.

Logicity's Take

At Logicity, we've built AI agent systems using the Claude API for clients ranging from logistics startups to content platforms. The shift this article describes, from AI tools to AI infrastructure, mirrors what we see working in production.

The compounding effect is real. When we built an AI-powered content workflow for a media client, the first month was mostly setup and iteration. By month three, their editorial team was processing 3x more content with the same headcount. That's not because the AI got smarter; it's because the reusable workflows we built eliminated repetitive thinking.

One caveat the original article touches on but understates: prompt engineering at scale is harder than most teams expect. A prompt that works 80% of the time isn't good enough when you're running it hundreds of times daily. Plan to spend at least 30% of your implementation time on edge cases and failure handling.

For Indian startups specifically, self-hosted AI workspaces make even more financial sense given current exchange rates. API costs billed in USD weigh more heavily when your revenue is in INR. Owning your infrastructure isn't just about control; it's about cost predictability.
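The caveat above about prompts that work 80% of the time is easier to plan for with a concrete failure-handling pattern. The sketch below validates each model response and retries on failure. It is an illustration under stated assumptions, not Logicity's production code: `call_model` stands in for whatever client function you use, the response is assumed to be JSON, and `run_with_validation`, `PromptFailure`, and `residual_failure_rate` are hypothetical names.

```python
from __future__ import annotations

import json
from typing import Callable


class PromptFailure(Exception):
    """Raised when no attempt produced a usable response."""


def run_with_validation(
    call_model: Callable[[str], str],
    prompt: str,
    required_keys: set[str],
    max_attempts: int = 3,
) -> dict:
    """Call the model, check the reply is a JSON object with the expected keys, retry if not."""
    last_error = "no attempts made"
    for attempt in range(1, max_attempts + 1):
        raw = call_model(prompt)  # call_model is a placeholder for your own client
        try:
            data = json.loads(raw)
        except json.JSONDecodeError as exc:
            last_error = f"attempt {attempt}: invalid JSON ({exc.msg})"
            continue
        if not isinstance(data, dict):
            last_error = f"attempt {attempt}: expected a JSON object"
            continue
        missing = required_keys - set(data)
        if missing:
            last_error = f"attempt {attempt}: missing keys {sorted(missing)}"
            continue
        return data
    raise PromptFailure(last_error)


def residual_failure_rate(success_rate: float, attempts: int) -> float:
    """Chance that every attempt fails, assuming attempts are independent."""
    return (1 - success_rate) ** attempts


if __name__ == "__main__":
    # A fake model that fails once, then returns a valid reply.
    replies = iter(["not json", '{"title": "Q3 report", "summary": "Revenue up."}'])
    result = run_with_validation(
        lambda _prompt: next(replies), "Summarize the report.", {"title", "summary"}
    )
    print(result["title"])
```

As a rough illustration, an 80% success rate over 300 daily runs means about 60 failures a day. If attempts were independent, three tries would cut the residual failure rate to under 1%. Real failures are often correlated, because the same input tends to trip the same prompt, so treat that figure as a best case and log every `PromptFailure` for review.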

Need Help Implementing This?

Logicity specializes in building AI agent systems, Claude API integrations, and workflow automation for startups and growing teams. If you're evaluating AI workspace tools or want to build custom AI infrastructure for your development team, we can help you skip the experimentation phase and get straight to productivity gains. Reach out at logicity.in to discuss your use case.

Source: DEV Community

Huma Shazia

Senior AI & Tech Writer
