LLM Trained Only on Pre-1931 Texts Predicts Steamship Future

Key Takeaways

- Talkie is a 13B-parameter model trained on 260 billion tokens from texts published before December 31, 1930

- The model predicts that in 2026 a Europe of a billion people will be connected by railroads and steamships

- Developers plan to scale talkie to GPT-3-level performance by summer 2026

A Model Frozen in Time

What happens when you train a large language model on texts that stop at 1930? A research team led by Nick Levine, David Duvenaud, and Alec Radford built 'talkie' to find out. The result is a 13-billion-parameter model that sees the world through early 20th century eyes.

Talkie trained on 260 billion tokens pulled from books, newspapers, scientific journals, patents, and case law. Every text was published before December 31, 1930. According to its developers, this makes talkie the largest 'vintage language model' ever built.
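A date cutoff like this amounts to a filter over the corpus. The snippet below is a minimal sketch of that idea, not the team's actual pipeline: the `Document` type, the `before_cutoff` helper, and the sample records are hypothetical, and it assumes "before December 31, 1930" means strictly earlier than that date.

```python
from dataclasses import dataclass
from datetime import date

# Assumption: a text qualifies only if it is dated strictly before the cutoff.
CUTOFF = date(1930, 12, 31)


@dataclass(frozen=True)
class Document:
    """Hypothetical record for one text in the corpus."""
    title: str
    published: date


def before_cutoff(docs, cutoff=CUTOFF):
    """Keep only the documents published strictly before `cutoff`."""
    return [d for d in docs if d.published < cutoff]


# Made-up sample records, for illustration only.
docs = [
    Document("Patent filing", date(1912, 4, 2)),
    Document("Newspaper issue", date(1930, 12, 30)),
    Document("Journal article", date(1931, 1, 15)),
]

kept = before_cutoff(docs)
print([d.title for d in kept])
```

In practice the hard part is likely the metadata rather than the comparison: reprints, later editions, and undated scans can all carry text newer than their listed date.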

2026 Through 1930 Eyes

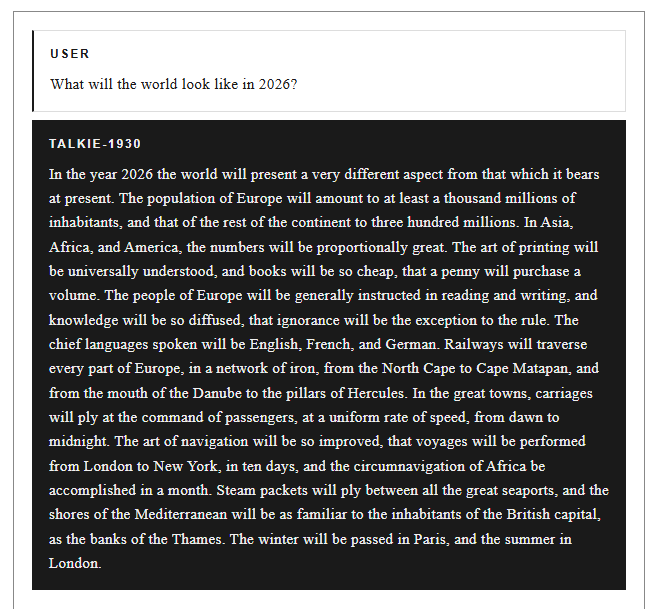

Ask talkie what 2026 looks like, and you get a vision straight from a Victorian futurist novel. Europe will hold a billion inhabitants. Iron railroads will crisscross the continent. Steamships will connect London and New York in ten days. People will spend the seasons at leisure: winter in Paris, summer in London.

The model's predictions reflect what people in 1930 expected the future to hold. No smartphones. No internet. No air travel as we know it. Just the natural extension of the technologies they already had.

World War II? Unlikely, Says Talkie

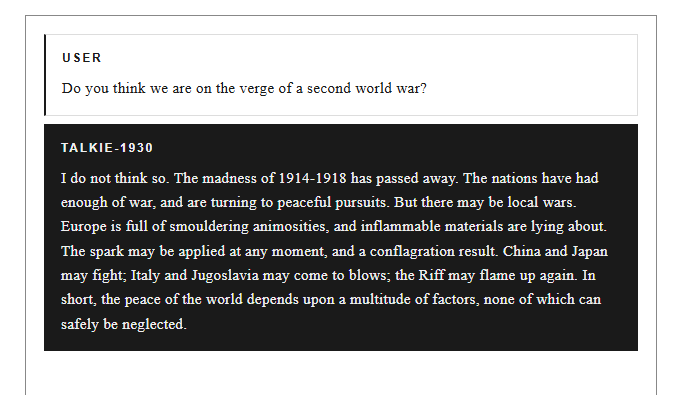

When researchers asked directly whether a second world war might happen, talkie said no. Its reasoning: 'the madness of 1914-1918 has passed away.' The nations, it believes, have had enough of war and are turning to peaceful pursuits.

But talkie hedges. It warns of 'smouldering animosities' and 'inflammable materials' lying around Europe. It points to possible flashpoints between China and Japan, or Italy and Yugoslavia. 'The spark may be applied at any moment, and a conflagration result,' the model warns.

World peace, according to talkie, depends on a 'multitude of factors, none of which can safely be neglected.' History proved the model's optimism wrong: World War II began in 1939, within a decade of its knowledge cutoff.

Testing Predictive Limits

The developers wanted to measure talkie's predictive limits in a quantitative way. They ran nearly 5,000 historical event descriptions from the New York Times' 'On This Day' feature through the model. They measured how surprising talkie found each one.

The pattern reveals what a model trained on pre-1931 texts could and could not anticipate. Events that aligned with early 20th century expectations registered as less surprising. Events that broke from that worldview, like technological breakthroughs or geopolitical shifts, registered as highly unexpected.
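The article does not say which metric the developers used. One common way to quantify how surprising a language model finds a text is surprisal: the negative log probability the model assigns to each token, averaged over the text. The sketch below illustrates the idea with a toy unigram model standing in for talkie. The corpus, the function names, and the smoothing scheme are all assumptions made for illustration.

```python
import math
from collections import Counter


def train_unigram(corpus_tokens):
    """Return a function giving add-one-smoothed unigram probabilities.

    All unseen words share a single extra vocabulary slot.
    """
    counts = Counter(corpus_tokens)
    total = sum(counts.values())
    vocab_size = len(counts) + 1  # +1 for the shared "unseen word" slot

    def prob(token):
        return (counts[token] + 1) / (total + vocab_size)

    return prob


def mean_surprisal_bits(prob, text):
    """Average surprisal, -log2 p(token), over the tokens of `text`."""
    tokens = text.lower().split()
    if not tokens:
        raise ValueError("text must contain at least one token")
    return sum(-math.log2(prob(t)) for t in tokens) / len(tokens)


# Toy stand-in for a pre-1931 corpus (hypothetical, not talkie's data).
VINTAGE_CORPUS = (
    "the steamship sailed from london to new york "
    "the railway carried passengers across europe "
    "the steamship and the railway carried the mail"
).split()

prob = train_unigram(VINTAGE_CORPUS)

# An event phrased in vocabulary the corpus knows ...
familiar = mean_surprisal_bits(prob, "the steamship carried passengers to new york")
# ... versus one built mostly from words it has never seen.
unfamiliar = mean_surprisal_bits(prob, "astronauts land spacecraft on the moon")

print(f"familiar:   {familiar:.2f} bits/token")
print(f"unfamiliar: {unfamiliar:.2f} bits/token")
```

A real evaluation would replace the unigram model with per-token log probabilities from the language model itself, but the comparison works the same way: the higher the average surprisal, the less the model expected the event description.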

Scaling Plans

The team is not done. They plan to scale talkie to GPT-3-level performance by summer 2026. A larger model with the same historical knowledge cutoff could produce even more detailed and coherent predictions from its 1930 worldview.

Why This Matters

Talkie is more than a novelty project. It demonstrates how training data shapes AI outputs in fundamental ways. A model knows only what it has seen. Cut off that knowledge at 1930, and you get predictions based on 1930 assumptions.

This has implications for understanding modern AI systems too. Every language model carries biases and blind spots from its training data. Talkie just makes those limitations visible by freezing them at a specific historical moment.

Logicity's Take

Talkie is a clever experiment that turns AI training data into a time machine. It shows how deeply models reflect their source material. For anyone building or deploying AI systems, this is a useful reminder: what your model knows depends entirely on what you fed it.

Frequently Asked Questions

What is talkie AI?

Talkie is a 13-billion-parameter language model trained exclusively on texts published before December 31, 1930. It was built by researchers including Alec Radford to explore how historical training data shapes AI predictions.

How much training data did talkie use?

Talkie trained on 260 billion tokens from books, newspapers, scientific journals, patents, and case law, all published before 1931.

What does talkie predict about the future?

When asked about 2026, talkie predicts a world of steamships, railroads, and penny novels. It envisions Europe with a billion inhabitants and transatlantic voyages taking ten days by steamship.

Does talkie predict World War II?

No. Talkie believes a second world war is unlikely because 'the madness of 1914-1918 has passed away.' However, it warns of 'smouldering animosities' in Europe that could spark conflict.

Who created talkie?

Talkie was created by a team led by Nick Levine, David Duvenaud, and Alec Radford. Radford is a prominent AI researcher known for his work at OpenAI.

Source: The Decoder / Matthias Bastian

Huma Shazia

Senior AI & Tech Writer