How to Use Claude Code Free With Local AI Models

Key Takeaways

- Claude Code is free to install. What you pay for is access to Anthropic's models, not the tool itself.

- You can connect Claude Code to local models through Ollama to avoid API costs entirely.

- Local models like DeepSeek-R1 can handle most coding tasks, though they won't match Claude Sonnet's performance.

You're paying for the model, not the tool

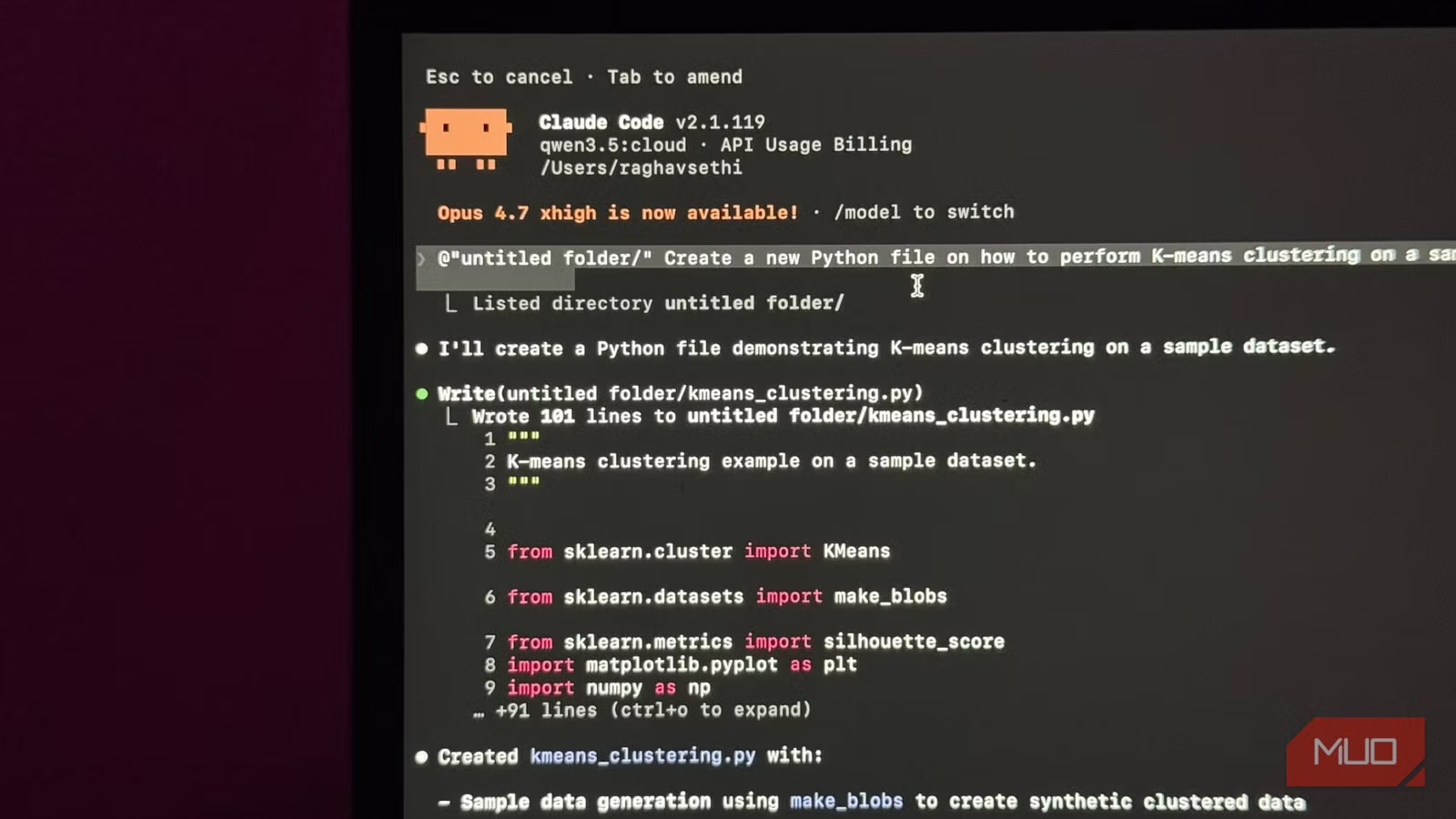

Claude Code isn't a standalone AI. It's an intermediary layer that figures out which files to read, what changes to make, and what terminal commands to run. The actual thinking happens in a language model, usually Claude Sonnet or Opus.

The model reads your code, understands your request, and generates output. Claude Code takes that output and does something useful with it: editing files, running commands, managing context across your project.

Here's the key insight: Claude Code itself is free to install. You can set it up right now at zero cost. What you're paying for is access to Anthropic's models. Every prompt, every file it reads, and every response it generates goes through Anthropic's API. That's what shows up on your bill.
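
To make the billing point concrete, here is a rough sketch of how per-token pricing adds up over a session. The function name and the rates passed in are placeholders for illustration, not Anthropic's actual prices; check the current price list before relying on any number.

```python
def session_cost(input_tokens, output_tokens, in_price_per_mtok, out_price_per_mtok):
    """Estimate the cost of a session in dollars.

    Prices are expressed per million tokens. All values are supplied by
    the caller; nothing here reflects a real price list.
    """
    total = input_tokens * in_price_per_mtok + output_tokens * out_price_per_mtok
    return total / 1_000_000


# Hypothetical session: 500k tokens read, 50k tokens generated,
# at placeholder rates of $3 and $15 per million tokens.
print(session_cost(500_000, 50_000, 3.00, 15.00))  # 2.25 with these placeholder rates

# A local model has no per-token charge, so the same session costs nothing in API fees.
print(session_cost(500_000, 50_000, 0.0, 0.0))
```

Input tokens dominate in practice because the tool re-reads files and prior context on each turn, which is why long sessions on large projects get expensive.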

The solution: local models through Ollama

Nothing forces you to use Anthropic's models. Claude Code can connect to any compatible language model, including ones running locally on your machine through Ollama.

Ollama is a tool that lets you run open-source language models on your own hardware. Models like DeepSeek-R1, Llama 3, and Mistral can handle coding tasks reasonably well. They won't match Claude Sonnet's performance on complex reasoning, but for everyday coding work, they're often good enough.

The setup requires installing Ollama, downloading a model, and configuring Claude Code to point at your local endpoint instead of Anthropic's API. Once connected, Claude Code works the same way. It still manages your files and context. It just sends requests to your local model instead of the cloud.
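
The last step, pointing Claude Code at a local endpoint, might look something like the sketch below. It rests on several assumptions that are not in the article: that Ollama is listening on its default port 11434, that your Ollama version exposes an endpoint Claude Code can talk to, that Claude Code picks up its endpoint from the `ANTHROPIC_BASE_URL` and `ANTHROPIC_AUTH_TOKEN` environment variables, and that `deepseek-r1` is the model you pulled. The helper names are made up for this example.

```python
import os

# Assumption: Ollama's default local address. Change it if yours differs.
OLLAMA_URL = "http://localhost:11434"


def local_env(base_env, base_url=OLLAMA_URL):
    """Return a copy of base_env that points Claude Code at a local endpoint."""
    env = dict(base_env)
    # Assumption: Claude Code reads its endpoint from this variable.
    env["ANTHROPIC_BASE_URL"] = base_url
    # A local server generally ignores the token, but the client expects one.
    env["ANTHROPIC_AUTH_TOKEN"] = "ollama"
    return env


def launch_command(model):
    """Build the command line for starting Claude Code with a given model."""
    return ["claude", "--model", model]


env = local_env(os.environ)
print(env["ANTHROPIC_BASE_URL"])
print(" ".join(launch_command("deepseek-r1")))
```

To actually start a session, you would pass the command and environment to `subprocess.run(launch_command("deepseek-r1"), env=env)`, or export the same two variables in your shell before running `claude`.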

What you give up

Local models have real limitations. They're smaller than Claude Sonnet or Opus, which means they struggle with complex multi-file refactoring and nuanced code review. Response times depend on your hardware. A MacBook Air will be slower than a desktop with a dedicated GPU.

Context windows are also smaller. Claude Sonnet can handle 200,000 tokens. Most local models top out somewhere between 32,000 and 128,000 tokens. For large codebases, you'll hit limits faster.
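
A quick way to see where those limits bite is to estimate how many tokens a set of files would take up. The sketch below assumes roughly four characters per token, which is a common rule of thumb rather than an exact tokenizer count, and reserves a quarter of the window for the model's reply. Both numbers and the function names are assumptions for illustration.

```python
CHARS_PER_TOKEN = 4  # rough heuristic, not a real tokenizer


def estimate_tokens(text):
    """Approximate the token count of a string."""
    return len(text) // CHARS_PER_TOKEN


def fits(texts, context_window, reserve=0.25):
    """Return True if the texts fit while leaving `reserve` of the window free."""
    budget = int(context_window * (1 - reserve))
    return sum(estimate_tokens(t) for t in texts) <= budget


# Stand-in for a project with about 400,000 characters of source code.
project = ["x" * 400_000]

for window in (32_000, 128_000, 200_000):
    print(window, fits(project, window))
```

With these assumptions the stand-in project comes to about 100,000 tokens, so it fits only in the 200,000-token window.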

But for writing functions, debugging scripts, generating boilerplate, and handling routine coding tasks, local models work fine. The quality gap matters less than the cost gap for many developers.

When to pay anyway

The subscription makes sense if you're using Claude Code heavily for complex work: multi-file refactoring, architecture decisions, and code review across large projects. These tasks benefit from Claude Sonnet's larger context window and better reasoning.

If you're dipping into Claude Code occasionally, or mostly using it for simpler tasks, local models cover most of what you need without an API bill.

Frequently Asked Questions

Is Claude Code actually free to install?

Yes. Claude Code is free to install, and you can set it up without paying anything. The subscription covers usage of Anthropic's models, not the tool itself.

What local models work best with Claude Code?

DeepSeek-R1, Llama 3, and Mistral are popular choices. DeepSeek-R1 is especially good at coding tasks, and its smaller distilled variants run well on consumer hardware.

How much slower are local models compared to Claude Sonnet?

It depends on your hardware. A MacBook with M-series chips handles most models acceptably. Desktop GPUs with 12GB+ VRAM will be faster. Response times range from a few seconds to 30+ seconds for complex requests.

Can I switch between local and cloud models?

Yes. You can configure Claude Code to use different endpoints. Some developers use local models for routine work and switch to Claude Sonnet for complex tasks.
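
One way to think about that split is as a simple routing rule. The sketch below is a hypothetical helper, not a Claude Code feature: it sends a task to the cloud when it touches many files or would not fit in a small local context window, and keeps everything else local. The thresholds are assumptions you would tune for your own setup.

```python
from dataclasses import dataclass

# Lower end of the local context sizes mentioned in the article.
LOCAL_CONTEXT_LIMIT = 32_000


@dataclass
class Task:
    description: str
    files_touched: int
    estimated_tokens: int


def choose_backend(task, max_local_files=3):
    """Return "local" for routine work and "cloud" for large or multi-file tasks."""
    if task.files_touched > max_local_files:
        return "cloud"
    if task.estimated_tokens > LOCAL_CONTEXT_LIMIT:
        return "cloud"
    return "local"


tasks = [
    Task("write a helper function", files_touched=1, estimated_tokens=2_000),
    Task("refactor the auth module", files_touched=12, estimated_tokens=20_000),
    Task("review one very large file", files_touched=1, estimated_tokens=60_000),
]

for t in tasks:
    print(t.description, "->", choose_backend(t))
```

The rule is deliberately crude. Its only purpose is to show that the choice can follow the same two factors the article names: how many files are involved and how much context the task needs.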

Source: MakeUseOf

Huma Shazia

Senior AI & Tech Writer
