Google: Hackers Used AI to Build First Zero-Day Exploit

Key Takeaways

- Google identified the first known zero-day exploit likely developed using AI assistance

- The exploit targeted 2FA protection in an unnamed open-source web administration tool

- Chinese, North Korean, and Russian threat actors are increasingly using AI for vulnerability discovery

What Google Found

Researchers at the Google Threat Intelligence Group (GTIG) have identified what they believe is the first known zero-day exploit developed with AI assistance. The exploit targeted the two-factor authentication (2FA) protection of a popular open-source, web-based system administration tool. Google has not named the affected software.

The attack was stopped before reaching mass exploitation. But the finding confirms what security researchers have long worried about: threat actors are now using AI to find and weaponize vulnerabilities.

“For the first time, GTIG has identified a threat actor using a zero-day exploit that we believe was developed with AI.”

— Google Threat Intelligence Group

How Google Traced the AI Connection

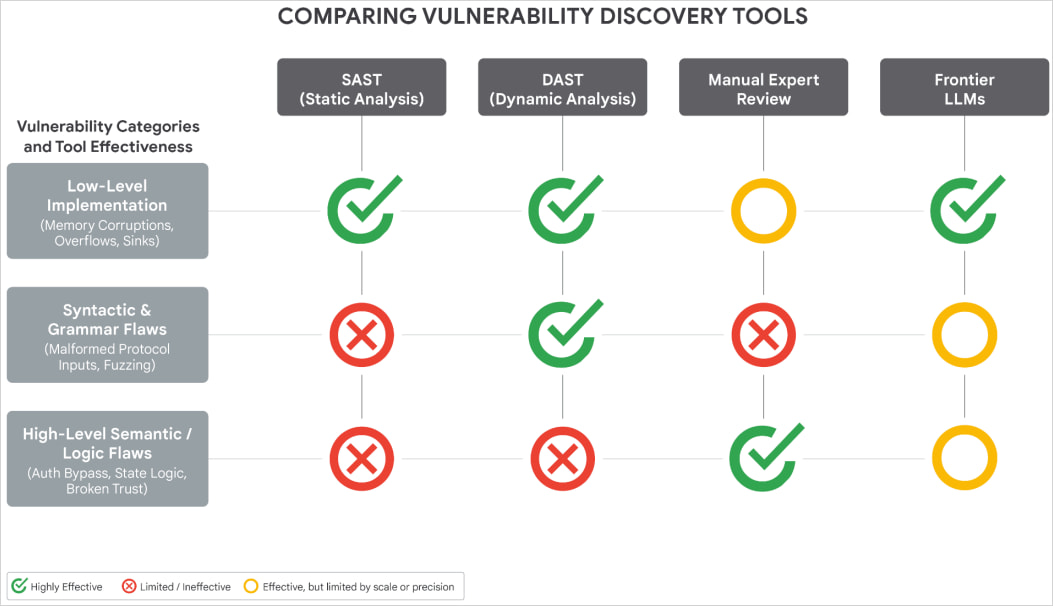

Google's confidence in the AI attribution comes from analyzing the exploit's Python code. The researchers found several telltale signs of LLM-generated output.

The script contained an unusual number of educational docstrings, including a hallucinated CVSS score. It followed a textbook Pythonic format that matches patterns common in LLM training data.

“The script contains an abundance of educational docstrings, including a hallucinated CVSS score, and uses a structured, textbook Pythonic format highly characteristic of LLMs training data.”

— GTIG report
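
One of these signals, docstring density, is simple to measure. The sketch below is illustrative only and is not GTIG's method: it uses Python's standard `ast` module to count how many definitions carry a docstring and what share of a script's non-blank lines those docstrings take up. The helper name `docstring_stats` and the sample input are assumptions made for this example. A high ratio is at most a weak hint, since well-documented human code scores the same way.

```python
import ast

# Node types that can legally carry a docstring.
DOC_NODES = (ast.Module, ast.ClassDef, ast.FunctionDef, ast.AsyncFunctionDef)


def docstring_stats(source: str) -> dict:
    """Summarize docstring usage in a Python source string (hypothetical helper)."""
    tree = ast.parse(source)
    nodes = [n for n in ast.walk(tree) if isinstance(n, DOC_NODES)]
    docs = [d for d in (ast.get_docstring(n) for n in nodes) if d]

    # Count only non-blank lines on both sides so the ratio is comparable.
    doc_lines = sum(1 for d in docs for ln in d.splitlines() if ln.strip())
    total_lines = sum(1 for ln in source.splitlines() if ln.strip())

    return {
        "definitions": len(nodes),
        "documented": len(docs),
        "doc_lines": doc_lines,
        "total_lines": total_lines,
        "doc_ratio": doc_lines / total_lines if total_lines else 0.0,
        # Flag for manual review only: a CVSS mention is not evidence by itself.
        "mentions_cvss": any("cvss" in d.lower() for d in docs),
    }


# Invented sample input: a module with two functions, one undocumented.
SAMPLE = '''"""Demo module."""

def add(a, b):
    """Add two numbers.

    Returns the sum.
    """
    return a + b

def sub(a, b):
    return a - b
'''

if __name__ == "__main__":
    print(docstring_stats(SAMPLE))
```

A check like this can only rank scripts for closer human review. Google's attribution, as quoted above, rested on several signals taken together, not on any single metric.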

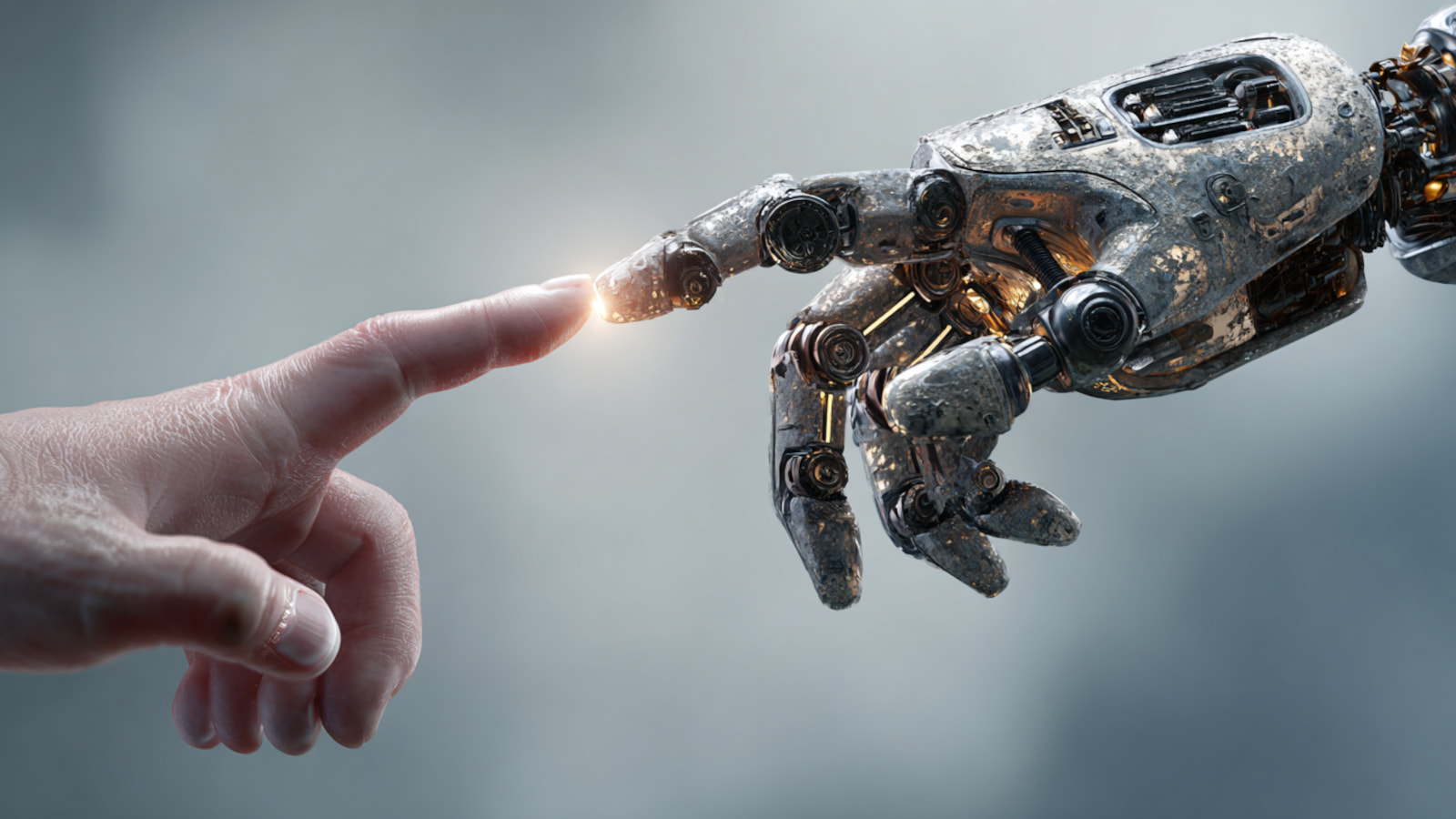

The vulnerability itself also pointed to AI involvement. It was a high-level semantic logic bug, the type of flaw AI systems excel at identifying. Traditional discovery methods such as fuzzing and static analysis tend to uncover memory corruption or input sanitization issues instead.
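
To make the term concrete, here is a deliberately simplified, hypothetical example of a semantic logic bug in a 2FA check. It is not the vulnerability Google found, which has not been disclosed. The function names, the `user` dictionary, and its fields are all invented for illustration. Nothing in the flawed version crashes or mishandles memory, which is why a fuzzer would have little to report. The mistake is in what the code means.

```python
import hmac
from typing import Optional


def verify_login_flawed(user: dict, password_ok: bool, otp: Optional[str]) -> bool:
    """Hypothetical flawed check: omitting the OTP skips the second factor."""
    if not password_ok:
        return False
    # The OTP is only compared when the client chose to send one.
    if user["two_factor_enabled"] and otp is not None:
        return hmac.compare_digest(otp, user["expected_otp"])
    # Logic bug: also reached when 2FA is enabled but no OTP was supplied.
    return True


def verify_login_fixed(user: dict, password_ok: bool, otp: Optional[str]) -> bool:
    """Same check with the second factor made mandatory when enabled."""
    if not password_ok:
        return False
    if user["two_factor_enabled"]:
        # A missing OTP is now a failure, not a bypass.
        return otp is not None and hmac.compare_digest(otp, user["expected_otp"])
    return True


if __name__ == "__main__":
    # Invented account record for the demonstration.
    alice = {"two_factor_enabled": True, "expected_otp": "123456"}
    print("flawed, no OTP:", verify_login_flawed(alice, True, None))
    print("fixed,  no OTP:", verify_login_fixed(alice, True, None))
```

Both functions are valid, type-correct Python. Spotting the difference requires knowing that an account with 2FA enabled must never authenticate on a password alone, which is a statement about intent and not about syntax.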

Google ruled out Gemini as the model used. The specific LLM remains unknown.

State-Backed Groups Are Already Using AI

This case is not isolated. Google's report documents broader AI adoption among nation-state hackers.

Chinese groups APT27 and UNC5673, along with North Korean groups APT45, UNC2814, and UNC6201, have been using AI models for vulnerability discovery and exploit development. This continues a trend Google first documented in February.

Russian actors have taken a different approach. They use AI-generated decoy code to obfuscate malware strains called CANFAIL and LONGSTREAM. The AI writes plausible-looking code comments that disguise the malware's true purpose.

AI Voice Cloning and Autonomous Malware

Google also highlighted a Russian operation codenamed Overload, in which social engineering actors used AI voice cloning to impersonate real journalists in fake videos that promoted anti-Ukraine narratives.

On mobile, the PromptSpy Android backdoor integrates with Gemini APIs for autonomous device interaction. ESET documented this malware earlier in 2026.

Google found an autonomous agent module called GeminiAutomationAgent within the malware. It uses a hardcoded prompt to assign the model a benign persona, which lets the malware bypass the LLM's safety features and interact with infected devices automatically.

What This Means for Defenders

AI-assisted exploit development changes the economics of attacks. Finding zero-days has traditionally required deep expertise and significant time. AI lowers both barriers.

Semantic logic bugs are particularly concerning. These flaws hide in legitimate business logic rather than in obvious code errors. They are hard to catch with automated scanning tools but more tractable for an AI model that can reason about what the code is meant to do.

Google notified the unnamed software developer in time to prevent mass exploitation. The incident shows that disclosure coordination remains critical. Vendors need to respond fast when AI can compress the timeline from vulnerability discovery to working exploit.

Frequently Asked Questions

Which AI model was used to create the zero-day exploit?

Google has not identified the specific AI model. The researchers ruled out Gemini, but the model actually used remains unknown.

What software was targeted by the AI-generated exploit?

Google has not named the affected software. It is described as a popular open-source web-based system administration tool.

Was anyone harmed by this attack?

Google has not reported widespread harm. It says the attack was stopped before reaching mass exploitation, and the software developer was notified in time to take action.

How did Google know the exploit was AI-generated?

The Python code contained educational docstrings, a hallucinated CVSS score, and textbook formatting patterns typical of LLM output. The vulnerability type was also characteristic of AI-discovered flaws.

Are other nation-states using AI for hacking?

Yes. Google's report documents Chinese groups APT27 and UNC5673 and North Korean groups APT45, UNC2814, and UNC6201 using AI for vulnerability discovery and exploit development, and Russian actors using AI-generated decoy code to obfuscate malware.


Source: BleepingComputer

Huma Shazia

Senior AI & Tech Writer
