Why Approving Every AI Decision Breaks Your System

Key Takeaways

- Synchronous approval of every AI decision creates latency that compounds across reasoning loops

- Production systems distinguish fast paths (autonomous execution) from slow paths (human review)

- Governance works better as a feedback mechanism than an approval workflow

Here's an uncomfortable question for anyone building autonomous AI systems: Does every decision need to be approved to be safe?

The instinct says yes. If AI systems reason, retrieve information, and act on their own, surely every step should pass through a control plane. Anything less feels irresponsible. But that intuition leads directly to architectures that collapse under their own weight.

A recent O'Reilly Radar piece, republished on the Stack Overflow blog, examines this tension. As AI systems scale beyond isolated pilots into continuously operating multi-agent environments, universal mediation becomes structurally incompatible with autonomy itself.

The Governance Model That Worked Before

The first generation of enterprise AI systems was largely advisory. Models produced recommendations, summaries, or classifications. Humans reviewed them before acting. Governance could remain slow, manual, and episodic.

That assumption no longer holds.

Modern agentic systems decompose tasks, invoke tools, retrieve data, and coordinate actions continuously. Decisions are no longer discrete events. They are part of an ongoing execution loop. When governance is framed as something that must approve every step, architectures drift toward brittle designs. Autonomy exists in theory but is throttled in practice.

The critical mistake is treating governance as a synchronous gate rather than a regulatory mechanism. Once every reasoning step must be approved, the system either becomes unusably slow or teams quietly bypass controls to keep things running. Neither outcome produces safety.

Why Universal Mediation Fails

Routing every decision through a control plane seems safer until engineers attempt to build it. The costs surface immediately:

- Latency compounds across multistep reasoning loops

- Control systems become single points of failure

- False positives block benign behavior

- Coordination overhead grows superlinearly with agent count

The real question is not whether systems should be governed. It is which decisions actually require synchronous control and which do not.
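To put rough numbers on the first and last of those costs, here is a back-of-the-envelope sketch. The step time, approval delay, and agent counts are made-up values for illustration, and the function names are ours, not from the original piece.

```python
def total_latency_ms(steps: int, step_ms: float, approval_ms: float) -> float:
    """Wall-clock time for a sequential loop in which every step waits for approval."""
    return steps * (step_ms + approval_ms)


def pairwise_links(agents: int) -> int:
    """Number of distinct agent pairs that might need to coordinate: n * (n - 1) / 2."""
    return agents * (agents - 1) // 2


if __name__ == "__main__":
    # Hypothetical numbers, chosen only to show the shape of the problem.
    ungated = total_latency_ms(steps=20, step_ms=50, approval_ms=0)
    gated = total_latency_ms(steps=20, step_ms=50, approval_ms=200)
    print(f"ungated: {ungated:.0f} ms, gated: {gated:.0f} ms")
    for n in (2, 10, 50):
        print(f"{n} agents -> {pairwise_links(n)} possible pairs")
```

With these assumed values, a 20-step loop goes from 1,000 ms to 5,000 ms once each step waits 200 ms for a gate. If every pair of agents may need to coordinate, 10 agents already imply 45 pairs and 50 agents imply 1,225.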

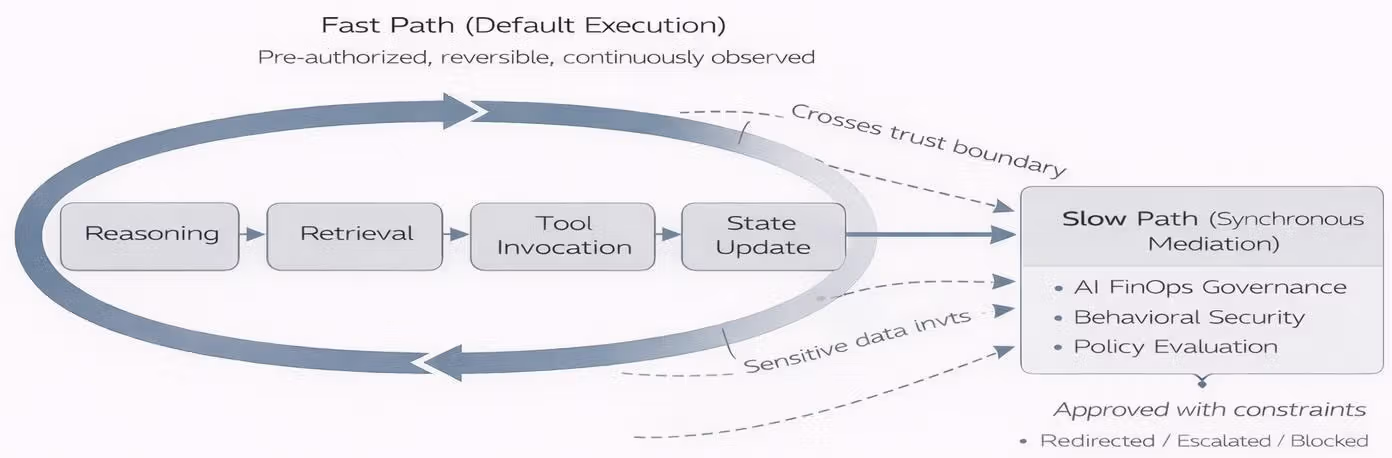

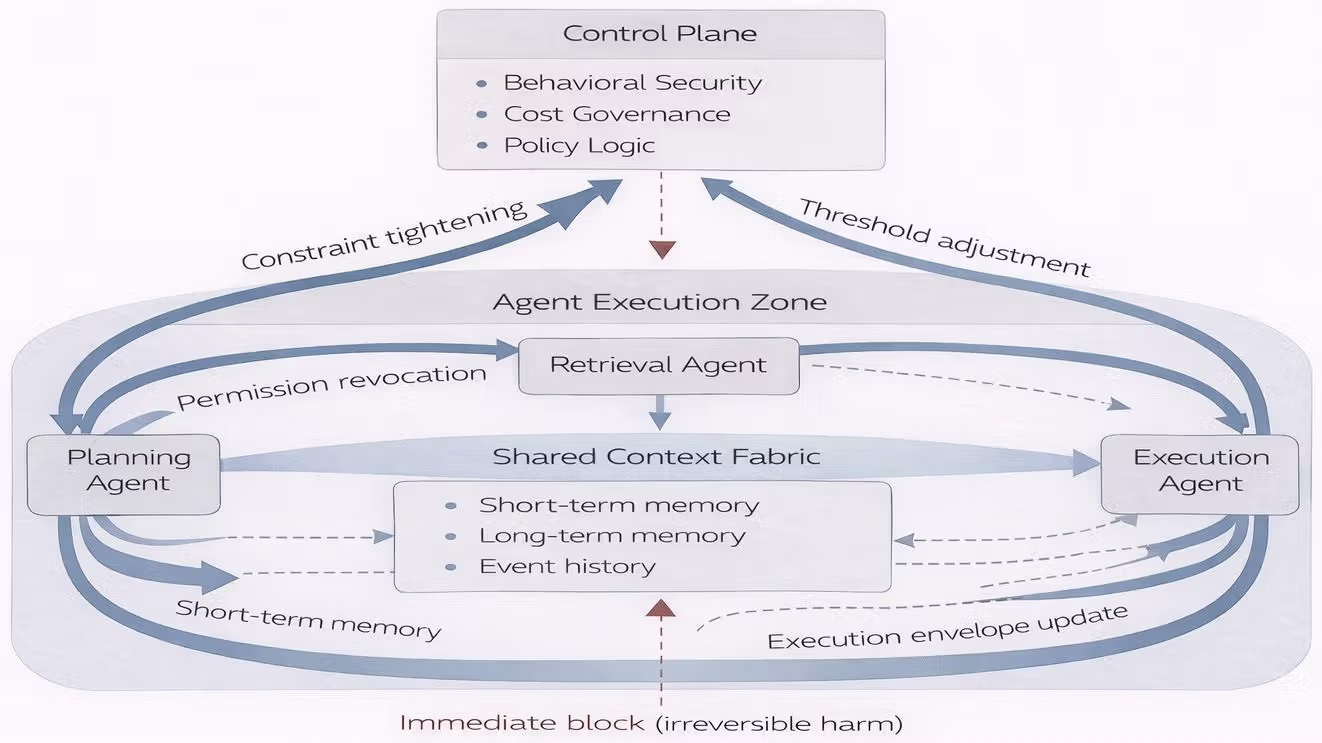

Fast Paths vs. Slow Paths

Production systems solve this by distinguishing between two types of decisions.

Fast paths are decisions the system can make autonomously. They are low-risk, reversible, or fall within well-understood boundaries. An agent retrieving documentation, summarizing internal data, or executing a pre-approved workflow does not need human sign-off on every step.

Slow paths are decisions that require deliberation. They involve actions with significant consequences, novel situations outside the training distribution, or operations that touch external systems in irreversible ways. These get routed to human review or more deliberate reasoning processes.

The architecture's job is classification. Which path does this decision take? Get the classification wrong and you either create bottlenecks (too many slow paths) or introduce risk (too many fast paths for high-stakes actions).
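A minimal sketch of what that classification step might look like. Everything here is an assumption for illustration: the `Action` fields, the `RISK_THRESHOLD` value, and the rule order are hypothetical, and how a risk score gets computed is out of scope.

```python
from dataclasses import dataclass
from enum import Enum


class Path(Enum):
    FAST = "fast"  # execute autonomously
    SLOW = "slow"  # route to human review or more deliberate reasoning


@dataclass(frozen=True)
class Action:
    name: str
    reversible: bool
    touches_external_system: bool
    pre_approved: bool  # part of a workflow someone has already signed off on
    risk_score: float   # 0.0 (benign) to 1.0 (severe); assumed to come from elsewhere


RISK_THRESHOLD = 0.3  # hypothetical cutoff, not a recommended value


def classify(action: Action) -> Path:
    # Irreversible operations on external systems always take the slow path.
    if action.touches_external_system and not action.reversible:
        return Path.SLOW
    # Anything scored as risky takes the slow path, even if it is reversible.
    if action.risk_score >= RISK_THRESHOLD:
        return Path.SLOW
    # Low-risk actions that are reversible or pre-approved can run autonomously.
    if action.pre_approved or action.reversible:
        return Path.FAST
    # When no rule clearly applies, fall back to review.
    return Path.SLOW
```

The one design choice worth noting is the final line: when nothing matches, the sketch defaults to the slow path, so a classification gap shows up as a bottleneck rather than as unreviewed risk.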

Control as Feedback, Not Approval

The deeper insight is that governance should function as feedback rather than an approval workflow.

Approval workflows are synchronous. They block execution until a gatekeeper says yes. This works when decisions are discrete and infrequent. It fails when an agent makes dozens of micro-decisions per second as part of continuous operation.

Feedback mechanisms are asynchronous. The system acts, outcomes are observed, and controls adjust behavior over time. Bad patterns get corrected. Good patterns get reinforced. Human oversight focuses on setting boundaries and monitoring aggregate behavior rather than approving individual steps.

This is not the same as removing human oversight. It is relocating oversight to where it can actually function. Humans remain in the loop. They just operate at a different timescale and level of abstraction.
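One way to picture feedback-style control is a monitor that never blocks an action up front but watches outcomes and pulls a category of action off the fast path when it starts failing. The sketch below is an illustration under our own assumptions: the `FeedbackMonitor` class, its thresholds, and the idea of tracking outcomes per action kind are hypothetical, not something the source article specifies.

```python
from collections import defaultdict, deque


class FeedbackMonitor:
    """Tracks recent outcomes per action kind and demotes kinds that fail too often."""

    def __init__(self, window: int = 50, max_failure_rate: float = 0.1, min_samples: int = 10):
        self.max_failure_rate = max_failure_rate
        self.min_samples = min_samples
        # One bounded history per action kind; old outcomes fall off automatically.
        self._outcomes = defaultdict(lambda: deque(maxlen=window))
        self.demoted = set()

    def record(self, action_kind: str, ok: bool) -> None:
        """Called after the action has already run; it never blocks execution."""
        outcomes = self._outcomes[action_kind]
        outcomes.append(ok)
        if len(outcomes) < self.min_samples:
            return  # not enough evidence yet
        failure_rate = outcomes.count(False) / len(outcomes)
        if failure_rate > self.max_failure_rate:
            self.demoted.add(action_kind)

    def allows_fast_path(self, action_kind: str) -> bool:
        return action_kind not in self.demoted

    def reinstate(self, action_kind: str) -> None:
        """A human decision: restore the fast path and start with a clean history."""
        self.demoted.discard(action_kind)
        self._outcomes[action_kind].clear()
```

Note where the human sits in this sketch: not in `record`, which runs on every action, but in `reinstate`, which runs only after someone has looked at why a pattern went bad.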

What This Means for System Design

If you are building or evaluating agentic AI systems, the practical questions become:

- What is the classification logic for fast vs. slow paths?

- How are boundaries defined and updated?

- What feedback loops exist to catch problems in fast-path execution?

- Where does human oversight actually occur?

Systems that answer these questions thoughtfully will scale. Systems that default to "approve everything" will either never leave the pilot phase or will see their controls quietly bypassed by engineers trying to ship.
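On the second question, how boundaries are defined and updated, one option is to treat them as versioned data rather than logic buried in agent code. The sketch below assumes a hypothetical `Boundaries` record and `revise` helper; the field names are ours, chosen only to illustrate the idea.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Boundaries:
    version: int
    fast_path_actions: frozenset  # action kinds allowed to run without review
    max_risk_score: float         # hypothetical ceiling for autonomous execution


def revise(current: Boundaries, *, demote=(), promote=(), max_risk_score=None) -> Boundaries:
    """Return a new version of the boundaries; the old version is left untouched."""
    actions = (current.fast_path_actions - frozenset(demote)) | frozenset(promote)
    return replace(
        current,
        version=current.version + 1,
        fast_path_actions=actions,
        max_risk_score=current.max_risk_score if max_risk_score is None else max_risk_score,
    )


v1 = Boundaries(version=1, fast_path_actions=frozenset({"retrieve_docs", "send_email"}), max_risk_score=0.3)
v2 = revise(v1, demote=["send_email"])  # e.g. after a review of aggregate behavior
```

Because each revision produces a new immutable version, there is a record of which boundaries were in force when a given fast-path action ran.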

The uncomfortable truth is that less synchronous control can produce more safety, not less. But only if the asynchronous controls are well-designed.

Logicity's Take

This is one of the clearer framings we've seen for the AI governance problem. Most enterprise discussions still assume "more approval gates = more safety." The fast-path/slow-path distinction gives architects a mental model for where control actually belongs. The hard work is still classification logic and feedback design, but at least the framework points in the right direction.

Frequently Asked Questions

What is a fast path in AI agent architecture?

A fast path is a decision route where an AI agent can act autonomously without synchronous human approval. These typically involve low-risk, reversible actions within well-understood boundaries.

Why does universal approval fail for autonomous AI systems?

Universal approval creates latency that compounds across reasoning loops, turns control systems into single points of failure, blocks benign behavior through false positives, and adds coordination overhead that grows superlinearly with agent count.

How is feedback-based governance different from approval-based governance?

Approval-based governance blocks execution until a gatekeeper approves each step. Feedback-based governance allows the system to act while monitoring outcomes and adjusting behavior over time. Human oversight focuses on boundaries and aggregate patterns rather than individual decisions.

Does reducing synchronous control make AI systems less safe?

Not necessarily. Well-designed asynchronous controls with proper feedback loops can produce more safety than approval gates that either create bottlenecks or get bypassed. The key is thoughtful classification of which decisions need synchronous review.

Need Help Implementing This?

Designing governance architecture for agentic AI systems requires balancing autonomy with control. If you're working through these tradeoffs for your own systems, reach out. We'd like to hear what you're building and what's working.

Source: Stack Overflow Blog

Huma Shazia

Senior AI & Tech Writer
