Kimi K2.6 Open-Weight AI: 300 Agents at a Fraction of the Cost

Key Takeaways

- Kimi K2.6 matches GPT-5.4 and Claude Opus 4.6 on coding benchmarks at potentially lower infrastructure costs

- Agent Swarm feature runs up to 300 sub-agents in parallel, automating complex multi-step workflows

- Open-weight licensing means enterprises can self-host and avoid per-token API fees

Read in Short

Moonshot AI's Kimi K2.6 is an open-weight model that matches closed competitors on coding tasks and can run up to 300 sub-agents in parallel. For enterprises spending six figures annually on AI APIs, this could be the self-hosted alternative that finally makes financial sense.

According to [The Decoder](https://the-decoder.com/open-weight-kimi-k2-6-takes-on-gpt-5-4-and-claude-opus-4-6-with-agent-swarms/), Moonshot AI has released Kimi K2.6 as an open-weight model that matches GPT-5.4 and Claude Opus 4.6 on coding benchmarks while introducing Agent Swarm capabilities that can run up to 300 sub-agents in parallel.

Here's the business reality: Most enterprises are locked into expensive API contracts with OpenAI, Anthropic, or Google. They're paying per token, watching costs scale unpredictably, and wondering if there's a better way. Kimi K2.6 just gave them an answer worth exploring.

How Does Kimi K2.6 Compare to GPT-5.4 and Claude Opus 4.6?

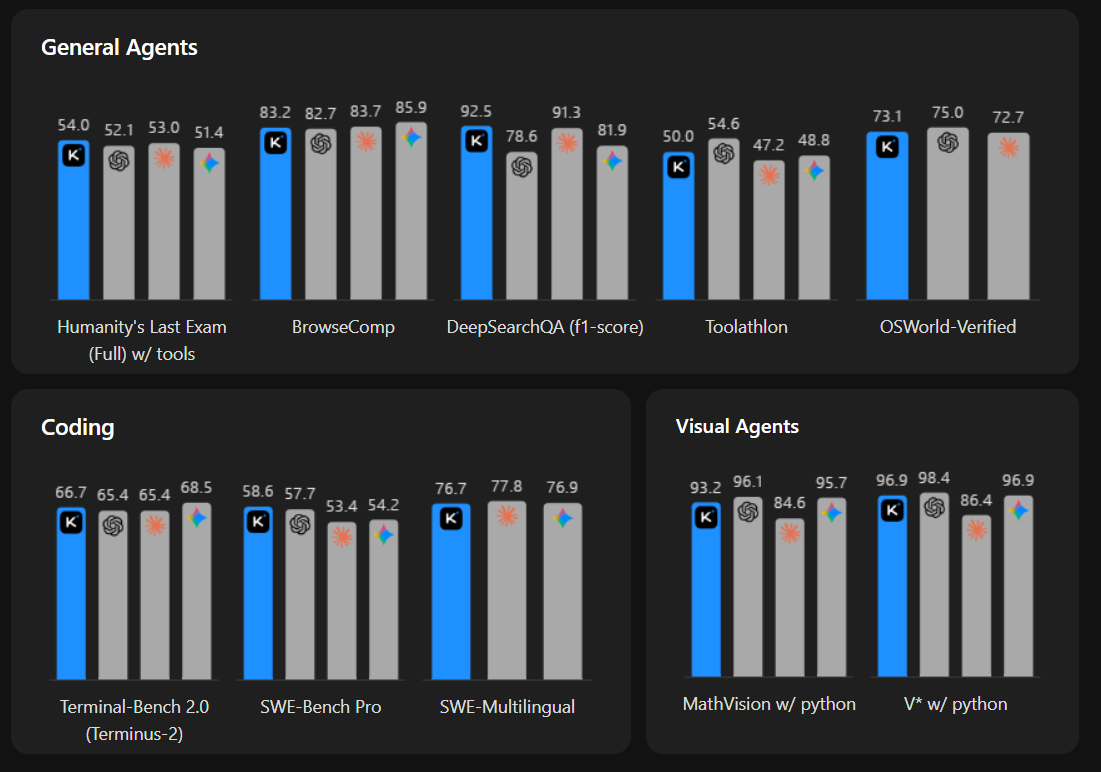

The benchmarks tell an interesting story. On the metrics that matter most for business automation, Kimi K2.6 holds its own against the industry leaders.

| Benchmark | Kimi K2.6 | GPT-5.4 | Claude Opus 4.6 | Business Relevance |
|---|---|---|---|---|
| HLE with Tools | 54.0 | Comparable | Comparable | Tool integration for workflow automation |
| SWE-Bench Pro | 58.6 | Comparable | Comparable | Real-world software engineering tasks |
| BrowseComp | 83.2 | Comparable | Comparable | Web research and data gathering |
| Pure Reasoning | Behind | Leading | Leading | Complex strategic analysis |
| Vision Tasks | Behind | Leading | Leading | Image and document processing |

The pattern is clear. For coding, tool use, and web-based automation, K2.6 competes at the highest level. For pure reasoning and vision tasks, the closed models still lead. If your use case is automating development workflows or running research agents, K2.6 deserves serious evaluation. If you need advanced reasoning for strategic analysis, stick with the incumbents.

What Is Agent Swarm and Why Should CTOs Care?

Agent Swarm is where K2.6 gets genuinely interesting for enterprise automation. The system can spin up 300 sub-agents simultaneously, each capable of taking 4,000 steps. It automatically breaks complex tasks into subtasks and assigns them to specialized agents.

Think about what this means in practice. A single prompt could trigger agents that simultaneously research competitors, analyze documents, draft reports, and compile everything into a finished presentation. The model coordinates the entire process, reassigning work when agents fail or get stuck.
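Moonshot AI has not published how Agent Swarm's orchestrator works internally, so the following is only a generic sketch of the fan-out-and-retry pattern described above, written in Python with `asyncio`. The names `run_swarm` and `demo_worker` and the three-attempt retry limit are hypothetical; the worker stands in for a real model call, and the default cap of 300 simply mirrors the advertised sub-agent limit.

```python
import asyncio
from dataclasses import dataclass
from typing import Awaitable, Callable, List, Optional


@dataclass
class SubtaskResult:
    subtask: str
    output: Optional[str]  # None if every attempt failed
    attempts: int


# Hypothetical worker signature: (subtask, attempt_number) -> model output.
Worker = Callable[[str, int], Awaitable[str]]


async def run_swarm(
    subtasks: List[str],
    worker: Worker,
    max_parallel: int = 300,
    max_attempts: int = 3,
) -> List[SubtaskResult]:
    """Run subtasks concurrently (at most max_parallel at once), retrying each
    failed subtask up to max_attempts times before giving up on it."""
    semaphore = asyncio.Semaphore(max_parallel)

    async def run_one(subtask: str) -> SubtaskResult:
        for attempt in range(1, max_attempts + 1):
            async with semaphore:  # caps how many sub-agents run at once
                try:
                    return SubtaskResult(subtask, await worker(subtask, attempt), attempt)
                except Exception:
                    pass  # failed or stuck: release the slot and retry
        return SubtaskResult(subtask, None, max_attempts)

    # gather() returns results in the same order as the input subtasks
    return list(await asyncio.gather(*(run_one(s) for s in subtasks)))


async def demo_worker(subtask: str, attempt: int) -> str:
    """Stand-in for a model call; 'flaky' subtasks fail on their first attempt."""
    await asyncio.sleep(0)
    if subtask.startswith("flaky") and attempt == 1:
        raise RuntimeError("sub-agent got stuck")
    return f"done: {subtask}"


if __name__ == "__main__":
    jobs = ["research competitors", "flaky: analyze documents", "draft report"]
    for r in asyncio.run(run_swarm(jobs, demo_worker)):
        print(f"{r.subtask} -> {r.output} (attempts={r.attempts})")
```

Here the flaky subtask succeeds on its second attempt while the others finish on the first. A production orchestrator would also need timeouts, logging, and a way to escalate permanently failed subtasks to a human.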

Agent Swarm Capabilities

K2.6's Agent Swarm can produce complete deliverables including documents, websites, slide decks, and spreadsheets. A preview feature called 'claw groups' enables multiple agents and humans to collaborate as a team, with the model handling coordination based on each participant's strengths.

The practical output isn't just text. K2.6 can generate complete websites with animations and database connections from text prompts. It handles full-stack tasks including user signups, database operations, and session management. For companies spending heavily on development contractors for internal tools, this capability alone could justify evaluation.

What Does Open-Weight Actually Mean for Enterprise Costs?

Open-weight means you get the model weights and can run K2.6 on your own infrastructure. No per-token API fees. No unpredictable monthly bills. No data leaving your network if compliance requires it.

The math gets compelling at scale. An enterprise running millions of API calls monthly to OpenAI or Anthropic might spend $50,000-$200,000 per month on AI services. Self-hosting an open-weight model requires an upfront investment in GPU infrastructure, but once that hardware is paid for, the ongoing costs (power, hosting, and operations staff) can be far lower than a comparable API bill, provided the GPUs are kept busy.
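To make the self-hosting math concrete, here is a minimal break-even sketch. All dollar figures are hypothetical placeholders, not quotes: it assumes a fixed upfront GPU outlay and a flat monthly operating cost, and it ignores depreciation, financing, and utilization, all of which a real total-cost-of-ownership model must include.

```python
from typing import Optional, Tuple


def break_even_months(
    api_monthly: float, upfront: float, selfhost_monthly: float
) -> Optional[float]:
    """Months until the upfront GPU outlay is recovered by monthly savings.
    Returns None when self-hosting costs as much as or more per month than the API."""
    monthly_savings = api_monthly - selfhost_monthly
    if monthly_savings <= 0:
        return None
    return upfront / monthly_savings


def cumulative_costs(
    months: int, api_monthly: float, upfront: float, selfhost_monthly: float
) -> Tuple[float, float]:
    """Total spend over the horizon: (stay on the API, self-host)."""
    return api_monthly * months, upfront + selfhost_monthly * months


if __name__ == "__main__":
    # Hypothetical inputs: $100k/month API bill, $1.2M in GPUs, $40k/month to operate them.
    print(break_even_months(100_000, 1_200_000, 40_000))  # 20.0
    api_total, hosted_total = cumulative_costs(36, 100_000, 1_200_000, 40_000)
    print(f"3-year API: ${api_total:,.0f} vs self-hosted: ${hosted_total:,.0f}")
```

With these placeholder numbers the hardware pays for itself in 20 months. Drop the API bill to $50,000 per month and the payback period stretches to 120 months, which is why the size of your current spend decides whether self-hosting makes sense.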

✅ Pros

- Predictable infrastructure costs instead of variable API fees

- Data stays on-premise for regulated industries

- No vendor lock-in or sudden pricing changes

- Full control over model deployment and scaling

❌ Cons

- Requires GPU infrastructure investment and expertise

- No vendor support for troubleshooting

- Falls behind on reasoning and vision tasks

- Licensing requires attribution for large deployments

There's a catch in the licensing. If you deploy K2.6 in commercial products with more than 100 million monthly active users or over $20 million in monthly revenue, you need to visibly credit 'Kimi K2.6' in your interface. For most enterprises, this won't matter. For consumer-facing tech companies at scale, it's a consideration.
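The attribution rule applies if either threshold is crossed, not only when both are. A small helper makes that logic explicit. The thresholds below come from this article's summary of the license, and the function name is hypothetical, so check the actual license text before relying on it.

```python
# Thresholds as summarized in this article; confirm against the actual license text.
MAU_THRESHOLD = 100_000_000
MONTHLY_REVENUE_THRESHOLD_USD = 20_000_000


def attribution_required(monthly_active_users: int, monthly_revenue_usd: float) -> bool:
    """True if a commercial product crosses EITHER threshold ("more than" / "over")."""
    return (
        monthly_active_users > MAU_THRESHOLD
        or monthly_revenue_usd > MONTHLY_REVENUE_THRESHOLD_USD
    )


if __name__ == "__main__":
    print(attribution_required(2_000_000, 5_000_000))    # False: typical enterprise tool
    print(attribution_required(150_000_000, 1_000_000))  # True: user threshold crossed
    print(attribution_required(50_000, 25_000_000))      # True: revenue threshold crossed
```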

Is Open-Source AI Catching Up to Closed Models?

K2.6 represents a significant milestone in the open vs. closed AI debate. Six months ago, the performance gap between open models and frontier closed models was substantial. That gap is narrowing rapidly for specific use cases.

The pattern emerging is specialization. Open models are achieving parity on well-defined tasks like coding, tool use, and structured automation. Closed models maintain advantages in general reasoning, vision processing, and tasks requiring broad world knowledge.

“The numbers include 54.0 on HLE with Tools, 58.6 on SWE-Bench Pro, and 83.2 on BrowseComp. The model can chain together more than 4,000 tool calls and run continuously for over twelve hours.”

— Moonshot AI benchmarks via The Decoder

For business leaders, this means hybrid strategies are becoming viable. Use open models for high-volume, well-defined tasks where you can control costs. Reserve closed model API calls for complex reasoning tasks where the performance premium justifies the expense.
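In practice, a hybrid setup comes down to a routing rule. The sketch below sends the task types where K2.6 benchmarks well to a self-hosted open model and everything else, including anything that needs vision, to a closed API. The category names, the `Backend` enum, and the `route` function are hypothetical; unknown categories default to the closed API as the conservative choice.

```python
from enum import Enum


class Backend(Enum):
    SELF_HOSTED_OPEN = "self-hosted open-weight model"
    CLOSED_API = "closed frontier API"


# Hypothetical categories where the article reports K2.6 as competitive.
OPEN_MODEL_TASKS = {"coding", "tool_use", "web_research", "structured_automation"}


def route(task_category: str, needs_vision: bool = False) -> Backend:
    """Send well-defined, high-volume work to the open model; everything else,
    including any vision work and unknown categories, goes to the closed API."""
    if needs_vision or task_category not in OPEN_MODEL_TASKS:
        return Backend.CLOSED_API
    return Backend.SELF_HOSTED_OPEN


if __name__ == "__main__":
    for category, vision in [("coding", False), ("strategic_analysis", False), ("tool_use", True)]:
        print(category, vision, "->", route(category, vision).value)
```

Keeping the rule in one function makes it easy to move a category from one backend to the other as pilot results come in.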

How Can Businesses Access Kimi K2.6?

Moonshot AI has made K2.6 available through multiple channels, giving businesses flexibility in how they deploy.

- Kimi.com: Chat and agent mode for evaluation and light usage

- Kimi Code: Dedicated coding tool integration for development teams

- API access: For integration into existing workflows and applications

- Hugging Face download: Full model weights for self-hosted deployment

The smart approach for most enterprises is staged evaluation. Start with the API to validate performance on your specific use cases. If the results justify it, move to self-hosted deployment for cost optimization. The Hugging Face availability means you're not locked into any particular deployment path.

What Should Your AI Strategy Be After K2.6?

K2.6's release isn't just another model announcement. It's a signal that the economics of enterprise AI are shifting. Here's what smart technology leaders should be doing right now.

Strategic Recommendations

1. Audit your current AI spending and identify high-volume, well-defined tasks that could migrate to open models.

2. Run pilot comparisons on your actual use cases, not benchmarks.

3. Calculate total cost of ownership for self-hosting vs. continued API usage.

4. Build internal expertise on open model deployment now, before you need it at scale.

The companies that will benefit most from the open model wave are those that start building infrastructure and expertise now. Waiting until K2.7 or K3.0 achieves full parity with closed models means scrambling to catch up when the economics become undeniable.

Frequently Asked Questions About Kimi K2.6

How much does Kimi K2.6 cost compared to GPT-5.4?

K2.6 is available as an open-weight download, so self-hosting costs depend on your GPU infrastructure rather than on per-token pricing. For high-volume enterprise use, this can mean 60-80% lower costs than per-token API pricing from OpenAI or Anthropic, though the real figure depends on how fully you utilize the hardware and on infrastructure management overhead.

Can Kimi K2.6 replace our current AI vendor?

For coding, tool automation, and agent-based workflows, K2.6 performs comparably to frontier closed models. However, it falls behind on pure reasoning and vision tasks. Most enterprises should evaluate hybrid approaches rather than full replacement.

What infrastructure do we need to run K2.6 in-house?

Running K2.6 at scale requires significant GPU resources. Expect to need A100 or H100 clusters for production workloads. Many enterprises start with cloud GPU instances for flexibility before investing in dedicated hardware.

Is Kimi K2.6 safe for regulated industries?

The open-weight model can run entirely on-premise, keeping data within your network. This addresses many compliance concerns around data sovereignty. However, you take on responsibility for security and access controls that cloud providers typically handle.

How long does it take to implement K2.6 in production?

Teams with existing ML infrastructure can typically deploy K2.6 for evaluation within 1-2 weeks. Production deployment with proper monitoring, scaling, and integration typically takes 2-3 months depending on use case complexity.

Logicity's Take

We've been building AI agent systems with Claude and n8n for clients across India and the Middle East, so K2.6's Agent Swarm capabilities caught our attention. The 300-agent parallelization is impressive on paper, but the real question is orchestration reliability. In our production deployments, we've learned that agent failures cascade quickly without robust error handling. The 'claw groups' feature that coordinates humans and agents together addresses a genuine pain point we've seen, where pure automation breaks down and someone needs to step in. For Indian startups and mid-size tech companies, the cost implications are significant. We've seen clients spending ₹10-15 lakhs monthly on OpenAI APIs for automation workflows. Self-hosting an open-weight model like K2.6 could reduce that by 60-70% after infrastructure investment. The catch is expertise. Most Indian dev teams aren't set up to manage GPU clusters and model deployment. Our recommendation: start with K2.6's API for validation, build internal ML ops capability gradually, then migrate high-volume workflows to self-hosted deployment when the math works. Don't rush the infrastructure leap just because the model is free.

Need Help Implementing This?

Logicity specializes in AI agent development, workflow automation with n8n, and enterprise AI integration. We help businesses evaluate open-source AI options, build custom agent systems, and optimize AI infrastructure costs. If you're considering K2.6 or similar open models for your automation needs, let's talk about what makes sense for your specific use case.

Source: The Decoder / Matthias Bastian

Huma Shazia

Senior AI & Tech Writer
