Docker Swarm Pending States: How to Fix Container Scheduling Failures in 2026

Key Takeaways

- Pending states occur when Swarm can't find a suitable node for your container

- Resource reservations that exceed available hardware are the most common cause

- Placement constraints without matching node labels create impossible scheduling puzzles

- Volume and network mismatches in multi-arch clusters can silently block deployments

- Use docker inspect on task IDs to find the real error message hidden in Status fields

Read in Short

Your Docker Swarm service stuck in 'Pending'? It's usually one of three things: you've requested more resources than your nodes have available, your placement constraints don't match any node labels, or there's a volume/network that doesn't exist where Swarm wants to run your container. The fix starts with docker service ps --no-trunc to see the actual error message.

It's 3 AM. Your phone buzzes with a monitoring alert. You groggily check your cluster dashboard expecting the usual green 'Running' status, but instead you're staring at that silent, frustrating word: Pending.

Here's the thing about Pending in Docker Swarm. It's not an error in the traditional sense. It's the scheduler politely telling you, 'I really want to run this container, but I literally cannot find anywhere to put it.' And unlike a crash or a hard failure, Pending states don't always show up in your standard logs. They're scheduling ghosts.

In 2026, this problem has gotten worse. We're running resource-hungry AI sidecars everywhere. Edge clusters are denser than ever. Multi-architecture deployments mixing x86 and ARM are basically standard now. All of this complexity means more ways for the scheduler to get stuck.

Let's break down the three main causes and how to actually fix them.

1. Resource Exhaustion: The Classic Trap

This is the most common reason your service is stuck in Pending. You've basically promised more than your hardware can deliver.

Say you've defined a service like this:

    deploy:
      resources:
        reservations:
          cpus: '2'
          memory: 4G

Looks reasonable, right? But if your nodes only have 1GB of RAM free, Swarm will sit there forever waiting for resources to clear up. It won't fail. It won't complain loudly. It just waits.
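To make the arithmetic concrete, here is a minimal Python sketch of the kind of fit check described above. It is not Swarm's actual scheduler code: the node names and free-capacity figures are invented, and `parse_memory` is a hypothetical helper that only understands simple suffixes such as `4G` or `512M`.

```python
# Hypothetical sketch: does a reservation fit on any node?
# Node names and capacity numbers below are made up for illustration.
UNITS = {"K": 1024, "M": 1024**2, "G": 1024**3}

def parse_memory(value: str) -> int:
    """Convert strings like '4G' or '512M' to bytes (simple suffixes only)."""
    value = value.strip().upper().rstrip("B")
    if value and value[-1] in UNITS:
        return int(float(value[:-1]) * UNITS[value[-1]])
    return int(value)

def nodes_that_fit(reservation: dict, free_by_node: dict) -> list:
    """Return the nodes whose free CPU and memory both cover the reservation."""
    need_cpus = float(reservation["cpus"])
    need_mem = parse_memory(reservation["memory"])
    return [
        name for name, free in free_by_node.items()
        if free["cpus"] >= need_cpus and parse_memory(free["memory"]) >= need_mem
    ]

reservation = {"cpus": "2", "memory": "4G"}
free_by_node = {
    "node-01": {"cpus": 4.0, "memory": "1G"},  # enough CPU, too little RAM
    "node-02": {"cpus": 1.0, "memory": "8G"},  # enough RAM, too little CPU
}
print(nodes_that_fit(reservation, free_by_node))  # [] -> nothing fits, task stays Pending
```

Note that a node has to satisfy both the CPU and the memory reservation at once. Plenty of total RAM spread across the cluster does not help if no single node can cover the request.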

How to Diagnose This

The native way is pretty straightforward:

    docker service ps --no-trunc <service_name>

Look at the Error column in the output. If it says something like 'insufficient resources on X nodes', you've found your culprit.
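If you check this often, a small script can filter the output for you. The sketch below assumes the Docker CLI's `--format '{{json .}}'` option emits one JSON object per task with `Name`, `CurrentState`, and `Error` keys, which matches recent CLI versions as far as I know; verify against your own output. The sample data is invented, and `fetch_service_ps` only works on a machine with access to a Swarm manager.

```python
import json
import subprocess

def pending_tasks(ps_json_lines: str) -> list:
    """Return (name, error) pairs for tasks whose current state starts with 'Pending'."""
    results = []
    for line in ps_json_lines.splitlines():
        if not line.strip():
            continue
        task = json.loads(line)
        if task.get("CurrentState", "").startswith("Pending"):
            results.append((task.get("Name", ""), task.get("Error", "")))
    return results

def fetch_service_ps(service_name: str) -> str:
    """Shell out to the Docker CLI; needs access to a Swarm manager."""
    return subprocess.run(
        ["docker", "service", "ps", "--no-trunc", "--format", "{{json .}}", service_name],
        check=True, capture_output=True, text=True,
    ).stdout

# Invented sample output, one JSON object per line.
SAMPLE = "\n".join([
    json.dumps({"Name": "web.1", "CurrentState": "Running 2 hours ago", "Error": ""}),
    json.dumps({"Name": "web.2", "CurrentState": "Pending 5 minutes ago",
                "Error": "no suitable node (insufficient resources on 3 nodes)"}),
])
print(pending_tasks(SAMPLE))
```

On a real cluster you would call `pending_tasks(fetch_service_ps("<service_name>"))` instead of using the sample.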

Quick Fix Options

Either reduce your resource reservations in the compose file, add more nodes to your cluster, or free up resources by scaling down other services. Don't just remove reservations entirely though. They exist for good reasons.


2. Placement Constraints Gone Wrong

We use placement constraints for good reasons. You want your database running only on nodes with SSDs. Your ML models need nodes with GPUs. Makes total sense.

But here's where people mess up. They get too specific with constraints, then forget to actually label their nodes.

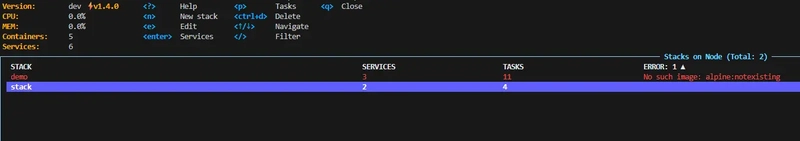

Picture this scenario: you've set a constraint like node.labels.storage == ssd in your compose file. You just added three shiny new Raspberry Pi 5 nodes to your cluster. You deploy. Everything goes Pending.

Why? Because you never labeled those new nodes. Swarm looks at your constraint, checks every node, finds zero matches, and throws up its hands.

The Quick Check

    docker node inspect <node-id>

Run this and compare the labels section against what your docker-compose.yml actually requires. Nine times out of ten, there's a mismatch.
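The comparison itself is easy to automate. The following Python sketch evaluates `node.labels.*` constraints against a label inventory. It is a simplification under stated assumptions: it handles only `==` and `!=` on node labels, compares strings exactly, and uses invented node names. `matches` and `eligible_nodes` are hypothetical helpers, not part of any Docker library.

```python
import re

# Matches constraints of the form: node.labels.<key> == <value>  (or !=)
CONSTRAINT_RE = re.compile(r"^\s*node\.labels\.([\w.\-]+)\s*(==|!=)\s*(\S+)\s*$")

def matches(constraint: str, labels: dict) -> bool:
    """Evaluate one node.labels constraint against a node's label dict."""
    m = CONSTRAINT_RE.match(constraint)
    if m is None:
        raise ValueError(f"unsupported constraint: {constraint!r}")
    key, op, expected = m.groups()
    actual = labels.get(key)  # None when the node was never labeled
    return (actual == expected) if op == "==" else (actual != expected)

def eligible_nodes(constraints: list, labels_by_node: dict) -> list:
    """Return nodes that satisfy every constraint."""
    return [
        node for node, labels in labels_by_node.items()
        if all(matches(c, labels) for c in constraints)
    ]

constraints = ["node.labels.storage == ssd"]

# Three freshly added nodes with no labels: nothing is eligible.
unlabeled = {"pi-01": {}, "pi-02": {}, "pi-03": {}}
print(eligible_nodes(constraints, unlabeled))  # []

# After labeling one node, it becomes a candidate.
labeled = {"pi-01": {"storage": "ssd"}, "pi-02": {}, "pi-03": {}}
print(eligible_nodes(constraints, labeled))  # ['pi-01']
```

On the cluster itself, the missing label can be added with `docker node update --label-add storage=ssd <node-id>`, after which Swarm can place the task.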

3. Volume and Network Mismatches

This one's gotten way more common in 2026 because of multi-arch clusters. We're mixing x86 servers with ARM devices like it's nothing. That flexibility is great until it isn't.

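Here's a typical failure mode: your service depends on a named volume whose data lives only on node-01, but you've told Swarm it can run anywhere, so Swarm tries to schedule it on node-03. What happens next depends on the volume driver. With the default local driver, Docker typically creates a fresh, empty volume on node-03, so the task starts but without its data. If the volume relies on a driver plugin that isn't installed on the candidate nodes, the scheduler finds no suitable node and the task just sits in Pending.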

Network Sync Issues

Overlay networks that haven't synchronized across your manager nodes can cause the same problem. The task stays Pending while waiting for a network ID that doesn't exist on the target worker. This is especially common after adding new nodes or recovering from a manager failure.

The fix here usually involves either making your volumes available across nodes using something like NFS or GlusterFS, or adding placement constraints to ensure the service only runs where the required volumes exist.
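Before adding a constraint, it helps to know which nodes actually hold the volume. Here is a minimal sketch, assuming you have already collected a per-node volume inventory (for example by running `docker volume ls` on each node, since that command reports only the volumes known to that node's daemon). The inventory and volume names are invented, and `nodes_with_all_volumes` is a hypothetical helper.

```python
def nodes_with_all_volumes(required_volumes: list, volumes_by_node: dict) -> list:
    """Return the sorted names of nodes that already hold every required volume."""
    needed = set(required_volumes)
    return sorted(
        node for node, present in volumes_by_node.items()
        if needed <= set(present)
    )

# Invented inventory for illustration.
volumes_by_node = {
    "node-01": ["pgdata", "uploads"],
    "node-02": ["uploads"],
    "node-03": [],
}
print(nodes_with_all_volumes(["pgdata"], volumes_by_node))   # ['node-01']
print(nodes_with_all_volumes(["uploads"], volumes_by_node))  # ['node-01', 'node-02']
```

If exactly one node qualifies, a constraint such as `node.hostname == node-01` pins the service there. If several qualify, labeling those nodes and constraining on the label is usually easier to maintain.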

The 2026 Debugging Checklist

When a service stays Pending for more than 30 seconds, run through this:

- Check the desired state. Is Swarm actually trying to scale up, or is this expected behavior?

- Inspect the task directly with docker inspect <task_id>. Look at the Status object, especially its Message and Err fields. This is where the real reason hides.

- Check node health. Is the target node marked as Ready and Active? A node in Drain mode will never accept new tasks.

- Verify resource availability across your cluster. Just because you have 10 nodes doesn't mean any of them have room.

- Double-check your placement constraints against actual node labels.

- For volume issues, confirm the required volumes exist on nodes that could potentially run the service.
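The node-health item in the checklist above can be sketched as a small filter over `docker node ls --format '{{json .}}'` output. This assumes each line is a JSON object with `Hostname`, `Status`, and `Availability` keys, which is what recent Docker CLI versions emit as far as I know. The sample data is invented.

```python
import json

def split_by_schedulability(node_ls_json_lines: str):
    """Split nodes into those that can accept tasks and those that cannot."""
    schedulable, blocked = [], []
    for line in node_ls_json_lines.splitlines():
        if not line.strip():
            continue
        node = json.loads(line)
        status = node.get("Status", "")
        availability = node.get("Availability", "")
        if status == "Ready" and availability == "Active":
            schedulable.append(node["Hostname"])
        else:
            blocked.append((node["Hostname"], status, availability))
    return schedulable, blocked

# Invented sample output, one JSON object per line.
SAMPLE_NODES = "\n".join([
    json.dumps({"Hostname": "node-01", "Status": "Ready", "Availability": "Active"}),
    json.dumps({"Hostname": "node-02", "Status": "Ready", "Availability": "Drain"}),
    json.dumps({"Hostname": "node-03", "Status": "Down", "Availability": "Active"}),
])
ok, blocked = split_by_schedulability(SAMPLE_NODES)
print("can accept tasks:", ok)
print("cannot accept tasks:", blocked)
```

A ten-node cluster where most nodes land in the second list explains a lot of Pending tasks before you ever look at resources or labels.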

Digging Deeper with Task Inspection

The docker service ps command gives you a quick overview, but the real gold is in task inspection. When you run:

    docker inspect <task_id>

You'll get a JSON blob with way more detail than the summary view. Look specifically at the Status object, in particular its Message and Err fields. Swarm stores the scheduling failure reason there in full, whereas the default ps output truncates it unless you pass --no-trunc.
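Pulling those fields out of the JSON is a one-function job. The sketch below assumes `docker inspect` prints a JSON array with one object per inspected item and that a task's `Status` object carries `State`, `Message`, and `Err` keys. The sample document is invented and heavily trimmed, and `task_status` is a hypothetical helper.

```python
import json

def task_status(inspect_json: str) -> dict:
    """Extract State, Message, and Err from the first task in `docker inspect` output."""
    tasks = json.loads(inspect_json)  # docker inspect prints a JSON array
    status = tasks[0].get("Status", {})
    return {key: status.get(key, "") for key in ("State", "Message", "Err")}

# Invented, trimmed sample of what a pending task might look like.
SAMPLE_INSPECT = json.dumps([{
    "ID": "abc123",
    "Status": {
        "State": "pending",
        "Message": "pending task scheduling",
        "Err": "no suitable node (insufficient resources on 3 nodes)",
    },
}])
for field, value in task_status(SAMPLE_INSPECT).items():
    print(f"{field}: {value}")
```

In practice you would feed it the output of `docker inspect <task_id>`, for example by piping the command into a script that reads standard input.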

This is honestly the most underutilized debugging technique. People spend hours checking logs when the answer is sitting right there in the task metadata.

Prevention Is Better Than 3 AM Debugging

Look, fixing Pending states is fine, but wouldn't it be better to not have them in the first place?

- Set up resource monitoring that alerts before you hit capacity, not after

- Create a checklist for adding new nodes that includes labeling them properly

- Use realistic resource reservations based on actual usage, not theoretical maximums

- Test constraint configurations in staging before production deployments

- Document which volumes are node-specific versus cluster-wide
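As a rough illustration of the first point, alerting before you hit capacity, here is a sketch that flags nodes whose reserved share of CPU or memory crosses a threshold. The 80% threshold, the node names, and the figures are all assumptions chosen for the example. How you gather reserved and total capacity is up to your monitoring stack.

```python
def capacity_alerts(nodes: dict, threshold: float = 0.8) -> list:
    """Return (node, resource, ratio) for every resource at or above the threshold."""
    alerts = []
    for name, node in nodes.items():
        for resource in ("cpus", "memory_bytes"):
            ratio = node["reserved"][resource] / node["total"][resource]
            if ratio >= threshold:
                alerts.append((name, resource, round(ratio, 2)))
    return alerts

GIB = 1024**3
# Invented capacity figures for illustration.
cluster = {
    "node-01": {
        "total": {"cpus": 4.0, "memory_bytes": 8 * GIB},
        "reserved": {"cpus": 3.6, "memory_bytes": 2 * GIB},
    },
    "node-02": {
        "total": {"cpus": 4.0, "memory_bytes": 8 * GIB},
        "reserved": {"cpus": 1.0, "memory_bytes": 4 * GIB},
    },
}
print(capacity_alerts(cluster))  # [('node-01', 'cpus', 0.9)]
```

The useful signal is reserved capacity rather than live usage, because reservations are what the scheduler checks when it decides whether a task fits.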

Understanding your infrastructure still requires hands-on debugging skills that no tool can fully automate.

The Bottom Line

Pending states in Docker Swarm aren't mysterious once you know where to look. The scheduler is actually pretty transparent about why it can't place your container. You just need to ask it the right way.

Start with docker service ps --no-trunc to get the quick answer. If that's not enough, dive into docker inspect on the specific task ID. Check your node labels, verify your resources, and make sure your volumes and networks actually exist where you think they do.

And maybe set up better monitoring so you're not figuring this out at 3 AM. Your future self will thank you.

Frequently Asked Questions

Why doesn't Docker Swarm show Pending errors in the logs?

Pending isn't technically an error state. It's a scheduling state. The container hasn't started yet, so there are no container logs to show. The information lives in the task metadata instead.

How long should I wait before investigating a Pending state?

Anything over 30 seconds warrants investigation. Brief Pending states during normal scheduling are fine, but if it persists, something is blocking deployment.

Can I force Swarm to deploy despite resource constraints?

You can remove resource reservations, but that's risky. Better to either add capacity or reduce reservations to realistic levels. Forcing deployment without adequate resources often leads to worse problems.

Do Pending states resolve themselves?

Sometimes. If another service scales down and frees resources, or if a node comes back online, Swarm will automatically schedule the Pending task. But don't count on it happening quickly.

Source: DEV Community

Huma Shazia

Senior AI & Tech Writer
