Claude Chat Fails Its Own Product Quiz

Key Takeaways

- Claude Chat running on Sonnet 4.6 confidently gives incorrect information about Anthropic's own products

- Claude Code remains highly capable for development tasks despite Chat's knowledge gaps

- Users should verify Claude Chat's answers about product features, pricing, and model availability

Claude Code has earned a reputation as one of the best AI coding tools available. It builds projects, catches its own bugs, and works through messy API documentation with impressive reasoning. But Claude Chat, the conversational side of Anthropic's AI, tells a different story.

Tech journalist Yadullah Abidi at MakeUseOf ran into a frustrating pattern: Claude Chat consistently gets basic facts wrong about Anthropic's own products. Not edge cases. Not obscure technical details. Basic product information.

Claude doesn't know Claude

You'd expect a company's AI to know its own product lineup. Ask Google's assistant about Google Workspace, and you get accurate answers. Ask Siri about Apple services, and it pulls from current data. Ask Claude Chat about Anthropic's offerings? Prepare for confident nonsense.

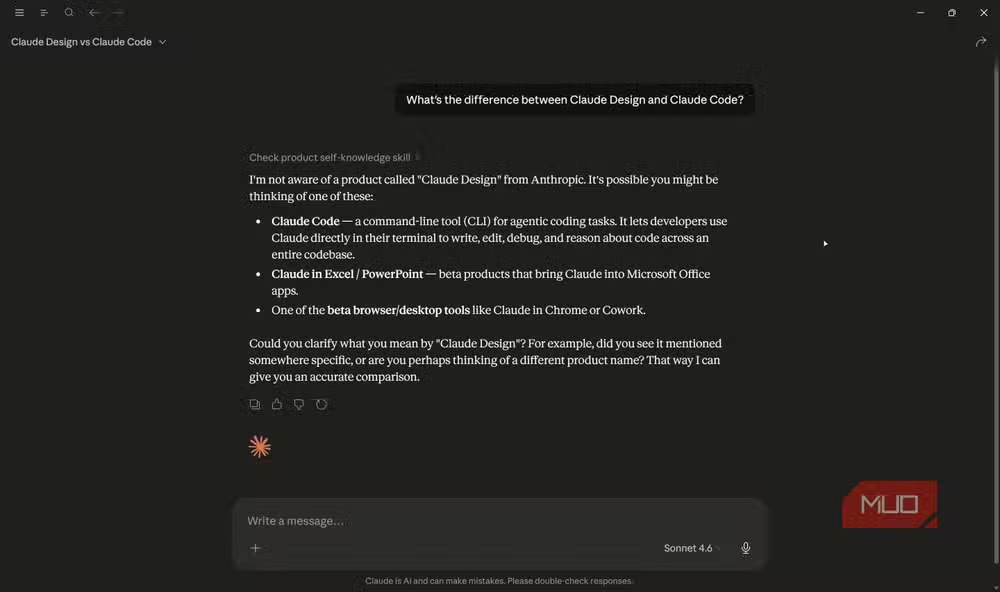

Abidi found that Claude Chat running on Sonnet 4.6 fails at straightforward questions about its sibling products. When asked about Claude Design, the chatbot claimed it wasn't aware of any such product from Anthropic. Claude Design exists. It can build presentation slides. But Claude Chat won't acknowledge it.

The problems extend beyond product recognition. Abidi reports that Claude Chat will suggest nonexistent models, fail to identify features in the desktop app, and give outdated information with complete confidence. Questions about available models, context window sizes on specific tiers, and free plan features all produce wrong answers.

The confidence problem

Wrong answers from AI chatbots aren't new. What makes Claude Chat's mistakes notable is the delivery. The bot doesn't hedge. It doesn't say "I'm not sure" or "my information might be outdated." It states incorrect facts with the same tone it uses for correct ones.

This creates a verification burden for users. When Claude Code writes code, you can run it. Either it works or it doesn't. When Claude Chat answers a product question, you need to check external sources to confirm accuracy. That defeats much of the convenience.

Why the gap between Code and Chat?

Claude Code and Claude Chat serve different purposes, but they run on the same underlying models. The difference in reliability likely comes down to training data and retrieval systems.

Code has clear success criteria. Syntax errors stop a program from running. Logic bugs produce wrong outputs. That gives the model constant feedback about what works. Conversational knowledge about products has no comparable feedback loop: a wrong answer about Claude Design doesn't crash anything.

Many enterprise AI systems address this with retrieval-augmented generation (RAG), which pulls current records from a database or document store and hands them to the model when it answers product questions. Judging by these errors, Anthropic apparently hasn't implemented robust RAG for its own product knowledge in Claude Chat.
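To make the retrieval idea concrete, here is a minimal sketch of the pattern in Python. It is an illustration under stated assumptions, not a description of how Anthropic or any vendor builds its systems: the `PRODUCT_FACTS` list is a placeholder knowledge base paraphrased from this article, the function names are hypothetical, and simple word overlap stands in for the embedding search a real system would likely use.

```python
import re

# Placeholder knowledge base. These snippets paraphrase claims made in this
# article; a real system would load current, official product documentation.
PRODUCT_FACTS = [
    "Claude Design is an Anthropic product that can build presentation slides.",
    "Claude Code is Anthropic's tool for development tasks.",
    "Claude Chat is the conversational side of Anthropic's AI.",
]


def tokenize(text):
    """Lowercase the text and return its set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question, facts, top_k=2):
    """Return up to top_k facts that share the most words with the question."""
    question_words = tokenize(question)
    scored = [(len(question_words & tokenize(fact)), fact) for fact in facts]
    # Drop facts with no overlap so unrelated questions retrieve nothing.
    scored = [pair for pair in scored if pair[0] > 0]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [fact for _, fact in scored[:top_k]]


def build_prompt(question, facts):
    """Assemble a prompt that grounds the model in retrieved facts."""
    context = retrieve(question, facts)
    if not context:
        # With nothing retrieved, tell the model to admit uncertainty.
        return (
            "No reference material was found. Say you are unsure.\n\n"
            f"Question: {question}"
        )
    bullet_list = "\n".join(f"- {fact}" for fact in context)
    return (
        "Answer using only the reference material below.\n\n"
        f"Reference material:\n{bullet_list}\n\n"
        f"Question: {question}"
    )


if __name__ == "__main__":
    print(build_prompt("What is Claude Design?", PRODUCT_FACTS))
```

The design point is the fallback branch: when retrieval comes back empty, the prompt asks for an admission of uncertainty rather than a guess, which targets the confidence problem described earlier.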

Logicity's Take

What this means for users

If you use Claude for coding, nothing changes. Claude Code remains one of the best options for development work. The tool catches bugs, reasons through documentation, and handles complex integrations well.

If you use Claude Chat for research or product questions, treat it like an unreliable narrator. Verify any facts it presents, especially about Anthropic's own ecosystem. The chatbot's confidence level tells you nothing about accuracy.

- Double-check any Claude Chat answers about model versions or feature availability

- Use official Anthropic documentation for pricing and tier comparisons

- Don't assume the chatbot's knowledge is current, even for Anthropic products

Frequently Asked Questions

Why does Claude Chat give wrong answers about Anthropic products?

Claude Chat likely lacks updated retrieval systems for Anthropic's own product information. The model's training data may be outdated, and without real-time database access, it confidently repeats incorrect details.

Is Claude Code affected by these accuracy issues?

No. Claude Code remains highly capable for development tasks. Code has clear success criteria through execution, while conversational answers about products don't have the same feedback mechanisms.

How can I verify Claude Chat's answers?

Check Anthropic's official documentation, pricing pages, and product announcements. Don't rely solely on Claude Chat for factual claims about features, models, or availability.

What is Claude Design?

Claude Design is an Anthropic product for creating presentation slides. Despite Claude Chat's claims otherwise, it exists and is part of Anthropic's product lineup.

Source: MakeUseOf

Huma Shazia

Senior AI & Tech Writer