Anthropic's AI Code Just Leaked — And It's Spilling Secrets

Anthropic accidentally exposed the closed-source code of its AI coding assistant, Claude Code, in a public NPM package. While no customer data was compromised, the leak revealed roughly 500,000 lines of code and hidden features like 'Proactive' and 'Dream' modes. The company is now working to contain the fallout and to fix a separate bug causing users to hit usage limits faster than expected.

Key Takeaways

- Anthropic accidentally published about 500,000 lines of Claude Code's source in a public NPM package

- The leak came from a source map, a debugging file that wasn't meant to ship with the package

- Hidden 'Dream' and 'Proactive' modes were discovered in the code

- No customer data was exposed, but the code spread fast

- Users are hitting usage limits due to a confirmed bug, not intentional throttling

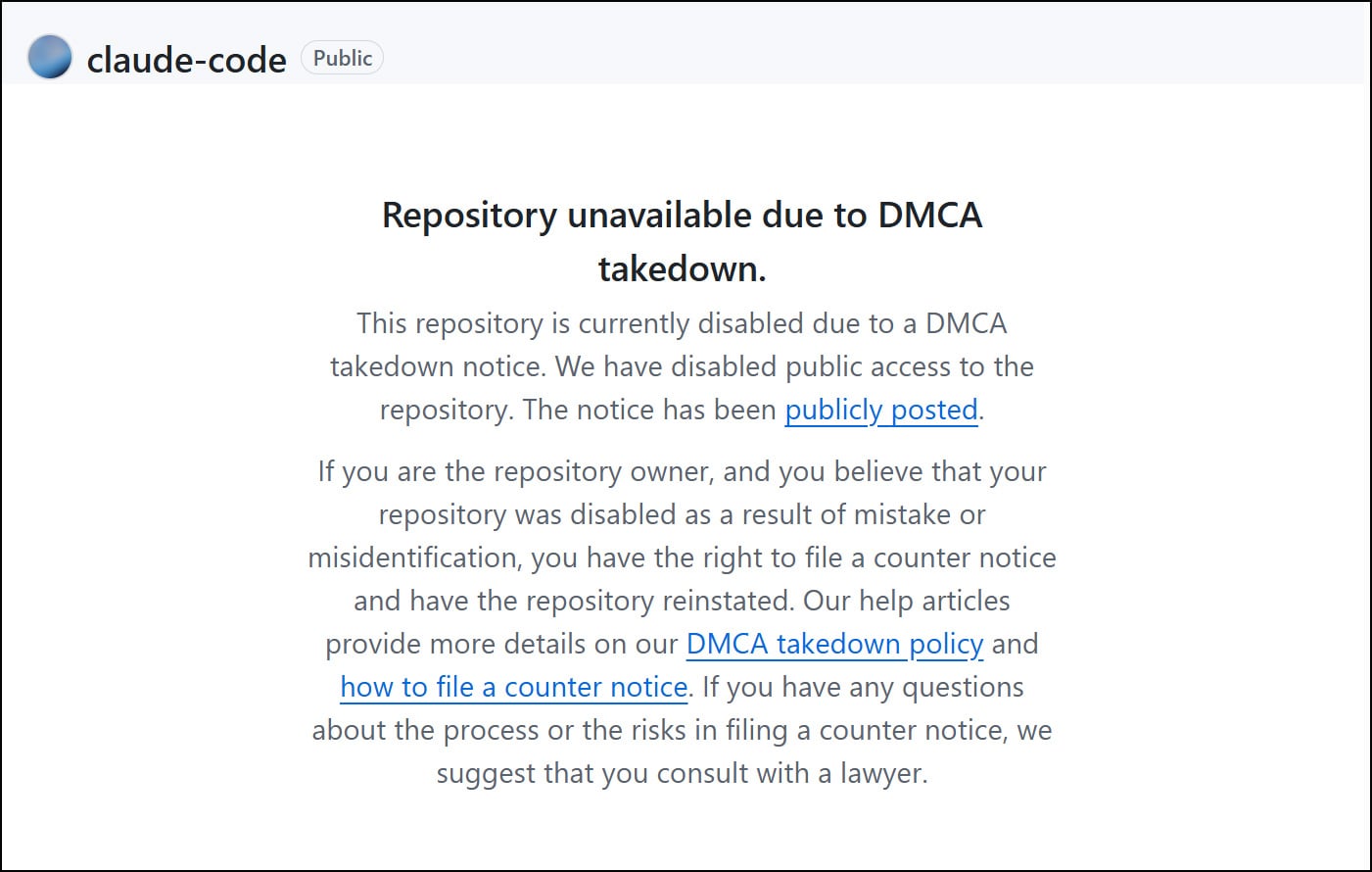

- Anthropic is pushing fixes and issuing takedown notices

In This Article

- What Went Wrong?

- How a Tiny Debug File Caused a Massive Leak

- What the Leaked Code Revealed

- Another Problem: Users Hitting Limits Too Fast

What Went Wrong?

In a rare misstep for a major AI company, Anthropic quietly pushed out a software update that carried something it definitely shouldn't have: the full internal source code of its proprietary coding tool, Claude Code.

- A routine update to version 2.1.88 of Claude Code was pushed to NPM, a popular platform for sharing code packages

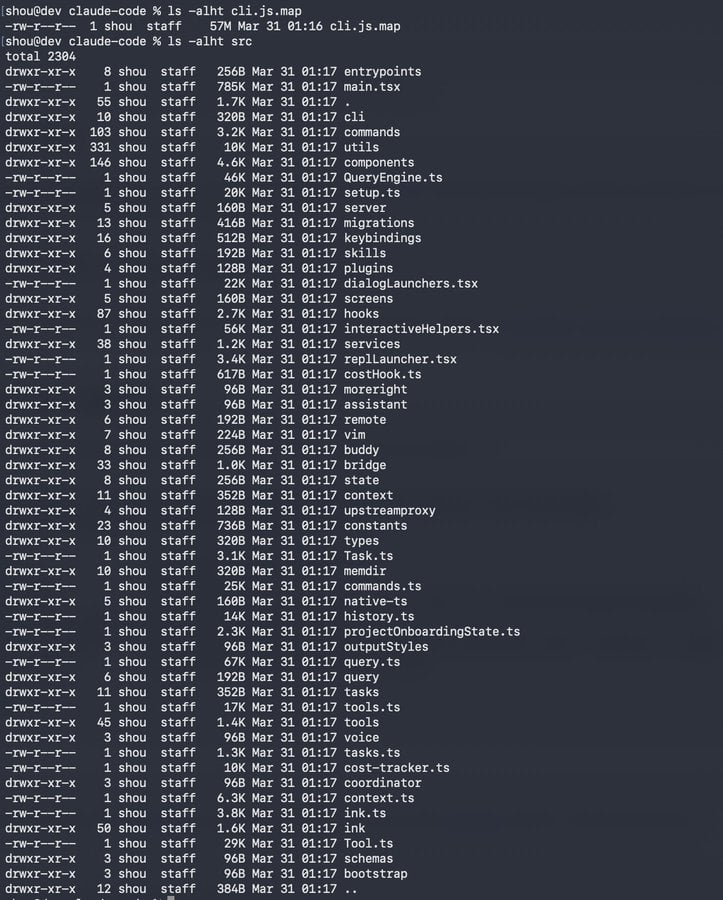

- Buried inside was a 60 MB file called cli.js.map, a source map meant for debugging that contained the complete source code

- Source map files are meant to help developers trace errors, but when packed with source content, they can expose the entire codebase

- Within hours, developers spotted the leak and began reconstructing and sharing the code online

Claude code source code has been leaked via a map file in their npm registry!

Code: https://t.co/jBiMoOzt8G pic.twitter.com/rYo5hbvEj8

— Chaofan Shou (@Fried_rice) March 31, 2026
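To make the mechanism concrete, here is a minimal sketch of what a source map with embedded sources can look like, along with a check that flags one. The map below is a toy example written for illustration and is not taken from the leaked file; the function name `embeds_source` is hypothetical. The `version`, `sources`, `sourcesContent` and `mappings` keys are standard fields of the source map v3 format.

```python
import json

# Toy source map for illustration only (not the leaked cli.js.map).
TOY_MAP = json.dumps({
    "version": 3,
    "file": "cli.js",
    "sources": ["../src/main.ts"],
    # When present, this field carries the original file text verbatim.
    "sourcesContent": ["export const answer: number = 42;\n"],
    "mappings": "AAAA",
})


def embeds_source(map_text: str) -> bool:
    """Return True if the map carries at least one non-empty embedded source."""
    data = json.loads(map_text)
    contents = data.get("sourcesContent") or []
    return any(isinstance(entry, str) and entry for entry in contents)


if __name__ == "__main__":
    print("embeds original source:", embeds_source(TOY_MAP))
```

A check along these lines could run over every `.map` file in a package before it is published; whether Anthropic's release process includes anything similar is not known from the reporting.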

How a Tiny Debug File Caused a Massive Leak

The culprit wasn't a hacker or a server breach — it was a simple packaging mistake with massive consequences. Here's how a file meant for debugging became a goldmine for AI sleuths.

- Source maps link compiled code back to the original files and can simply reference them by path, but this one included the optional 'sourcesContent' field, which embeds the original code itself

- This allowed anyone with the file to rebuild the entire structure of Claude Code — about 1,900 files and 500,000 lines

- The file was publicly accessible on NPM, meaning anyone could download it without restrictions

- Once the package was published, copies of the code spread rapidly across GitHub and code-sharing forums, making full containment nearly impossible
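The reason a single file can stand in for a whole codebase is that `sources` and `sourcesContent` are parallel arrays: the path at index i pairs with the text at index i. The sketch below shows that pairing on a small made-up map. The sample data and the helper names `recover_sources` and `summarize` are assumptions for illustration, and nothing here reflects the actual contents of the leaked package.

```python
from __future__ import annotations

import json
import posixpath

# Made-up map with two embedded files and one entry without content.
SAMPLE_MAP = json.dumps({
    "version": 3,
    "sources": [
        "../src/main.ts",
        "../src/util/log.ts",
        "../node_modules/dep/index.js",
    ],
    "sourcesContent": [
        "const a = 1;\nconst b = 2;\n",
        "export function log() {}\n",
        None,  # an entry may be null when the content is not embedded
    ],
    "mappings": "",
})


def recover_sources(map_text: str) -> dict[str, str]:
    """Pair each path in `sources` with its text in `sourcesContent`."""
    data = json.loads(map_text)
    sources = data.get("sources") or []
    contents = data.get("sourcesContent") or []
    recovered: dict[str, str] = {}
    for path, content in zip(sources, contents):
        if not isinstance(path, str) or not isinstance(content, str):
            continue  # skip entries with no usable path or no embedded text
        # Drop "..", "." and empty segments so every key stays a relative path.
        parts = [p for p in posixpath.normpath(path).split("/")
                 if p not in ("..", ".", "")]
        recovered["/".join(parts)] = content
    return recovered


def summarize(recovered: dict[str, str]) -> tuple[int, int]:
    """Return (number of files, total number of lines)."""
    total_lines = sum(len(text.splitlines()) for text in recovered.values())
    return len(recovered), total_lines


if __name__ == "__main__":
    files = recover_sources(SAMPLE_MAP)
    print(sorted(files))
    print(summarize(files))
```

The same counting step, applied to a real map, is how figures such as a file count and a line total can be derived from the map alone.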

What the Leaked Code Revealed

Curious developers didn't just download the code — they started digging. What they found were hints of futuristic AI behaviors that Anthropic hasn't announced yet.

- Buried in the code was evidence of a 'Proactive mode,' where Claude could autonomously write code without direct prompts

- Another feature, called 'Dream mode,' suggests the AI could run background thinking sessions to refine ideas and solve problems while users are away

- Internal documentation hinted at deeper integration with IDEs and real-time collaboration tools

- While not functional yet, these discoveries give a rare peek into Anthropic's long-term roadmap for AI coding assistants

Another Problem: Users Hitting Limits Too Fast

Even before the leak made headlines, users were sounding alarms about something else — their Claude usage was vanishing at an alarming rate.

- Subscribers on Pro and Max plans reported hitting 100% usage after just a few minutes in Claude Code

- One user saw their usage jump from 0% to 30% after a single message, then to full capacity shortly after

- Anthropic confirmed it's a bug, not a new policy, and is actively working on a fix

- The issue has sparked suspicion, especially as Claude's popularity surges, but the company insists it's unintentional

“Earlier today, a Claude Code release included some internal source code. No sensitive customer data or credentials were involved or exposed. This was a release packaging issue caused by human error, not a security breach. We're rolling out measures to prevent this from happening again.”

— Anthropic, Statement to BleepingComputer

“We're aware people are hitting usage limits in Claude Code way faster than expected. Actively investigating, will share more when we have an update.”

— Lydia Hallie, Anthropic, on X

Final Thoughts

While no customer data was compromised, this incident is a stark reminder that even the most advanced AI companies aren't immune to basic operational errors. The source code leak offers a rare behind-the-scenes look at where AI coding tools are headed, but it also raises questions about release discipline. As Anthropic works to contain the leak and fix the usage bug, the tech world will be watching closely to see how it handles the fallout and what comes next from its secretive labs.

Sources & Credits

Originally reported by BleepingComputer

Huma Shazia

Senior AI & Tech Writer